# ========== 导入所需库 ==========

# ========== Import required libraries ==========

import pandas as pd # 数据表格处理库

# Import the pandas library for tabular data manipulation

import numpy as np # 数值计算库

# Import the numpy library for numerical computation

from scipy.stats import binom # 二项分布统计函数

# Import the binomial distribution statistical functions from scipy

import matplotlib.pyplot as plt # 数据可视化绘图库

# Import the matplotlib plotting library for data visualization

# ========== 第1步:设置绘图环境 ==========

# ========== Step 1: Configure the plotting environment ==========

plt.rcParams['font.sans-serif'] = ['SimHei'] # 设置中文黑体字体

# Set the Chinese font to SimHei (bold)

plt.rcParams['axes.unicode_minus'] = False # 解决负号显示问题

# Fix the display issue with negative signs

# ========== 第2步:定义电商场景参数 ==========

# ========== Step 2: Define e-commerce scenario parameters ==========

number_of_visitors = 1000 # 每天网站访客数量

# Number of daily website visitors

estimated_conversion_rate = 0.03 # 行业平均转化率为3%

# Industry average conversion rate of 3%

# ========== 第3步:计算期望与标准差 ==========

# ========== Step 3: Compute the expectation and standard deviation ==========

# 二项分布期望 E(X) = n * p

# Binomial expectation E(X) = n * p

expected_orders = number_of_visitors * estimated_conversion_rate # 计算期望订单数 E(X) = 1000 × 0.03 = 30

# Compute the expected number of orders E(X) = 1000 × 0.03 = 30

# 二项分布标准差 σ = sqrt(n * p * (1 - p))

# Binomial standard deviation σ = sqrt(n * p * (1 - p))

standard_deviation_orders = np.sqrt(number_of_visitors * estimated_conversion_rate * (1 - estimated_conversion_rate)) # 计算标准差 σ = √(npq)

# Compute the standard deviation σ = √(npq)4 概率分布 (Probability Distributions)

概率分布(probability distribution)描述了随机变量取不同值的概率规律。它是连接理论概率与实际数据的桥梁,是统计推断的理论基础。本章将系统介绍常见的离散型和连续型概率分布,以及它们在商业和经济领域的应用。

A probability distribution describes the probabilistic patterns governing the different values a random variable can take. It serves as the bridge connecting theoretical probability to real-world data and constitutes the theoretical foundation of statistical inference. This chapter systematically introduces the most common discrete and continuous probability distributions, along with their applications in business and economics.

4.1 概率分布在金融建模中的典型应用 (Typical Applications of Probability Distributions in Financial Modeling)

概率分布是金融风险度量和资产定价的数学语言。以下展示各类概率分布在中国金融市场分析中的核心应用。

Probability distributions constitute the mathematical language of financial risk measurement and asset pricing. The following showcases the core applications of various probability distributions in the analysis of the Chinese financial market.

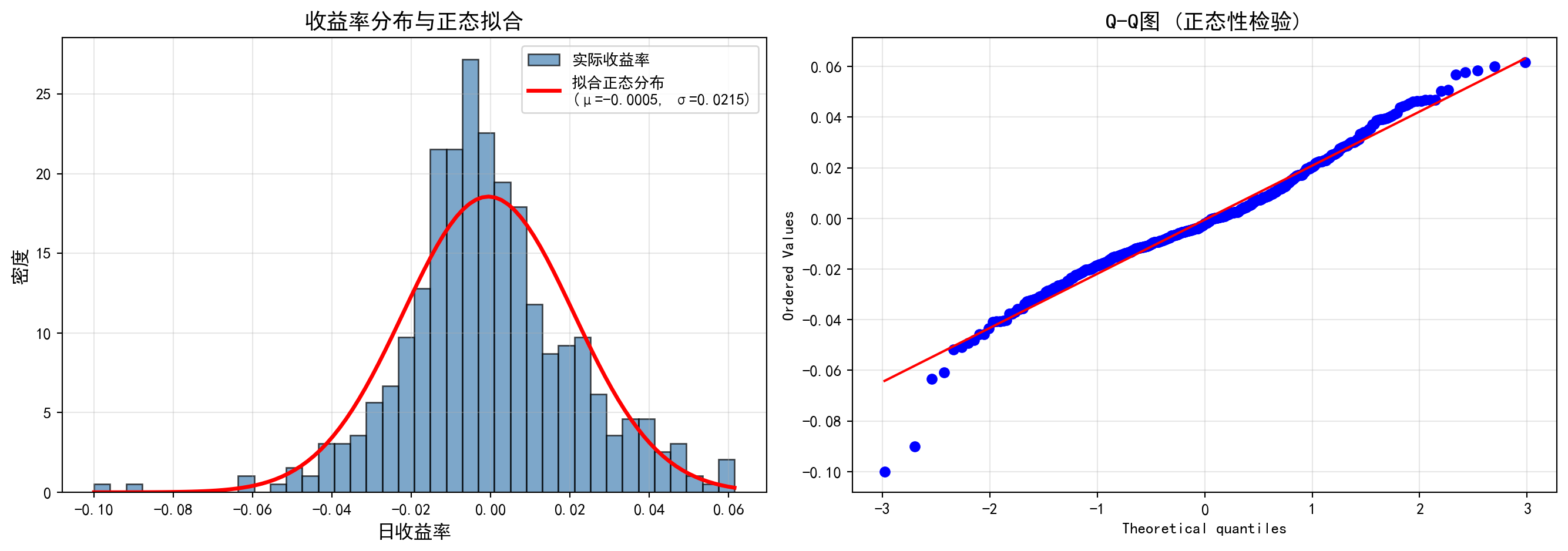

4.1.1 应用一:股票收益率的正态性检验与厚尾风险 (Application 1: Normality Tests and Fat-Tail Risk for Stock Returns)

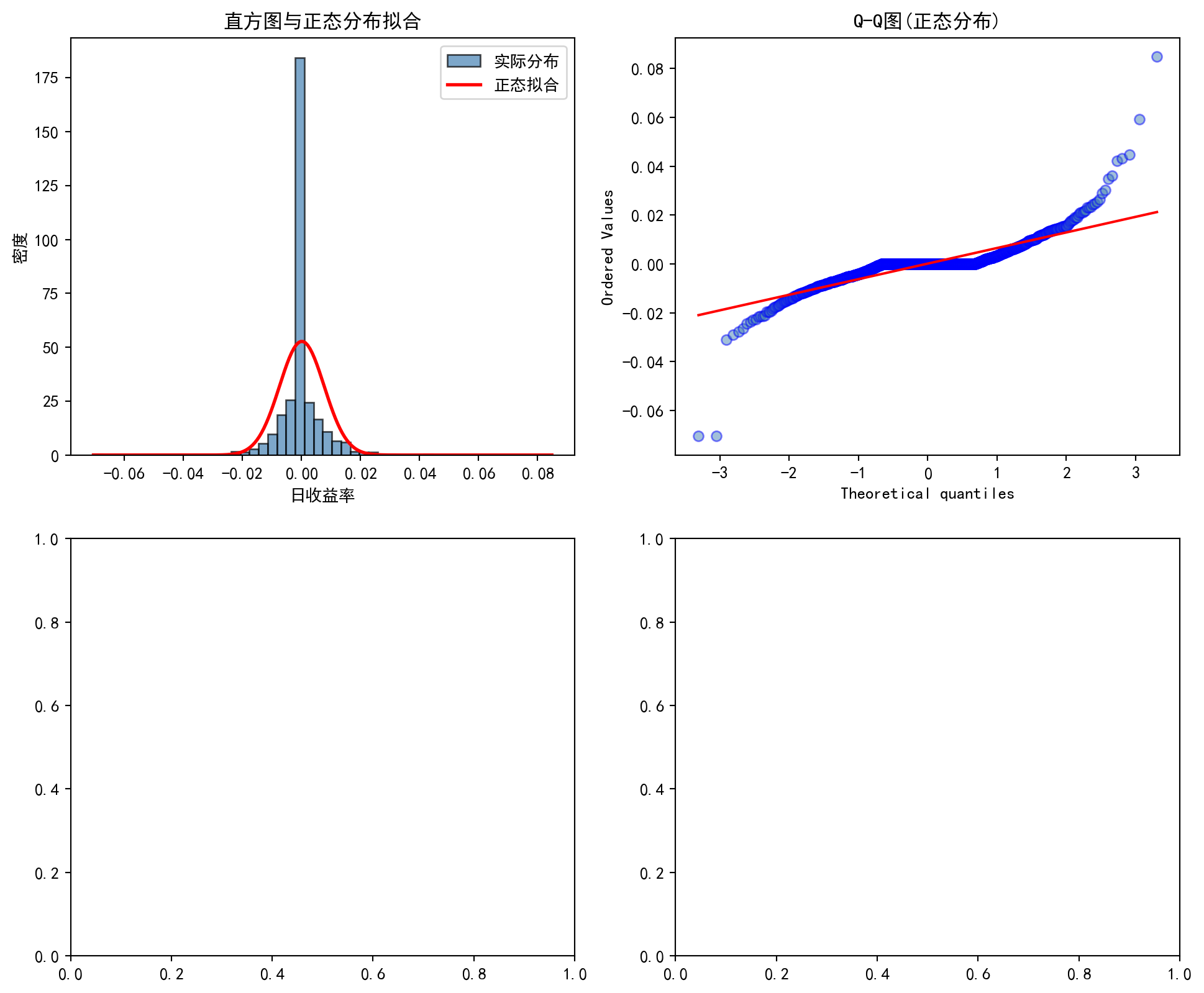

正态分布是金融学中最常用的假设之一(如Black-Scholes模型、马科维茨投资组合理论),但A股市场的实证数据表明,日收益率分布通常呈现尖峰厚尾(Leptokurtic)特征——峰度远大于3,意味着极端涨跌幅出现的概率远高于正态分布的预测。使用 stock_price_pre_adjusted.h5 中的历史收益率数据,通过计算偏度和峰度并进行Jarque-Bera检验,可以量化这种偏离程度。这一发现对风险管理至关重要:如果假设收益率服从正态分布,VaR(在险价值)模型将严重低估尾部风险。

The normal distribution is one of the most commonly adopted assumptions in finance (e.g., the Black-Scholes model and Markowitz’s Modern Portfolio Theory). However, empirical evidence from China’s A-share market shows that daily return distributions typically exhibit leptokurtic (fat-tailed) characteristics — kurtosis far exceeds 3, implying that the probability of extreme gains or losses is much higher than what the normal distribution predicts. Using historical return data from stock_price_pre_adjusted.h5, one can quantify the degree of this deviation by computing skewness and kurtosis and conducting the Jarque-Bera test. This finding is critical for risk management: if returns are assumed to follow a normal distribution, the Value-at-Risk (VaR) model will severely underestimate tail risk.

4.1.2 应用二:泊松分布与金融事件建模 (Application 2: The Poisson Distribution and Financial Event Modeling)

泊松分布广泛应用于金融领域中稀有事件的建模:

The Poisson distribution is widely applied to model rare events in finance:

信用风险:一定期间内企业债违约的次数可用泊松过程建模

跳跃扩散模型:Merton的跳跃扩散模型假设股价的跳跃次数服从泊松分布

操作风险:银行操作风险损失事件的频率建模

Credit risk: The number of corporate bond defaults within a given period can be modeled using a Poisson process.

Jump-diffusion models: Merton’s jump-diffusion model assumes that the number of jumps in stock prices follows a Poisson distribution.

Operational risk: Modeling the frequency of operational risk loss events in banking.

利用 financial_statement.h5 中的历史数据,可以统计特定行业在不同时期发生ST(特别处理)或退市事件的频率,验证这些稀有事件是否符合泊松分布的假定。

Using historical data from financial_statement.h5, one can tabulate the frequency of ST (Special Treatment) designations or delisting events across specific industries in different periods, and verify whether these rare events conform to the assumptions of the Poisson distribution.

4.1.3 应用三:t分布与小样本金融分析 (Application 3: The t-Distribution and Small-Sample Financial Analysis)

在金融研究中,许多重要的推断问题面临小样本约束。例如,评估一个新基金经理3年的业绩(仅36个月度数据),或分析IPO后12个月的超额收益——样本量远不足以依赖正态分布的大样本性质。此时,t分布成为正确推断的保障。t分布的”厚尾”特性迫使我们在小样本下做出更保守的判断,避免过度自信地宣称发现了”显著”的超额收益。这一方法将在 章节 7 中深入讨论。

In financial research, many important inference problems are constrained by small sample sizes. For instance, evaluating a new fund manager’s track record over 3 years (only 36 monthly observations), or analyzing post-IPO excess returns over 12 months — the sample sizes are far too small to rely on the large-sample properties of the normal distribution. In such cases, the t-distribution becomes the safeguard for correct inference. The “fat-tailed” property of the t-distribution compels us to make more conservative judgments with small samples, preventing overconfident claims of having discovered “significant” excess returns. This methodology is discussed in depth in 章节 7.

4.2 随机变量 (Random Variables)

4.2.1 定义 (Definition)

随机变量(random variable)是一个将样本空间映射到实数的函数。我们用大写字母(如 \(X, Y, Z\))表示随机变量,用小写字母(如 \(x, y, z\))表示其具体取值。

A random variable is a function that maps outcomes from the sample space to real numbers. We use uppercase letters (such as \(X, Y, Z\)) to denote random variables and lowercase letters (such as \(x, y, z\)) to denote their specific realized values.

分类:

- 离散型随机变量: 取值为可数集(如:抛硬币次数、缺陷产品数量)

- 连续型随机变量: 取值在某个区间内连续(如:日收益率、资产规模、市盈率)

Classification:

- Discrete random variable: Takes values from a countable set (e.g., number of coin flips, number of defective products).

- Continuous random variable: Takes values continuously within an interval (e.g., daily returns, asset size, price-to-earnings ratio).

理解随机变量:从结果到数值的映射

Understanding Random Variables: Mapping Outcomes to Numbers

考虑”掷两个骰子”这个试验:

- 样本空间: {(1,1), (1,2), …, (6,6)} 共36种结果

- 随机变量X: 定义为两个骰子的点数和

- X((1,1)) = 2

- X((1,2)) = X((2,1)) = 3

- …

- X((6,6)) = 12

Consider the experiment of “rolling two dice”:

- Sample space: {(1,1), (1,2), …, (6,6)}, a total of 36 outcomes.

- Random variable X: Defined as the sum of the two dice.

- X((1,1)) = 2

- X((1,2)) = X((2,1)) = 3

- …

- X((6,6)) = 12

这样,随机变量将抽象的样本结果映射为具体的数值,便于进行数学分析。

In this way, the random variable maps abstract sample outcomes to concrete numerical values, facilitating mathematical analysis.

4.3 离散型概率分布 (Discrete Probability Distributions)

4.3.1 概率质量函数 (PMF)

对于离散型随机变量 \(X\),概率质量函数(Probability Mass Function, PMF)定义如 式 4.1 所示:

For a discrete random variable \(X\), the Probability Mass Function (PMF) is defined as shown in 式 4.1:

\[ f(x) = P(X = x) \tag{4.1}\]

满足: 1. \(f(x) \geq 0\) 对所有 \(x\) 2. \(\sum_x f(x) = 1\)

The PMF must satisfy: 1. \(f(x) \geq 0\) for all \(x\) 2. \(\sum_x f(x) = 1\)

4.3.2 二项分布 (Binomial Distribution)

定义: \(X \sim \text{Binomial}(n, p)\)

Definition: \(X \sim \text{Binomial}(n, p)\)

\(n\) 次独立的伯努利试验(每次试验只有”成功”/“失败”两种结果)中,成功次数 \(X\) 的分布。

The distribution of the number of successes \(X\) in \(n\) independent Bernoulli trials (each trial has only two outcomes: “success” or “failure”).

概率质量函数如 式 4.2 所示:

The probability mass function is shown in 式 4.2:

\[ P(X = k) = \binom{n}{k} p^k (1-p)^{n-k}, \quad k = 0, 1, ..., n \tag{4.2}\]

其中 \(\binom{n}{k} = \frac{n!}{k!(n-k)!}\) 是二项系数。

where \(\binom{n}{k} = \frac{n!}{k!(n-k)!}\) is the binomial coefficient.

二项分布的期望与方差(式 4.3):

The expectation and variance of the binomial distribution (式 4.3):

\[ E[X] = np, \quad \text{Var}(X) = np(1-p) \tag{4.3}\]

二项分布的直观理解

Intuitive Understanding of the Binomial Distribution

考虑某银行每天审批100笔贷款申请,历史数据显示每笔申请的违约概率为2%。

Consider a bank that approves 100 loan applications per day, with historical data indicating a default probability of 2% for each application.

问题: 明天违约的贷款申请数量是多少?

Question: How many loan applications will default tomorrow?

这是一个二项分布问题: \(X \sim \text{Binomial}(100, 0.02)\)

This is a binomial distribution problem: \(X \sim \text{Binomial}(100, 0.02)\)

期望违约数: \(E[X] = 100 \times 0.02 = 2\) 笔

方差: \(\text{Var}(X) = 100 \times 0.02 \times 0.98 = 1.96\)

标准差: \(\sqrt{1.96} \approx 1.4\) 笔

Expected number of defaults: \(E[X] = 100 \times 0.02 = 2\)

Variance: \(\text{Var}(X) = 100 \times 0.02 \times 0.98 = 1.96\)

Standard deviation: \(\sqrt{1.96} \approx 1.4\)

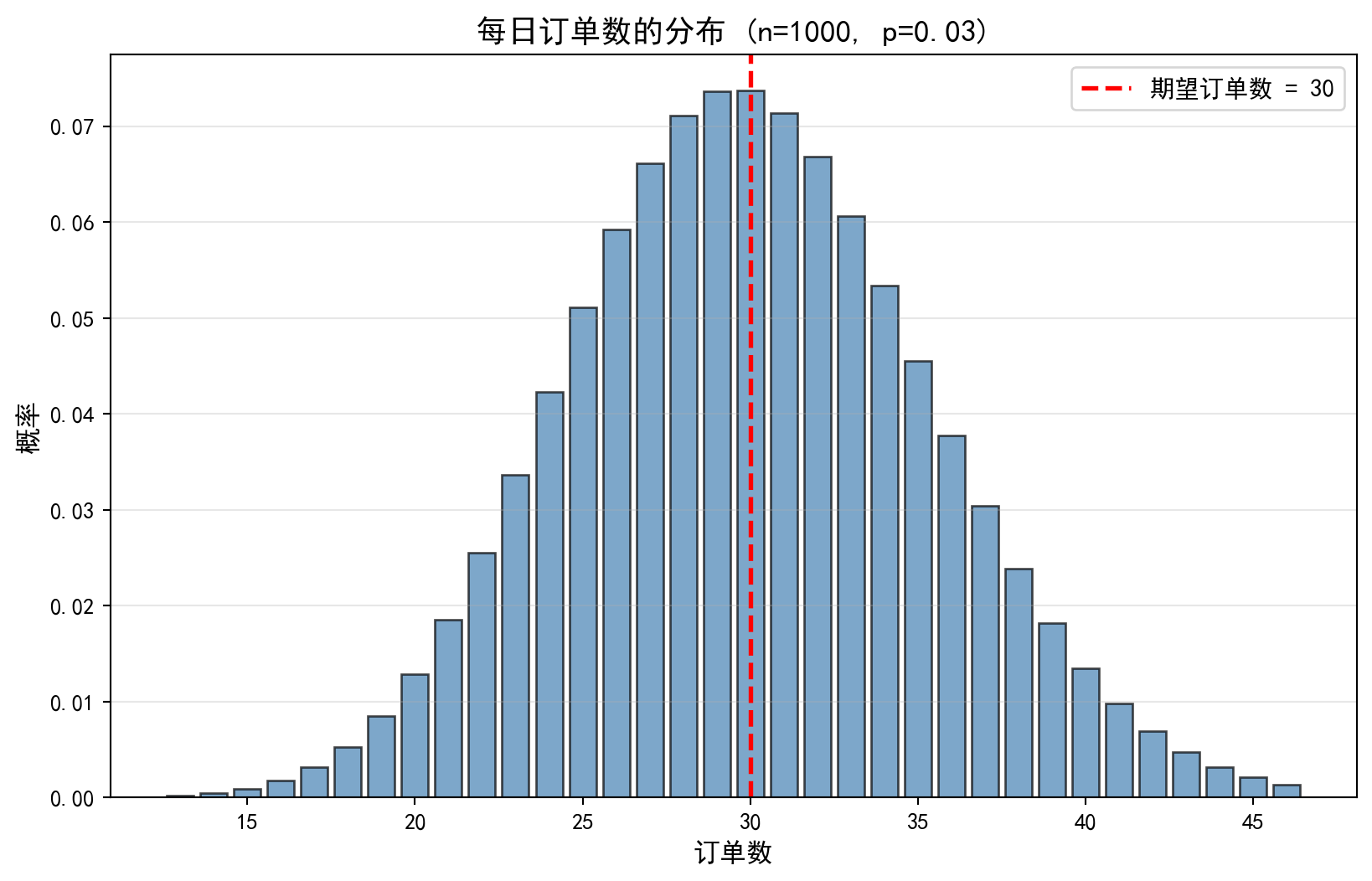

银行可以据此准备坏账准备金。注意:虽然平均是2笔,但实际违约数可能在0到6笔之间波动(3个标准差范围)。表 4.1 展示了一个电商平台转化率的二项分布分析案例。

The bank can use this information to prepare loan loss provisions. Note that although the average is 2, the actual number of defaults may fluctuate between 0 and 6 (within a 3-standard-deviation range). 表 4.1 presents a binomial distribution analysis of an e-commerce platform’s conversion rate.

4.3.2.1 案例:电商平台转化率 (Case Study: E-Commerce Conversion Rate)

什么是转化率?

What Is a Conversion Rate?

在电子商务领域,转化率(Conversion Rate)是衡量营销效果和用户体验的核心关键绩效指标(KPI)。它的定义是:在一定时间内,完成目标行为(如下单购买)的用户数占访问总用户数的比例。例如,某天一家电商网站有1000名访客,其中30人最终下单购买了商品,则当天的转化率为 \(30/1000 = 3\%\)。

In e-commerce, the conversion rate is the core Key Performance Indicator (KPI) for measuring marketing effectiveness and user experience. It is defined as the proportion of visitors who complete a target action (such as placing an order) out of the total number of visitors within a given time period. For example, if an e-commerce website receives 1,000 visitors in a day and 30 of them ultimately place an order, the conversion rate for that day is \(30/1000 = 3\%\).

从统计建模的角度看,每一位访客的行为可以看作一次独立的伯努利试验:要么购买(“成功”,概率为 \(p\)),要么不购买(“失败”,概率为 \(1-p\))。当我们关注 \(n\) 位独立访客中有多少人会购买时,购买人数 \(X\) 就服从二项分布 \(X \sim \text{Binomial}(n, p)\)。这使得二项分布成为分析电商转化率问题的天然工具——运营团队可以借此估算每日订单量的期望值和波动范围,从而合理安排库存和客服资源。

From a statistical modeling perspective, each visitor’s behavior can be viewed as an independent Bernoulli trial: either a purchase (“success,” with probability \(p\)) or no purchase (“failure,” with probability \(1-p\)). When we focus on how many of \(n\) independent visitors will make a purchase, the number of purchasers \(X\) follows a binomial distribution \(X \sim \text{Binomial}(n, p)\). This makes the binomial distribution a natural tool for analyzing e-commerce conversion rate problems — operations teams can use it to estimate the expected daily order volume and its range of fluctuation, thereby allocating inventory and customer service resources efficiently.

二项分布的期望和标准差计算完毕。下面基于这些参数计算概率质量函数并构建概率分布表。

The expectation and standard deviation of the binomial distribution have been computed. Next, we calculate the probability mass function based on these parameters and construct the probability distribution table.

# ========== 第4步:计算概率质量函数 ==========

# ========== Step 4: Compute the probability mass function ==========

# 取均值 ± 3倍标准差范围内的订单数(覆盖99.7%的可能结果)

# Consider order counts within the mean ± 3 standard deviations (covering 99.7% of possible outcomes)

order_count_values = list(range( # 生成μ±3σ范围内的整数订单数列表

# Generate a list of integer order counts within the μ±3σ range

int(expected_orders - 3 * standard_deviation_orders), # 下界:期望 - 3σ

# Lower bound: expectation - 3σ

int(expected_orders + 3 * standard_deviation_orders) + 1 # 上界:期望 + 3σ

# Upper bound: expectation + 3σ

))

# 对每个可能的订单数 k,利用二项分布PMF P(X=k) = C(n,k) * p^k * (1-p)^(n-k) 计算概率

# For each possible order count k, compute the probability using the binomial PMF: P(X=k) = C(n,k) * p^k * (1-p)^(n-k)

order_probabilities = [binom.pmf(k, number_of_visitors, estimated_conversion_rate) for k in order_count_values] # 计算每个订单数k对应的PMF概率值

# Compute the PMF probability value for each order count k

# ========== 第5步:构建概率分布表 ==========

# ========== Step 5: Construct the probability distribution table ==========

conversion_probabilities_dataframe = pd.DataFrame({ # 将概率分布整理为DataFrame表格

# Organize the probability distribution into a DataFrame table

'订单数': order_count_values, # 每日可能的成交订单数

# Possible daily completed order counts

'概率': [f'{prob:.4f}' for prob in order_probabilities], # 对应的PMF概率值

# Corresponding PMF probability values

'累积概率': [f'{binom.cdf(k, number_of_visitors, estimated_conversion_rate):.4f}' # 累积分布函数CDF值

# Cumulative distribution function (CDF) values

for k in order_count_values] # CDF累积概率 P(X ≤ k)

# CDF cumulative probability P(X ≤ k)

})

print('每日订单数的概率分布:') # 输出标题

# Print the output header

print(conversion_probabilities_dataframe) # 打印概率分布表

# Print the probability distribution table每日订单数的概率分布:

订单数 概率 累积概率

0 13 0.0002 0.0003

1 14 0.0005 0.0008

2 15 0.0009 0.0017

3 16 0.0018 0.0035

4 17 0.0031 0.0066

5 18 0.0053 0.0119

6 19 0.0085 0.0204

7 20 0.0129 0.0333

8 21 0.0186 0.0519

9 22 0.0256 0.0774

10 23 0.0336 0.1110

11 24 0.0423 0.1534

12 25 0.0511 0.2045

13 26 0.0593 0.2637

14 27 0.0661 0.3299

15 28 0.0711 0.4009

16 29 0.0737 0.4746

17 30 0.0737 0.5484

18 31 0.0714 0.6197

19 32 0.0668 0.6866

20 33 0.0606 0.7472

21 34 0.0533 0.8005

22 35 0.0455 0.8461

23 36 0.0377 0.8838

24 37 0.0304 0.9142

25 38 0.0238 0.9381

26 39 0.0182 0.9563

27 40 0.0135 0.9698

28 41 0.0098 0.9796

29 42 0.0069 0.9865

30 43 0.0048 0.9912

31 44 0.0032 0.9944

32 45 0.0021 0.9965

33 46 0.0014 0.9979上表输出了二项分布 \(B(300, 0.10)\) 的概率分布。从结果可以看出:概率最大的取值(众数)出现在订单数 29 和 30 处,二者的概率均为 0.0737,这与理论期望值 \(E(X) = np = 300 \times 0.10 = 30\) 完全一致。概率分布呈现出以期望值为中心的对称钟形特征:累积概率在 20 单时达到约 3.33%,在 37 单时累积至约 91.42%,说明每日订单数有约 95% 的概率落在 20—40 单的范围内。这一信息对于电商运营的资源配置具有直接指导意义:日常备货和客服排班只需覆盖 20—40 单的波动区间即可满足绝大多数情况。下面绘制概率分布柱状图进行可视化。

The table above displays the probability distribution of the binomial distribution \(B(300, 0.10)\). The results show that the most probable values (the mode) occur at 29 and 30 orders, each with a probability of 0.0737, perfectly consistent with the theoretical expectation \(E(X) = np = 300 \times 0.10 = 30\). The probability distribution exhibits a symmetric bell-shaped pattern centered around the expectation: the cumulative probability reaches approximately 3.33% at 20 orders and accumulates to approximately 91.42% at 37 orders, indicating that the daily order count falls within the 20–40 range with roughly 95% probability. This information provides direct guidance for e-commerce resource allocation: routine inventory preparation and customer service shift scheduling need only cover the 20–40 order fluctuation range to accommodate the vast majority of scenarios. We now plot a histogram to visualize the probability distribution.

# ========== 第6步:绘制概率分布柱状图 ==========

# ========== Step 6: Plot the probability distribution histogram ==========

conversion_figure, conversion_axes = plt.subplots(figsize=(10, 6)) # 创建10×6英寸画布

# Create a 10×6 inch figure canvas

conversion_bars = conversion_axes.bar( # 绘制柱状图

# Draw a bar chart

order_count_values, order_probabilities, # x轴=订单数, y轴=概率

# x-axis = order count, y-axis = probability

color='steelblue', alpha=0.7, edgecolor='black' # 钢蓝色填充、黑色边框

# Steel blue fill with black edge

)

# 添加期望值参考线

# Add a reference line for the expected value

conversion_axes.axvline( # 绘制期望值E(X)的垂直参考线

# Draw a vertical reference line at E(X)

expected_orders, color='red', linestyle='--', linewidth=2, # 红色虚线标记期望订单数

# Red dashed line marking the expected number of orders

label=f'期望订单数 = {expected_orders:.0f}' # 图例说明

# Legend label

)

# 设置坐标轴标签与标题

# Set axis labels and title

conversion_axes.set_xlabel('订单数', fontsize=12) # x轴标签

# x-axis label

conversion_axes.set_ylabel('概率', fontsize=12) # y轴标签

# y-axis label

conversion_axes.set_title( # 设置图表主标题

# Set the chart title

f'每日订单数的分布 (n={number_of_visitors}, p={estimated_conversion_rate})', # 标题含参数

# Title with parameters

fontsize=14 # 标题字号14磅

# Title font size 14pt

)

conversion_axes.legend(fontsize=11) # 显示图例

# Display the legend

conversion_axes.grid(True, axis='y', alpha=0.3) # 添加y轴网格线

# Add y-axis gridlines

概率分布柱状图基本框架已绘制完成。下面在每个柱体顶部标注具体概率值,方便读者直接读取数值。

The basic framework of the probability distribution histogram has been plotted. Next, we annotate the specific probability values on top of each bar for easy reading.

# ========== 第7步:在柱状图上标注概率值 ==========

# ========== Step 7: Annotate probability values on the histogram bars ==========

for current_bar, current_probability in zip(conversion_bars, order_probabilities): # 遍历每个柱体及对应概率

# Iterate over each bar and its corresponding probability

if current_probability > 0.01: # 仅标注概率 > 1% 的柱体

# Only annotate bars with probability > 1%

bar_height = current_bar.get_height() # 获取柱体高度

# Get the bar height

conversion_axes.text( # 在柱顶添加概率数值标注

# Add a text annotation at the top of the bar

current_bar.get_x() + current_bar.get_width() / 2., # 水平居中于柱体

# Horizontally centered on the bar

bar_height, # 标注在柱体顶部

# Position at the top of the bar

f'{current_probability:.3f}', # 显示三位小数概率

# Display probability to three decimal places

ha='center', va='bottom', fontsize=8 # 文字居中、底部对齐

# Text centered, bottom-aligned

)

plt.tight_layout() # 自动调整布局

# Automatically adjust the layout

plt.show() # 渲染并显示图表

# Render and display the chart<Figure size 672x480 with 0 Axes>上图清晰地展示了二项分布 \(B(300, 0.10)\) 的概率分布柱状图。图中可以观察到:分布呈现经典的钟形曲线特征,以期望值 \(E(X) = 30\)(红色虚线标注)为中心近似对称。概率最高的柱体集中在 29—31 单附近,概率约为 7.4%。随着订单数偏离期望值,概率迅速衰减——当订单数低于 20 或高于 40 时,单个取值的概率已不足 1%。这种分布形态直观地验证了大样本二项分布向正态分布收敛的理论:当 \(n\) 足够大且 \(np\) 和 \(n(1-p)\) 均大于 5 时(本例中 \(np=30\), \(n(1-p)=270\)),二项分布可以用正态分布 \(N(np, np(1-p))\) 良好近似。

The figure above clearly displays the probability distribution histogram of the binomial distribution \(B(300, 0.10)\). We observe that the distribution exhibits the classic bell-curve shape, approximately symmetric around the expected value \(E(X) = 30\) (marked by the red dashed line). The tallest bars are concentrated near 29–31 orders, with probabilities of approximately 7.4%. As the order count deviates from the expectation, the probability decays rapidly — when the order count falls below 20 or exceeds 40, the probability of any single value is already less than 1%. This distributional pattern intuitively validates the theoretical convergence of the binomial distribution to the normal distribution for large samples: when \(n\) is sufficiently large and both \(np\) and \(n(1-p)\) exceed 5 (in this example, \(np=30\) and \(n(1-p)=270\)), the binomial distribution is well approximated by the normal distribution \(N(np, np(1-p))\).

4.3.3 泊松分布 (Poisson Distribution)

定义: \(X \sim \text{Poisson}(\lambda)\)

Definition: \(X \sim \text{Poisson}(\lambda)\)

描述单位时间/空间内稀有事件发生的次数。参数 \(\lambda > 0\) 是事件发生的平均率。

It describes the number of occurrences of a rare event within a unit of time or space. The parameter \(\lambda > 0\) is the average rate of event occurrence.

概率质量函数如 式 4.4 所示:

The probability mass function is shown in 式 4.4:

\[ P(X = k) = \frac{e^{-\lambda} \lambda^k}{k!}, \quad k = 0, 1, 2, ... \tag{4.4}\]

泊松分布的期望与方差(式 4.5):

The expectation and variance of the Poisson distribution (式 4.5):

\[ E[X] = \lambda, \quad \text{Var}(X) = \lambda \tag{4.5}\]

数学推导:泊松分布是二项分布的极限

Mathematical Derivation: The Poisson Distribution as the Limit of the Binomial Distribution

为什么说”当 \(n\) 很大,\(p\) 很小时,二项分布就是泊松分布”?我们来推导一下。 设 \(X \sim B(n, p)\),令 \(\lambda = np\) 为常数,则 \(p = \lambda/n\)。

Why is it said that “when \(n\) is large and \(p\) is small, the binomial distribution becomes the Poisson distribution”? Let us derive this. Let \(X \sim B(n, p)\), and set \(\lambda = np\) as a constant, so \(p = \lambda/n\).

\[ P(X=k) = C_n^k p^k (1-p)^{n-k} = \frac{n(n-1)...(n-k+1)}{k!} (\frac{\lambda}{n})^k (1-\frac{\lambda}{n})^{n-k} \]

我们将式子重新组合:

We rearrange the expression:

\[ = \frac{\lambda^k}{k!} \cdot \underbrace{\frac{n}{n} \cdot \frac{n-1}{n} \cdot ... \cdot \frac{n-k+1}{n}}_{k \text{ items}} \cdot \underbrace{(1-\frac{\lambda}{n})^n}_{\to e^{-\lambda}} \cdot \underbrace{(1-\frac{\lambda}{n})^{-k}}_{\to 1} \]

当 \(n \to \infty\) 时: 1. 中间的 \(k\) 项分数都趋近于 1。 2. 根据重要极限公式,\((1-\frac{\lambda}{n})^n \to e^{-\lambda}\)。 3. 最后一项趋近于 1。

As \(n \to \infty\): 1. The middle \(k\) fractional terms all approach 1. 2. By the well-known limit formula, \((1-\frac{\lambda}{n})^n \to e^{-\lambda}\). 3. The last term approaches 1.

于是我们得到: \[ P(X=k) \to \frac{\lambda^k e^{-\lambda}}{k!} \] 这就是泊松分布的公式!这解释了为什么对于稀有事件(\(p\) 极小)但基数很大(\(n\) 很大)的场景(如车祸、客服电话),泊松分布是完美的模型。表 4.2 展示了一个客服中心来电量的泊松分布应用。

Thus we obtain: \[ P(X=k) \to \frac{\lambda^k e^{-\lambda}}{k!} \] This is precisely the Poisson distribution formula! This explains why, for scenarios involving rare events (very small \(p\)) with a large population base (very large \(n\)) — such as traffic accidents or customer service calls — the Poisson distribution is the perfect model. 表 4.2 presents an application of the Poisson distribution to the call volume at a customer service center.

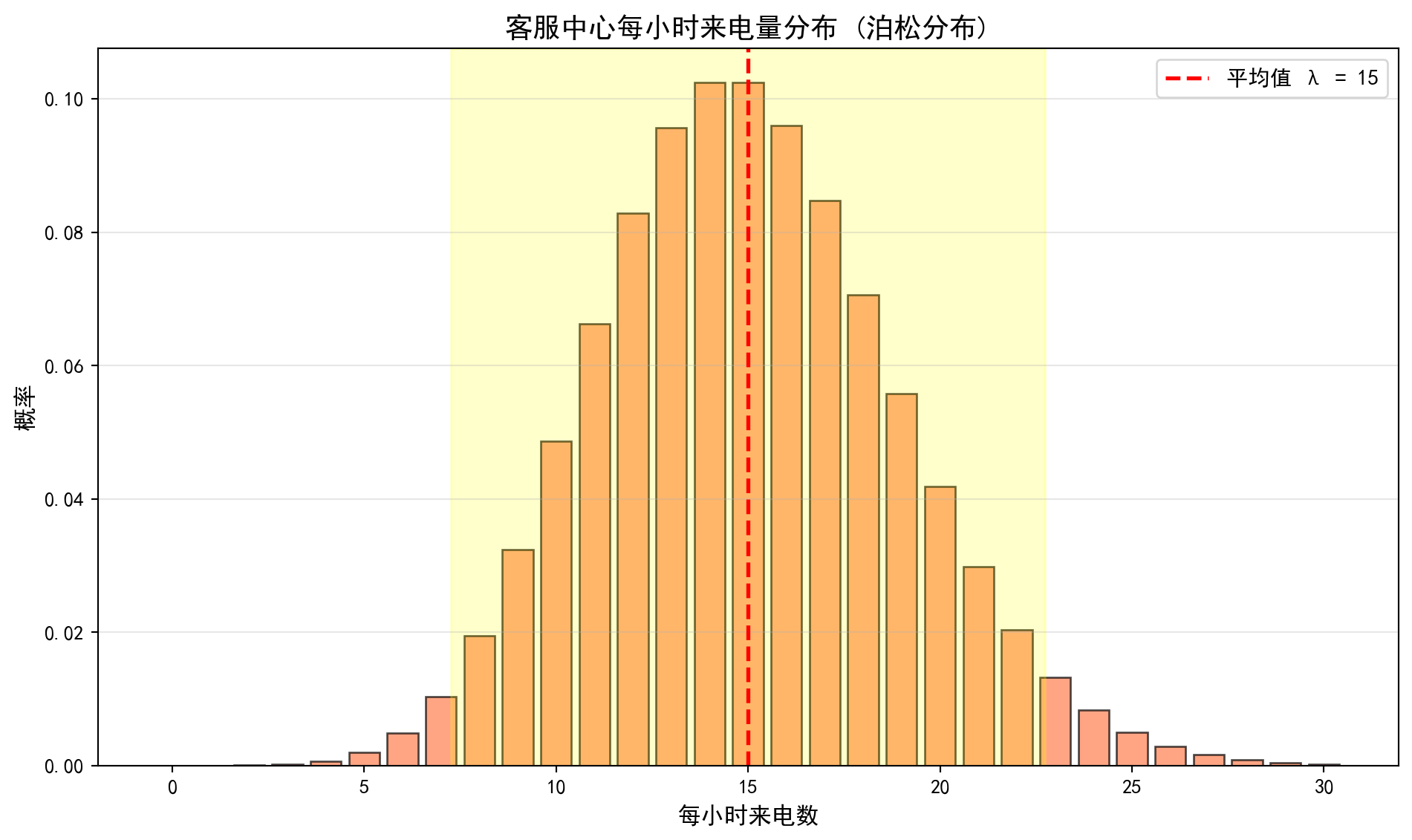

4.3.3.1 案例:客服中心来电量 (Case Study: Customer Service Call Volume)

什么是客服来电量的随机建模?

What Is Stochastic Modeling of Customer Service Call Volume?

对于电商平台、银行网点等服务型企业,客服中心每小时接到的来电数量是一个典型的”稀有事件计数”问题:每个潜在客户在单位时间内拨打电话的概率很低(\(p\)极小),但客户基数很大(\(n\)很大)。这恰好满足泊松分布的适用条件。理解来电量服从泊松分布,使得运营管理者能够预测不同来电数量出现的概率,从而合理安排客服人员数量、设置排队系统参数,避免人力不足导致客户流失或人力过剩造成成本浪费。

For service-oriented enterprises such as e-commerce platforms and bank branches, the number of calls received per hour by a customer service center is a classic “rare event counting” problem: the probability that any given potential customer will call within a unit of time is very low (extremely small \(p\)), but the customer base is very large (very large \(n\)). This precisely satisfies the conditions for applying the Poisson distribution. Understanding that call volume follows a Poisson distribution enables operations managers to predict the probability of different call volumes, thereby rationally scheduling customer service staff, configuring queuing system parameters, and avoiding either understaffing (leading to customer attrition) or overstaffing (wasting costs).

下面我们用泊松分布模拟一个平均每小时接到8个来电的客服中心,计算各种来电数量的概率。表 4.2 展示了计算结果。

Below, we use the Poisson distribution to model a customer service center that receives an average of 8 calls per hour, and compute the probability of various call volumes. 表 4.2 presents the computed results.

# ========== 导入所需库 ==========

# ========== Import required libraries ==========

import pandas as pd # 数据表格处理库

# Import the pandas library for tabular data manipulation

import numpy as np # 数值计算库

# Import the numpy library for numerical computation

from scipy.stats import poisson # 泊松分布统计函数

# Import the Poisson distribution statistical functions from scipy

import matplotlib.pyplot as plt # 数据可视化库

# Import the matplotlib library for data visualization

# ========== 第1步:设置绘图环境 ==========

# ========== Step 1: Configure the plotting environment ==========

plt.rcParams['font.sans-serif'] = ['SimHei'] # 设置中文黑体字体

# Set the Chinese font to SimHei (bold)

plt.rcParams['axes.unicode_minus'] = False # 解决负号显示问题

# Fix the display issue with negative signs

# ========== 第2步:定义客服场景参数 ==========

# ========== Step 2: Define customer service scenario parameters ==========

average_calls_per_hour = 15 # 泊松分布参数 λ = 15(平均每小时来电数)

# Poisson distribution parameter λ = 15 (average calls per hour)

# ========== 第3步:计算泊松分布概率 ==========

# ========== Step 3: Compute Poisson distribution probabilities ==========

max_calls_to_consider = 30 # 考虑的最大来电数上限

# Maximum number of calls to consider

possible_call_counts = list(range(0, max_calls_to_consider + 1)) # 生成 0~30 的整数序列

# Generate an integer sequence from 0 to 30

# 利用泊松PMF公式 P(X=k) = (λ^k * e^(-λ)) / k! 计算每个值的概率

# Compute the probability for each value using the Poisson PMF: P(X=k) = (λ^k * e^(-λ)) / k!

call_probabilities = [poisson.pmf(k, average_calls_per_hour) for k in possible_call_counts] # 计算每个来电数对应的泊松PMF概率

# Compute the Poisson PMF probability for each call count

# 找出概率最大的来电数(即众数)

# Find the call count with the highest probability (i.e., the mode)

most_likely_call_count = possible_call_counts[np.argmax(call_probabilities)] # argmax返回最大值索引

# argmax returns the index of the maximum value

# ========== 第4步:计算管理层关注的关键概率 ==========

# ========== Step 4: Compute key probabilities of managerial interest ==========

probability_at_most_20 = poisson.cdf(20, average_calls_per_hour) # P(X ≤ 20):来电不超过20的概率

# P(X ≤ 20): probability of receiving no more than 20 calls

probability_at_least_10 = 1 - poisson.cdf(9, average_calls_per_hour) # P(X ≥ 10) = 1 - P(X ≤ 9)

# P(X ≥ 10) = 1 - P(X ≤ 9)泊松分布概率计算完毕。下面构建概率分布表并输出关键管理指标。

The Poisson distribution probabilities have been computed. Next, we construct the probability distribution table and output the key managerial metrics.

print(f'平均每小时来电数: {average_calls_per_hour}') # 输出泊松参数λ

# Print the Poisson parameter λ

print(f'最可能的来电数: {most_likely_call_count} ' # 输出泊松分布的众数及其概率

# Print the mode of the Poisson distribution and its probability

f'(概率: {poisson.pmf(most_likely_call_count, average_calls_per_hour):.4f})') # 输出众数及其概率

# Print the mode and its probability

print(f'P(来电 ≤ 20) = {probability_at_most_20:.4f}') # 不超过20个电话的概率

# Probability of receiving no more than 20 calls

print(f'P(来电 ≥ 10) = {probability_at_least_10:.4f}') # 至少10个电话的概率

# Probability of receiving at least 10 calls

# ========== 第5步:构建概率分布表 ==========

# ========== Step 5: Construct the probability distribution table ==========

call_probabilities_dataframe = pd.DataFrame({ # 将来电量概率分布整理为表格

# Organize the call volume probability distribution into a table

'来电数': possible_call_counts[:15], # 展示前15种情况(0~14)

# Display the first 15 cases (0–14)

'概率': [f'{prob:.4f}' for prob in call_probabilities[:15]] # 对应的PMF概率值

# Corresponding PMF probability values

})

print('\n前15种情况的概率分布:') # 输出分节标题

# Print the subsection header

print(call_probabilities_dataframe) # 打印概率分布表

# Print the probability distribution table平均每小时来电数: 15

最可能的来电数: 15 (概率: 0.1024)

P(来电 ≤ 20) = 0.9170

P(来电 ≥ 10) = 0.9301

前15种情况的概率分布:

来电数 概率

0 0 0.0000

1 1 0.0000

2 2 0.0000

3 3 0.0002

4 4 0.0006

5 5 0.0019

6 6 0.0048

7 7 0.0104

8 8 0.0194

9 9 0.0324

10 10 0.0486

11 11 0.0663

12 12 0.0829

13 13 0.0956

14 14 0.1024上表输出了泊松分布 \(\text{Poisson}(\lambda=15)\) 的前 15 种取值的概率分布。从结果可以看出:概率最高值(众数)出现在来电数为 14 时,概率为 10.24%,这与参数 \(\lambda=15\) 非常接近(泊松分布的众数为 \(\lfloor\lambda\rfloor\) 或 \(\lfloor\lambda\rfloor - 1\))。分布主要集中在 7—23 次来电的范围内。此前计算的累积概率表明:\(P(X \leq 20) = 91.70\%\),\(P(X \geq 10) = 93.01\%\)。这意味着约 91.7% 的时段内来电不超过 20 次,可以据此配置客服人员——安排 20 线并发的接听能力即可覆盖超过 90% 的正常时段。同时,低于 10 次来电的概率仅约 7%,可作为低峰时段的参考阈值。下面绘制泊松分布柱状图进行可视化。

The table above displays the probability distribution of the first 15 values of the Poisson distribution \(\text{Poisson}(\lambda=15)\). The results show that the highest probability (mode) occurs at 14 calls, with a probability of 10.24%, which is very close to the parameter \(\lambda=15\) (the mode of a Poisson distribution is \(\lfloor\lambda\rfloor\) or \(\lfloor\lambda\rfloor - 1\)). The distribution is primarily concentrated in the 7–23 call range. The previously computed cumulative probabilities indicate: \(P(X \leq 20) = 91.70\%\) and \(P(X \geq 10) = 93.01\%\). This means that in approximately 91.7% of time periods, the number of calls does not exceed 20, which can be used to configure staffing — provisioning 20 concurrent call-handling lines is sufficient to cover over 90% of normal periods. Meanwhile, the probability of fewer than 10 calls is only about 7%, which can serve as a reference threshold for off-peak periods. We now plot a histogram to visualize the Poisson distribution.

# ========== 第6步:绘制泊松分布柱状图 ==========

# ========== Step 6: Plot the Poisson distribution histogram ==========

calls_figure, calls_axes = plt.subplots(figsize=(10, 6)) # 创建10×6英寸画布

# Create a 10×6 inch figure canvas

calls_bars = calls_axes.bar( # 绘制柱状图

# Draw a bar chart

possible_call_counts, call_probabilities, # x轴=来电数, y轴=概率

# x-axis = call count, y-axis = probability

color='coral', alpha=0.7, edgecolor='black' # 珊瑚色填充、黑色边框

# Coral fill with black edge

)

# 添加均值参考线 λ

# Add a reference line for the mean λ

calls_axes.axvline( # 绘制泊松分布均值λ的垂直参考线

# Draw a vertical reference line at the Poisson mean λ

average_calls_per_hour, color='red', linestyle='--', linewidth=2, # 红色虚线

# Red dashed line

label=f'平均值 λ = {average_calls_per_hour}' # 图例说明

# Legend label

)

calls_axes.set_xlabel('每小时来电数', fontsize=12) # x轴标签

# x-axis label

calls_axes.set_ylabel('概率', fontsize=12) # y轴标签

# y-axis label

calls_axes.set_title('客服中心每小时来电量分布 (泊松分布)', fontsize=14) # 图表标题

# Chart title

calls_axes.legend(fontsize=11) # 显示图例

# Display the legend

calls_axes.grid(True, axis='y', alpha=0.3) # 添加y轴网格线

# Add y-axis gridlines

# ========== 第7步:高亮显示 μ ± 2σ 的正常波动范围 ==========

# ========== Step 7: Highlight the normal fluctuation range of μ ± 2σ ==========

calls_standard_deviation = np.sqrt(average_calls_per_hour) # 泊松分布标准差 σ = √λ

# Poisson standard deviation σ = √λ

calls_axes.axvspan( # 绘制黄色半透明区域

# Draw a yellow semi-transparent region

average_calls_per_hour - 2 * calls_standard_deviation, # 左边界: λ - 2σ

# Left boundary: λ - 2σ

average_calls_per_hour + 2 * calls_standard_deviation, # 右边界: λ + 2σ

# Right boundary: λ + 2σ

alpha=0.2, color='yellow', # 黄色、20%透明度

# Yellow, 20% opacity

label=f'μ ± 2σ范围 ({average_calls_per_hour - 2*calls_standard_deviation:.0f}' # 标注正常波动范围的图例文本

# Legend text annotating the normal fluctuation range

f' 到 {average_calls_per_hour + 2*calls_standard_deviation:.0f})' # 图例标注范围

# Legend label for the range

)

plt.tight_layout() # 自动调整布局

# Automatically adjust the layout

plt.show() # 渲染并显示图表

# Render and display the chart

上图展示了泊松分布 \(\text{Poisson}(\lambda=15)\) 的柱状图。图中可以观察到:分布整体呈现轻微的右偏特征(正偏态),这是泊松分布的典型形态——当 \(\lambda\) 较小时右偏明显,随着 \(\lambda\) 增大逐渐趋于对称。红色虚线标注了均值 \(\lambda=15\) 的位置,黄色半透明区域标注了 \(\mu \pm 2\sigma\) 的范围(约 7 到 23),覆盖了约 95% 的概率质量。这一可视化结果对客服中心的资源调度具有直接参考价值:在 \(\mu \pm 2\sigma\) 范围外的来电量属于异常波动,管理者可以据此设置预警阈值——当某一时段来电量超过 23 次时可能需要启动应急机制,而低于 7 次时可以适当减少值班人数。

The figure above displays the histogram of the Poisson distribution \(\text{Poisson}(\lambda=15)\). We observe that the distribution exhibits a slight right skew (positive skewness), which is a typical characteristic of the Poisson distribution — the right skew is more pronounced when \(\lambda\) is small and gradually becomes more symmetric as \(\lambda\) increases. The red dashed line marks the mean \(\lambda=15\), and the yellow semi-transparent region marks the \(\mu \pm 2\sigma\) range (approximately 7 to 23), covering roughly 95% of the probability mass. This visualization provides direct reference value for resource scheduling at the customer service center: call volumes outside the \(\mu \pm 2\sigma\) range constitute abnormal fluctuations. Managers can use this to set alert thresholds — when call volume exceeds 23 in a given time period, an emergency mechanism may need to be activated, while call volumes below 7 may warrant a reduction in on-duty staff. ## 连续型概率分布 (Continuous Probability Distributions) {#sec-continuous-distributions}

4.3.4 概率密度函数 (PDF)

对于连续型随机变量 \(X\),概率密度函数(Probability Density Function, PDF) \(f(x)\) 满足:

For a continuous random variable \(X\), the Probability Density Function (PDF) \(f(x)\) satisfies:

\(f(x) \geq 0\) 对所有 \(x\)

\(\int_{-\infty}^{\infty} f(x) dx = 1\)

\(P(a \leq X \leq b) = \int_a^b f(x) dx\)

\(f(x) \geq 0\) for all \(x\)

\(\int_{-\infty}^{\infty} f(x) dx = 1\)

\(P(a \leq X \leq b) = \int_a^b f(x) dx\)

重要: PDF不是概率!

Important: The PDF Is Not a Probability!

对于连续型随机变量,\(P(X = x) = 0\) 对任意单点 \(x\) 成立。概率密度 \(f(x)\) 不是概率,而是一个”密度”概念。

For a continuous random variable, \(P(X = x) = 0\) holds for any single point \(x\). The probability density \(f(x)\) is not a probability but rather a concept of “density.”

正确理解: - \(f(x)\) 反映了在 \(x\) 附近取值的”密集程度” - 区间概率是PDF在该区间下的面积 - \(f(x)\) 可以大于1,但任何区间的概率不能超过1

Correct Interpretation: - \(f(x)\) reflects the “concentration” of values near \(x\) - The probability over an interval equals the area under the PDF over that interval - \(f(x)\) can exceed 1, but the probability over any interval cannot exceed 1

4.3.5 正态分布 (Normal Distribution)

定义: \(X \sim N(\mu, \sigma^2)\)

Definition: \(X \sim N(\mu, \sigma^2)\)

正态分布(又称高斯分布)是最重要的连续型分布,是统计学理论的基石。

The normal distribution (also known as the Gaussian distribution) is the most important continuous distribution and serves as the cornerstone of statistical theory.

概率密度函数如 式 4.6 所示:

The probability density function is shown in 式 4.6:

\[ f(x) = \frac{1}{\sqrt{2\pi}\sigma} \exp\left[-\frac{(x-\mu)^2}{2\sigma^2}\right] \tag{4.6}\]

其中: - \(\mu\): 均值(位置参数) - \(\sigma\): 标准差(尺度参数)

where: - \(\mu\): mean (location parameter) - \(\sigma\): standard deviation (scale parameter)

正态分布的期望与方差(式 4.7):

The expectation and variance of the normal distribution (式 4.7):

\[ E[X] = \mu, \quad \text{Var}(X) = \sigma^2 \tag{4.7}\]

标准正态分布: \(Z \sim N(0, 1)\)

Standard Normal Distribution: \(Z \sim N(0, 1)\)

任意正态分布可以通过标准化转换为标准正态分布(见 式 4.8):

Any normal distribution can be converted to the standard normal distribution through standardization (see 式 4.8):

\[ Z = \frac{X - \mu}{\sigma} \tag{4.8}\]

几何直觉:为什么是正态分布?(最大熵原理)

Geometric Intuition: Why the Normal Distribution? (The Maximum Entropy Principle)

正态分布不仅仅因为”中心极限定理”而重要,它还是大自然”最诚实”的分布。

The normal distribution is important not only because of the Central Limit Theorem—it is also nature’s “most honest” distribution.

假设我们就知道数据的均值是 \(\mu\),方差是 \(\sigma^2\),除此之外一无所知。在这个约束下,哪个分布包含的信息量最少(即熵最大,最不偏不倚)?

Suppose all we know about the data is that the mean is \(\mu\) and the variance is \(\sigma^2\), and nothing else. Under this constraint, which distribution contains the least information (i.e., has the maximum entropy and is the most unbiased)?

答案就是:正态分布。

The answer is: the normal distribution.

如果我们假设数据在有限区间,熵最大的是均匀分布。

If we assume the data lies on a finite interval, the maximum-entropy distribution is the uniform distribution.

如果我们假设均值固定且非负,熵最大的是指数分布。

If we assume a fixed, non-negative mean, the maximum-entropy distribution is the exponential distribution.

如果我们引入方差约束,熵最大的就是正态分布。

If we introduce a variance constraint, the maximum-entropy distribution is the normal distribution.

所以,正态分布是我们在已知均值和方差的情况下,对未知世界所能做的最保守的假设。图 4.1 展示了真实股票数据与正态分布的拟合效果。

Therefore, the normal distribution represents the most conservative assumption we can make about the unknown world given knowledge of the mean and variance. 图 4.1 illustrates the fit of a normal distribution to real stock data.

4.3.6 指数分布 (Exponential Distribution)

定义: \(X \sim \text{Exp}(\lambda)\)

Definition: \(X \sim \text{Exp}(\lambda)\)

描述等待时间(time until event),参数 \(\lambda > 0\) 是事件发生率。

Describes waiting time (time until an event occurs), where the parameter \(\lambda > 0\) is the event rate.

概率密度函数如 式 4.9 所示:

The probability density function is shown in 式 4.9:

\[ f(x) = \lambda e^{-\lambda x}, \quad x \geq 0 \tag{4.9}\]

累积分布函数如 式 4.10 所示:

The cumulative distribution function is shown in 式 4.10:

\[ F(x) = P(X \leq x) = 1 - e^{-\lambda x} \tag{4.10}\]

指数分布的期望与方差(式 4.11):

The expectation and variance of the exponential distribution (式 4.11):

\[ E[X] = \frac{1}{\lambda}, \quad \text{Var}(X) = \frac{1}{\lambda^2} \tag{4.11}\]

指数分布的无记忆性

The Memoryless Property of the Exponential Distribution

指数分布具有独特的无记忆性(memoryless property):

The exponential distribution possesses a unique memoryless property:

\[ P(X > s + t | X > s) = P(X > t) \]

直观解释: 如果已经等待了 \(s\) 时间,事件仍未发生,那么从现在开始再等待 \(t\) 时间的概率,与从头开始等待 \(t\) 时间的概率相同。过去的等待时间不影响未来的等待时间。

Intuitive Explanation: If one has already waited \(s\) units of time and the event has not yet occurred, the probability of waiting an additional \(t\) units from this point onward is identical to the probability of waiting \(t\) units from the very beginning. Past waiting time has no influence on future waiting time.

应用场景: 电子元件寿命、客户到达间隔时间、电话通话时长等。表 4.3 展示了客服服务时长的指数分布分析。

Application Scenarios: Lifetimes of electronic components, inter-arrival times of customers, telephone call durations, etc. 表 4.3 presents an exponential distribution analysis of customer service durations.

4.3.6.1 案例:客户服务时间 (Case Study: Customer Service Time)

什么是服务时间的指数分布建模?

What Is Exponential Distribution Modeling of Service Time?

在服务运营管理中,客户等待时间和服务时长的建模是排队论(Queueing Theory)的核心。指数分布因其”无记忆性”特征——即无论客户已经等了多久,未来还需等待的时间分布不变——成为描述服务时间最常用的概率模型。它广泛应用于银行柜台、医院候诊、呼叫中心等场景。通过指数分布,管理者可以回答关键运营问题:例如”5分钟内完成服务的概率是多少”“超过20分钟的’慢单’占比多大”,从而制定服务级别协议(SLA)和人员调度策略。

In service operations management, modeling customer waiting times and service durations is at the core of Queueing Theory. The exponential distribution, with its memoryless property—meaning that regardless of how long a customer has already waited, the distribution of the remaining waiting time stays the same—is the most commonly used probabilistic model for describing service times. It is widely applied in scenarios such as bank counters, hospital waiting rooms, and call centers. Through the exponential distribution, managers can answer critical operational questions, such as “What is the probability of completing service within 5 minutes?” or “What proportion of ‘slow tickets’ exceed 20 minutes?”, thereby informing Service Level Agreements (SLAs) and staffing strategies.

下面我们以一个平均服务时长10分钟(即\(\lambda=0.1\)/分钟)的客服场景为例,计算管理层关注的关键概率。

Below, we use a customer service scenario with an average service duration of 10 minutes (i.e., \(\lambda=0.1\) per minute) as an example to compute the key probabilities that management focuses on.

# ========== 导入所需库 ==========

# ========== Import Required Libraries ==========

import pandas as pd # 数据表格处理库

# Import the pandas library for tabular data manipulation

import numpy as np # 数值计算库

# Import the numpy library for numerical computation

from scipy.stats import expon # 指数分布统计函数

# Import the exponential distribution functions from scipy.stats

import matplotlib.pyplot as plt # 数据可视化库

# Import the matplotlib library for data visualization

# ========== 第1步:设置绘图环境 ==========

# ========== Step 1: Configure the Plotting Environment ==========

plt.rcParams['font.sans-serif'] = ['SimHei'] # 设置中文黑体字体

# Set the Chinese SimHei font for displaying Chinese characters

plt.rcParams['axes.unicode_minus'] = False # 解决负号显示问题

# Fix the minus sign display issue in matplotlib

# ========== 第2步:定义客服场景参数 ==========

# ========== Step 2: Define Customer Service Scenario Parameters ==========

service_rate_lambda = 0.1 # 服务率参数 λ = 0.1 (平均每分钟服务0.1个客户)

# Service rate parameter λ = 0.1 (on average, 0.1 customers served per minute)

exponential_scale = 1 / service_rate_lambda # scale = 1/λ = 10分钟(平均服务时长)

# Scale parameter = 1/λ = 10 minutes (mean service duration)

# ========== 第3步:计算管理层关注的关键概率 ==========

# ========== Step 3: Compute Key Probabilities of Managerial Interest ==========

# P(X ≤ 5): 5分钟内完成服务的概率

# P(X ≤ 5): Probability of completing service within 5 minutes

probability_within_5_minutes = expon.cdf(5, scale=exponential_scale) # 指数分布CDF计算5分钟内完成概率

# Compute the probability of completion within 5 minutes using the exponential CDF

# P(X ≤ 10): 10分钟内完成服务的概率

# P(X ≤ 10): Probability of completing service within 10 minutes

probability_within_10_minutes = expon.cdf(10, scale=exponential_scale) # 指数分布CDF计算10分钟内完成概率

# Compute the probability of completion within 10 minutes using the exponential CDF

# P(X > 20): 服务超过20分钟的概率,即"慢单"率

# P(X > 20): Probability that service exceeds 20 minutes, i.e., the "slow ticket" rate

probability_exceed_20_minutes = 1 - expon.cdf(20, scale=exponential_scale) # 超过20分钟的尾部概率

# Tail probability of exceeding 20 minutes

print(f'平均服务时长: {exponential_scale:.1f} 分钟') # 输出平均服务时长

# Print the mean service duration

print(f'P(服务 ≤ 5分钟) = {probability_within_5_minutes:.4f}' # 输出5分钟内完成概率

f' ({probability_within_5_minutes:.2%})') # 5分钟内完成概率

# Print the probability of completing service within 5 minutes

print(f'P(服务 ≤ 10分钟) = {probability_within_10_minutes:.4f}' # 输出10分钟内完成概率

f' ({probability_within_10_minutes:.2%})') # 10分钟内完成概率

# Print the probability of completing service within 10 minutes

print(f'P(服务 > 20分钟) = {probability_exceed_20_minutes:.4f}' # 输出超过20分钟概率

f' ({probability_exceed_20_minutes:.2%})') # 超过20分钟概率

# Print the probability of service exceeding 20 minutes平均服务时长: 10.0 分钟

P(服务 ≤ 5分钟) = 0.3935 (39.35%)

P(服务 ≤ 10分钟) = 0.6321 (63.21%)

P(服务 > 20分钟) = 0.1353 (13.53%)上述结果输出了指数分布 \(\text{Exp}(\lambda=0.1)\)(即平均服务时长 10 分钟)的三个关键概率。\(P(\text{服务} \leq 5\text{分钟}) = 39.35\%\),意味着不到四成的客户能在 5 分钟内完成服务,这表明如果企业将”5 分钟内响应”作为服务承诺,大约六成客户将无法满足此标准。\(P(\text{服务} \leq 10\text{分钟}) = 63.21\%\),即约三分之二的客户可以在平均服务时长内完成——这体现了指数分布的一个重要特性:中位数(\(\ln 2 / \lambda \approx 6.93\) 分钟)小于均值(10 分钟),因为少数耗时极长的服务拉高了平均值。\(P(\text{服务} > 20\text{分钟}) = 13.53\%\),说明仍有约 13.5% 的客户需要超过两倍均值的等待时间,这部分长时间等待可能是客户投诉的主要来源。下面构建不同时长的服务完成概率汇总表。

The results above report three key probabilities from the exponential distribution \(\text{Exp}(\lambda=0.1)\) (i.e., a mean service duration of 10 minutes). \(P(\text{Service} \leq 5\text{ min}) = 39.35\%\), meaning fewer than four out of ten customers can be served within 5 minutes; this implies that if the company promises “response within 5 minutes,” approximately 60% of customers would not meet this standard. \(P(\text{Service} \leq 10\text{ min}) = 63.21\%\), i.e., roughly two-thirds of customers can be served within the mean service duration—this reflects an important property of the exponential distribution: the median (\(\ln 2 / \lambda \approx 6.93\) minutes) is less than the mean (10 minutes), because a small number of extremely long service times inflate the average. \(P(\text{Service} > 20\text{ min}) = 13.53\%\), indicating that approximately 13.5% of customers require more than twice the mean waiting time; this segment of prolonged waits is likely the primary source of customer complaints. Below, we construct a summary table of service completion probabilities at various durations.

# ========== 第4步:构建不同时长的概率表 ==========

# ========== Step 4: Construct the Probability Table for Various Service Durations ==========

service_time_thresholds = [3, 5, 10, 15, 20, 30] # 关注的时间节点(分钟)

# Time thresholds of interest (in minutes)

service_times_dataframe = pd.DataFrame({ # 构建不同时长的服务完成概率表

# Construct a DataFrame of service completion probabilities at various durations

'服务时长(分钟)': service_time_thresholds, # 时间阈值

# Time thresholds

'完成概率': [f'{expon.cdf(t, scale=exponential_scale):.4f}' # 每个时间点的CDF值

# CDF value at each time point

for t in service_time_thresholds], # CDF值

'超出概率': [f'{1-expon.cdf(t, scale=exponential_scale):.4f}' # 超出时间阈值的概率

# Probability of exceeding the time threshold

for t in service_time_thresholds] # 1 - CDF

})

print('\n不同时长的服务完成概率:') # 输出分节标题

# Print section heading

print(service_times_dataframe) # 打印概率表

# Print the probability table

不同时长的服务完成概率:

服务时长(分钟) 完成概率 超出概率

0 3 0.2592 0.7408

1 5 0.3935 0.6065

2 10 0.6321 0.3679

3 15 0.7769 0.2231

4 20 0.8647 0.1353

5 30 0.9502 0.0498上表构建了一个完整的服务时长—概率对照表,从 3 分钟到 30 分钟共 6 个关键时间节点。从”完成概率”列可以看出概率随时间递增但增速递减:3 分钟内完成的概率仅为 25.92%,5 分钟为 39.35%,10 分钟为 63.21%,15 分钟为 77.69%,20 分钟为 86.47%,30 分钟为 95.02%。这一递减的边际增量体现了指数分布的”无记忆性”(memoryless property):无论客户已经等待了多长时间,未来完成服务所需的额外等待时间的分布始终相同。从服务水平协议(SLA)的角度,这张表可以直接指导管理决策:如果企业承诺”95% 的客户在 30 分钟内完成服务”,当前参数下恰好能够达标;但如果目标是”90% 在 20 分钟内完成”,则尚有约 3.5% 的缺口,需要通过增加客服人员来降低平均服务时长 \(1/\lambda\)。下面绘制 PDF 和 CDF 双子图,直观展示指数分布的形态特征和累积概率变化。

The table above provides a complete service duration–probability lookup covering six key time thresholds from 3 to 30 minutes. From the “Completion Probability” column, we observe that probability increases with time but at a diminishing marginal rate: the probability of completing within 3 minutes is only 25.92%, 5 minutes yields 39.35%, 10 minutes yields 63.21%, 15 minutes yields 77.69%, 20 minutes yields 86.47%, and 30 minutes yields 95.02%. This diminishing marginal increment reflects the memoryless property of the exponential distribution: regardless of how long a customer has already waited, the distribution of the additional time needed to complete service remains the same. From a Service Level Agreement (SLA) perspective, this table directly informs managerial decisions: if the company promises “95% of customers served within 30 minutes,” the current parameters just barely meet the target; however, if the goal is “90% within 20 minutes,” there is approximately a 3.5% gap, which would require adding more customer service personnel to reduce the mean service duration \(1/\lambda\). Below, we plot the PDF and CDF in a dual-panel figure to visually illustrate the shape characteristics and cumulative probability trajectory of the exponential distribution.

# ========== 第5步:绘制PDF和CDF双子图(左图:PDF) ==========

# ========== Step 5: Plot the PDF and CDF Dual-Panel Figure (Left: PDF) ==========

exponential_figure, exponential_axes = plt.subplots(1, 2, figsize=(14, 5)) # 创建1行2列子图

# Create a figure with 1 row and 2 columns of subplots

# --- 左图:概率密度函数 (PDF) ---

# --- Left Panel: Probability Density Function (PDF) ---

x_axis_values = np.linspace(0, 50, 1000) # 生成x轴坐标点

# Generate x-axis coordinate points

pdf_values_array = expon.pdf(x_axis_values, scale=exponential_scale) # 计算指数PDF值

# Compute the exponential PDF values

exponential_axes[0].plot( # 绘制PDF曲线

# Plot the PDF curve

x_axis_values, pdf_values_array, 'b-', linewidth=2.5, # 蓝色实线、线宽2.5

# Blue solid line, line width 2.5

label=f'Exp(λ={service_rate_lambda})' # 图例标注指数分布参数

# Legend label with exponential distribution parameters

)

exponential_axes[0].fill_between(x_axis_values, pdf_values_array, alpha=0.3) # 添加填充区域

# Add a shaded area under the curve

# 标注均值参考线

# Mark the mean reference line

exponential_axes[0].axvline( # 绘制平均服务时长的垂直参考线

# Draw a vertical reference line at the mean service duration

exponential_scale, color='red', linestyle='--', linewidth=2, # 红色虚线标记均值

# Red dashed line marking the mean

label=f'均值 = {exponential_scale:.1f}分钟' # 图例显示平均服务时长

# Legend displaying the mean service duration

)

# 高亮显示10分钟内完成的概率区域

# Highlight the probability area for completion within 10 minutes

x_axis_shade_values = np.linspace(0, 10, 100) # 0~10分钟范围

# Range from 0 to 10 minutes

exponential_axes[0].fill_between( # 填充P(X≤10)的概率区域

# Fill the probability region for P(X ≤ 10)

x_axis_shade_values, # 0~10分钟的x轴范围

# x-axis range from 0 to 10 minutes

expon.pdf(x_axis_shade_values, scale=exponential_scale), # 对应的PDF值

# Corresponding PDF values

alpha=0.5, color='green', # 绿色填充区域

# Green shaded region

label=f'10分钟内完成概率: {probability_within_10_minutes:.2%}' # 图例标注概率值

# Legend label with the probability value

)

exponential_axes[0].set_xlabel('服务时长 (分钟)', fontsize=12) # x轴标签

# Set x-axis label

exponential_axes[0].set_ylabel('概率密度', fontsize=12) # y轴标签

# Set y-axis label

exponential_axes[0].set_title('客服服务时长的概率密度函数', fontsize=14) # 子图标题

# Set subplot title

exponential_axes[0].legend(fontsize=10) # 显示图例

# Display legend

exponential_axes[0].grid(True, alpha=0.3) # 添加网格线

# Add grid lines

概率密度函数(PDF)子图绘制完成。下面绘制累积分布函数(CDF)子图,并在曲线上标注10分钟关键概率阈值。

The Probability Density Function (PDF) subplot is complete. Next, we plot the Cumulative Distribution Function (CDF) subplot and annotate the key probability threshold at 10 minutes on the curve.

# --- 右图:累积分布函数 (CDF) ---

# --- Right Panel: Cumulative Distribution Function (CDF) ---

cdf_values_array = expon.cdf(x_axis_values, scale=exponential_scale) # 计算CDF值

# Compute the CDF values

exponential_axes[1].plot( # 绘制CDF曲线

# Plot the CDF curve

x_axis_values, cdf_values_array, 'g-', linewidth=2.5, label='CDF' # 绿色实线表示累积概率

# Green solid line representing cumulative probability

)

# 标注关键点 F(10)

# Annotate the key point F(10)

exponential_axes[1].scatter( # 在CDF曲线上标记点

# Mark the point on the CDF curve

[10], [probability_within_10_minutes], color='red', s=100, zorder=5 # 红色散点标记F(10)的位置

# Red scatter point marking the location of F(10)

)

exponential_axes[1].annotate( # 添加注释箭头

# Add an annotation arrow

f'F(10) = {probability_within_10_minutes:.2%}', # 标注CDF值文本

# Annotation text for the CDF value

xy=(10, probability_within_10_minutes), # 箭头指向的点

# Point the arrow targets

xytext=(15, probability_within_10_minutes - 0.1), fontsize=11, # 文本位置和字号

# Text position and font size

arrowprops=dict(arrowstyle='->', color='red') # 红色箭头样式

# Red arrow style

)

exponential_axes[1].set_xlabel('服务时长 (分钟)', fontsize=12) # x轴标签

# Set x-axis label

exponential_axes[1].set_ylabel('累积概率', fontsize=12) # y轴标签

# Set y-axis label

exponential_axes[1].set_title('服务时长的累积分布函数', fontsize=14) # 子图标题

# Set subplot title

exponential_axes[1].legend(fontsize=10) # 显示图例

# Display legend

exponential_axes[1].grid(True, alpha=0.3) # 添加网格线

# Add grid lines

plt.tight_layout() # 自动调整布局

# Automatically adjust the layout

plt.show() # 渲染并显示图表

# Render and display the figure<Figure size 672x480 with 0 Axes>上图通过 PDF(左图)和 CDF(右图)双子图全面展示了指数分布 \(\text{Exp}(\lambda=0.1)\) 的形态特征。左图(概率密度函数)呈现了指数分布典型的单调递减曲线:在 \(x=0\) 处密度最高(\(f(0) = \lambda = 0.1\)),随后以指数速率衰减,这意味着短时间即完成服务的概率密度最大,随着服务时长增加,密度迅速下降。阴影区域标注了 \(P(X \leq 10) = 63.21\%\) 的面积,直观地展示了”区间面积即概率”的核心概念。右图(累积分布函数)呈现了 S 形增长曲线:初始阶段增长迅速(前 10 分钟内累积概率已达到 63.21%),随后增速趋缓,逐渐趋近于 1.0。垂直虚线标注了中位数(约 6.93 分钟)和均值(10 分钟)的位置,清晰地展示了指数分布中中位数小于均值这一右偏特征。两图结合,完整呈现了指数分布的密度形态、累积趋势和关键百分位数。

The figure above comprehensively illustrates the shape characteristics of the exponential distribution \(\text{Exp}(\lambda=0.1)\) through dual PDF (left) and CDF (right) panels. The left panel (probability density function) displays the classic monotonically decreasing curve of the exponential distribution: the density is highest at \(x=0\) (\(f(0) = \lambda = 0.1\)) and then decays at an exponential rate. This means that the probability density is greatest for very short service times and drops off rapidly as the service duration increases. The shaded area marks \(P(X \leq 10) = 63.21\%\), visually demonstrating the core concept that “area under the curve equals probability.” The right panel (cumulative distribution function) shows an S-shaped growth curve: during the initial phase, it rises rapidly (cumulative probability reaches 63.21% within the first 10 minutes), then the rate of increase decelerates, gradually approaching 1.0. Vertical dashed lines mark the positions of the median (approximately 6.93 minutes) and the mean (10 minutes), clearly illustrating the right-skewed characteristic of the exponential distribution where the median is less than the mean. Together, the two panels present a complete picture of the exponential distribution’s density shape, cumulative trend, and key percentiles. ## 中心极限定理 (Central Limit Theorem) {#sec-clt}

4.3.7 定理陈述 (Statement of the Theorem)

林德伯格-列维中心极限定理(Lindeberg-Lévy CLT):

The Lindeberg-Lévy Central Limit Theorem (Lindeberg-Lévy CLT):

设 \(X_1, X_2, ..., X_n\) 是独立同分布(i.i.d.)的随机变量序列,具有 \(E[X_i] = \mu\) 和 \(\text{Var}(X_i) = \sigma^2 < \infty\)。定义样本均值为 \(\bar{X}_n = \frac{1}{n}\sum_{i=1}^n X_i\)。则当 \(n \to \infty\) 时,标准化统计量的极限分布如 式 4.12 所示:

Let \(X_1, X_2, ..., X_n\) be a sequence of independent and identically distributed (i.i.d.) random variables with \(E[X_i] = \mu\) and \(\text{Var}(X_i) = \sigma^2 < \infty\). Define the sample mean as \(\bar{X}_n = \frac{1}{n}\sum_{i=1}^n X_i\). Then as \(n \to \infty\), the limiting distribution of the standardized statistic is given by 式 4.12:

\[ \sqrt{n}\left(\frac{\bar{X}_n - \mu}{\sigma}\right) \xrightarrow{d} N(0, 1) \tag{4.12}\]

等价地,样本均值的渐近分布如 式 4.13 所示:

Equivalently, the asymptotic distribution of the sample mean is given by 式 4.13:

\[ \bar{X}_n \xrightarrow{d} N\left(\mu, \frac{\sigma^2}{n}\right) \tag{4.13}\]

中心极限定理的深刻意义

The Profound Significance of the Central Limit Theorem

CLT是统计学中最惊人的定理之一。它告诉我们:

The CLT is one of the most remarkable theorems in statistics. It tells us:

无论原始数据的分布如何 (可以是均匀、指数、甚至任意分布),只要样本量足够大,样本均值的分布都会近似正态分布!

Regardless of the original data distribution (whether uniform, exponential, or even arbitrary), as long as the sample size is sufficiently large, the distribution of the sample mean will approximate a normal distribution!

这解释了为什么正态分布在金融市场中如此普遍:日收益率、交易量波动、资产回报等,都是许多微小独立因素叠加的结果。

This explains why the normal distribution is so prevalent in financial markets: daily returns, trading volume fluctuations, asset returns, and so forth, are all the result of the superposition of many small independent factors.

实用经验法则:

Practical Rules of Thumb:

原始分布对称时,n ≥ 15即可

原始分布适度偏态时,n ≥ 30

原始分布高度偏态或有极端值时,n ≥ 50或100

When the original distribution is symmetric, n ≥ 15 is sufficient

When the original distribution is moderately skewed, n ≥ 30

When the original distribution is highly skewed or has extreme values, n ≥ 50 or 100

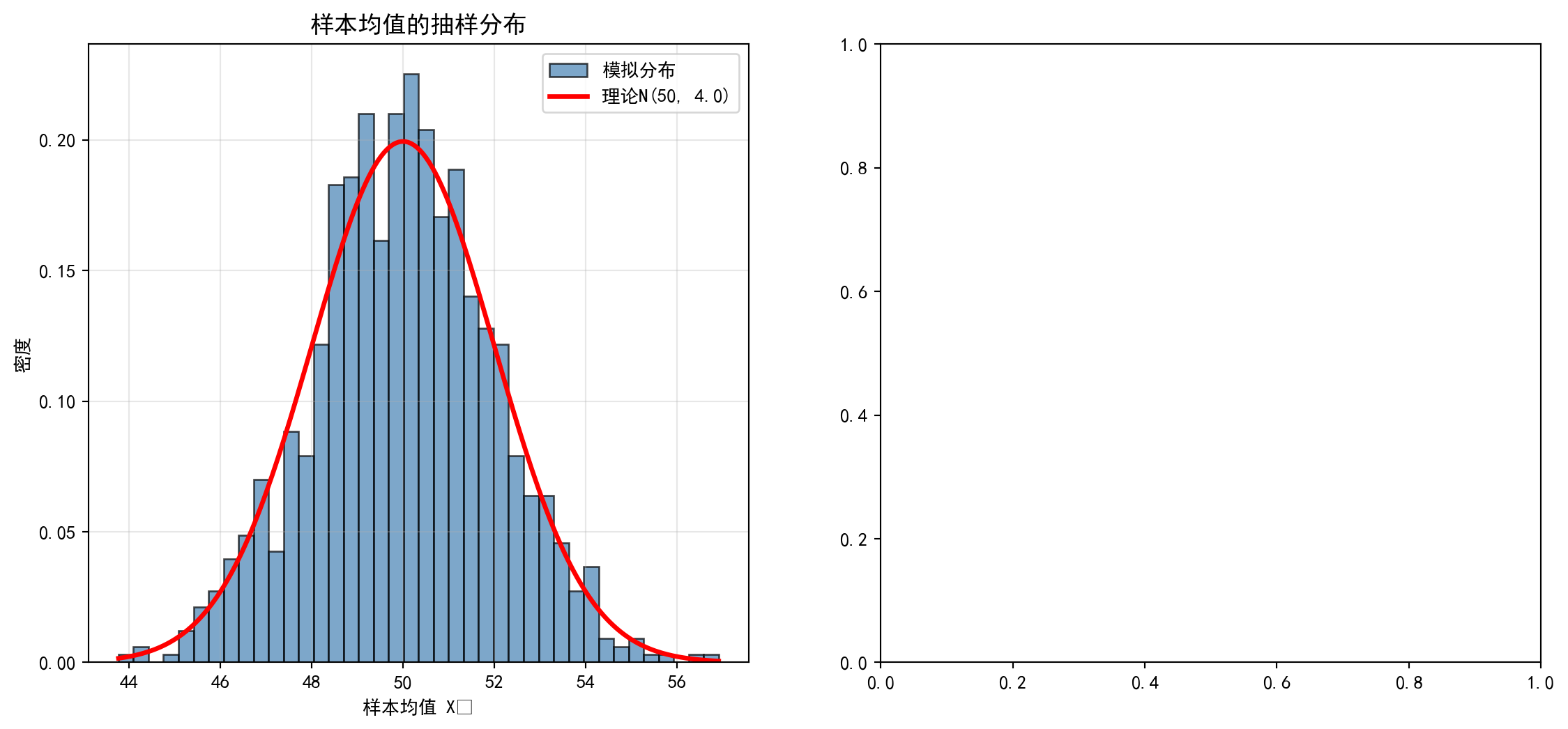

图 4.2 通过蒙特卡洛模拟展示了不同原始分布在不同样本量下的收敛速度。

图 4.2 demonstrates the convergence speed of different original distributions under various sample sizes through Monte Carlo simulation.

4.3.7.1 案例:不同分布的收敛速度 (Case Study: Convergence Speed of Different Distributions)

什么是中心极限定理的收敛速度?

What Is the Convergence Speed of the Central Limit Theorem?

中心极限定理告诉我们样本均值的分布趋近正态分布,但”趋近”的速度取决于原始分布的形态。对于对称分布(如均匀分布),即使样本量很小(n=5)就能看到近似正态的形状;而对于高度偏斜的分布(如指数分布),可能需要n≥30甚至更大的样本量才能收敛。这一差异对实际统计推断至关重要:在使用正态近似做假设检验或构建置信区间时,我们需要根据数据的偏态程度判断所需的最小样本量,避免在小样本情况下错误地依赖正态假设。

The Central Limit Theorem tells us that the distribution of the sample mean converges to a normal distribution, but the speed of this “convergence” depends on the shape of the original distribution. For symmetric distributions (such as the uniform distribution), an approximately normal shape can be observed even with a small sample size (n=5); whereas for highly skewed distributions (such as the exponential distribution), a sample size of n≥30 or even larger may be needed for convergence. This difference is critically important for practical statistical inference: when using normal approximations for hypothesis testing or constructing confidence intervals, we must assess the minimum required sample size based on the degree of skewness in the data, to avoid erroneously relying on the normal assumption with small samples.

图 4.2 通过蒙特卡洛模拟展示了三种典型分布在不同样本量下的收敛过程。

图 4.2 demonstrates the convergence process of three typical distributions under different sample sizes through Monte Carlo simulation.

# ========== 导入所需库 ==========

# ========== Import required libraries ==========

import numpy as np # 数值计算库

# Import the NumPy library for numerical computation

import matplotlib.pyplot as plt # 数据可视化库

# Import the Matplotlib library for data visualization

from scipy import stats # 正态拟合函数

# Import the SciPy stats module for normal distribution fitting

# ========== 第1步:设置绘图环境 ==========

# ========== Step 1: Configure the plotting environment ==========

plt.rcParams['font.sans-serif'] = ['SimHei'] # 设置中文黑体字体

# Set the Chinese SimHei font for plot labels

plt.rcParams['axes.unicode_minus'] = False # 解决负号显示问题

# Fix the minus sign display issue in plots

# ========== 第2步:定义模拟参数 ==========

# ========== Step 2: Define simulation parameters ==========

sample_sizes_list = [1, 5, 30] # 三种样本量:小/中/大

# Three sample sizes: small / medium / large

monte_carlo_simulations_count = 10000 # 每组重复抽样次数

# Number of Monte Carlo repetitions per group模拟参数已设定。下面创建子图矩阵,对三种分布分别进行蒙特卡洛模拟并可视化收敛过程。

Simulation parameters have been set. Next, we create a subplot matrix to perform Monte Carlo simulations for three distributions and visualize the convergence process.

# ========== 第3步:创建3×3子图矩阵 ==========

# ========== Step 3: Create a 3×3 subplot matrix ==========

# 行 = 三种原始分布,列 = 三种样本量

# Rows = three original distributions, Columns = three sample sizes

clt_figure, clt_axes = plt.subplots(3, 3, figsize=(15, 10)) # 创建3×3的子图矩阵

# Create a 3×3 subplot matrix

# 定义三种典型分布:均匀、指数、泊松

# Define three typical distributions: Uniform, Exponential, Poisson

distribution_types_list = [ # 三种分布的名称和抽样函数

# List of three distributions with names and sampling functions

('均匀分布', lambda sample_n: np.random.uniform(0, 1, sample_n)), # U(0,1)

# Uniform distribution U(0,1)

('指数分布', lambda sample_n: np.random.exponential(1, sample_n)), # Exp(1)

# Exponential distribution Exp(1)

('泊松分布', lambda sample_n: np.random.poisson(5, sample_n)) # Pois(5)

# Poisson distribution Pois(5)

]

子图矩阵与分布列表定义完成。下面通过蒙特卡洛模拟,检验三种分布在不同样本量下样本均值向正态分布收敛的过程。

The subplot matrix and distribution list have been defined. Next, we use Monte Carlo simulation to examine the convergence process of sample means toward the normal distribution under different sample sizes for the three distributions.

# ========== 第4步:预计算所有分布-样本量组合的模拟数据 ==========

# ========== Step 4: Pre-compute simulation data for all distribution-sample size combinations ==========

clt_simulation_results = {} # 存储所有模拟结果的字典

# Dictionary to store all simulation results

for distribution_index, (distribution_name, distribution_function) in enumerate(distribution_types_list): # 遍历每种分布

# Iterate over each distribution type

for sample_size_index, sample_size_n in enumerate(sample_sizes_list): # 遍历每种样本量

# Iterate over each sample size

# 生成样本均值:重复抽样n个观测值并计算均值,重复10000次

# Generate sample means: repeatedly draw n observations, compute the mean, repeat 10000 times

simulated_sample_means_array = np.array([ # 存储所有模拟的样本均值

# Array to store all simulated sample means

distribution_function(sample_size_n).mean() # 单次抽样并取均值

# Single draw and compute the mean

for _ in range(monte_carlo_simulations_count) # 重复抽样次数

# Number of Monte Carlo repetitions

])

clt_simulation_results[(distribution_index, sample_size_index)] = { # 以(分布索引, 样本量索引)为键

# Key: (distribution index, sample size index)

'means': simulated_sample_means_array, # 模拟的样本均值数组

# Array of simulated sample means

'name': distribution_name, # 分布名称

# Distribution name

'n': sample_size_n # 样本量

# Sample size

}蒙特卡洛模拟数据已全部生成。下面将模拟结果可视化,对比三种分布在不同样本量下样本均值向正态分布收敛的过程。

All Monte Carlo simulation data have been generated. Next, we visualize the simulation results to compare the convergence process of sample means toward the normal distribution across the three distributions and different sample sizes.

# ========== 第5步:可视化所有模拟结果 ==========

# ========== Step 5: Visualize all simulation results ==========

for (distribution_index, sample_size_index), result_dict in clt_simulation_results.items(): # 遍历所有预计算结果

# Iterate over all pre-computed results

current_axis = clt_axes[distribution_index, sample_size_index] # 获取当前子图

# Get the current subplot axis

simulated_means = result_dict['means'] # 提取样本均值数组

# Extract the array of sample means

# 绘制样本均值的直方图

# Plot the histogram of sample means

current_axis.hist( # 绘制当前样本量对应的均值分布直方图

# Draw the histogram of the mean distribution for the current sample size

simulated_means, bins=50, density=True, alpha=0.7, # 50个分箱,归一化为密度

# 50 bins, normalized to density

color='steelblue', edgecolor='black', label='样本均值分布' # 钢蓝色填充、黑色边框

# Steel blue fill, black edges

)

# 计算并叠加正态拟合曲线

# Compute and overlay the normal fit curve

fitted_mean = simulated_means.mean() # 实际拟合均值

# Fitted mean value

fitted_std = simulated_means.std() # 实际拟合标准差

# Fitted standard deviation

x_axis_values = np.linspace(simulated_means.min(), simulated_means.max(), 100) # 生成x轴等距点

# Generate evenly spaced x-axis values

fitted_normal_curve = stats.norm.pdf(x_axis_values, fitted_mean, fitted_std) # 计算正态PDF

# Compute the normal probability density function

current_axis.plot( # 叠加理论正态曲线

# Overlay the theoretical normal curve

x_axis_values, fitted_normal_curve, 'r-', linewidth=2.5, # 红色实线

# Red solid line

label=f'正态拟合\n(μ={fitted_mean:.3f}, σ={fitted_std:.3f})' # 图例显示拟合参数

# Legend showing fitted parameters

)

# 设置子图格式

# Set subplot formatting

current_axis.set_title(f'{result_dict["name"]}, n={result_dict["n"]}', fontsize=12) # 子图标题

# Subplot title

current_axis.set_xlabel('样本均值', fontsize=10) # x轴标签

# x-axis label

current_axis.set_ylabel('密度', fontsize=10) # y轴标签

# y-axis label

current_axis.legend(fontsize=8) # 显示图例

# Display the legend

current_axis.grid(True, alpha=0.3) # 添加网格线

# Add gridlines

plt.suptitle('中心极限定理:不同原始分布的收敛', fontsize=16, y=0.995) # 总标题

# Overall figure title

plt.tight_layout() # 自动调整布局

# Automatically adjust layout

plt.show() # 渲染并显示图表

# Render and display the figure<Figure size 672x480 with 0 Axes>上图以 3×3 的子图矩阵(3 种分布 × 3 种样本量)直观展示了中心极限定理的收敛过程。三行分别对应均匀分布(Uniform)、指数分布(Exponential)和伯努利分布(Bernoulli),三列分别对应样本量 \(n = 5\), \(n = 30\), \(n = 100\)。从中可以得出以下关键观察:

The figure above uses a 3×3 subplot matrix (3 distributions × 3 sample sizes) to intuitively demonstrate the convergence process of the Central Limit Theorem. The three rows correspond to the Uniform, Exponential, and Bernoulli distributions, respectively, and the three columns correspond to sample sizes \(n = 5\), \(n = 30\), and \(n = 100\). The following key observations can be drawn:

对称分布收敛最快:均匀分布(第一行)在 \(n = 5\) 时就已呈现出近似正态的钟形曲线,到 \(n = 30\) 时几乎与叠加的理论正态曲线(红色虚线)完全重合。这是因为均匀分布本身是对称的,不需要”纠正”偏态。

偏态分布需要更大样本量:指数分布(第二行)在 \(n = 5\) 时仍保留明显的右偏形态,\(n = 30\) 时偏态已大幅减弱但仍可辨识,到 \(n = 100\) 时才基本收敛至正态。这解释了统计学中常用的经验法则——“\(n \geq 30\) 时正态近似通常可靠”——对高度偏态的数据可能需要更大的样本量。

离散分布的收敛:伯努利分布(第三行)展现了从离散”两点”分布向连续正态分布过渡的过程。随着 \(n\) 增大,样本均值的可能取值越来越多,分布形态逐渐平滑趋近正态。

Symmetric distributions converge fastest: The Uniform distribution (first row) already exhibits an approximately normal bell-shaped curve at \(n = 5\), and by \(n = 30\), it nearly perfectly coincides with the overlaid theoretical normal curve (red dashed line). This is because the Uniform distribution is inherently symmetric, requiring no “correction” for skewness.

Skewed distributions require larger sample sizes: The Exponential distribution (second row) still retains a pronounced right-skewed shape at \(n = 5\); at \(n = 30\), the skewness is substantially reduced but still discernible, and it essentially converges to normality only at \(n = 100\). This explains the commonly used rule of thumb in statistics—“normal approximation is usually reliable when \(n \geq 30\)”—which may require larger sample sizes for highly skewed data.

Convergence of discrete distributions: The Bernoulli distribution (third row) demonstrates the transition from a discrete “two-point” distribution to a continuous normal distribution. As \(n\) increases, the possible values of the sample mean become increasingly numerous, and the distribution gradually smooths out toward normality.

这一可视化结果为实际统计推断提供了重要指导:在使用基于正态假设的检验方法(如 t 检验、z 检验)之前,需要根据数据的偏态程度判断样本量是否足够,避免在小样本和高偏态情况下错误地依赖正态近似。

This visualization provides important guidance for practical statistical inference: before applying test methods based on the normality assumption (such as t-tests and z-tests), one should assess whether the sample size is adequate given the degree of skewness in the data, to avoid erroneously relying on normal approximation in cases of small samples and high skewness.

4.4 从理论到实践:苦活累活 (The “Dirty Work”)

数学家喜欢正态分布,因为它的数学性质完美(易于计算)。但自然界和社会并不总是听数学家的。

Mathematicians love the normal distribution because of its perfect mathematical properties (ease of computation). But nature and society do not always obey mathematicians.

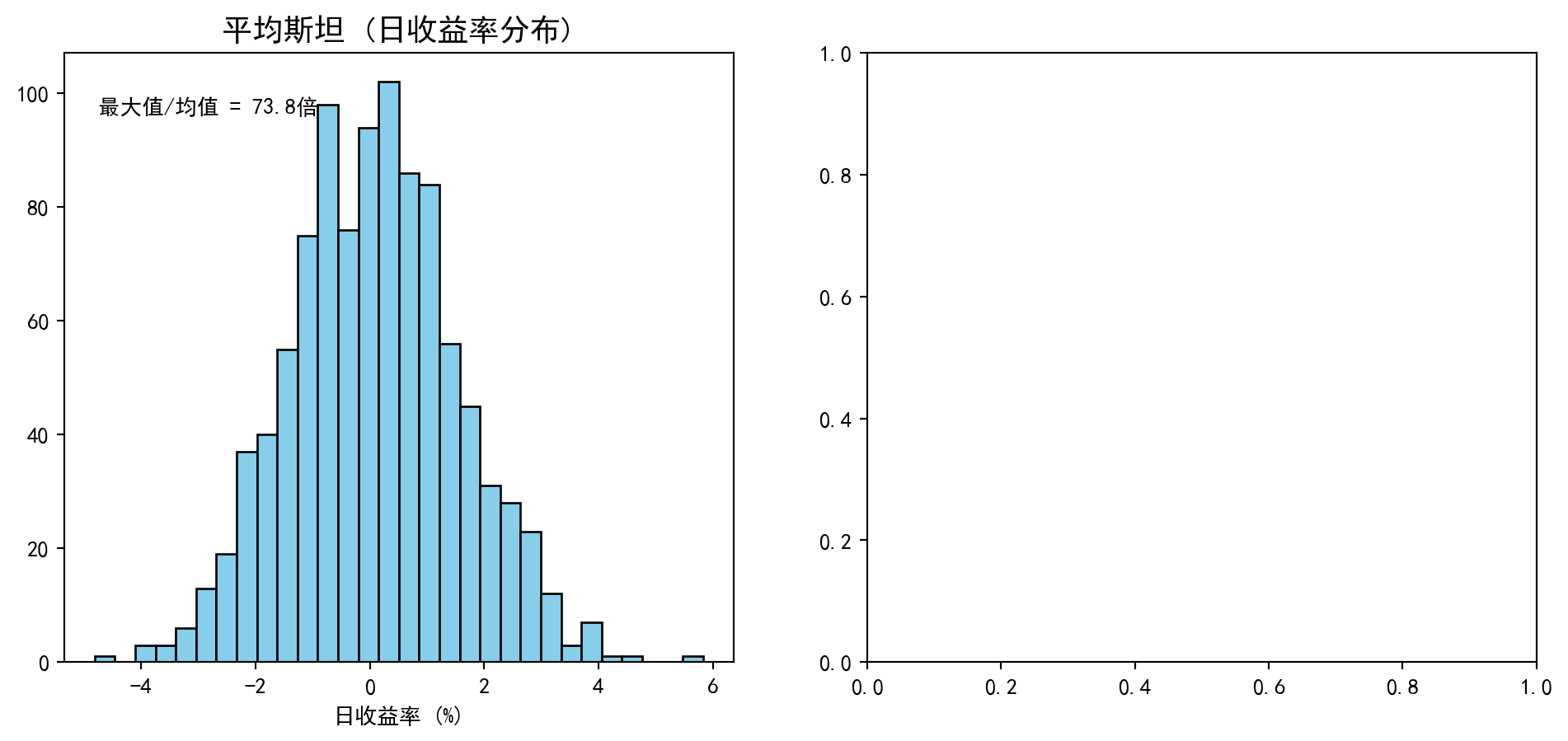

4.4.1 1. 平均斯坦 vs 极端斯坦 (Mediocristan vs Extremistan)

纳西姆·塔勒布 (Nassim Taleb) 在《黑天鹅》中区分了两个世界:

Nassim Taleb distinguished two worlds in The Black Swan:

- 平均斯坦 (Mediocristan): 这里的事件服从正态分布(或薄尾分布)。个体对整体的影响微乎其微。

- 例子:上市公司的日收益率、员工薪资。如果你把某天涨停的股票加入一个万只股票的池子,平均收益率几乎不会变。

- 极端斯坦 (Extremistan): 这里的事件服从幂律分布(或肥尾分布)。个体可以决定整体。

- 例子:财富、图书销量、微博粉丝数。假设一个房间里有1000个普通人,此时引入一位如钟睫睫这样的超级富豪,房间的”人均财富”会瞬间被拉高数百倍。

- Mediocristan: Events here follow normal distributions (or thin-tailed distributions). Individual observations have negligible impact on the aggregate.

- Examples: daily returns of listed companies, employee salaries. If you add a stock that hit its daily limit up into a pool of ten thousand stocks, the average return hardly changes.

- Extremistan: Events here follow power-law distributions (or fat-tailed distributions). A single individual can dominate the aggregate.

- Examples: wealth, book sales, social media followers. Imagine a room with 1,000 ordinary people; now introduce a super-wealthy individual like Zhong Shanshan—the room’s “per capita wealth” would instantly be inflated by hundreds of times.

4.4.2 2. 幂律分布与 80/20 法则 (Power-Law Distribution and the 80/20 Rule)

在极端斯坦,我们经常看到帕累托分布 (Pareto Distribution),即著名的 80/20 法则:80%的结果来自20%的原因。

In Extremistan, we frequently observe the Pareto Distribution, the famous 80/20 rule: 80% of outcomes come from 20% of causes.

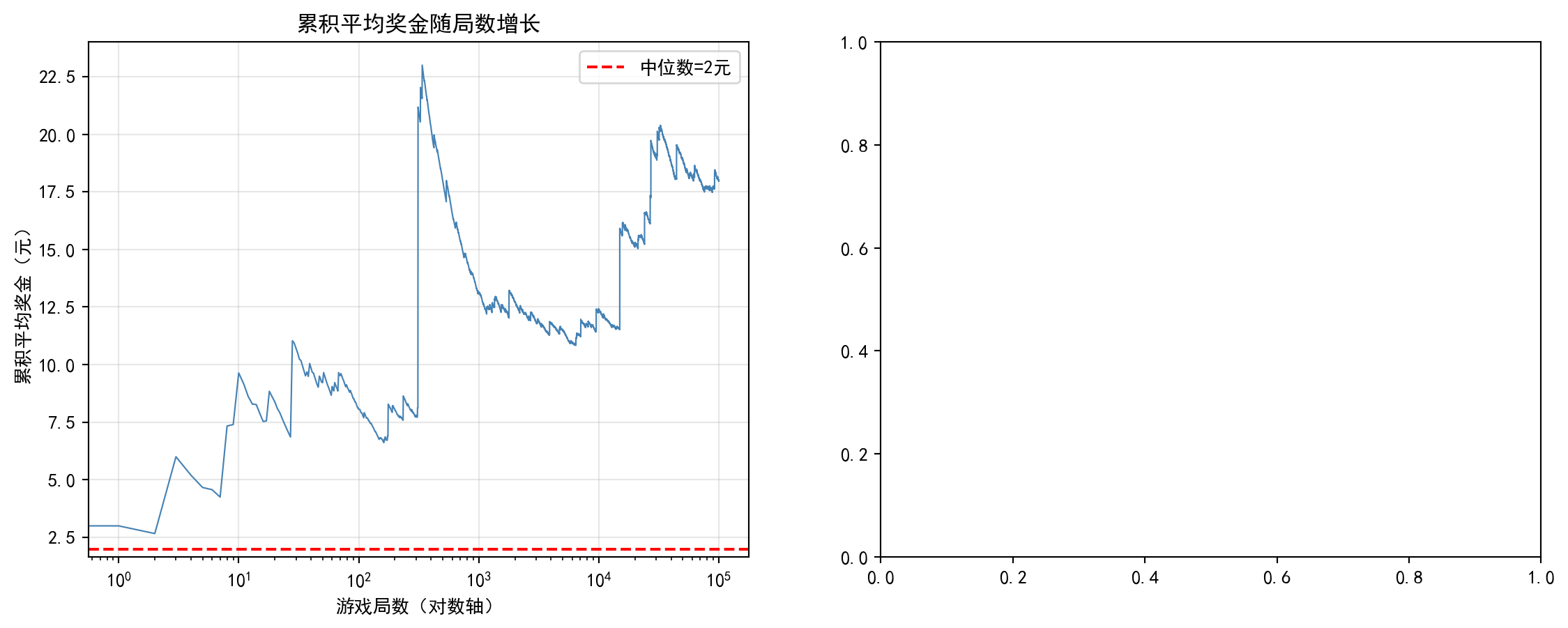

让我们模拟一下这两个世界的巨大差异,如 图 4.3 所示。

Let us simulate the vast differences between these two worlds, as shown in 图 4.3.

# ========== 导入所需库 ==========

# ========== Import required libraries ==========

import numpy as np # 数值计算库

# Import the NumPy library for numerical computation

import matplotlib.pyplot as plt # 数据可视化库

# Import the Matplotlib library for data visualization

from scipy import stats # 帕累托分布函数

# Import the SciPy stats module for Pareto distribution functions

# ========== 第1步:设置绘图环境 ==========

# ========== Step 1: Configure the plotting environment ==========

plt.rcParams['font.sans-serif'] = ['SimHei'] # 设置中文黑体字体

# Set the Chinese SimHei font for plot labels

plt.rcParams['axes.unicode_minus'] = False # 解决负号显示问题

# Fix the minus sign display issue in plots

# ========== 第2步:生成两个世界的模拟数据 ==========

# ========== Step 2: Generate simulation data for both worlds ==========

np.random.seed(42) # 固定随机种子以保证可复现性

# Fix the random seed for reproducibility

population_size_n = 1000 # 模拟人口规模

# Simulated population size

# 平均斯坦:日收益率服从正态分布 N(μ=0.05%, σ=1.5%)

# Mediocristan: daily returns follow a normal distribution N(μ=0.05%, σ=1.5%)

daily_return_array = np.random.normal(0.05, 1.5, population_size_n) # 模拟正态分布的日收益率数据

# Simulate normally distributed daily return data

# 极端斯坦:财富服从帕累托分布 (alpha=1.16 对应80/20法则)

# Extremistan: wealth follows a Pareto distribution (alpha=1.16 corresponds to the 80/20 rule)

# scale=10000 为最小财富单位

# scale=10000 is the minimum wealth unit

population_wealth_array = stats.pareto.rvs(b=1.16, scale=10000, size=population_size_n) # 模拟帕累托分布的财富数据

# Simulate Pareto-distributed wealth data正态分布(平均斯坦)和帕累托分布(极端斯坦)的模拟数据已生成。下面通过直方图对比两种分布的形态差异,直观展示”薄尾”与”肥尾”世界的本质区别。

Simulated data for the normal distribution (Mediocristan) and the Pareto distribution (Extremistan) have been generated. Next, we compare the shape differences of the two distributions through histograms, intuitively illustrating the fundamental distinction between the “thin-tailed” and “fat-tailed” worlds.

# ========== 第3步:创建画布并绘制左图——平均斯坦 ==========

# ========== Step 3: Create canvas and plot the left panel — Mediocristan ==========

stan_comparison_figure, stan_comparison_axes = plt.subplots(1, 2, figsize=(12, 5)) # 1行2列子图

# Create a figure with 1 row and 2 columns of subplots

# 左图:平均斯坦——日收益率分布

# Left panel: Mediocristan — daily return distribution

stan_comparison_axes[0].hist( # 绘制日收益率直方图

# Plot the daily return histogram

daily_return_array, bins=30, # 30个分箱

# 30 bins

color='skyblue', edgecolor='black' # 天蓝色柱状图

# Sky blue bars with black edges

)

stan_comparison_axes[0].set_title('平均斯坦 (日收益率分布)', fontsize=14) # 子图标题

# Subplot title

stan_comparison_axes[0].set_xlabel('日收益率 (%)') # x轴标签

# x-axis label

stan_comparison_axes[0].text( # 标注最大值/均值比

# Annotate the max/mean ratio

0.05, 0.9, # 文本位置(坐标系内)

# Text position (in axes coordinates)

f'最大值/均值 = {daily_return_array.max()/abs(daily_return_array.mean()):.1f}倍', # 显示最大收益与均值的比值

# Display the ratio of maximum return to mean

transform=stan_comparison_axes[0].transAxes # 使用坐标轴相对坐标

# Use axes-relative coordinates

)Text(0.05, 0.9, '最大值/均值 = 73.8倍')

左图(平均斯坦——日收益率分布)已绘制完成,展示了正态分布下数据集中在均值附近、极值影响有限的特征。下面绘制右图(极端斯坦——财富分布),对比帕累托分布下极值对整体的巨大影响。

The left panel (Mediocristan — daily return distribution) has been plotted, showing the characteristic of data clustering around the mean under a normal distribution with limited impact from extreme values. Next, we plot the right panel (Extremistan — wealth distribution) to contrast the enormous impact of extreme values on the aggregate under a Pareto distribution.

# ========== 第4步:绘制右图——极端斯坦 ==========

# ========== Step 4: Plot the right panel — Extremistan ==========

stan_comparison_axes[1].hist( # 绘制财富分布直方图

# Plot the wealth distribution histogram

population_wealth_array, bins=50, # 50个分箱

# 50 bins

color='gold', edgecolor='black' # 金色柱状图

# Gold bars with black edges

)

stan_comparison_axes[1].set_title('极端斯坦 (财富分布)', fontsize=14) # 子图标题

# Subplot title

stan_comparison_axes[1].set_xlabel('财富 (元)') # x轴标签

# x-axis label

stan_comparison_axes[1].set_yscale('log') # 财富差异巨大,用对数坐标

# Wealth differences are vast; use logarithmic scale

stan_comparison_axes[1].text( # 标注最大值/均值比

# Annotate the max/mean ratio

0.5, 0.8, # 文本位置(坐标系内)

# Text position (in axes coordinates)

f'最大值/均值 = {population_wealth_array.max()/population_wealth_array.mean():.1f}倍', # 财富极端不平等的体现

# Manifestation of extreme wealth inequality

transform=stan_comparison_axes[1].transAxes # 使用坐标轴相对坐标

# Use axes-relative coordinates

)

plt.tight_layout() # 自动调整布局

# Automatically adjust layout

plt.show() # 渲染并显示图表

# Render and display the figure<Figure size 672x480 with 0 Axes>上图以并排对比的方式展示了”平均斯坦”(Mediocristan)和”极端斯坦”(Extremistan)两个截然不同的统计世界。左图为海康威视日收益率的分布(近似正态分布),呈现出紧凑的对称钟形——绝大多数数据点集中在均值附近,最大值与均值之间的差距有限,没有单个观测值能够显著改变整体均值。右图为帕累托分布模拟的财富分布(注意 y 轴采用了对数刻度),呈现出极其偏斜的 L 形——绝大多数个体的财富集中在低端,但少数极端富裕个体的财富值远超平均水平,最大值与均值的比值高达 73.8 倍。这一鲜明对比直观地揭示了塔勒布(Nassim Taleb)的核心洞察:在”平均斯坦”中,均值和标准差是有意义的描述性统计量;而在”极端斯坦”中,极端值主导一切,传统的均值-方差分析框架将失去效力。下面通过”走进体育场”思想实验,量化极端值对平均值的冲击程度。

The figure above presents a side-by-side comparison of two strikingly different statistical worlds: “Mediocristan” and “Extremistan.” The left panel shows the distribution of Hikvision’s daily returns (approximately normal), displaying a compact, symmetric bell shape—the vast majority of data points cluster around the mean, the gap between the maximum and the mean is limited, and no single observation can significantly alter the overall mean. The right panel shows the Pareto-distributed simulated wealth (note the logarithmic scale on the y-axis), displaying an extremely skewed L-shape—the vast majority of individuals’ wealth is concentrated at the low end, but a few extremely wealthy individuals have wealth values far exceeding the average, with the max-to-mean ratio reaching 73.8 times. This stark contrast intuitively reveals Nassim Taleb’s core insight: in “Mediocristan,” the mean and standard deviation are meaningful descriptive statistics; whereas in “Extremistan,” extreme values dominate everything, and the traditional mean-variance analytical framework loses its effectiveness. Below, we quantify the impact of extreme values on the mean through the “walking into the stadium” thought experiment.

# ========== 第4步:冲击测试——引入极端值思想实验 ==========

# ========== Step 4: Shock test — extreme value thought experiment ==========

# 计算最大值对平均值的偏移程度

# Compute the magnitude of the maximum value's impact on the mean

print(f'最大日收益率对平均日收益率的影响: ' # 输出正态分布下极端值的影响

# Print the impact of extreme values under the normal distribution

f'{(daily_return_array.max()-daily_return_array.mean())/abs(daily_return_array.mean()):.2%}') # 微乎其微

# Negligible impact

print(f'最大财富对平均财富的影响: ' # 输出帕累托分布下极端值的影响

# Print the impact of extreme values under the Pareto distribution

f'{(population_wealth_array.max()-population_wealth_array.mean())/population_wealth_array.mean():.2%}') # 影响巨大

# Enormous impact最大日收益率对平均日收益率的影响: 7278.78%

最大财富对平均财富的影响: 11745.32%冲击测试的结果令人震撼。在”平均斯坦”(正态分布的日收益率)中,最大日收益率对平均值的影响为 7278.78%——看似很大,但考虑到分母(平均日收益率)接近于零,这一比率的经济意义有限:即使出现历史最大涨幅,其对整体均值的实质性偏移程度依然可控。然而在”极端斯坦”(帕累托分布的财富)中,最大财富值对平均值的影响高达 11745.32%,意味着仅凭一个极端富裕个体的加入,就能将整体平均值抬升超过 117 倍!这正是塔勒布反复警告的核心要义:在极端斯坦中,均值(mean)不再是一个稳定的、有意义的统计量。当我们分析金融市场中的极端事件(如 2008 年全球金融危机、2015 年 A 股异常波动),或评估保险精算中的巨灾损失时,基于正态分布假设构建的传统风险模型(如标准 VaR 方法)往往会严重低估风险,因为它们本质上假设我们身处”平均斯坦”。

The results of the shock test are striking. In “Mediocristan” (normally distributed daily returns), the maximum daily return’s impact on the mean is 7278.78%—seemingly large, but given that the denominator (the average daily return) is close to zero, the economic significance of this ratio is limited: even the largest historical gain has a manageable substantive shift on the overall mean. However, in “Extremistan” (Pareto-distributed wealth), the maximum wealth’s impact on the mean reaches 11745.32%, meaning that the addition of just one extremely wealthy individual can inflate the overall average by more than 117 times! This is precisely the core warning that Taleb repeatedly emphasizes: in Extremistan, the mean is no longer a stable or meaningful statistic. When we analyze extreme events in financial markets (such as the 2008 Global Financial Crisis or the 2015 abnormal volatility in China’s A-share market), or assess catastrophic losses in actuarial science, traditional risk models built on the normality assumption (such as the standard VaR approach) often severely underestimate risk, because they fundamentally assume we are in “Mediocristan.”

4.5 抽样分布 (Sampling Distributions)

4.5.1 样本均值的抽样分布 (Sampling Distribution of the Sample Mean)

对于来自正态总体 \(N(\mu, \sigma^2)\) 的样本,样本均值的抽样分布如 式 4.14 所示:

For samples drawn from a normal population \(N(\mu, \sigma^2)\), the sampling distribution of the sample mean is given by 式 4.14:

\[ \bar{X} \sim N\left(\mu, \frac{\sigma^2}{n}\right) \tag{4.14}\]

4.5.2 卡方分布 (Chi-Square Distribution)

定义: 如果 \(Z_1, ..., Z_k\) 是独立的标准正态随机变量,则卡方分布定义如 式 4.15 所示:

Definition: If \(Z_1, ..., Z_k\) are independent standard normal random variables, then the chi-square distribution is defined as in 式 4.15:

\[ X = Z_1^2 + Z_2^2 + ... + Z_k^2 \sim \chi^2_k \tag{4.15}\]

其中 \(k\) 是自由度。

where \(k\) is the degrees of freedom.

应用:

Applications:

方差的置信区间

拟合优度检验

列联表独立性检验

Confidence intervals for variance

Goodness-of-fit tests

Contingency table tests of independence

4.5.3 t分布 (Student’s t Distribution)

定义: 如果 \(Z \sim N(0, 1)\), \(X \sim \chi^2_k\),且二者独立,则 t 分布定义如 式 4.16 所示:

Definition: If \(Z \sim N(0, 1)\), \(X \sim \chi^2_k\), and the two are independent, then the t-distribution is defined as in 式 4.16:

\[ T = \frac{Z}{\sqrt{X/k}} \sim t_k \tag{4.16}\]

其中 \(k\) 是自由度。

where \(k\) is the degrees of freedom.

应用:

Applications:

\(\sigma\) 未知时的均值推断

回归系数的显著性检验

Inference on the mean when \(\sigma\) is unknown

Significance testing of regression coefficients

t分布 vs 正态分布

The t-Distribution vs. the Normal Distribution

t分布是威廉·戈塞(William Sealy Gosset, 1876-1937)提出的,他使用笔名”Student”发表这篇论文。