# ========== 导入所需库 ==========

# ========== Import required libraries ==========

import pandas as pd # 数据处理

# Data processing

import numpy as np # 数值计算

# Numerical computation

import statsmodels.api as sm # 统计建模(Logistic回归)

# Statistical modeling (Logistic regression)

from sklearn.linear_model import LogisticRegression # sklearn Logistic分类器

# sklearn Logistic classifier

from sklearn.model_selection import train_test_split # 训练/测试集划分

# Train/test set splitting

from sklearn.metrics import classification_report, confusion_matrix, roc_auc_score, roc_curve # 评估指标

# Evaluation metrics

import matplotlib.pyplot as plt # 可视化绑定

# Visualization bindingds

import platform # 系统平台检测

# System platform detection

# ========== 中文字体与显示设置 ==========

# ========== Chinese font and display settings ==========

if platform.system() == 'Linux': # Linux环境字体

# Linux environment font

plt.rcParams['font.sans-serif'] = ['Source Han Serif SC', 'SimHei', 'DejaVu Sans'] # 设置Linux中文字体优先级

# Set Linux Chinese font priority

else: # Windows环境字体

# Windows environment font

plt.rcParams['font.sans-serif'] = ['SimHei', 'Microsoft YaHei'] # 设置Windows中文字体优先级

# Set Windows Chinese font priority

plt.rcParams['axes.unicode_minus'] = False # 解决负号显示问题

# Fix minus sign display issue

# ========== 第1步:读取本地财务数据 ==========

# ========== Step 1: Load local financial data ==========

if platform.system() == 'Linux': # Linux数据路径

# Linux data path

data_path = '/home/ubuntu/r2_data_mount/qiufei/data/stock' # 设置Linux本地股票数据路径

# Set Linux local stock data path

else: # Windows数据路径

# Windows data path

data_path = 'C:/qiufei/data/stock' # 设置Windows本地股票数据路径

# Set Windows local stock data path

financial_statement_dataframe = pd.read_hdf( # 读取财务报表数据

f'{data_path}/financial_statement.h5') # 指定财务报表HDF5文件

# Read financial statement HDF5 file

stock_basic_info_dataframe = pd.read_hdf( # 读取股票基本信息

f'{data_path}/stock_basic_data.h5') # 指定股票基本信息HDF5文件

# Read stock basic info HDF5 file11 广义线性模型与非线性模型 (Generalized Linear Models and Nonlinear Models)

当因变量不满足正态分布假设,或变量关系非线性时,需要使用广义线性模型(Generalized Linear Models, GLM)或其他非线性模型。本章系统介绍GLM的理论框架,重点讲解Logistic回归和Poisson回归,并介绍正则化方法。

When the dependent variable does not satisfy the normality assumption, or the relationship between variables is nonlinear, Generalized Linear Models (GLM) or other nonlinear models are needed. This chapter systematically introduces the theoretical framework of GLM, with a focus on Logistic regression and Poisson regression, and also covers regularization methods.

11.1 非线性模型在金融风险管理中的典型应用 (Typical Applications of Nonlinear Models in Financial Risk Management)

经典线性回归要求因变量服从正态分布,但金融领域中许多关键问题的因变量本质上是非正态的。GLM和非线性模型为解决这些问题提供了强大的工具。

Classical linear regression requires the dependent variable to follow a normal distribution, but in the field of finance, the dependent variables of many key problems are inherently non-normal. GLM and nonlinear models provide powerful tools for addressing these problems.

11.1.1 应用一:信用违约预测与Logistic回归 (Application 1: Credit Default Prediction and Logistic Regression)

信用风险管理的核心问题是预测借款人是否会违约——这是一个二分类问题,因变量只取0或1。经典线性回归会预测出超出[0,1]范围的概率值,且残差不满足正态性假设。Logistic回归通过logit连接函数完美解决了这一问题。利用 financial_statement.h5 中的财务比率(如资产负债率、流动比率、利息保障倍数等)作为自变量,以上市公司是否被ST标记作为违约代理变量,可以构建信用评分模型。

The core problem of credit risk management is predicting whether a borrower will default — this is a binary classification problem where the dependent variable takes only 0 or 1. Classical linear regression may predict probability values outside the [0,1] range, and the residuals do not satisfy the normality assumption. Logistic regression perfectly solves this problem through the logit link function. Using financial ratios from financial_statement.h5 (such as debt-to-asset ratio, current ratio, interest coverage ratio, etc.) as independent variables, and whether a listed company is marked as ST as a proxy variable for default, a credit scoring model can be constructed.

11.1.2 应用二:金融事件计数的Poisson回归 (Application 2: Poisson Regression for Financial Event Counts)

上市公司每季度发布公告的次数、分析师发布研究报告的次数、股票涨停或跌停的天数——这些都是计数变量。Poisson回归假设因变量服从泊松分布,通过对数连接函数将均值与线性预测量联系起来。基于 financial_statement.h5 和 stock_price_pre_adjusted.h5 的数据,可以研究公司特征(如规模、行业、治理质量)与异常事件发生频率之间的关系。

The number of announcements released by listed companies each quarter, the number of research reports published by analysts, and the number of days a stock hits its price limit up or down — these are all count variables. Poisson regression assumes the dependent variable follows a Poisson distribution and links the mean to the linear predictor through a log link function. Based on data from financial_statement.h5 and stock_price_pre_adjusted.h5, one can study the relationship between company characteristics (such as size, industry, governance quality) and the frequency of abnormal events.

11.1.3 应用三:正则化与高维金融数据 (Application 3: Regularization and High-Dimensional Financial Data)

当模型中引入大量候选变量时(如 valuation_factors_quarterly_15_years.h5 中包含众多估值因子),模型面临过拟合和多重共线性风险。LASSO和Ridge等正则化方法通过向目标函数中添加惩罚项,在拟合数据和模型复杂度之间取得平衡。在A股市场的多因子选股研究中,正则化Logistic回归被广泛用于从数百个候选因子中筛选出真正有预测能力的关键因子。

When a large number of candidate variables are introduced into the model (such as the numerous valuation factors in valuation_factors_quarterly_15_years.h5), the model faces risks of overfitting and multicollinearity. Regularization methods such as LASSO and Ridge achieve a balance between fitting the data and model complexity by adding penalty terms to the objective function. In multi-factor stock selection research on the A-share market, regularized Logistic regression is widely used to screen out truly predictive key factors from hundreds of candidates.

11.2 指数族分布 (Exponential Family Distributions)

GLM要求因变量服从指数族分布(Exponential Family),其概率密度函数如 式 11.1 所示:

GLM requires the dependent variable to follow an exponential family distribution, whose probability density function is shown in 式 11.1:

\[ f(y|\theta, \phi) = \exp\left\{\frac{y\theta - b(\theta)}{a(\phi)} + c(y, \phi)\right\} \tag{11.1}\]

其中:

- \(\theta\): 自然参数(canonical parameter)

- \(\phi\): 离散参数(dispersion parameter)

- \(a(\cdot), b(\cdot), c(\cdot)\): 特定于分布的已知函数

Where:

- \(\theta\): canonical parameter

- \(\phi\): dispersion parameter

- \(a(\cdot), b(\cdot), c(\cdot)\): known functions specific to the distribution

常见分布的指数族形式

Exponential Family Forms of Common Distributions

| 分布 | \(\theta\) | \(b(\theta)\) | \(a(\phi)\) | 均值 \(\mu\) | 方差 |

|---|---|---|---|---|---|

| 正态 | \(\mu\) | \(\theta^2/2\) | \(\sigma^2\) | \(\theta\) | \(\sigma^2\) |

| 二项 | \(\ln\frac{p}{1-p}\) | \(\ln(1+e^\theta)\) | \(1/n\) | \(\frac{e^\theta}{1+e^\theta}\) | \(np(1-p)\) |

| 泊松 | \(\ln\lambda\) | \(e^\theta\) | \(1\) | \(e^\theta\) | \(\lambda\) |

| 伽马 | \(-1/\mu\) | \(-\ln(-\theta)\) | \(\phi\) | \(-1/\theta\) | \(\phi\mu^2\) |

指数族分布有重要性质:\(E(Y) = b'(\theta)\),\(\text{Var}(Y) = a(\phi)b''(\theta)\)

Exponential family distributions have important properties: \(E(Y) = b'(\theta)\), \(\text{Var}(Y) = a(\phi)b''(\theta)\)

11.3 广义线性模型(GLM)框架 (Generalized Linear Model (GLM) Framework)

11.3.1 模型的三要素 (Three Components of the Model)

GLM由三个相互关联的要素组成:

GLM consists of three interrelated components:

1. 随机分量(Random Component)

1. Random Component

因变量\(Y\)服从指数族分布,其均值为\(\mu = E(Y)\)。

The dependent variable \(Y\) follows an exponential family distribution with mean \(\mu = E(Y)\).

2. 系统分量(Systematic Component)

2. Systematic Component

线性预测子(Linear Predictor),如 式 11.2 所示:

The linear predictor, as shown in 式 11.2:

\[ \eta = \mathbf{X}\boldsymbol{\beta} = \beta_0 + \beta_1 X_1 + ... + \beta_p X_p \tag{11.2}\]

3. 连接函数(Link Function)

3. Link Function

连接函数\(g(\cdot)\)将均值\(\mu\)与线性预测子\(\eta\)联系起来,如 式 11.3 所示:

The link function \(g(\cdot)\) connects the mean \(\mu\) to the linear predictor \(\eta\), as shown in 式 11.3:

\[ g(\mu) = \eta \tag{11.3}\]

等价地:\(\mu = g^{-1}(\eta)\)

Equivalently: \(\mu = g^{-1}(\eta)\)

11.3.2 常用连接函数 (Common Link Functions)

表 11.1 列出了GLM中常用的连接函数及其应用场景。

表 11.1 lists the commonly used link functions in GLM and their application scenarios.

| 分布 | 典型连接函数 | 表达式 | 应用场景 |

|---|---|---|---|

| 正态 | 恒等(Identity) | \(g(\mu) = \mu\) | 连续因变量 |

| 二项 | Logit | \(g(\mu) = \ln\frac{\mu}{1-\mu}\) | 二分类、比例 |

| 二项 | Probit | \(g(\mu) = \Phi^{-1}(\mu)\) | 二分类(潜变量) |

| 泊松 | 对数(Log) | \(g(\mu) = \ln\mu\) | 计数数据 |

| 伽马 | 倒数(Inverse) | \(g(\mu) = 1/\mu\) | 正偏态连续变量 |

为什么需要连接函数?(直观理解)

Why Do We Need Link Functions? (Intuitive Understanding)

线性预测子 \(\eta = \mathbf{X}\boldsymbol{\beta}\) 的取值范围是 \((-\infty, +\infty)\)。 但我们的因变量均值 \(\mu\) 往往有范围限制:

The range of the linear predictor \(\eta = \mathbf{X}\boldsymbol{\beta}\) is \((-\infty, +\infty)\). However, the mean of our dependent variable \(\mu\) often has range restrictions:

二分类概率 \(p \in [0, 1]\)

计数 \(\lambda \in [0, +\infty)\)

Binary classification probability \(p \in [0, 1]\)

Count \(\lambda \in [0, +\infty)\)

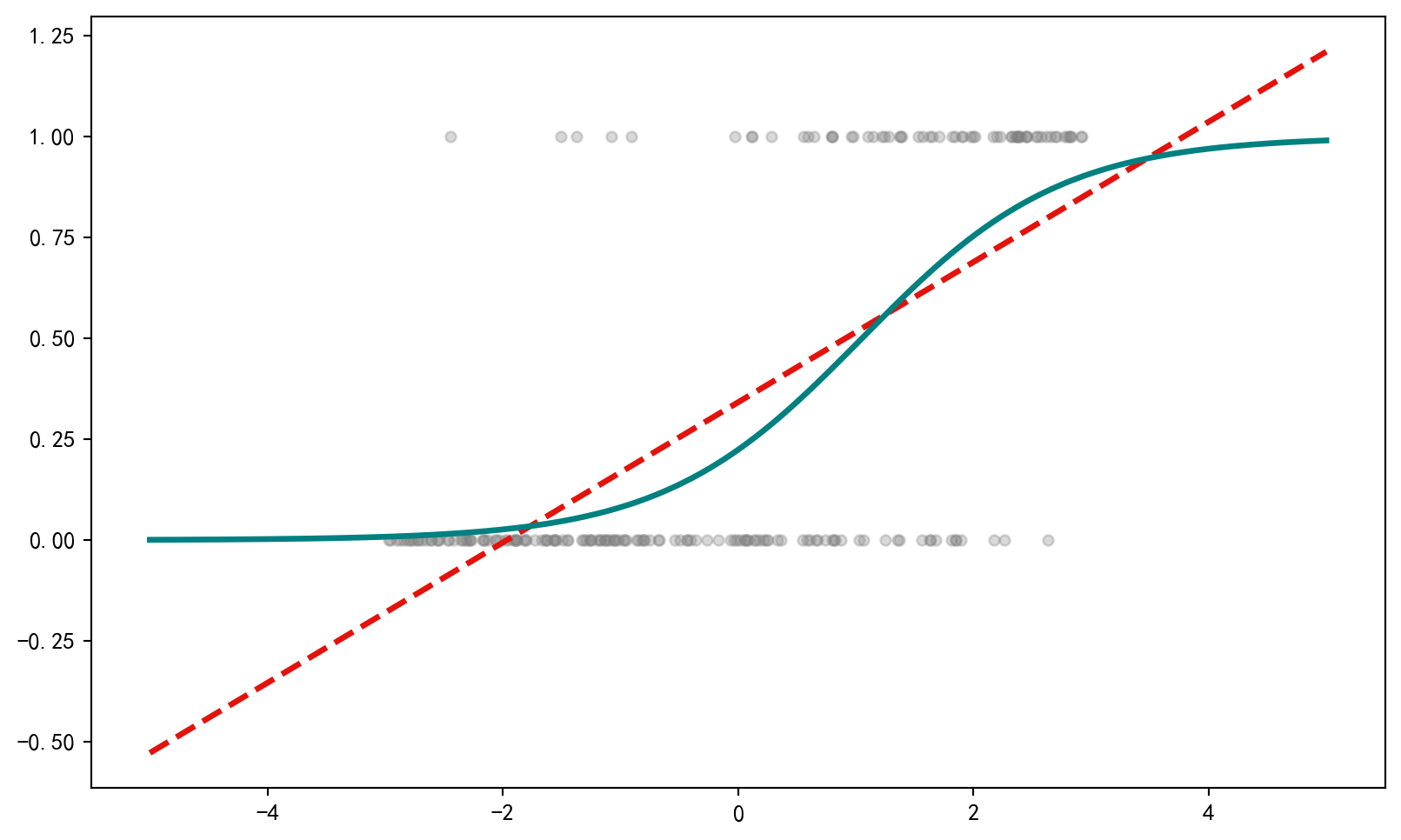

直接用线性回归(\(p = \mathbf{X}\boldsymbol{\beta}\))可能会预测出负概率或大于1的概率,这是荒谬的。 连接函数 \(g(\cdot)\) 的作用就是进行空间映射:

Directly using linear regression (\(p = \mathbf{X}\boldsymbol{\beta}\)) may predict negative probabilities or probabilities greater than 1, which is absurd. The role of the link function \(g(\cdot)\) is to perform space mapping:

Logit将 \((0, 1)\) 映射到 \((-\infty, +\infty)\)

Log将 \((0, +\infty)\) 映射到 \((-\infty, +\infty)\)

Logit maps \((0, 1)\) to \((-\infty, +\infty)\)

Log maps \((0, +\infty)\) to \((-\infty, +\infty)\)

反过来,反连接函数 \(g^{-1}\) 将线性预测值”压缩”回合理的取值范围。这就是GLM”广义”的精髓所在。

Conversely, the inverse link function \(g^{-1}\) “compresses” the linear predicted values back into a reasonable range. This is the essence of the “generalized” in GLM.

11.3.3 极大似然估计 (Maximum Likelihood Estimation)

GLM参数通过极大似然估计(MLE)获得。对数似然函数如 式 11.4 所示:

GLM parameters are obtained through Maximum Likelihood Estimation (MLE). The log-likelihood function is shown in 式 11.4:

\[ \ell(\boldsymbol{\beta}) = \sum_{i=1}^n \frac{y_i\theta_i - b(\theta_i)}{a(\phi)} + c(y_i, \phi) \tag{11.4}\]

由于\(\theta_i\)通过连接函数与\(\boldsymbol{\beta}\)相关,对数似然非线性于\(\boldsymbol{\beta}\),需要使用Newton-Raphson或Fisher Scoring迭代算法求解。

Since \(\theta_i\) is related to \(\boldsymbol{\beta}\) through the link function, the log-likelihood is nonlinear in \(\boldsymbol{\beta}\), requiring iterative algorithms such as Newton-Raphson or Fisher Scoring to solve.

迭代加权最小二乘(IRLS)

Iteratively Reweighted Least Squares (IRLS)

Fisher Scoring算法可以表示为迭代加权最小二乘(Iteratively Reweighted Least Squares, IRLS):

The Fisher Scoring algorithm can be expressed as Iteratively Reweighted Least Squares (IRLS):

\[\boldsymbol{\beta}^{(t+1)} = (\mathbf{X}'\mathbf{W}^{(t)}\mathbf{X})^{-1}\mathbf{X}'\mathbf{W}^{(t)}\mathbf{z}^{(t)}\]

其中:

- \(\mathbf{W}\): 对角权重矩阵,\(W_{ii} = \left(\frac{\partial\mu_i}{\partial\eta_i}\right)^2 / \text{Var}(Y_i)\)

- \(\mathbf{z}\): 工作因变量(working response),\(z_i = \eta_i + (y_i - \mu_i)\frac{\partial\eta_i}{\partial\mu_i}\)

Where:

- \(\mathbf{W}\): diagonal weight matrix, \(W_{ii} = \left(\frac{\partial\mu_i}{\partial\eta_i}\right)^2 / \text{Var}(Y_i)\)

- \(\mathbf{z}\): working response, \(z_i = \eta_i + (y_i - \mu_i)\frac{\partial\eta_i}{\partial\mu_i}\)

IRLS算法在每次迭代中更新权重,相当于对变换后的响应变量进行加权最小二乘。

The IRLS algorithm updates weights at each iteration, equivalent to performing weighted least squares on the transformed response variable.

11.4 Logistic回归 (Logistic Regression)

Logistic回归是最常用的二分类模型,广泛应用于信用评分、客户流失预测、市场预测等领域。

Logistic regression is the most commonly used binary classification model, widely applied in credit scoring, customer churn prediction, market forecasting, and other fields.

11.4.1 模型设定与解释 (Model Specification and Interpretation)

对于二分类因变量\(Y \in \{0, 1\}\),我们建模\(P(Y=1|\mathbf{X})\),如 式 11.5 所示:

For a binary dependent variable \(Y \in \{0, 1\}\), we model \(P(Y=1|\mathbf{X})\), as shown in 式 11.5:

\[ P(Y=1|\mathbf{X}) = \frac{1}{1+e^{-\eta}} = \frac{e^{\eta}}{1+e^{\eta}} \tag{11.5}\]

其中\(\eta = \beta_0 + \beta_1 X_1 + ... + \beta_p X_p\)。

Where \(\eta = \beta_0 + \beta_1 X_1 + ... + \beta_p X_p\).

11.4.2 Logit变换 (Logit Transformation)

取对数几率(log-odds)变换,如 式 11.6 所示:

Taking the log-odds transformation, as shown in 式 11.6:

\[ \text{logit}(p) = \ln\left(\frac{p}{1-p}\right) = \beta_0 + \beta_1 X_1 + ... + \beta_p X_p \tag{11.6}\]

几率(Odds): \(\frac{p}{1-p}\)表示事件发生概率与不发生概率之比

Odds: \(\frac{p}{1-p}\) represents the ratio of the probability of an event occurring to the probability of it not occurring

几率比(Odds Ratio): \(\exp(\beta_j)\)表示\(X_j\)每增加1单位,几率的乘法变化

Odds Ratio: \(\exp(\beta_j)\) represents the multiplicative change in odds for each unit increase in \(X_j\)

几率比的解释

Interpretation of Odds Ratios

假设\(\beta_1 = 0.5\),则\(OR = e^{0.5} = 1.65\)

Suppose \(\beta_1 = 0.5\), then \(OR = e^{0.5} = 1.65\)

解释:\(X_1\)每增加1单位,事件发生的几率增加65%

Interpretation: For each unit increase in \(X_1\), the odds of the event occurring increase by 65%

注意:这是几率的变化,不是概率的变化!

Note: This is a change in odds, not a change in probability!

11.4.3 似然函数推导 (Likelihood Function Derivation)

Logistic回归的似然函数

Likelihood Function of Logistic Regression

对于\(n\)个独立观测,似然函数为:

For \(n\) independent observations, the likelihood function is:

\[ L(\boldsymbol{\beta}) = \prod_{i=1}^n p_i^{y_i}(1-p_i)^{1-y_i} \]

对数似然:

Log-likelihood:

\[ \ell(\boldsymbol{\beta}) = \sum_{i=1}^n \left[y_i \ln p_i + (1-y_i)\ln(1-p_i)\right] \]

代入\(p_i = \frac{e^{\eta_i}}{1+e^{\eta_i}}\),得 式 11.7 :

Substituting \(p_i = \frac{e^{\eta_i}}{1+e^{\eta_i}}\), we obtain 式 11.7:

\[ \ell(\boldsymbol{\beta}) = \sum_{i=1}^n \left[y_i \eta_i - \ln(1+e^{\eta_i})\right] \tag{11.7}\]

对\(\beta_j\)求导:

Taking the derivative with respect to \(\beta_j\):

\[ \frac{\partial \ell}{\partial \beta_j} = \sum_{i=1}^n (y_i - p_i)x_{ij} \]

这是非线性方程,需要数值方法求解。

This is a nonlinear equation that requires numerical methods to solve.

11.4.4 案例:上市公司财务困境预测 (Case Study: Listed Company Financial Distress Prediction)

什么是财务困境预测?

What Is Financial Distress Prediction?

在中国A股市场中,当上市公司连续两年净利润为负或出现其他重大财务异常时,交易所会对其实施”特别处理”(ST)。ST标记不仅意味着股价承受巨大下行压力,更直接影响企业的融资能力和市场信誉。对于银行、基金等机构投资者而言,能否提前识别潜在的财务困境企业,是信用风险管理的核心课题。

In China’s A-share market, when a listed company has negative net profits for two consecutive years or exhibits other significant financial abnormalities, the exchange will impose “Special Treatment” (ST) on it. The ST label not only means that the stock price faces enormous downward pressure, but also directly affects the company’s financing ability and market reputation. For institutional investors such as banks and funds, being able to identify potential financially distressed companies in advance is a core issue in credit risk management.

逻辑斯蒂回归(Logistic Regression)恰好为这类二元分类问题提供了经典的统计建模框架:它通过将多个财务指标(如资产负债率、流动比率、盈利能力等)的线性组合映射到0-1概率空间,输出一家公司陷入财务困境的概率估计。下面我们使用长三角地区上市公司的真实财务数据,建立Logistic回归模型预测公司是否会被ST,如 表 11.2 所示。

Logistic Regression provides precisely the classic statistical modeling framework for this type of binary classification problem: it maps a linear combination of multiple financial indicators (such as debt-to-asset ratio, current ratio, profitability, etc.) to the 0-1 probability space, outputting a probability estimate of a company falling into financial distress. Below, we use real financial data from listed companies in the Yangtze River Delta region to build a Logistic regression model to predict whether a company will be marked as ST, as shown in 表 11.2.

Logistic回归所需的库导入和本地财务数据加载完毕。下面筛选2023年年报数据,构造ST标识并限定长三角非金融企业作为分析样本。

The library imports and local financial data loading required for Logistic regression are complete. Next, we filter the 2023 annual report data, construct the ST indicator, and restrict the analysis sample to non-financial enterprises in the Yangtze River Delta.

# ========== 第2步:筛选2023年报并合并ST状态 ==========

# ========== Step 2: Filter 2023 annual reports and merge ST status ==========

annual_report_dataframe_2023 = financial_statement_dataframe[ # 筛选2023年第四季度(年报)

(financial_statement_dataframe['quarter'].str.endswith('q4')) & # 筛选第四季度(年报)

# Filter Q4 (annual report)

(financial_statement_dataframe['quarter'].str.startswith('2023')) # 限定2023年度

# Restrict to year 2023

].copy() # 创建独立副本避免链式赋值警告

# Create an independent copy to avoid chained assignment warning

annual_report_dataframe_2023 = annual_report_dataframe_2023.merge( # 左连接股票基本信息

stock_basic_info_dataframe[['order_book_id', 'abbrev_symbol', 'industry_name', 'province']], # 选取代码、简称、行业、省份列

# Select columns: stock code, abbreviation, industry, province

on='order_book_id', # 以股票代码为连接键

# Join on stock code

how='left' # 左连接保留所有财务数据

# Left join to keep all financial data

)

# ========== 第3步:构造ST标识并筛选长三角非金融企业 ==========

# ========== Step 3: Construct ST indicator and filter YRD non-financial enterprises ==========

annual_report_dataframe_2023['is_st'] = annual_report_dataframe_2023[ # 根据名称识别ST股

'abbrev_symbol'].str.contains('ST|\\*ST', na=False).astype(int) # 匹配ST或*ST标记并转为0/1

# Identify ST stocks by name, match ST or *ST and convert to 0/1

yangtze_river_delta_areas_list = ['上海市', '江苏省', '浙江省', '安徽省'] # 长三角四省市

# Four provinces/cities in the Yangtze River Delta

financial_industries_list = ['货币金融服务', '保险业', '其他金融业'] # 金融行业排除名单

# Financial industries exclusion list

base_analysis_dataframe = annual_report_dataframe_2023[ # 筛选分析样本

(annual_report_dataframe_2023['province'].isin(yangtze_river_delta_areas_list)) & # 限定长三角

# Restrict to Yangtze River Delta

(~annual_report_dataframe_2023['industry_name'].isin(financial_industries_list)) & # 排除金融业

# Exclude financial industries

(annual_report_dataframe_2023['total_assets'] > 0) # 排除资产为零

# Exclude zero-asset companies

].copy() # 创建筛选后的独立副本

# Create an independent copy of the filtered data长三角非金融业上市公司样本已筛选完毕。下面基于财务报表数据计算ROA、资产负债率、流动比率等特征指标,并建立Logistic回归模型预测ST概率。

The sample of non-financial listed companies in the Yangtze River Delta has been filtered. Next, we calculate feature indicators such as ROA, debt-to-asset ratio, and current ratio based on the financial statement data, and build a Logistic regression model to predict the probability of ST.

# ========== 第4步:计算财务指标(特征工程) ==========

# ========== Step 4: Calculate financial indicators (feature engineering) ==========

# 盈利能力: ROA = 净利润 / 总资产 × 100

# Profitability: ROA = Net Profit / Total Assets × 100

base_analysis_dataframe['roa'] = ( # 计算总资产收益率ROA(%)

# Calculate Return on Assets ROA (%)

base_analysis_dataframe['net_profit'] / # 分子:净利润

# Numerator: net profit

base_analysis_dataframe['total_assets'] * 100 # 分母:总资产,乘100转为百分比

# Denominator: total assets, multiply by 100 to convert to percentage

)

# 偿债能力: 资产负债率 = 总负债 / 总资产 × 100

# Solvency: Debt-to-Asset Ratio = Total Liabilities / Total Assets × 100

base_analysis_dataframe['debt_ratio'] = ( # 计算资产负债率(%)

# Calculate debt-to-asset ratio (%)

base_analysis_dataframe['total_liabilities'] / # 分子:总负债

# Numerator: total liabilities

base_analysis_dataframe['total_assets'] * 100 # 分母:总资产,乘100转为百分比

# Denominator: total assets, multiply by 100 to convert to percentage

)

# 流动性: 流动比率 = 流动资产 / 流动负债

# Liquidity: Current Ratio = Current Assets / Current Liabilities

base_analysis_dataframe['current_ratio'] = np.where( # 条件计算流动比率

# Conditionally calculate current ratio

base_analysis_dataframe['current_liabilities'] > 0, # 避免除零

# Avoid division by zero

base_analysis_dataframe['current_assets'] / base_analysis_dataframe['current_liabilities'], # 流动资产除以流动负债

# Current assets divided by current liabilities

np.nan # 无流动负债则为缺失

# NaN if no current liabilities

)

# 公司规模: 总资产取对数(单位:亿元)

# Company size: Log of total assets (unit: 100 million yuan)

base_analysis_dataframe['log_assets'] = np.log(base_analysis_dataframe['total_assets'] / 1e8) # 总资产取对数(单位:亿元)

# Log of total assets (unit: 100 million yuan)财务指标特征工程完毕。下面去除异常值并建立Logistic回归模型。

Feature engineering of financial indicators is complete. Next, we remove outliers and build the Logistic regression model.

# ========== 第5步:去除异常值并建立Logistic回归模型 ==========

# ========== Step 5: Remove outliers and build the Logistic regression model ==========

logistic_model_dataframe = base_analysis_dataframe[ # 异常值过滤

(base_analysis_dataframe['roa'] > -100) & (base_analysis_dataframe['roa'] < 50) & # ROA在合理范围内

# ROA within reasonable range

(base_analysis_dataframe['debt_ratio'] > 0) & (base_analysis_dataframe['debt_ratio'] < 150) & # 负债率在合理范围内

# Debt ratio within reasonable range

(base_analysis_dataframe['current_ratio'] > 0) & (base_analysis_dataframe['current_ratio'] < 10) # 流动比率在合理范围内

# Current ratio within reasonable range

].dropna(subset=['is_st', 'roa', 'debt_ratio', 'current_ratio', 'log_assets']) # 删除缺失值

# Drop missing values

print(f'分析样本量: {len(logistic_model_dataframe)} 家公司') # 输出样本量

# Print sample size

print(f'ST公司: {logistic_model_dataframe["is_st"].sum()} 家 ({logistic_model_dataframe["is_st"].mean()*100:.2f}%)') # ST占比

# Print ST company count and proportion

predictor_variables_list = ['roa', 'debt_ratio', 'current_ratio', 'log_assets'] # 自变量列表

# List of predictor variables

design_matrix_features = logistic_model_dataframe[predictor_variables_list] # 特征矩阵

# Feature matrix

target_variable_series = logistic_model_dataframe['is_st'] # 因变量(0/1)

# Dependent variable (0/1)

statsmodels_design_matrix = sm.add_constant(design_matrix_features) # 添加截距项

# Add intercept term

fitted_logistic_model = sm.Logit(target_variable_series, # Logistic回归拟合

statsmodels_design_matrix).fit(disp=0) # disp=0抑制迭代输出

# Fit Logistic regression, disp=0 suppresses iteration output

print('\n=== Logistic回归结果 ===') # 输出回归结果分隔标题

# Print regression results section header

print(fitted_logistic_model.summary()) # 输出完整统计摘要

# Print complete statistical summary分析样本量: 1868 家公司

ST公司: 74 家 (3.96%)

=== Logistic回归结果 ===

Logit Regression Results

==============================================================================

Dep. Variable: is_st No. Observations: 1868

Model: Logit Df Residuals: 1863

Method: MLE Df Model: 4

Date: Tue, 10 Mar 2026 Pseudo R-squ.: 0.1642

Time: 20:46:38 Log-Likelihood: -260.28

converged: True LL-Null: -311.43

Covariance Type: nonrobust LLR p-value: 3.204e-21

=================================================================================

coef std err z P>|z| [0.025 0.975]

---------------------------------------------------------------------------------

const -3.0385 0.694 -4.376 0.000 -4.399 -1.678

roa -0.0790 0.014 -5.819 0.000 -0.106 -0.052

debt_ratio 0.0305 0.008 3.594 0.000 0.014 0.047

current_ratio 0.0883 0.108 0.816 0.415 -0.124 0.300

log_assets -0.4843 0.129 -3.753 0.000 -0.737 -0.231

=================================================================================表 11.2 的运行结果显示,最终分析样本包含1868家长三角非金融业上市公司,其中74家为ST公司,占比3.96%,反映了财务困境的低频事件特征。模型整体的Pseudo R²为0.1642,LLR p值为3.204e-21,说明模型在统计上高度显著。从各变量的系数估计来看:ROA(系数=-0.0790,p=0.000)、资产负债率(系数=0.0305,p=0.000)和资产规模对数值(系数=-0.4843,p=0.000)均在1%水平上显著,说明盈利能力越弱、杠杆率越高、资产规模越小的公司,被标记为ST的可能性越大。而流动比率(系数=0.0883,p=0.415)不显著,表明在控制其他变量后,短期偿债能力对ST风险的独立解释力有限。

The results of 表 11.2 show that the final analysis sample includes 1,868 non-financial listed companies in the Yangtze River Delta, of which 74 are ST companies, accounting for 3.96%, reflecting the low-frequency event characteristic of financial distress. The overall Pseudo R² of the model is 0.1642, with an LLR p-value of 3.204e-21, indicating the model is highly statistically significant. From the coefficient estimates of each variable: ROA (coefficient = -0.0790, p = 0.000), debt-to-asset ratio (coefficient = 0.0305, p = 0.000), and log of total assets (coefficient = -0.4843, p = 0.000) are all significant at the 1% level, indicating that companies with weaker profitability, higher leverage, and smaller asset size are more likely to be marked as ST. The current ratio (coefficient = 0.0883, p = 0.415) is not significant, indicating that after controlling for other variables, short-term solvency has limited independent explanatory power for ST risk.

如 表 11.3 所示,我们进一步计算模型的几率比(Odds Ratio)及其置信区间,以便对系数进行更直观的经济学解读。

As shown in 表 11.3, we further calculate the model’s odds ratios and their confidence intervals for a more intuitive economic interpretation of the coefficients.

# ========== 第1步:计算几率比(OR)及其置信区间 ==========

# ========== Step 1: Calculate odds ratios (OR) and confidence intervals ==========

logistic_coefficients_series = fitted_logistic_model.params # 提取回归系数

# Extract regression coefficients

confidence_intervals_dataframe = fitted_logistic_model.conf_int() # 95%置信区间

# 95% confidence intervals

confidence_intervals_dataframe.columns = ['2.5%', '97.5%'] # 重命名列

# Rename columns

odds_ratios_dataframe = pd.DataFrame({ # 构建几率比汇总表

# Construct odds ratio summary table

'变量': logistic_coefficients_series.index, # 变量名

# Variable names

'系数': logistic_coefficients_series.values, # 对数几率比(原始系数)

# Log odds ratio (raw coefficients)

'几率比(OR)': np.exp(logistic_coefficients_series.values), # exp(β) = OR

# exp(β) = OR

'OR 2.5%': np.exp(confidence_intervals_dataframe['2.5%'].values), # OR下限

# OR lower bound

'OR 97.5%': np.exp(confidence_intervals_dataframe['97.5%'].values), # OR上限

# OR upper bound

'p值': fitted_logistic_model.pvalues.values # 系数显著性p值

# Coefficient significance p-value

})

print('=== 几率比分析 ===\n') # 输出几率比分析标题

# Print odds ratio analysis header

print(odds_ratios_dataframe.round(4).to_string(index=False)) # 输出几率比汇总表

# Print odds ratio summary table=== 几率比分析 ===

变量 系数 几率比(OR) OR 2.5% OR 97.5% p值

const -3.0385 0.0479 0.0123 0.1868 0.0000

roa -0.0790 0.9241 0.8998 0.9490 0.0000

debt_ratio 0.0305 1.0309 1.0139 1.0482 0.0003

current_ratio 0.0883 1.0923 0.8836 1.3503 0.4146

log_assets -0.4843 0.6161 0.4784 0.7934 0.0002表 11.3 的运行结果显示了各变量的几率比(OR)及其95%置信区间。具体而言:ROA的OR为0.9241(p=0.0000),资产负债率的OR为1.0309(p=0.0003),资产规模对数值的OR为0.6161(p=0.0002),这三个变量的OR置信区间均不包含1,表明其对ST风险的影响在统计上显著。而流动比率的OR为1.0923(p=0.4146),其95%置信区间为[0.8836, 1.3503],包含了1,进一步验证了该变量在模型中不具显著解释力。下面逐一将这些几率比翻译为具体的经济学含义。

The results of 表 11.3 show the odds ratios (OR) and their 95% confidence intervals for each variable. Specifically: the OR of ROA is 0.9241 (p = 0.0000), the OR of debt-to-asset ratio is 1.0309 (p = 0.0003), and the OR of log of total assets is 0.6161 (p = 0.0002). The OR confidence intervals of these three variables do not contain 1, indicating that their effects on ST risk are statistically significant. The OR of current ratio is 1.0923 (p = 0.4146), and its 95% confidence interval is [0.8836, 1.3503], which contains 1, further confirming that this variable does not have significant explanatory power in the model. Below, we translate these odds ratios into specific economic meanings one by one.

# ========== 第2步:逐一解释各变量的经济含义 ==========

# ========== Step 2: Interpret the economic meaning of each variable ==========

print('\n=== 解释 ===') # 输出经济含义解释标题

# Print economic interpretation header

print('1. ROA (资产收益率):') # ROA的OR解释

# ROA's OR interpretation

print(f' OR = {np.exp(logistic_coefficients_series["roa"]):.4f}') # 输出ROA的几率比

# Print ROA's odds ratio

print(' ROA每提高1个百分点,ST几率变为原来的{:.1%}'.format( # 输出ROA边际效应

np.exp(logistic_coefficients_series["roa"])-1)) # 边际效应

# Marginal effect: for each 1 percentage point increase in ROA, the odds of ST change by this factor

print('\n2. 资产负债率:') # 负债率的OR解释

# Debt-to-asset ratio OR interpretation

print(f' OR = {np.exp(logistic_coefficients_series["debt_ratio"]):.4f}') # 输出负债率的几率比

# Print debt-to-asset ratio's odds ratio

print(' 资产负债率每提高1个百分点,ST几率{:.1%}'.format( # 输出负债率边际效应

np.exp(logistic_coefficients_series["debt_ratio"])-1)) # 边际效应

# Marginal effect: for each 1 percentage point increase in debt ratio, ST odds change by this factor

print('\n3. 流动比率:') # 流动比率OR解释

# Current ratio OR interpretation

print(f' OR = {np.exp(logistic_coefficients_series["current_ratio"]):.4f}') # 输出流动比率的几率比

# Print current ratio's odds ratio

print(' 流动比率每提高1,ST几率变为原来的{:.1%}'.format( # 输出流动比率边际效应

np.exp(logistic_coefficients_series["current_ratio"])-1)) # 边际效应

# Marginal effect: for each unit increase in current ratio, ST odds change by this factor

=== 解释 ===

1. ROA (资产收益率):

OR = 0.9241

ROA每提高1个百分点,ST几率变为原来的-7.6%

2. 资产负债率:

OR = 1.0309

资产负债率每提高1个百分点,ST几率3.1%

3. 流动比率:

OR = 1.0923

流动比率每提高1,ST几率变为原来的9.2%上述代码输出的经济含义解释清晰地揭示了各财务指标对ST风险的边际影响:(1)ROA的OR为0.9241,意味着ROA每提高1个百分点,企业被标记为ST的几率降低约7.6%,盈利能力的提升是防范财务困境最有效的”盾牌”;(2)资产负债率的OR为1.0309,资产负债率每升高1个百分点,ST几率上升3.1%,高杠杆加剧了财务脆弱性;(3)流动比率的OR为1.0923,虽然方向上显示流动比率越高ST风险越大——这可能反映了部分ST企业账面流动资产虚高的现象——但由于p值为0.415,该效应在统计上并不显著,不应作为风险判断的依据。接下来,我们通过机器学习的评估框架进一步检验模型的分类性能。图 11.1 展示了Logistic回归模型的ROC曲线和混淆矩阵评估结果。

The economic interpretations output by the above code clearly reveal the marginal effects of each financial indicator on ST risk: (1) The OR of ROA is 0.9241, meaning that for each 1 percentage point increase in ROA, the odds of being marked as ST decrease by approximately 7.6% — improvement in profitability is the most effective “shield” against financial distress; (2) The OR of debt-to-asset ratio is 1.0309, meaning that for each 1 percentage point increase in the debt-to-asset ratio, the odds of ST increase by 3.1%, as high leverage exacerbates financial fragility; (3) The OR of current ratio is 1.0923, which directionally suggests that a higher current ratio leads to greater ST risk — this may reflect the phenomenon of inflated current assets on the books of some ST companies — but since the p-value is 0.415, this effect is not statistically significant and should not be used as a basis for risk judgment. Next, we further examine the classification performance of the model through a machine learning evaluation framework. 图 11.1 shows the ROC curve and confusion matrix evaluation results of the Logistic regression model.

# ========== 导入所需库 ==========

# ========== Import required libraries ==========

from sklearn.model_selection import cross_val_predict # 交叉验证预测

# Cross-validation prediction

from sklearn.preprocessing import StandardScaler # 特征标准化

# Feature standardization

# ========== 第1步:特征标准化与训练/测试集划分 ==========

# ========== Step 1: Feature standardization and train/test split ==========

features_standard_scaler = StandardScaler() # 初始化标准化器

# Initialize the standard scaler

scaled_features_matrix = features_standard_scaler.fit_transform( # 标准化特征矩阵

design_matrix_features) # 对原始特征矩阵进行标准化

# Standardize the original feature matrix

features_train_matrix, features_test_matrix, target_train_series, target_test_series = train_test_split( # 划分训练集和测试集

scaled_features_matrix, target_variable_series, # 输入特征和标签

# Input features and labels

test_size=0.3, random_state=42, # 30%测试集,固定种子

# 30% test set, fixed random seed

stratify=target_variable_series # 分层抽样保持类别比例

# Stratified sampling to maintain class proportions

)

# ========== 第2步:训练sklearn Logistic模型并预测 ==========

# ========== Step 2: Train sklearn Logistic model and predict ==========

sklearn_logistic_classifier = LogisticRegression( # 初始化分类器

# Initialize classifier

max_iter=1000, class_weight='balanced') # 类别权重平衡处理不平衡

# Balanced class weights to handle imbalance

sklearn_logistic_classifier.fit(features_train_matrix, # 拟合训练数据

target_train_series) # 传入训练集标签进行拟合

# Fit training data with training labels

predicted_classes_array = sklearn_logistic_classifier.predict( # 预测类别标签

features_test_matrix) # 对测试集特征进行类别预测

# Predict class labels on test set features

predicted_probabilities_array = sklearn_logistic_classifier.predict_proba( # 预测概率

features_test_matrix)[:, 1] # 取ST类(正类)概率

# Take ST class (positive class) probability模型训练和预测完成。下面通过ROC曲线和混淆矩阵评估模型性能。

Model training and prediction are complete. Next, we evaluate model performance using the ROC curve and confusion matrix.

# ========== 第3步:创建画布并绘制ROC曲线 ==========

# ========== Step 3: Create canvas and plot ROC curve ==========

evaluation_figure, evaluation_axes_array = plt.subplots(1, 2, figsize=(14, 5)) # 创建1×2子图

# Create 1×2 subplots

# ----- 左图:ROC曲线 -----

# ----- Left panel: ROC Curve -----

roc_curve_axes = evaluation_axes_array[0] # 选取左侧子图

# Select the left subplot

false_positive_rates_array, true_positive_rates_array, roc_thresholds_array = roc_curve( # 计算ROC

target_test_series, predicted_probabilities_array) # 传入真实标签和预测概率

# Compute ROC with true labels and predicted probabilities

area_under_curve_score = roc_auc_score(target_test_series, # 计算AUC面积

predicted_probabilities_array) # 传入预测概率计算AUC值

# Compute AUC score with predicted probabilities

roc_curve_axes.plot(false_positive_rates_array, # 绘制ROC曲线

true_positive_rates_array, linewidth=2, color='#E3120B', # 真阳性率为Y轴,红色实线

# True positive rate on Y-axis, red solid line

label=f'ROC曲线 (AUC = {area_under_curve_score:.4f})') # 图例标注AUC值

# Legend with AUC value

roc_curve_axes.plot([0, 1], [0, 1], 'k--', linewidth=1, # 45度对角线(随机基准)

label='随机分类器') # 随机基准线图例

# 45-degree diagonal (random baseline), legend: random classifier

roc_curve_axes.fill_between(false_positive_rates_array, # 填充AUC区域

true_positive_rates_array, alpha=0.2, color='#E3120B') # ROC曲线下方红色半透明填充

# Fill area under ROC curve with semi-transparent red

roc_curve_axes.set_xlabel('假阳性率 (1 - 特异度)', fontsize=12) # x轴标签

# X-axis label: False Positive Rate (1 - Specificity)

roc_curve_axes.set_ylabel('真阳性率 (敏感度)', fontsize=12) # y轴标签

# Y-axis label: True Positive Rate (Sensitivity)

roc_curve_axes.set_title('ROC曲线 - ST预测模型', fontsize=14) # 标题

# Title: ROC Curve - ST Prediction Model

roc_curve_axes.legend(loc='lower right') # 图例

# Legend at lower right

roc_curve_axes.grid(True, alpha=0.3) # 网格线

# Grid lines

ROC曲线绘制完毕。接下来在右侧面板绘制混淆矩阵热力图,直观展示模型分类的准确性。

The ROC curve has been plotted. Next, we draw the confusion matrix heatmap on the right panel to visually display the classification accuracy of the model.

# ----- 右图:混淆矩阵热力图 -----

# ----- Right panel: Confusion Matrix Heatmap -----

confusion_matrix_axes = evaluation_axes_array[1] # 选取右侧子图

# Select the right subplot

calculated_confusion_matrix = confusion_matrix(target_test_series, # 计算混淆矩阵

predicted_classes_array) # 传入预测类别计算混淆矩阵

# Compute confusion matrix with predicted classes

confusion_matrix_image = confusion_matrix_axes.imshow( # 以热力图展示

calculated_confusion_matrix, cmap='Blues') # 蓝色色系显示混淆矩阵

# Display as heatmap with blue color scheme

confusion_matrix_axes.set_xticks([0, 1]) # x轴刻度

# X-axis ticks

confusion_matrix_axes.set_yticks([0, 1]) # y轴刻度

# Y-axis ticks

confusion_matrix_axes.set_xticklabels(['预测:正常', '预测:ST']) # x轴标签

# X-axis labels: Predicted: Normal, Predicted: ST

confusion_matrix_axes.set_yticklabels(['实际:正常', '实际:ST']) # y轴标签

# Y-axis labels: Actual: Normal, Actual: ST

confusion_matrix_axes.set_xlabel('预测标签', fontsize=12) # x轴标题

# X-axis title: Predicted Label

confusion_matrix_axes.set_ylabel('真实标签', fontsize=12) # y轴标题

# Y-axis title: True Label

confusion_matrix_axes.set_title('混淆矩阵', fontsize=14) # 标题

# Title: Confusion Matrix

# 在每个格子中央标注数值

# Annotate values at the center of each cell

for i in range(2): # 遍历行

# Iterate over rows

for j in range(2): # 遍历列

# Iterate over columns

confusion_matrix_axes.text(j, i, # 添加文字标注

calculated_confusion_matrix[i, j], # 传入该格的数值

# Pass the value of this cell

ha='center', va='center', fontsize=16, # 文字居中对齐,字号16

# Center-aligned text, font size 16

color='white' if calculated_confusion_matrix[i, j] > calculated_confusion_matrix.max()/2 else 'black') # 深色格用白字,浅色格用黑字

# White text for dark cells, black text for light cells

plt.colorbar(confusion_matrix_image, ax=confusion_matrix_axes) # 添加颜色条

# Add color bar

plt.tight_layout() # 自动调整布局

# Auto-adjust layout

plt.show() # 显示图表

# Display the figure<Figure size 672x480 with 0 Axes>图 11.1 的左侧面板绘制了ROC曲线,模型的AUC(曲线下面积)达到0.8400,表明模型对ST公司与正常公司的区分能力较强——AUC越接近1.0,分类能力越好;等于0.5则等同于随机猜测。ROC曲线明显位于对角线(随机分类器基准线)之上,红色填充区域直观展示了模型相对于随机分类的”超额辨别力”。右侧面板的混淆矩阵热力图则展示了各类别的具体分类结果。下面输出精确率、召回率和F1分数的分类报告,对模型性能进行量化总结。

The left panel of 图 11.1 shows the ROC curve, with the model’s AUC (Area Under the Curve) reaching 0.8400, indicating strong discriminative ability between ST and normal companies — the closer the AUC is to 1.0, the better the classification ability; 0.5 is equivalent to random guessing. The ROC curve is clearly above the diagonal (random classifier baseline), and the red filled area visually demonstrates the model’s “excess discriminative power” relative to random classification. The confusion matrix heatmap in the right panel displays the specific classification results for each category. Below, we output the classification report with precision, recall, and F1 score to provide a quantitative summary of model performance.

# ========== 第4步:输出分类报告 ==========

# ========== Step 4: Output classification report ==========

print('\n=== 分类报告 ===') # 输出分类报告标题

# Print classification report header

print(classification_report(target_test_series, # 输出精确率/召回率/F1

predicted_classes_array, target_names=['正常', 'ST'])) # 指定类别名称输出报告

# Output precision/recall/F1 with specified class names

=== 分类报告 ===

precision recall f1-score support

正常 0.98 0.83 0.90 539

ST 0.14 0.68 0.23 22

accuracy 0.82 561

macro avg 0.56 0.75 0.56 561

weighted avg 0.95 0.82 0.87 561

分类报告的运行结果揭示了不平衡数据下分类模型的典型特征。模型整体准确率为82%,但这一指标具有误导性——由于正常公司占样本的绝大多数,即使把所有公司都预测为”正常”也能获得96%以上的准确率。更有意义的是关注ST类别的指标:召回率(Recall)为0.68,表明模型能捕捉到68%的真正ST公司;精确率(Precision)为0.14,意味着模型预测为ST的公司中只有14%是真正的ST——存在大量的”误报”(False Positive)。这种低精确率-高召回率的权衡正是由class_weight='balanced'参数驱动的:在信用风险管理中,漏掉一家真正的ST公司(假阴性)的代价远大于多发几次预警(假阳性),因此模型被设计为宁可多报也不漏报。在实际应用中,风控团队通常会结合成本矩阵调整分类阈值,以在精确率和召回率之间找到最佳平衡点。

The classification report results reveal the typical characteristics of classification models under imbalanced data. The model’s overall accuracy is 82%, but this metric is misleading — since normal companies account for the vast majority of the sample, predicting all companies as “normal” would already achieve over 96% accuracy. More meaningful are the metrics for the ST category: Recall is 0.68, indicating the model can capture 68% of truly ST companies; Precision is 0.14, meaning only 14% of companies predicted as ST are actually ST — there are numerous “false alarms” (False Positives). This low-precision-high-recall trade-off is precisely driven by the class_weight='balanced' parameter: in credit risk management, the cost of missing a truly ST company (false negative) is far greater than issuing a few extra warnings (false positives), so the model is designed to err on the side of over-reporting rather than under-reporting. In practice, risk control teams typically adjust the classification threshold in conjunction with a cost matrix to find the optimal balance between precision and recall. ## Poisson回归 (Poisson Regression) {#sec-poisson-regression}

Poisson回归用于建模计数数据,如客户购买次数、事故发生次数、专利申请数量等。

Poisson regression is used to model count data, such as the number of customer purchases, accident occurrences, or patent applications.

11.4.5 模型设定 (Model Specification)

对于计数因变量\(Y \in \{0, 1, 2, ...\}\):

For a count-dependent variable \(Y \in \{0, 1, 2, ...\}\):

\[ Y \sim \text{Poisson}(\lambda) \]

其中均值参数\(\lambda\)通过对数连接与自变量关联,如 式 11.8 所示:

where the mean parameter \(\lambda\) is linked to the independent variables via a log link function, as shown in 式 11.8:

\[ \ln(\lambda) = \beta_0 + \beta_1 X_1 + ... + \beta_p X_p \tag{11.8}\]

等价地:\(\lambda = e^{\beta_0 + \beta_1 X_1 + ... + \beta_p X_p}\)

Equivalently: \(\lambda = e^{\beta_0 + \beta_1 X_1 + ... + \beta_p X_p}\)

11.4.6 发生率比(IRR) (Incidence Rate Ratio)

发生率比(Incidence Rate Ratio, IRR)是Poisson回归中系数的指数变换:

Incidence Rate Ratio (IRR) is the exponential transformation of the coefficients in Poisson regression:

\[ IRR_j = e^{\beta_j} \]

解释: \(X_j\)每增加1单位,事件发生率变为原来的\(e^{\beta_j}\)倍。

Interpretation: For each one-unit increase in \(X_j\), the event rate is multiplied by \(e^{\beta_j}\).

11.4.7 过度离散问题 (Overdispersion)

Poisson分布假设均值等于方差(\(E(Y) = \text{Var}(Y) = \lambda\))。当实际方差大于均值时,称为过度离散(Overdispersion)。

The Poisson distribution assumes that the mean equals the variance (\(E(Y) = \text{Var}(Y) = \lambda\)). When the actual variance exceeds the mean, this is called overdispersion.

过度离散的后果与解决

Consequences and Solutions for Overdispersion

后果:

- 标准误被低估

- 统计检验过于乐观(p值偏小)

- 置信区间过窄

Consequences:

- Standard errors are underestimated

- Statistical tests are overly optimistic (p-values are too small)

- Confidence intervals are too narrow

检验: 计算离散参数\(\phi = \frac{1}{n-p}\sum\frac{(y_i-\hat{\lambda}_i)^2}{\hat{\lambda}_i}\),若\(\phi >> 1\)则存在过度离散

Test: Compute the dispersion parameter \(\phi = \frac{1}{n-p}\sum\frac{(y_i-\hat{\lambda}_i)^2}{\hat{\lambda}_i}\); if \(\phi >> 1\), overdispersion is present.

解决方案:

- 拟泊松回归(Quasi-Poisson): 允许\(\text{Var}(Y) = \phi\lambda\)

- 负二项回归: \(\text{Var}(Y) = \lambda + \lambda^2/\theta\)

Solutions:

- Quasi-Poisson regression: allows \(\text{Var}(Y) = \phi\lambda\)

- Negative Binomial regression: \(\text{Var}(Y) = \lambda + \lambda^2/\theta\)

11.4.8 案例:上市公司营收规模影响因素分析 (Case Study: Factors Affecting Revenue Scale of Listed Companies)

什么是计数数据与营收规模分析?

What Are Count Data and Revenue Scale Analysis?

在企业经营分析中,很多指标本质上是”计数型”数据——例如企业的产品线数量、专利申请数、子公司数量等。即使营收金额本身是连续变量,当我们关注的是营收在某个量级区间内的分布规律时,泊松回归(Poisson Regression)提供了一种建模非负离散型因变量的有效方法。

In business analysis, many indicators are inherently “count-type” data — such as the number of product lines, patent applications, or subsidiaries. Even though revenue itself is a continuous variable, when we focus on the distributional patterns of revenue within certain magnitude intervals, Poisson regression provides an effective method for modeling non-negative discrete dependent variables.

与普通线性回归假设因变量服从正态分布不同,泊松回归假设因变量服从泊松分布,这更适合取值为非负整数且右偏的数据特征。在商业实践中,理解哪些因素驱动企业的营收规模等级,对于行业分析、投资决策和竞争战略制定都具有重要意义。下面如 表 11.4 所示,我们使用长三角地区上市公司的财务数据,分析影响企业营收规模的关键因素。

Unlike ordinary linear regression, which assumes the dependent variable follows a normal distribution, Poisson regression assumes the dependent variable follows a Poisson distribution, which is better suited for data characterized by non-negative integer values and right-skewness. In business practice, understanding which factors drive a company’s revenue scale level is of great significance for industry analysis, investment decision-making, and competitive strategy formulation. As shown in 表 11.4 below, we use financial data from listed companies in the Yangtze River Delta region to analyze the key factors affecting corporate revenue scale.

# ========== 导入所需库 ==========

# ========== Import required libraries ==========

import statsmodels.api as sm # 统计建模

# Statistical modeling

import platform # 平台检测

# Platform detection

# ========== 第1步:读取本地财务数据 ==========

# ========== Step 1: Read local financial data ==========

if platform.system() == 'Windows': # Windows路径

# Windows path

data_path = 'C:/qiufei/data/stock' # 设置Windows本地数据路径

# Set Windows local data path

else: # Linux路径

# Linux path

data_path = '/home/ubuntu/r2_data_mount/qiufei/data/stock' # 设置Linux本地数据路径

# Set Linux local data path

full_financial_statement_dataframe = pd.read_hdf( # 读取财务报表

# Read financial statements

f'{data_path}/financial_statement.h5') # 指定财务报表文件路径

# Specify financial statement file path

full_stock_basic_info_dataframe = pd.read_hdf( # 读取股票基本信息

# Read stock basic information

f'{data_path}/stock_basic_data.h5') # 指定股票基本信息文件路径

# Specify stock basic information file path

# ========== 第2步:提取最新年报数据 ==========

# ========== Step 2: Extract latest annual report data ==========

latest_annual_financial_dataframe = full_financial_statement_dataframe[ # 筛选第四季度(年报)

# Filter Q4 (annual report)

full_financial_statement_dataframe['quarter'].str.endswith('q4')] # 只保留第四季度年报数据

# Keep only Q4 annual report data

latest_annual_financial_dataframe = latest_annual_financial_dataframe.sort_values( # 按季度降序

# Sort by quarter descending

'quarter', ascending=False) # 按季度降序排列

# Sort by quarter in descending order

latest_annual_financial_dataframe = latest_annual_financial_dataframe.drop_duplicates( # 每家公司保留最新一期

# Keep latest record per company

subset='order_book_id', keep='first') # 每家公司保留最新一期年报

# Keep the most recent annual report for each company最新年报数据提取完成。下面合并行业信息并计算财务指标。

Extraction of the latest annual report data is complete. Next, we merge industry information and compute financial indicators.

# ========== 第3步:计算财务指标 ==========

# ========== Step 3: Compute financial indicators ==========

poisson_analysis_base_dataframe = latest_annual_financial_dataframe.merge( # 合并行业和省份信息

# Merge industry and province information

full_stock_basic_info_dataframe[['order_book_id', 'industry_name', 'province']], # 选取代码、行业、省份列

# Select stock code, industry, and province columns

on='order_book_id', how='left') # 按股票代码左连接

# Left join on stock code

poisson_analysis_base_dataframe['roa'] = ( # 计算总资产收益率(%)

# Compute return on assets (%)

poisson_analysis_base_dataframe['net_profit'] / # 分子:净利润

# Numerator: net profit

poisson_analysis_base_dataframe['total_assets'] * 100) # 分母:总资产,乘100转百分比

# Denominator: total assets, multiply by 100 to convert to percentage

poisson_analysis_base_dataframe['log_assets'] = np.log( # 计算资产规模对数值(亿元)

# Compute log of asset size (in 100 million yuan)

poisson_analysis_base_dataframe['total_assets'] / 1e8) # 总资产除以1亿取对数

# Divide total assets by 100 million, then take logarithm

poisson_analysis_base_dataframe['debt_ratio'] = ( # 计算资产负债率(%)

# Compute debt-to-asset ratio (%)

poisson_analysis_base_dataframe['total_liabilities'] / # 分子:总负债

# Numerator: total liabilities

poisson_analysis_base_dataframe['total_assets'] * 100) # 分母:总资产,乘100转百分比

# Denominator: total assets, multiply by 100 to convert to percentage数据读取和财务指标计算完成。下面构造Poisson计数因变量并拟合回归模型。

Data loading and financial indicator computation are complete. Next, we construct the Poisson count dependent variable and fit the regression model.

# ========== 第4步:构造Poisson计数因变量 ==========

# ========== Step 4: Construct Poisson count dependent variable ==========

# 将营收按10亿元为单位折算为计数变量,截断上限100

# Convert revenue into count variable in units of 1 billion yuan, capped at 100

poisson_analysis_base_dataframe['revenue_units'] = ( # 营收离散化为整数单位

# Discretize revenue into integer units

poisson_analysis_base_dataframe['revenue'] / 1e9 # 营收除以10亿元得到基本单位

# Divide revenue by 1 billion yuan to get base units

).clip(0, 100).round().fillna(0).astype(int) # 截断至[0,100]、四舍五入、填充缺失、转为整数

# Clip to [0,100], round, fill missing values, and convert to integer

# 筛选有效样本:排除极端值和缺失值

# Filter valid samples: exclude extreme values and missing values

poisson_modeling_dataframe = poisson_analysis_base_dataframe[ # 筛选有效样本

# Filter valid samples

(poisson_analysis_base_dataframe['roa'].notna()) & # ROA非缺失

# ROA is not missing

(poisson_analysis_base_dataframe['log_assets'].notna()) & # 资产对数非缺失

# Log assets is not missing

(poisson_analysis_base_dataframe['debt_ratio'].notna()) & # 负债率非缺失

# Debt ratio is not missing

(poisson_analysis_base_dataframe['revenue_units'] >= 0) & # 营收单位非负

# Revenue units is non-negative

(poisson_analysis_base_dataframe['roa'] > -50) & # ROA下限

# ROA lower bound

(poisson_analysis_base_dataframe['roa'] < 50) & # ROA上限

# ROA upper bound

(poisson_analysis_base_dataframe['debt_ratio'] > 0) & # 负债率下限

# Debt ratio lower bound

(poisson_analysis_base_dataframe['debt_ratio'] < 150) # 负债率上限

# Debt ratio upper bound

].copy() # 创建筛选后的独立副本

# Create an independent copy of the filtered data

print('=== 营收单位数据描述统计 (每单位=10亿元) ===') # 输出标题

# Print title

print(f'样本量: {len(poisson_modeling_dataframe)}家公司') # 输出样本量

# Print sample size

print(poisson_modeling_dataframe[ # 描述统计

# Descriptive statistics

['revenue_units', 'log_assets', 'roa', 'debt_ratio'] # 选取关键变量

# Select key variables

].describe().round(3)) # 输出描述统计并保留3位小数

# Print descriptive statistics rounded to 3 decimal places=== 营收单位数据描述统计 (每单位=10亿元) ===

样本量: 5396家公司

revenue_units log_assets roa debt_ratio

count 5396.000 5396.000 5396.000 5396.000

mean 8.064 4.010 1.147 43.382

std 18.400 1.496 7.862 23.015

min 0.000 -0.298 -49.389 1.615

25% 1.000 3.001 -0.885 25.292

50% 2.000 3.701 2.163 41.719

75% 6.000 4.708 5.181 58.559

max 100.000 13.099 43.296 148.442表 11.4 的描述统计结果显示,最终有效样本包含5396家上市公司。因变量revenue_units(以10亿元为单位的营收计数)的均值为8.064,但中位数仅为2.000,最大值达到100(截断上限),标准差高达18.400——这种均值远大于中位数、右偏严重的分布特征,正是计数数据的典型表现,也正是泊松回归优于普通线性回归的应用场景。自变量方面,资产规模对数均值为4.010(对应约55亿元总资产),ROA均值为1.147%,资产负债率均值为43.382%。下面拟合Poisson广义线性模型(GLM),分析这些财务因素如何影响企业的营收规模等级。

The descriptive statistics from 表 11.4 show that the final valid sample contains 5,396 listed companies. The dependent variable revenue_units (revenue count in units of 1 billion yuan) has a mean of 8.064, but a median of only 2.000, a maximum of 100 (the truncation cap), and a standard deviation as high as 18.400 — this distribution pattern, where the mean far exceeds the median with severe right-skewness, is a typical characteristic of count data, and precisely the scenario where Poisson regression outperforms ordinary linear regression. On the independent variable side, the mean of log assets is 4.010 (corresponding to approximately 5.5 billion yuan in total assets), the mean ROA is 1.147%, and the mean debt-to-asset ratio is 43.382%. Next, we fit a Poisson Generalized Linear Model (GLM) to analyze how these financial factors affect the revenue scale level of companies.

# ========== 第5步:拟合Poisson回归模型 ==========

# ========== Step 5: Fit Poisson regression model ==========

poisson_design_matrix = poisson_modeling_dataframe[ # 选取自变量

# Select independent variables

['log_assets', 'roa', 'debt_ratio']] # 选取自变量列

# Select independent variable columns

poisson_design_matrix = sm.add_constant(poisson_design_matrix) # 添加截距项

# Add intercept term

poisson_target_series = poisson_modeling_dataframe['revenue_units'] # 因变量

# Dependent variable

fitted_poisson_model = sm.GLM( # 建立Poisson GLM模型

# Build Poisson GLM model

poisson_target_series, poisson_design_matrix, # 传入因变量和设计矩阵

# Pass dependent variable and design matrix

family=sm.families.Poisson()).fit() # 用极大似然估计拟合

# Fit using maximum likelihood estimation

print('\n=== Poisson回归结果 ===') # 输出回归结果标题

# Print regression results title

print(fitted_poisson_model.summary()) # 输出模型摘要

# Print model summary

print('\n数据来源: 本地financial_statement.h5') # 注明数据来源

# Note data source

=== Poisson回归结果 ===

Generalized Linear Model Regression Results

==============================================================================

Dep. Variable: revenue_units No. Observations: 5396

Model: GLM Df Residuals: 5392

Model Family: Poisson Df Model: 3

Link Function: Log Scale: 1.0000

Method: IRLS Log-Likelihood: -25228.

Date: Tue, 10 Mar 2026 Deviance: 36102.

Time: 20:46:43 Pearson chi2: 4.74e+04

No. Iterations: 6 Pseudo R-squ. (CS): 1.000

Covariance Type: nonrobust

==============================================================================

coef std err z P>|z| [0.025 0.975]

------------------------------------------------------------------------------

const -0.8132 0.015 -54.568 0.000 -0.842 -0.784

log_assets 0.4964 0.002 204.945 0.000 0.492 0.501

roa 0.0492 0.001 49.036 0.000 0.047 0.051

debt_ratio 0.0063 0.000 20.633 0.000 0.006 0.007

==============================================================================

数据来源: 本地financial_statement.h5Poisson回归的运行结果表明模型整体效果良好。三个自变量的系数在1%水平上全部显著(p=0.000):资产规模对数的系数为0.4964,ROA的系数为0.0492,资产负债率的系数为0.0063,均与经济直觉一致——规模越大、盈利越强、杠杆越高的企业,营收水平越高。模型的偏差(Deviance)为36102,Pearson卡方统计量高达4.74×10⁴,远大于残差自由度5392,这已经初步暗示了可能存在过度离散问题(即实际数据的方差远大于Poisson分布假设的方差)。不过,Poisson回归中的原始系数代表的是对数均值的变化量,经济解释并不直观。表 11.5 进一步展示了发生率比(IRR)的计算结果及过度离散检验,将系数转化为更易理解的倍数关系。

The Poisson regression results indicate that the overall model performs well. The coefficients of all three independent variables are significant at the 1% level (p=0.000): the coefficient for log assets is 0.4964, for ROA it is 0.0492, and for debt-to-asset ratio it is 0.0063, all consistent with economic intuition — companies that are larger in scale, more profitable, and more leveraged tend to have higher revenue levels. The model deviance is 36,102 and the Pearson chi-squared statistic is as high as 4.74×10⁴, far exceeding the residual degrees of freedom of 5,392, which already suggests the possible presence of overdispersion (i.e., the actual data variance is much larger than the variance assumed by the Poisson distribution). However, the raw coefficients in Poisson regression represent changes in the log-mean, which are not intuitively interpretable. 表 11.5 further presents the Incidence Rate Ratio (IRR) calculations and the overdispersion test, converting the coefficients into more understandable multiplicative relationships.

# ========== 第1步:计算发生率比(IRR) ==========

# ========== Step 1: Compute Incidence Rate Ratios (IRR) ==========

incidence_rate_ratios_series = np.exp(fitted_poisson_model.params) # 对系数取指数得到IRR

# Exponentiate coefficients to obtain IRR

incidence_rate_ratios_confidence_intervals_dataframe = np.exp( # 对置信区间取指数

# Exponentiate confidence intervals

fitted_poisson_model.conf_int()) # 取回归系数的95%置信区间

# Get the 95% confidence intervals of regression coefficients

incidence_rate_ratios_results_dataframe = pd.DataFrame({ # 汇总为DataFrame

# Compile into a DataFrame

'变量': fitted_poisson_model.params.index, # 变量名称

# Variable names

'系数': fitted_poisson_model.params.values, # 原始系数

# Raw coefficients

'IRR': incidence_rate_ratios_series.values, # 发生率比

# Incidence rate ratios

'IRR 2.5%': incidence_rate_ratios_confidence_intervals_dataframe[0].values, # 下限

# Lower bound

'IRR 97.5%': incidence_rate_ratios_confidence_intervals_dataframe[1].values, # 上限

# Upper bound

'p值': fitted_poisson_model.pvalues.values # 显著性

# Significance

})

print('=== 发生率比(IRR)分析 ===\n') # 输出标题

# Print title

print(incidence_rate_ratios_results_dataframe.round(4).to_string( # 输出IRR表格

# Print IRR table

index=False)) # 不显示行索引

# Do not display row index=== 发生率比(IRR)分析 ===

变量 系数 IRR IRR 2.5% IRR 97.5% p值

const -0.8132 0.4434 0.4307 0.4566 0.0

log_assets 0.4964 1.6428 1.6350 1.6506 0.0

roa 0.0492 1.0504 1.0483 1.0525 0.0

debt_ratio 0.0063 1.0063 1.0057 1.0069 0.0表 11.5 的输出将回归系数转换为更直观的发生率比(IRR)。截距项的IRR为0.4434,代表所有自变量取零时的营收基准水平。三个自变量的IRR分别为:资产规模对数(IRR=1.6428)、ROA(IRR=1.0504)、资产负债率(IRR=1.0063),所有IRR的95%置信区间均不包含1,与系数检验的结论一致。下面将这些IRR值翻译为具体的经济含义,并进行过度离散检验。

The output of 表 11.5 converts the regression coefficients into more intuitive Incidence Rate Ratios (IRR). The IRR for the intercept is 0.4434, representing the baseline revenue level when all independent variables equal zero. The IRRs for the three independent variables are: log assets (IRR=1.6428), ROA (IRR=1.0504), and debt-to-asset ratio (IRR=1.0063). The 95% confidence intervals for all IRRs do not contain 1, consistent with the coefficient test conclusions. Below, we translate these IRR values into specific economic implications and conduct the overdispersion test.

# ========== 第2步:经济含义解释 ==========

# ========== Step 2: Economic interpretation ==========

print('\n=== 解释 ===') # 输出经济含义解释标题

# Print economic interpretation title

print('1. 公司规模(log_assets):') # 公司规模效应

# Company size effect

print(f' IRR = {incidence_rate_ratios_series["log_assets"]:.3f}') # 输出IRR值

# Print IRR value

print(f' 资产规模每增加1个对数单位,营收单位数变为原来的' # 经济解释

# Economic interpretation

f'{incidence_rate_ratios_series["log_assets"]:.2f}倍') # 输出资产规模IRR的具体倍数

# Print specific multiplier of asset size IRR

print('\n2. 盈利能力(roa):') # 盈利能力效应

# Profitability effect

print(f' IRR = {incidence_rate_ratios_series["roa"]:.3f}') # 输出IRR值

# Print IRR value

print(f' ROA每提高1%,营收单位数变化' # 边际效应百分比

# Marginal effect in percentage

f'{(incidence_rate_ratios_series["roa"]-1)*100:.2f}%') # 输出ROA的IRR边际百分比

# Print marginal percentage of ROA's IRR

# ========== 第3步:过度离散检验 ==========

# ========== Step 3: Overdispersion test ==========

print('\n=== 过度离散检验 ===') # 输出过度离散检验标题

# Print overdispersion test title

pearson_chi_squared_statistic = fitted_poisson_model.pearson_chi2 # Pearson卡方统计量

# Pearson chi-squared statistic

residual_degrees_of_freedom = fitted_poisson_model.df_resid # 残差自由度

# Residual degrees of freedom

calculated_dispersion_parameter = (pearson_chi_squared_statistic / # 离散参数 = χ²/df

# Dispersion parameter = χ²/df

residual_degrees_of_freedom) # 除以残差自由度得到离散参数

# Divide by residual degrees of freedom to get dispersion parameter

print(f'Pearson离散参数: {calculated_dispersion_parameter:.4f}') # 输出离散参数

# Print dispersion parameter

if calculated_dispersion_parameter > 1.5: # 判断是否过度离散

# Check whether overdispersion exists

print('存在过度离散,建议使用负二项回归') # 过度离散时的建议

# Overdispersion detected, recommend negative binomial regression

else: # 离散参数不超过阈值时

# When dispersion parameter does not exceed threshold

print('不存在严重过度离散,Poisson模型适用') # Poisson适用

# No severe overdispersion, Poisson model is appropriate

=== 解释 ===

1. 公司规模(log_assets):

IRR = 1.643

资产规模每增加1个对数单位,营收单位数变为原来的1.64倍

2. 盈利能力(roa):

IRR = 1.050

ROA每提高1%,营收单位数变化5.04%

=== 过度离散检验 ===

Pearson离散参数: 8.7826

存在过度离散,建议使用负二项回归上述运行结果揭示了两个关键发现。第一,经济含义方面:资产规模(log_assets)的IRR为1.643,意味着资产规模每增加1个对数单位(约2.7倍),企业营收计数变为原来的1.64倍,表明规模效应是驱动营收的最强因素;ROA的IRR为1.050,ROA每提高1个百分点,营收计数增加约5.04%,盈利能力对营收的正向促进作用虽不如规模效应显著,但在统计上高度可靠。第二,模型诊断方面:Pearson离散参数高达8.7826,远超理论阈值1.0(Poisson分布要求方差等于均值时该参数应接近1),表明数据存在严重的过度离散——实际营收数据的方差是Poisson模型假设方差的近9倍。这意味着当前Poisson模型的标准误被低估,置信区间过窄,p值可能过于乐观。在实际研究中,应当考虑使用负二项回归(Negative Binomial Regression)来修正这一问题。

The above results reveal two key findings. First, regarding economic implications: The IRR for asset size (log_assets) is 1.643, meaning that for each one-unit increase in log assets (approximately a 2.7-fold increase), the company’s revenue count becomes 1.64 times the original, indicating that the scale effect is the strongest driver of revenue; the IRR for ROA is 1.050, meaning each one-percentage-point increase in ROA leads to approximately a 5.04% increase in the revenue count — although the positive effect of profitability on revenue is less pronounced than the scale effect, it is highly statistically reliable. Second, regarding model diagnostics: The Pearson dispersion parameter reaches 8.7826, far exceeding the theoretical threshold of 1.0 (the Poisson distribution requires this parameter to be close to 1 when the variance equals the mean), indicating severe overdispersion in the data — the actual variance of the revenue data is nearly 9 times the variance assumed by the Poisson model. This means the standard errors of the current Poisson model are underestimated, confidence intervals are too narrow, and p-values may be overly optimistic. In practical research, one should consider using Negative Binomial Regression to address this issue.

11.5 从理论到实践:苦活累活 (From Theory to Practice: The “Dirty Work”)

GLM 比线性回归更灵活,但也更容易崩溃。

GLMs are more flexible than linear regression, but they are also more prone to breaking down.

11.5.1 维度的诅咒 (The Curse of Dimensionality)

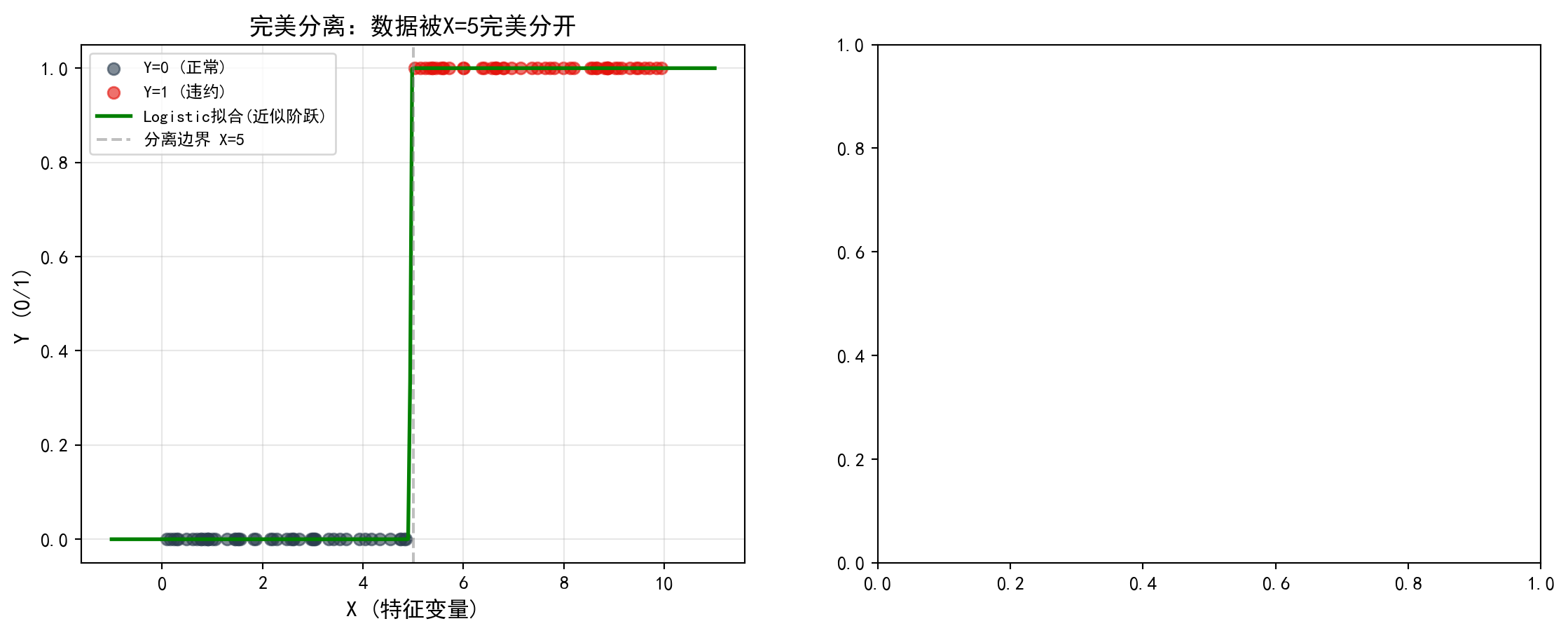

当你做 Logistic 回归时,如果有某个变量(比如”ID号”)能完美预测结果(\(X=x_0 \implies Y=1\)),你会发现系数 \(\beta \to \infty\),标准误 \(SE \to \infty\),模型完全失效。

When performing Logistic regression, if a certain variable (such as “ID number”) can perfectly predict the outcome (\(X=x_0 \implies Y=1\)), you will find that the coefficient \(\beta \to \infty\), the standard error \(SE \to \infty\), and the model completely fails.

现象:这叫完全分离 (Perfect Separation)。

原因:极大似然估计在寻找让 \(P(Y=1) \to 1\) 的参数,只有无穷大的 \(\beta\) 才能做到。

对策:不要以为模型坏了,是你给的变量”太好了”(或者是数据泄露)。使用 Firth’s Logistic Regression 或 正则化 (Penalized Regression) 来约束系数。

Phenomenon: This is called Perfect Separation.

Cause: Maximum likelihood estimation is searching for parameters that make \(P(Y=1) \to 1\), and only an infinite \(\beta\) can achieve this.

Remedy: Don’t assume the model is broken — it’s that the variable you provided is “too good” (or it’s data leakage). Use Firth’s Logistic Regression or Penalized Regression to constrain the coefficients.

11.5.2 过拟合与”奥卡姆剃刀” (Overfitting and “Occam’s Razor”)

非线性模型(如多项式回归、神经网络)可以拟合任何形状的曲线。

Nonlinear models (such as polynomial regression and neural networks) can fit curves of any shape.

陷阱:如果你用一个 10 次多项式拟合 11 个点,\(R^2 = 1.0\)。但这个模型在预测新数据时会一塌糊涂。

真理:如无必要,勿增实体。在商业分析中,简单的 Logistic 回归往往比复杂的深度学习模型更稳健、更可解释、更易上线。

Pitfall: If you fit 11 data points with a 10th-degree polynomial, \(R^2 = 1.0\). But this model will perform terribly when predicting new data.

Truth: Do not multiply entities beyond necessity. In business analytics, a simple Logistic regression is often more robust, more interpretable, and easier to deploy than a complex deep learning model. ## 正则化回归 (Regularized Regression) {#sec-regularization}

当存在多重共线性或高维数据(变量数接近或超过样本量)时,OLS估计可能不稳定或无法计算。正则化回归通过在损失函数中添加惩罚项来约束系数大小。

When multicollinearity or high-dimensional data (where the number of variables approaches or exceeds the sample size) is present, OLS estimates may become unstable or infeasible. Regularized regression constrains coefficient magnitudes by adding penalty terms to the loss function.

11.5.3 Ridge回归(L2正则化) (Ridge Regression / L2 Regularization)

Ridge回归通过对系数添加L2惩罚来约束模型复杂度,其优化目标如 式 11.9 所示:

Ridge regression constrains model complexity by adding an L2 penalty on the coefficients. Its optimization objective is shown in 式 11.9:

\[ \hat{\boldsymbol{\beta}}_{ridge} = \arg\min_{\boldsymbol{\beta}} \left\{\sum_{i=1}^n(y_i - \mathbf{x}_i'\boldsymbol{\beta})^2 + \lambda\sum_{j=1}^p\beta_j^2\right\} \tag{11.9}\]

闭式解:

Closed-Form Solution:

什么是闭式解(Closed-Form Solution)?

What Is a Closed-Form Solution?

在数学和统计学中,闭式解是指可以用一个确定的代数公式直接计算出来的精确解,不需要通过迭代算法逐步逼近。与之相对的是数值解(Numerical Solution),后者需要通过梯度下降、牛顿法等迭代优化算法反复计算才能得到近似解。

In mathematics and statistics, a closed-form solution is an exact solution that can be computed directly using a definite algebraic formula, without the need for iterative algorithms to approximate. In contrast, a numerical solution requires repeated computation through iterative optimization algorithms such as gradient descent or Newton’s method to obtain an approximate answer.

一个熟悉的例子:OLS回归的系数估计 \(\hat{\boldsymbol{\beta}} = (\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{Y}\) 就是闭式解——给定数据,代入公式即可一步算出结果。而Logistic回归的最大似然估计就没有闭式解,必须用迭代算法求解。闭式解的优势在于计算速度快、结果精确;劣势在于并非所有优化问题都存在闭式解。

A familiar example: the OLS regression coefficient estimate \(\hat{\boldsymbol{\beta}} = (\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{Y}\) is a closed-form solution — given the data, one simply plugs it into the formula to obtain the result in a single step. In contrast, the maximum likelihood estimation of logistic regression has no closed-form solution and must be solved using iterative algorithms. The advantage of a closed-form solution is fast computation and exact results; the disadvantage is that not all optimization problems have one.

Ridge回归的闭式解为:

The closed-form solution for Ridge regression is:

\[ \hat{\boldsymbol{\beta}}_{ridge} = (\mathbf{X}'\mathbf{X} + \lambda\mathbf{I})^{-1}\mathbf{X}'\mathbf{Y} \]

Ridge回归的性质

Properties of Ridge Regression

收缩效应: 所有系数向零收缩,但不会精确为零

解决共线性: 通过\(\lambda\mathbf{I}\)使矩阵可逆

偏差-方差权衡: 增加偏差换取方差减少

相关变量处理: 相关变量的系数相近

Shrinkage effect: All coefficients are shrunk toward zero, but never exactly to zero

Resolving collinearity: The matrix is made invertible by adding \(\lambda\mathbf{I}\)

Bias–variance tradeoff: Variance is reduced at the cost of increased bias

Handling correlated variables: Coefficients of correlated variables tend to be similar

11.5.4 Lasso回归(L1正则化) (Lasso Regression / L1 Regularization)

Lasso回归通过L1惩罚实现变量选择,其优化目标如 式 11.10 所示:

Lasso regression achieves variable selection through the L1 penalty. Its optimization objective is shown in 式 11.10:

\[ \hat{\boldsymbol{\beta}}_{lasso} = \arg\min_{\boldsymbol{\beta}} \left\{\sum_{i=1}^n(y_i - \mathbf{x}_i'\boldsymbol{\beta})^2 + \lambda\sum_{j=1}^p|\beta_j|\right\} \tag{11.10}\]

特点: L1惩罚可以将一些系数精确收缩为零,实现自动变量选择。

Key feature: The L1 penalty can shrink some coefficients exactly to zero, achieving automatic variable selection.

11.5.5 弹性网 (Elastic Net)

弹性网结合L1和L2惩罚,如 式 11.11 所示:

Elastic Net combines both L1 and L2 penalties, as shown in 式 11.11:

\[ \hat{\boldsymbol{\beta}}_{EN} = \arg\min_{\boldsymbol{\beta}} \left\{\sum_{i=1}^n(y_i - \mathbf{x}_i'\boldsymbol{\beta})^2 + \lambda_1\sum_{j=1}^p|\beta_j| + \lambda_2\sum_{j=1}^p\beta_j^2\right\} \tag{11.11}\]

11.5.6 正则化方法比较 (Comparison of Regularization Methods)

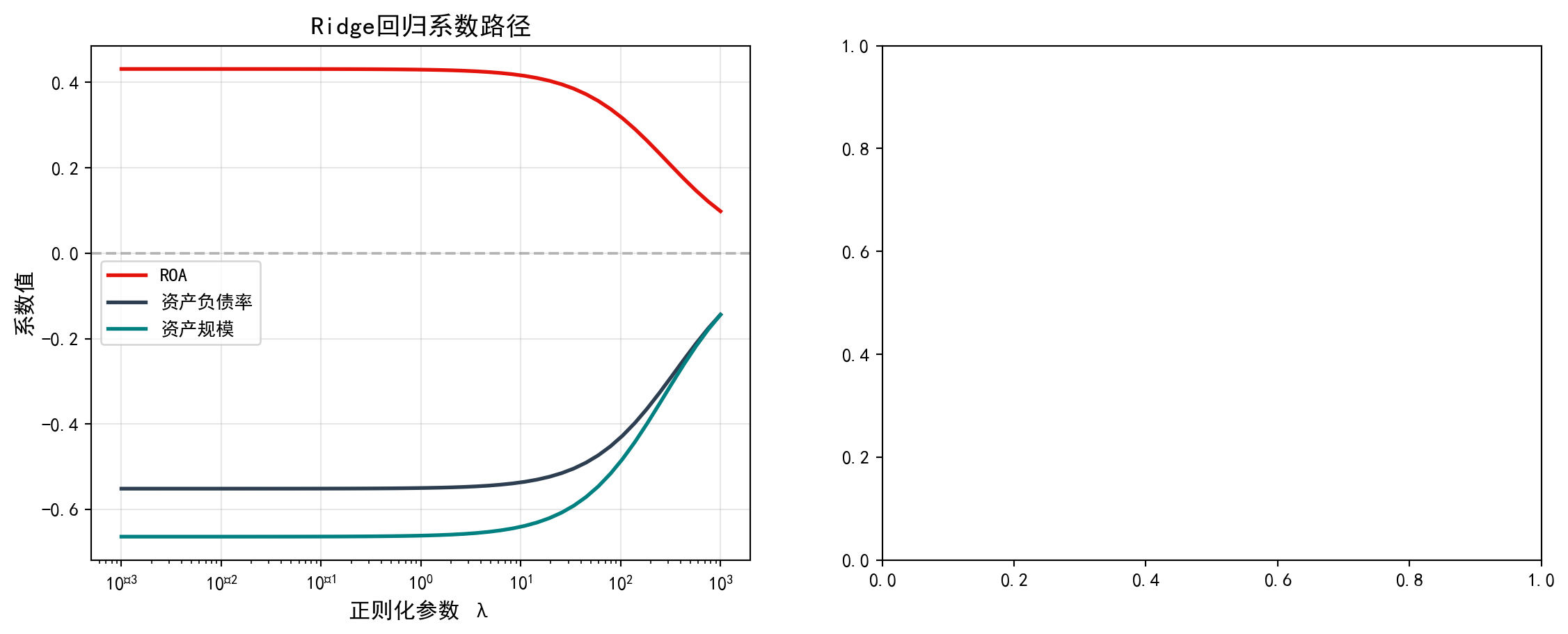

图 11.2 展示了Ridge和Lasso回归在不同正则化参数\(\lambda\)下的系数路径变化。

图 11.2 shows the coefficient path changes of Ridge and Lasso regression under different regularization parameters \(\lambda\).

# ========== 导入所需库 ==========

# ========== Import required libraries ==========

from sklearn.linear_model import Ridge, Lasso, ElasticNet, LinearRegression # 正则化回归

# Regularized regression models

from sklearn.preprocessing import StandardScaler # 标准化器

# Standardizer

from sklearn.model_selection import cross_val_score # 交叉验证

# Cross-validation

import warnings # 警告控制

# Warning control

warnings.filterwarnings('ignore') # 忽略收敛警告

# Suppress convergence warnings

# ========== 第1步:准备数据并标准化 ==========

# ========== Step 1: Prepare data and standardize ==========

regularization_analysis_dataframe = base_analysis_dataframe.dropna( # 删除缺失值,取300个样本

# Drop missing values and take 300 samples

subset=['roa', 'debt_ratio', 'log_assets'])[:300] # 删除关键变量缺失值并取前300个样本

# Drop rows with missing key variables and take the first 300 samples

regularization_features_matrix = regularization_analysis_dataframe[ # 提取自变量矩阵

# Extract independent variable matrix

['roa', 'debt_ratio', 'log_assets']].values # 提取自变量的numpy数组

# Extract numpy array of independent variables

regularization_target_array = regularization_analysis_dataframe[ # 因变量:流动比率

# Dependent variable: current ratio

'current_ratio'].fillna(1).values # 流动比率缺失值填充为1后转数组

# Fill missing current ratio values with 1 and convert to array

regularization_standard_scaler = StandardScaler() # 创建标准化器

# Create standardizer

scaled_regularization_features_matrix = ( # 对自变量进行Z-score标准化

# Z-score standardize the independent variables

regularization_standard_scaler.fit_transform( # 调用fit_transform进行拟合和变换

# Call fit_transform for fitting and transformation

regularization_features_matrix)) # 传入原始特征矩阵进行标准化

# Pass in the raw feature matrix for standardization正则化分析数据标准化完毕。下面遍历不同惩罚参数λ记录Ridge和Lasso的系数路径。

Data standardization for regularization analysis is complete. Next, we iterate over different penalty parameters λ to record the coefficient paths of Ridge and Lasso.

# ========== 第2步:遍历不同λ值记录系数路径 ==========

# ========== Step 2: Iterate over different λ values to record coefficient paths ==========

regularization_penalty_alphas_array = np.logspace(-3, 3, 50) # λ从0.001到10003,取50个点

# λ from 0.001 to 1000, 50 points on a log scale

ridge_coefficients_path_list = [] # 存储Ridge系数路径

# Store Ridge coefficient paths

lasso_coefficients_path_list = [] # 存储Lasso系数路径

# Store Lasso coefficient paths

for alpha in regularization_penalty_alphas_array: # 遍历每个λ值

# Iterate over each λ value

ridge_regression_model = Ridge(alpha=alpha).fit( # 拟合Ridge模型

# Fit Ridge model

scaled_regularization_features_matrix, # 传入标准化后的特征矩阵

# Pass in standardized feature matrix

regularization_target_array) # 传入因变量数组

# Pass in target array

ridge_coefficients_path_list.append( # 记录Ridge系数

# Record Ridge coefficients

ridge_regression_model.coef_) # 提取当前λ下的Ridge系数向量

# Extract Ridge coefficient vector at the current λ

lasso_regression_model = Lasso( # 拟合Lasso模型

# Fit Lasso model

alpha=alpha, max_iter=10000).fit( # 设置惩罚参数和最大迭代次数

# Set penalty parameter and max iterations

scaled_regularization_features_matrix, # 传入标准化特征矩阵

# Pass in standardized feature matrix

regularization_target_array) # 传入因变量进行拟合

# Pass in target for fitting

lasso_coefficients_path_list.append( # 记录Lasso系数

# Record Lasso coefficients

lasso_regression_model.coef_) # 提取当前λ下的Lasso系数向量

# Extract Lasso coefficient vector at the current λ

ridge_coefficients_path_list = np.array( # 转为numpy数组

# Convert to numpy array

ridge_coefficients_path_list) # 将系数列表转为二维数组

# Convert coefficient list to 2D array

lasso_coefficients_path_list = np.array( # 转为numpy数组

# Convert to numpy array

lasso_coefficients_path_list) # 将系数列表转为二维数组

# Convert coefficient list to 2D array计算完不同正则化强度下的系数路径后,我们绘制Ridge和Lasso的正则化路径图进行对比:

After computing the coefficient paths under different regularization strengths, we plot the regularization path diagrams for Ridge and Lasso for comparison:

# ========== 第3步:创建画布并绘制Ridge系数路径 ==========

# ========== Step 3: Create canvas and plot Ridge coefficient paths ==========

regularization_figure, regularization_axes_array = plt.subplots( # 创建1×2子图

# Create 1×2 subplots

1, 2, figsize=(14, 5)) # 1行2列,图幅14×5英寸

# 1 row, 2 columns, figure size 14×5 inches

regularization_variable_names_list = ['ROA', '资产负债率', '资产规模'] # 变量名称

# Variable names

regularization_plot_colors_list = ['#E3120B', '#2C3E50', '#008080'] # 配色方案

# Color scheme

# ----- 左图:Ridge系数路径 -----

# ----- Left panel: Ridge coefficient paths -----

ridge_path_axes = regularization_axes_array[0] # 选取左侧子图

# Select left subplot

for i, (name, color) in enumerate(zip( # 遍历每个变量

# Iterate over each variable

regularization_variable_names_list, # 变量名称列表

# Variable name list

regularization_plot_colors_list)): # 对应颜色列表

# Corresponding color list

ridge_path_axes.plot(regularization_penalty_alphas_array, # 绘制系数随λ变化曲线

# Plot coefficient vs. λ curve

ridge_coefficients_path_list[:, i], # 第i个变量的Ridge系数路径

# Ridge coefficient path for the i-th variable

label=name, color=color, linewidth=2) # 设置图例、颜色和线宽

# Set legend, color, and line width

ridge_path_axes.set_xscale('log') # x轴对数刻度

# Logarithmic scale for x-axis

ridge_path_axes.set_xlabel('正则化参数 λ', fontsize=12) # x轴标签

# x-axis label

ridge_path_axes.set_ylabel('系数值', fontsize=12) # y轴标签

# y-axis label

ridge_path_axes.set_title('Ridge回归系数路径', fontsize=14) # 标题

# Title

ridge_path_axes.legend() # 图例

# Legend

ridge_path_axes.grid(True, alpha=0.3) # 网格线

# Grid lines

ridge_path_axes.axhline(y=0, color='gray', linestyle='--', # 零值参考线

# Zero reference line

alpha=0.5) # 半透明显示

# Semi-transparent display

Ridge系数路径绘制完毕。下面在右侧面板绘制Lasso回归的系数路径,观察其特有的变量选择效应——某些系数会被精确压缩为零。

The Ridge coefficient path plot is complete. Next, we plot the Lasso regression coefficient paths in the right panel to observe its distinctive variable selection effect — some coefficients are shrunk exactly to zero.

# ----- 右图:Lasso系数路径 -----

# ----- Right panel: Lasso coefficient paths -----

lasso_path_axes = regularization_axes_array[1] # 选取右侧子图

# Select right subplot

for i, (name, color) in enumerate(zip( # 遍历每个变量

# Iterate over each variable

regularization_variable_names_list, # 变量名称列表

# Variable name list

regularization_plot_colors_list)): # 对应颜色列表

# Corresponding color list

lasso_path_axes.plot(regularization_penalty_alphas_array, # 绘制系数随λ变化曲线

# Plot coefficient vs. λ curve

lasso_coefficients_path_list[:, i], # 第i个变量的Lasso系数路径

# Lasso coefficient path for the i-th variable

label=name, color=color, linewidth=2) # 设置图例、颜色和线宽

# Set legend, color, and line width

lasso_path_axes.set_xscale('log') # x轴对数刻度

# Logarithmic scale for x-axis

lasso_path_axes.set_xlabel('正则化参数 λ', fontsize=12) # x轴标签

# x-axis label

lasso_path_axes.set_ylabel('系数值', fontsize=12) # y轴标签

# y-axis label

lasso_path_axes.set_title('Lasso回归系数路径', fontsize=14) # 标题

# Title

lasso_path_axes.legend() # 图例

# Legend

lasso_path_axes.grid(True, alpha=0.3) # 网格线

# Grid lines

lasso_path_axes.axhline(y=0, color='gray', linestyle='--', # 零值参考线

# Zero reference line

alpha=0.5) # 半透明显示

# Semi-transparent display

plt.tight_layout() # 自动调整布局

# Automatically adjust layout

plt.show() # 显示图表

# Display the figure<Figure size 672x480 with 0 Axes>从系数路径图可以清晰看到两种正则化方法的本质区别:

The coefficient path plots clearly reveal the essential difference between the two regularization methods:

print('关键观察:') # 输出观察结论

# Print observation conclusions

print('- Ridge: 系数逐渐收缩但不会变为零') # Ridge特点

# Ridge characteristic

print('- Lasso: 系数可以被压缩为精确的零(变量选择)') # Lasso特点

# Lasso characteristic关键观察:

- Ridge: 系数逐渐收缩但不会变为零

- Lasso: 系数可以被压缩为精确的零(变量选择)如 表 11.6 所示,我们通过交叉验证选择最优正则化参数,并比较不同方法的性能。

As shown in 表 11.6, we select the optimal regularization parameter via cross-validation and compare the performance of different methods.

# ========== 导入所需库 ==========

# ========== Import required libraries ==========

from sklearn.linear_model import RidgeCV, LassoCV # 带交叉验证的正则化回归

# Regularized regression with cross-validation

# ========== 第1步:交叉验证选择最优λ ==========

# ========== Step 1: Select optimal λ via cross-validation ==========

cross_validated_ridge_model = RidgeCV( # 5折交叉验证选择Ridge最优λ

# 5-fold cross-validation to select optimal Ridge λ

alphas=regularization_penalty_alphas_array, cv=5 # 候选λ值序列,5折交叉验证

# Candidate λ values, 5-fold cross-validation

).fit(scaled_regularization_features_matrix, # 拟合标准化特征数据

# Fit on standardized feature data

regularization_target_array) # 传入因变量完成拟合

# Pass in target to complete fitting

cross_validated_lasso_model = LassoCV( # 5折交叉验证选择Lasso最优λ

# 5-fold cross-validation to select optimal Lasso λ

alphas=regularization_penalty_alphas_array, cv=5, # 候选λ值序列,5折CV

# Candidate λ values, 5-fold CV

max_iter=10000 # 设置最大迭代次数确保收敛

# Set max iterations to ensure convergence

).fit(scaled_regularization_features_matrix, # 拟合标准化特征数据

# Fit on standardized feature data

regularization_target_array) # 传入因变量完成拟合

# Pass in target to complete fittingRidge/Lasso交叉验证拟合完毕。下面输出最优正则化参数与模型对比结果。

Ridge/Lasso cross-validation fitting is complete. Below we output the optimal regularization parameters and model comparison results.

# ========== 第2步:输出交叉验证结果 ==========

# ========== Step 2: Output cross-validation results ==========

print('=== 交叉验证结果 ===\n') # 标题

# Title

print(f'Ridge最优λ: {cross_validated_ridge_model.alpha_:.4f}') # Ridge最优惩罚参数

# Optimal Ridge penalty parameter

ridge_cv_r2_score = cross_validated_ridge_model.score( # 计算Ridge交叉验证R²

# Compute Ridge cross-validated R²

scaled_regularization_features_matrix, # 传入特征矩阵

# Pass in feature matrix

regularization_target_array) # 传入因变量计算R²

# Pass in target to calculate R²

print(f'Ridge CV R²: {ridge_cv_r2_score:.4f}') # 输出Ridge拟合优度

# Print Ridge goodness of fit

print(f'Ridge系数: {cross_validated_ridge_model.coef_.round(4)}') # Ridge系数向量

# Ridge coefficient vector

print(f'\nLasso最优λ: {cross_validated_lasso_model.alpha_:.4f}') # Lasso最优惩罚参数

# Optimal Lasso penalty parameter

lasso_cv_r2_score = cross_validated_lasso_model.score( # 计算Lasso交叉验证R²

# Compute Lasso cross-validated R²

scaled_regularization_features_matrix, # 传入特征矩阵