# ========== 导入所需库 ==========

# ========== Import required libraries ==========

import numpy as np # 导入numpy库,用于数值计算

# Import numpy for numerical computation

import pandas as pd # 导入pandas库,用于数据处理

# Import pandas for data manipulation

from scipy import stats # 导入scipy统计模块,用于假设检验

# Import scipy stats module for hypothesis testing

import matplotlib.pyplot as plt # 导入matplotlib库,用于绑定绘图环境

# Import matplotlib for the plotting bindingd environment

import platform # 导入platform库,用于判断操作系统

# Import platform to detect the operating system

# ========== 第1步:加载本地财务数据 ==========

# ========== Step 1: Load local financial data ==========

if platform.system() == 'Windows': # 判断当前操作系统是否为Windows

# Check if the current OS is Windows

data_path = 'C:/qiufei/data/stock' # Windows平台下的数据路径

# Data path for the Windows platform

else: # 否则为Linux平台

# Otherwise it is the Linux platform

data_path = '/home/ubuntu/r2_data_mount/qiufei/data/stock' # Linux平台下的数据路径

# Data path for the Linux platform

stock_basic_info_dataframe = pd.read_hdf(f'{data_path}/stock_basic_data.h5') # 读取上市公司基本信息

# Read listed company basic information

financial_statement_dataframe = pd.read_hdf(f'{data_path}/financial_statement.h5') # 读取财务报表数据

# Read financial statement data

# ========== 第2步:筛选最新年报数据 ==========

# ========== Step 2: Filter the latest annual report data ==========

financial_statement_dataframe = financial_statement_dataframe[financial_statement_dataframe['quarter'].str.endswith('q4')] # 仅保留第四季度(年报)数据

# Keep only Q4 (annual report) data

financial_statement_dataframe = financial_statement_dataframe.sort_values('quarter', ascending=False) # 按季度降序排列,最新数据在前

# Sort by quarter in descending order so the latest data comes first

financial_statement_dataframe = financial_statement_dataframe.drop_duplicates(subset='order_book_id', keep='first') # 每家公司仅保留最新一期年报

# Keep only the most recent annual report for each company

# ========== 第3步:合并行业信息并筛选银行业 ==========

# ========== Step 3: Merge industry information and filter for banking ==========

merged_financial_dataframe = financial_statement_dataframe.merge(stock_basic_info_dataframe[['order_book_id', 'industry_name']], on='order_book_id', how='left') # 左连接合并行业名称

# Left join to merge industry names

bank_industry_dataframe = merged_financial_dataframe[merged_financial_dataframe['industry_name'] == '货币金融服务'].copy() # 筛选银行业(货币金融服务)

# Filter for the banking industry (Monetary and Financial Services)

# ========== 第4步:计算净利润率并过滤异常值 ==========

# ========== Step 4: Calculate net profit margin and filter outliers ==========

bank_industry_dataframe['profit_margin'] = (bank_industry_dataframe['net_profit'] / bank_industry_dataframe['revenue']) * 100 # 计算净利润率(百分比)

# Calculate net profit margin (percentage)

profit_margin_sample_series = bank_industry_dataframe['profit_margin'].dropna() # 删除缺失值

# Drop missing values

profit_margin_sample_series = profit_margin_sample_series[(profit_margin_sample_series > 0) & (profit_margin_sample_series < 100)] # 过滤不合理的异常值

# Filter out unreasonable outliers7 均值的推断统计 (Inference for Means)

本章深入探讨不同场景下的均值推断方法,包括单样本、两样本和配对样本的检验,以及方差分析(ANOVA)的基础。均值推断是统计推断的核心内容,广泛应用于商业决策、质量控制和医学研究等领域。

This chapter provides an in-depth exploration of inference methods for means under different scenarios, including one-sample, two-sample, and paired-sample tests, as well as the fundamentals of Analysis of Variance (ANOVA). Inference for means is a central topic in statistical inference, with broad applications in business decision-making, quality control, and medical research.

7.1 均值推断在投资分析中的典型应用 (Typical Applications of Mean Inference in Investment Analysis)

均值推断是检验投资策略有效性和评估公司财务表现的核心统计工具。以下展示t检验方法在中国资本市场中的实际应用。

Mean inference is a core statistical tool for testing the effectiveness of investment strategies and evaluating corporate financial performance. The following demonstrates practical applications of t-test methods in China’s capital markets.

7.1.1 应用一:基金业绩的超额收益检验(单样本t检验) (Application 1: Testing Fund Alpha — One-Sample t-Test)

评估一只基金是否创造了真正的Alpha(超额收益),需要用单样本t检验回答:该基金的平均超额收益是否显著异于零?设 \(H_0: \mu_{\text{超额}} = 0\)(基金无超额收益),利用 stock_price_pre_adjusted.h5 中的基准指数数据和基金净值数据计算超额收益序列,然后进行t检验。如果P值足够小,则有统计证据支持该基金确实具备选股或择时能力。

To evaluate whether a fund has generated true Alpha (excess returns), we use a one-sample t-test to answer: is the fund’s average excess return significantly different from zero? Setting \(H_0: \mu_{\text{excess}} = 0\) (the fund produces no excess returns), we calculate the excess return series using benchmark index data from stock_price_pre_adjusted.h5 and fund NAV data, then conduct the t-test. If the p-value is sufficiently small, there is statistical evidence supporting the fund’s stock-picking or market-timing ability.

7.1.2 应用二:政策事件前后的市场反应比较(配对样本t检验) (Application 2: Comparing Market Reactions Before and After Policy Events — Paired-Sample t-Test)

分析师经常需要比较同一组股票在重大政策出台前后的表现差异。例如,研究”降准”政策对银行股收益率的影响,利用同一批银行股在政策公告前10日和后10日的平均日收益率,进行配对样本t检验。由于两组数据来自同一组股票,天然具有配对结构,配对检验消除了个股差异带来的干扰,提高了检验效力。

Analysts frequently need to compare the performance of the same group of stocks before and after major policy announcements. For example, to study the impact of a “reserve requirement ratio cut” policy on bank stock returns, the average daily returns of the same batch of bank stocks in the 10 days before and 10 days after the policy announcement are compared using a paired-sample t-test. Since both data groups come from the same set of stocks, they naturally have a paired structure, and the paired test eliminates interference from individual stock differences, thereby improving test power.

7.1.3 应用三:价值股与成长股的收益率差异(独立样本t检验) (Application 3: Return Differences Between Value and Growth Stocks — Independent Two-Sample t-Test)

利用 valuation_factors_quarterly_15_years.h5 中的估值因子数据,按市净率(PB)将A股上市公司分为”价值股”(低PB)和”成长股”(高PB)两组,使用独立样本t检验比较两组在后续季度的平均收益率是否存在显著差异。这一检验直接关系到价值投资策略的实证基础——如果t检验拒绝了两组均值相等的原假设,则为价值溢价(Value Premium)提供了统计支持。

Using valuation factor data from valuation_factors_quarterly_15_years.h5, A-share listed companies are divided into “value stocks” (low PB) and “growth stocks” (high PB) based on the price-to-book ratio (PB), and an independent two-sample t-test is used to compare whether the average returns of the two groups differ significantly in subsequent quarters. This test is directly related to the empirical foundation of value investing strategies — if the t-test rejects the null hypothesis of equal means between the two groups, it provides statistical support for the Value Premium.

7.2 单样本t检验 (One-Sample t-Test)

7.2.1 理论背景 (Theoretical Background)

单样本t检验用于比较样本均值与已知总体均值。它解决的核心问题是:基于样本信息,我们能否推断总体均值等于某个特定值?其检验统计量如 式 7.1 所示。

The one-sample t-test is used to compare a sample mean with a known population mean. The core question it addresses is: based on sample information, can we infer that the population mean equals a specific value? The test statistic is shown in 式 7.1.

假设设置:

Hypothesis Setup:

原假设 \(H_0: \mu = \mu_0\) (总体均值等于假设值)

备择假设 \(H_1: \mu \neq \mu_0\) (双侧检验) 或 \(\mu > \mu_0\) / \(\mu < \mu_0\) (单侧检验)

Null hypothesis \(H_0: \mu = \mu_0\) (the population mean equals the hypothesized value)

Alternative hypothesis \(H_1: \mu \neq \mu_0\) (two-sided test) or \(\mu > \mu_0\) / \(\mu < \mu_0\) (one-sided test)

检验统计量:

Test Statistic:

\[ t = \frac{\bar{X} - \mu_0}{s/\sqrt{n}} \tag{7.1}\]

其中:

Where:

\(\bar{X}\) 为样本均值

\(\mu_0\) 为假设的总体均值

\(s\) 为样本标准差

\(n\) 为样本量

\(t\) 服从自由度为 \(n-1\) 的t分布

\(\bar{X}\) is the sample mean

\(\mu_0\) is the hypothesized population mean

\(s\) is the sample standard deviation

\(n\) is the sample size

\(t\) follows a t-distribution with \(n-1\) degrees of freedom

几何解释:为什么 t 分布有”厚尾” (Fat Tails)?

Geometric Interpretation: Why Does the t-Distribution Have “Fat Tails”?

直观地看,t 统计量 \(t = \frac{\bar{X}-\mu}{s/\sqrt{n}}\) 实际上包含了两重随机性:

Intuitively, the t-statistic \(t = \frac{\bar{X}-\mu}{s/\sqrt{n}}\) actually contains two sources of randomness:

分子 \(\bar{X}\) 的随机性(围绕 \(\mu\) 波动)。

分母 \(s\) 的随机性(围绕 \(\sigma\) 波动)。

The randomness of the numerator \(\bar{X}\) (fluctuating around \(\mu\)).

The randomness of the denominator \(s\) (fluctuating around \(\sigma\)).

当 \(n\) 很小时,分母 \(s\) 极其不稳定。偶尔,我们会抽到一个 \(s\) 远小于 \(\sigma\) 的样本(低估波动)。这时,除以一个很小的数,会导致 \(t\) 值爆表(变得极大或极小)。

When \(n\) is small, the denominator \(s\) is extremely unstable. Occasionally, we draw a sample where \(s\) is much smaller than \(\sigma\) (underestimating volatility). In this case, dividing by a very small number causes the \(t\) value to explode (becoming very large or very small).

正是这种”分母偶尔过小”的可能性,导致了 t 分布产生比正态分布更多的极端值(厚尾)。

It is precisely this possibility of the “denominator occasionally being too small” that causes the t-distribution to produce more extreme values (fat tails) than the normal distribution.

几何上,正态分布是一个稳定的钟形。

t 分布是一个”被拍扁”的钟形:中心低,尾部高。这意味着小样本推断必须更加保守(区间更宽),以容纳这种额外的不确定性。

Geometrically, the normal distribution is a stable bell shape.

The t-distribution is a “flattened” bell shape: lower in the center, higher in the tails. This means that small-sample inference must be more conservative (wider intervals) to accommodate this additional uncertainty.

7.2.2 适用场景与优缺点 (Applicable Scenarios and Pros/Cons)

适用场景:

Applicable Scenarios:

样本量较小(\(n < 30\))

总体标准差未知

总体近似服从正态分布(或样本量足够大)

Small sample size (\(n < 30\))

Population standard deviation is unknown

The population approximately follows a normal distribution (or the sample size is sufficiently large)

优点:

Advantages:

适用于小样本情况

不需要知道总体标准差

对正态性假设具有一定的稳健性

Suitable for small sample situations

Does not require knowledge of the population standard deviation

Has a certain degree of robustness to the normality assumption

缺点:

Disadvantages:

要求样本来自正态分布(或近似正态)

对异常值敏感

只能处理单一总体的均值检验

Requires the sample to come from a normal distribution (or approximately normal)

Sensitive to outliers

Can only handle mean tests for a single population

7.2.3 案例:检验银行业净利润率是否达到行业基准 (Case Study: Testing Whether Banking Net Profit Margin Meets the Industry Benchmark)

什么是行业基准的达标检验?

What Is an Industry Benchmark Compliance Test?

银行业是国民经济的”血液”,其盈利能力直接影响金融体系的稳定性。监管机构和行业分析师通常会设定净利润率的行业基准值(如 30%),然后检验实际的行业平均水平是否达到这一基准。这种「总体均值是否等于某个特定值」的问题,正是单样本t检验的标准应用场景。

The banking industry is the “lifeblood” of the national economy, and its profitability directly affects the stability of the financial system. Regulators and industry analysts typically set an industry benchmark for net profit margin (e.g., 30%) and then test whether the actual industry average meets this benchmark. This type of question — “does the population mean equal a specific value?” — is exactly the standard application scenario for a one-sample t-test.

与简单的样本均值比较不同,t检验能够充分考虑样本的变异性和样本量的大小,给出严谨的统计推断结论。下面我们以A股银行业上市公司为例,检验该行业平均净利润率是否达到30%的行业基准,结果如 表 7.1 所示。

Unlike a simple comparison of sample means, the t-test fully accounts for sample variability and sample size, providing rigorous statistical inference conclusions. Below, we use A-share listed banking companies as an example to test whether the industry’s average net profit margin meets the 30% industry benchmark, with results shown in 表 7.1.

银行业净利润率数据已清洗完毕。下面执行单样本t检验,判断银行业净利润率是否显著偏离30%的行业基准水平,并计算置信区间与效应量。

The banking industry net profit margin data has been cleaned. Next, we perform a one-sample t-test to determine whether the banking net profit margin significantly deviates from the 30% industry benchmark, and calculate the confidence interval and effect size.

# ========== 第5步:单样本t检验(与行业基准30%对比) ==========

# ========== Step 5: One-sample t-test (compared with the 30% industry benchmark) ==========

sample_size_n = len(profit_margin_sample_series) # 计算有效样本量

# Calculate the effective sample size

industry_benchmark_value = 30.0 # 设定银行业净利润率行业基准为30%

# Set the banking net profit margin industry benchmark at 30%

t_statistic_value, calculated_p_value = stats.ttest_1samp(profit_margin_sample_series, industry_benchmark_value) # 执行单样本t检验,检验总体均值是否等于30%

# Perform one-sample t-test to check whether the population mean equals 30%

# ========== 第6步:计算95%置信区间 ==========

# ========== Step 6: Calculate the 95% confidence interval ==========

confidence_level_value = 0.95 # 设定置信水平为95%

# Set the confidence level to 95%

degrees_of_freedom_value = sample_size_n - 1 # 自由度 = 样本量 - 1

# Degrees of freedom = sample size - 1

sample_mean_value = np.mean(profit_margin_sample_series) # 计算样本均值

# Calculate the sample mean

sample_standard_deviation = np.std(profit_margin_sample_series, ddof=1) # 计算样本标准差(无偏估计)

# Calculate the sample standard deviation (unbiased estimate)

standard_error_value = sample_standard_deviation / np.sqrt(sample_size_n) # 计算标准误

# Calculate the standard error

t_critical_value = stats.t.ppf((1 + confidence_level_value) / 2, degrees_of_freedom_value) # 查t分布双侧临界值

# Look up the two-sided critical value from the t-distribution

margin_of_error_value = t_critical_value * standard_error_value # 计算误差边际

# Calculate the margin of error

confidence_interval_lower_bound = sample_mean_value - margin_of_error_value # 置信区间下界

# Lower bound of the confidence interval

confidence_interval_upper_bound = sample_mean_value + margin_of_error_value # 置信区间上界

# Upper bound of the confidence intervalt检验与置信区间计算完成。下面输出完整的检验结果和结论。

The t-test and confidence interval calculations are complete. Below we output the full test results and conclusions.

# ========== 第7步:输出检验结果 ==========

# ========== Step 7: Output test results ==========

print('=' * 50) # 打印分隔线

# Print separator line

print('银行业净利润率单样本t检验') # 打印标题

# Print title

print('=' * 50) # 打印分隔线

# Print separator line

print(f'样本量: {sample_size_n}家银行') # 输出样本量

# Output sample size

print(f'平均净利润率: {sample_mean_value:.2f}%') # 输出样本均值

# Output sample mean

print(f'标准差: {sample_standard_deviation:.2f}%') # 输出标准差

# Output standard deviation

print(f'标准误: {standard_error_value:.2f}%') # 输出标准误

# Output standard error

print('\n' + '=' * 50) # 打印分隔线

# Print separator line

print('假设检验') # 打印假设检验标题

# Print hypothesis test title

print('=' * 50) # 打印分隔线

# Print separator line

print(f'原假设 H0: μ = {industry_benchmark_value}% (达到行业基准)') # 输出原假设

# Output null hypothesis

print(f'备择假设 H1: μ ≠ {industry_benchmark_value}% (偏离基准)') # 输出备择假设

# Output alternative hypothesis

print(f'\nt统计量: {t_statistic_value:.4f}') # 输出t统计量

# Output t-statistic

print(f'p值: {calculated_p_value:.6f}') # 输出p值

# Output p-value

print(f'自由度: {degrees_of_freedom_value}') # 输出自由度

# Output degrees of freedom

print('\n' + '=' * 50) # 打印分隔线

# Print separator line

print(f'{confidence_level_value*100:.0f}%置信区间') # 打印置信区间标题

# Print confidence interval title

print('=' * 50) # 打印分隔线

# Print separator line

print(f'[{confidence_interval_lower_bound:.2f}, {confidence_interval_upper_bound:.2f}]%') # 输出置信区间

# Output confidence interval==================================================

银行业净利润率单样本t检验

==================================================

样本量: 43家银行

平均净利润率: 37.80%

标准差: 9.04%

标准误: 1.38%

==================================================

假设检验

==================================================

原假设 H0: μ = 30.0% (达到行业基准)

备择假设 H1: μ ≠ 30.0% (偏离基准)

t统计量: 5.6611

p值: 0.000001

自由度: 42

==================================================

95%置信区间

==================================================

[35.02, 40.58]%上述结果显示,A股共有43家银行类上市公司纳入分析,其平均净利润率为37.80%,标准差为9.04%,标准误为1.38%。单样本t检验的t统计量为5.6611,对应p值极小(p=0.000001),自由度为42。95%置信区间为[35.02, 40.58]%,该区间完全位于30%基准线之上,说明银行业整体净利润率显著高于行业基准。

The results above show that a total of 43 A-share listed banking companies were included in the analysis, with an average net profit margin of 37.80%, a standard deviation of 9.04%, and a standard error of 1.38%. The one-sample t-test yields a t-statistic of 5.6611 with an extremely small p-value (p=0.000001) and 42 degrees of freedom. The 95% confidence interval is [35.02, 40.58]%, which lies entirely above the 30% benchmark, indicating that the overall banking industry net profit margin is significantly higher than the industry benchmark.

检验统计量和置信区间输出完毕。下面输出假设检验结论和Cohen’s d效应量评估。

The test statistic and confidence interval output is complete. Below we output the hypothesis test conclusion and Cohen’s d effect size assessment.

# ========== 第8步:结论与效应量 ==========

# ========== Step 8: Conclusion and effect size ==========

print('\n' + '=' * 50) # 打印分隔线

# Print separator line

print('结论') # 打印结论标题

# Print conclusion title

print('=' * 50) # 打印分隔线

# Print separator line

alpha = 0.05 # 设定显著性水平α=0.05

# Set the significance level α=0.05

if calculated_p_value < alpha: # 若p值小于α

# If p-value is less than α

print(f'在α={alpha}水平下拒绝原假设(p={calculated_p_value:.6f} < {alpha})') # 输出拒绝结论

# Output the rejection conclusion

if sample_mean_value > industry_benchmark_value: # 若样本均值高于基准

# If the sample mean is above the benchmark

print('平均净利润率显著高于行业基准') # 说明方向:高于基准

# Indicate direction: above the benchmark

else: # 若样本均值低于基准

# If the sample mean is below the benchmark

print('平均净利润率显著低于行业基准') # 说明方向:低于基准

# Indicate direction: below the benchmark

else: # 若p值不小于α

# If p-value is not less than α

print(f'在α={alpha}水平下不能拒绝原假设(p={calculated_p_value:.6f} >= {alpha})') # 输出不拒绝结论

# Output the failure-to-reject conclusion

print('没有充分证据表明净利润率偏离行业基准') # 说明无统计学差异

# Indicate insufficient evidence for a deviation from the benchmark

cohens_d_effect_size = (sample_mean_value - industry_benchmark_value) / sample_standard_deviation # 计算Cohen's d效应量

# Calculate Cohen's d effect size

print(f'\n效应量(Cohen\'s d): {cohens_d_effect_size:.3f}') # 输出效应量数值

# Output the effect size value

if abs(cohens_d_effect_size) < 0.2: # 若|d|<0.2

# If |d| < 0.2

effect_size_description = '小' # 效应量为小

# Effect size is small

elif abs(cohens_d_effect_size) < 0.5: # 若0.2≤|d|<0.5

# If 0.2 ≤ |d| < 0.5

effect_size_description = '中等' # 效应量为中等

# Effect size is medium

else: # 若|d|≥0.5

# If |d| ≥ 0.5

effect_size_description = '大' # 效应量为大

# Effect size is large

print(f'解释: 这是一个{effect_size_description}效应量') # 输出效应量解释

# Output effect size interpretation

print(f'\n数据来源: 本地financial_statement.h5') # 输出数据来源说明

# Output data source note

==================================================

结论

==================================================

在α=0.05水平下拒绝原假设(p=0.000001 < 0.05)

平均净利润率显著高于行业基准

效应量(Cohen's d): 0.863

解释: 这是一个大效应量

数据来源: 本地financial_statement.h5检验结论明确:在α=0.05的显著性水平下拒绝原假设(p=0.000001远小于0.05),表明银行业平均净利润率显著高于30%的行业基准。效应量Cohen’s d=0.863,属于大效应量(|d|≥0.5),说明银行业净利润率偏离基准不仅在统计上显著,在实际经济意义上也具有重要意义——银行业整体盈利能力远超30%的参考水平。

The test conclusion is clear: at the α=0.05 significance level, the null hypothesis is rejected (p=0.000001, far less than 0.05), indicating that the average banking net profit margin is significantly higher than the 30% industry benchmark. The effect size Cohen’s d=0.863 is classified as a large effect (|d|≥0.5), demonstrating that the deviation of banking net profit margins from the benchmark is not only statistically significant but also of substantial practical economic importance — the overall profitability of the banking industry far exceeds the 30% reference level.

关于p值的常见误解

Common Misconceptions About the p-Value

误解1:“p值越小,原假设越不可能为真”

Misconception 1: “The smaller the p-value, the less likely the null hypothesis is true”

正确理解:p值是在原假设为真的条件下,观察到当前样本(或更极端)的概率。它不是原假设为真的概率。

Correct understanding: The p-value is the probability of observing the current sample (or something more extreme) given that the null hypothesis is true. It is not the probability that the null hypothesis is true.

误解2:“p < 0.05意味着结果有实际意义”

Misconception 2: “p < 0.05 means the result is practically significant”

正确理解:统计显著性不等于实际显著性。在大样本情况下,微小的差异也可能达到统计显著,但可能没有实际意义。因此,报告效应量(effect size)与p值同样重要。

Correct understanding: Statistical significance does not equal practical significance. With large samples, even tiny differences can achieve statistical significance but may have no practical importance. Therefore, reporting the effect size is just as important as reporting the p-value.

误解3:“p > 0.05证明原假设为真”

Misconception 3: “p > 0.05 proves the null hypothesis is true”

正确理解:未能拒绝原假设并不意味着证明原假设为真,只能说明证据不足以拒绝它。

Correct understanding: Failing to reject the null hypothesis does not mean proving it is true; it only indicates that there is insufficient evidence to reject it. ## 两独立样本t检验 (Two Independent Samples t-Test) {#sec-two-sample-test}

7.2.4 理论背景 (Theoretical Background)

两独立样本t检验用于比较两个独立总体的均值是否存在显著差异。这是商业研究中最常用的方法之一,例如比较两个地区的平均消费、两种营销策略的效果等。其检验统计量如 式 7.2 所示。

The two independent samples t-test is used to determine whether there is a statistically significant difference between the means of two independent populations. It is one of the most commonly used methods in business research, such as comparing average consumption between two regions or the effectiveness of two marketing strategies. The test statistic is shown in 式 7.2.

假设设置: - 原假设 \(H_0: \mu_1 - \mu_2 = 0\) (两个总体均值相等) - 备择假设 \(H_1: \mu_1 - \mu_2 \neq 0\) (双侧检验)

Hypothesis Setup: - Null hypothesis \(H_0: \mu_1 - \mu_2 = 0\) (the two population means are equal) - Alternative hypothesis \(H_1: \mu_1 - \mu_2 \neq 0\) (two-sided test)

检验统计量:

Test Statistic:

\[ t = \frac{(\bar{X}_1 - \bar{X}_2) - (\mu_1 - \mu_2)}{SE_{\bar{X}_1 - \bar{X}_2}} \tag{7.2}\]

其中标准误的计算取决于方差假设。

where the calculation of the standard error depends on the variance assumption.

7.2.5 方差齐性检验 (Test for Homogeneity of Variance)

在进行两样本t检验之前,需要检验两个总体方差是否相等。

Before conducting a two-sample t-test, it is necessary to test whether the variances of the two populations are equal.

为什么需要检验方差齐性?

Why Is Testing for Homogeneity of Variance Necessary?

方差齐性(等方差)假设影响t检验的计算方式。如果两个总体的方差相等,我们使用”合并方差”来估计标准误,这会提高统计功效。如果方差不相等,使用合并方差会导致第一类错误率偏离名义水平。

The assumption of homogeneity of variance (equal variances) affects how the t-test is calculated. If the variances of the two populations are equal, we use a “pooled variance” to estimate the standard error, which increases statistical power. If the variances are unequal, using the pooled variance will cause the Type I error rate to deviate from its nominal level.

Levene检验对正态性假设相对稳健,是检验方差齐性的常用方法。 表 9.8 展示了银行业与电子行业的方差齐性检验结果。

Levene’s test is relatively robust to violations of the normality assumption and is a commonly used method for testing homogeneity of variance. 表 9.8 presents the results of a homogeneity of variance test between the banking and electronics industries.

Levene检验: - 原假设 \(H_0: \sigma_1^2 = \sigma_2^2\) (方差相等) - 检验统计量基于各组数据与其组均值的绝对偏差

Levene’s Test: - Null hypothesis \(H_0: \sigma_1^2 = \sigma_2^2\) (variances are equal) - The test statistic is based on the absolute deviations of each group’s data from its group mean

# ========== 导入所需库 ==========

# ========== Import Required Libraries ==========

from scipy.stats import levene, bartlett # 导入Levene检验和Bartlett检验函数

# Import Levene's test and Bartlett's test functions

import numpy as np # 导入numpy库,用于数值计算

# Import numpy for numerical computation

import pandas as pd # 导入pandas库,用于数据处理

# Import pandas for data manipulation

import platform # 导入platform库,用于判断操作系统

# Import platform to detect the operating system

# ========== 第1步:加载本地财务数据 ==========

# ========== Step 1: Load Local Financial Data ==========

if platform.system() == 'Windows': # 判断当前操作系统是否为Windows

# Check if the current operating system is Windows

data_path = 'C:/qiufei/data/stock' # Windows平台下的数据路径

# Data path for the Windows platform

else: # 否则为Linux平台

# Otherwise, it is the Linux platform

data_path = '/home/ubuntu/r2_data_mount/qiufei/data/stock' # Linux平台下的数据路径

# Data path for the Linux platform

stock_basic_info_dataframe = pd.read_hdf(f'{data_path}/stock_basic_data.h5') # 读取上市公司基本信息

# Read basic information of listed companies

financial_statement_dataframe = pd.read_hdf(f'{data_path}/financial_statement.h5') # 读取财务报表数据

# Read financial statement data

# ========== 第2步:筛选最新年报并合并行业信息 ==========

# ========== Step 2: Filter Latest Annual Reports and Merge Industry Info ==========

financial_statement_dataframe = financial_statement_dataframe[financial_statement_dataframe['quarter'].str.endswith('q4')] # 仅保留第四季度(年报)数据

# Keep only Q4 (annual report) data

financial_statement_dataframe = financial_statement_dataframe.sort_values('quarter', ascending=False) # 按季度降序排列

# Sort by quarter in descending order

financial_statement_dataframe = financial_statement_dataframe.drop_duplicates(subset='order_book_id', keep='first') # 每家公司保留最新一期

# Keep only the latest record for each company

merged_financial_dataframe = financial_statement_dataframe.merge(stock_basic_info_dataframe[['order_book_id', 'industry_name']], on='order_book_id', how='left') # 左连接合并行业名称

# Left join to merge industry names

# ========== 第3步:计算净利润率并过滤异常值 ==========

# ========== Step 3: Calculate Net Profit Margin and Filter Outliers ==========

merged_financial_dataframe['profit_margin'] = (merged_financial_dataframe['net_profit'] / merged_financial_dataframe['revenue']) * 100 # 计算净利润率(百分比)

# Calculate net profit margin (percentage)

merged_financial_dataframe = merged_financial_dataframe[(merged_financial_dataframe['profit_margin'].notna()) & (merged_financial_dataframe['profit_margin'] > -50) & (merged_financial_dataframe['profit_margin'] < 50)] # 过滤缺失值和极端异常值

# Filter out missing values and extreme outliers

# ========== 第4步:提取两个行业的净利润率数据 ==========

# ========== Step 4: Extract Net Profit Margin Data for Two Industries ==========

bank_industry_profit_margin_array = merged_financial_dataframe[merged_financial_dataframe['industry_name'] == '货币金融服务']['profit_margin'].values # 提取银行业净利润率

# Extract banking industry net profit margins

electronics_industry_profit_margin_array = merged_financial_dataframe[merged_financial_dataframe['industry_name'] == '计算机、通信和其他电子设备制造业']['profit_margin'].values # 提取电子行业净利润率

# Extract electronics industry net profit margins银行业与电子行业净利润率数据提取完成。下面分别执行Levene检验和Bartlett检验评估两组数据的方差齐性,并输出统计结果。

The net profit margin data for the banking and electronics industries have been extracted. Below, we perform Levene’s test and Bartlett’s test to assess variance homogeneity between the two groups and output the statistical results.

# ========== 第5步:执行方差齐性检验 ==========

# ========== Step 5: Perform Homogeneity of Variance Tests ==========

levene_statistic_value, levene_p_value = levene(bank_industry_profit_margin_array, electronics_industry_profit_margin_array) # Levene检验(基于中位数,更稳健)

# Levene's test (median-based, more robust)

bartlett_statistic_value, bartlett_p_value = bartlett(bank_industry_profit_margin_array, electronics_industry_profit_margin_array) # Bartlett检验(假设正态,功效更高)

# Bartlett's test (assumes normality, higher power)

# ========== 第6步:输出检验结果 ==========

# ========== Step 6: Output Test Results ==========

print('=' * 50) # 打印分隔线

# Print separator line

print('方差齐性检验: 银行业 vs 电子行业净利润率') # 打印标题

# Print title

print('=' * 50) # 打印分隔线

# Print separator line

print(f'\nLevene检验:') # 打印Levene检验标签

# Print Levene's test label

print(f' W统计量: {levene_statistic_value:.4f}') # 输出Levene检验统计量

# Output the Levene test statistic

print(f' p值: {levene_p_value:.4f}') # 输出Levene检验p值

# Output the Levene test p-value

print(f' 结论: {"方差相等" if levene_p_value > 0.05 else "方差不相等"}') # 根据p值输出结论

# Output conclusion based on the p-value

print(f'\nBartlett检验:') # 打印Bartlett检验标签

# Print Bartlett's test label

print(f' 统计量: {bartlett_statistic_value:.4f}') # 输出Bartlett检验统计量

# Output the Bartlett test statistic

print(f' p值: {bartlett_p_value:.4f}') # 输出Bartlett检验p值

# Output the Bartlett test p-value

print(f' 结论: {"方差相等" if bartlett_p_value > 0.05 else "方差不相等"}') # 根据p值输出结论

# Output conclusion based on the p-value

# ========== 第7步:输出描述性统计 ==========

# ========== Step 7: Output Descriptive Statistics ==========

print('\n' + '=' * 50) # 打印分隔线

# Print separator line

print('描述性统计') # 打印描述性统计标题

# Print descriptive statistics title

print('=' * 50) # 打印分隔线

# Print separator line

print(f'银行业: 样本量={len(bank_industry_profit_margin_array)}, 均值={np.mean(bank_industry_profit_margin_array):.2f}%, 标准差={np.std(bank_industry_profit_margin_array, ddof=1):.2f}%') # 输出银行业描述统计

# Output descriptive statistics for the banking industry

print(f'电子业: 样本量={len(electronics_industry_profit_margin_array)}, 均值={np.mean(electronics_industry_profit_margin_array):.2f}%, 标准差={np.std(electronics_industry_profit_margin_array, ddof=1):.2f}%') # 输出电子业描述统计

# Output descriptive statistics for the electronics industry

print(f'\n数据来源: 本地financial_statement.h5') # 输出数据来源说明

# Output data source description==================================================

方差齐性检验: 银行业 vs 电子行业净利润率

==================================================

Levene检验:

W统计量: 3.9804

p值: 0.0465

结论: 方差不相等

Bartlett检验:

统计量: 18.4749

p值: 0.0000

结论: 方差不相等

==================================================

描述性统计

==================================================

银行业: 样本量=40, 均值=36.57%, 标准差=8.08%

电子业: 样本量=606, 均值=3.39%, 标准差=14.63%

数据来源: 本地financial_statement.h5方差齐性检验结果显示:Levene检验的W统计量为3.9804,p值为0.0465(<0.05),判定方差不相等;Bartlett检验的统计量为18.4749,p值趋近于0(0.0000),同样判定方差不相等。两种方法结论一致,均表明银行业与电子行业的净利润率方差存在显著差异。从描述性统计来看,银行业共40家公司,均值为36.57%,标准差为8.08%;电子行业共606家公司,均值仅为3.39%,标准差为14.63%。两个行业在样本量、均值和标准差上均存在巨大差异,因此后续进行两样本t检验时应选择Welch(异方差)t检验。

The results of the homogeneity of variance tests show: Levene’s test yields a W statistic of 3.9804 with a p-value of 0.0465 (< 0.05), concluding that the variances are unequal; Bartlett’s test gives a statistic of 18.4749 with a p-value approaching 0 (0.0000), also concluding that the variances are unequal. Both methods reach the same conclusion, indicating that the net profit margin variances of the banking and electronics industries differ significantly. Descriptive statistics reveal that the banking industry comprises 40 companies with a mean of 36.57% and standard deviation of 8.08%, while the electronics industry comprises 606 companies with a mean of only 3.39% and standard deviation of 14.63%. Given the substantial differences in sample size, mean, and standard deviation between the two industries, the Welch (unequal variance) t-test should be used in subsequent two-sample hypothesis testing.

7.2.6 两种t检验类型 (Two Types of t-Tests)

7.2.6.1 1. 等方差t检验(Student’s t-test) (Equal Variance t-Test)

当方差相等时,使用合并标准误:

When variances are equal, the pooled standard error is used:

\[ SE_{pooled} = \sqrt{\frac{(n_1-1)s_1^2 + (n_2-1)s_2^2}{n_1+n_2-2} \left(\frac{1}{n_1} + \frac{1}{n_2}\right)} \]

自由度:\(df = n_1 + n_2 - 2\)

Degrees of freedom: \(df = n_1 + n_2 - 2\)

7.2.6.2 2. 异方差t检验(Welch’s t-test) (Unequal Variance t-Test)

当方差不相等时,使用Welch校正:

When variances are unequal, Welch’s correction is used:

\[ SE_{Welch} = \sqrt{\frac{s_1^2}{n_1} + \frac{s_2^2}{n_2}} \]

自由度(Welch-Satterthwaite公式):

Degrees of freedom (Welch-Satterthwaite formula):

\[ df_{Welch} = \frac{\left(\frac{s_1^2}{n_1} + \frac{s_2^2}{n_2}\right)^2}{\frac{(s_1^2/n_1)^2}{n_1-1} + \frac{(s_2^2/n_2)^2}{n_2-1}} \]

7.3 从理论到实践:苦活累活 (From Theory to Practice: The “Dirty Work”)

在商业数据中,t 检验最容易违反的不是正态性(由 CLT 拯救),而是独立性 (Independence)。

In business data, the most commonly violated assumption of the t-test is not normality (which is rescued by the CLT), but independence.

7.3.1 1. 独立性假设的破灭 (The Breakdown of the Independence Assumption)

集群效应 (Clustering):如果你分析的是”上海银行”和”宁波银行”的股票,它们都深受”长三角经济”这一共同因素影响。它们不是独立的。

序列相关 (Serial Correlation):今天的股价与昨天的股价高度相关。

Clustering: If you are analyzing stocks such as “Bank of Shanghai” and “Bank of Ningbo,” they are both deeply influenced by the common factor of the “Yangtze River Delta economy.” They are not independent.

Serial Correlation: Today’s stock price is highly correlated with yesterday’s stock price.

如果你忽视相关性,你的样本有效信息量 (Effective Sample Size) 远小于名义样本量 \(n\)。这会导致标准误被低估,t 值虚高,从而产生大量的虚假显著性。

If you ignore correlation, your effective sample size is much smaller than the nominal sample size \(n\). This will cause the standard error to be underestimated, the t-value to be artificially inflated, and consequently produce a large number of spurious significant results.

7.3.2 2. “N=30” 的神话 (The Myth of “N=30”)

许多教科书声称”当 \(N>30\) 时,可以用正态分布代替 t 分布”。

Many textbooks claim that “when \(N>30\), the normal distribution can be used instead of the t-distribution.”

历史考古:这个 “30” 是早期计算能力不足时的妥协(查表方便)。

现代观点:在计算机时代,无论样本量多大,始终使用 t 分布(或 Welch’s t-test)是更安全的选择。特别是当数据分布严重偏态(如财富、赔付金额)时,即使 N=100,中心极限定理的收敛速度也可能不够快。

Historical Archaeology: The “30” was a compromise made in the era of limited computational power (for the convenience of looking up tables).

Modern Perspective: In the age of computers, it is always safer to use the t-distribution (or Welch’s t-test) regardless of sample size. Especially when the data distribution is heavily skewed (e.g., wealth, claim amounts), even with N=100, the convergence speed of the Central Limit Theorem may still be insufficient.

建议:永远默认使用

ttest_ind(equal_var=False),即 Welch’s t-test。它在方差不等和样本量不等多重打击下依然稳健。

Recommendation: Always default to using

ttest_ind(equal_var=False), i.e., Welch’s t-test. It remains robust under the combined impact of unequal variances and unequal sample sizes.

7.3.3 案例:上海与广东上市公司日收益率比较 (Case Study: Comparison of Daily Returns Between Shanghai and Guangdong Listed Companies)

什么是跨区域收益率差异分析?

What Is Cross-Regional Return Differential Analysis?

中国资本市场存在显著的区域异质性:不同省份的上市公司在行业结构、治理水平和市场环境上存在差异,这些差异可能导致股票收益率的系统性不同。例如,上海作为金融中心,其上市公司群体的收益率特征是否与广东的制造业强省有显著差异?这对于建立区分区域风险因子的量化模型至关重要。

China’s capital market exhibits significant regional heterogeneity: listed companies across different provinces differ in industry structure, governance quality, and market environment, which may lead to systematic differences in stock returns. For example, does the return profile of listed companies in Shanghai, as a financial center, differ significantly from that of Guangdong, a manufacturing powerhouse? This is crucial for building quantitative models that distinguish regional risk factors.

独立双样本t检验是比较两个独立群体均值差异的标准方法。它能够在控制样本波动和样本量差异的前提下,精确地判断两个地区的平均收益率是否存在统计上的显著差异。下面使用本地股票数据比较两个地区上市公司的日收益率差异,结果如 表 7.3 所示。

The independent two-sample t-test is the standard method for comparing mean differences between two independent groups. It can precisely determine whether the average returns of two regions are statistically significantly different, while controlling for sample variability and differences in sample sizes. Below, we use local stock data to compare the daily return differences of listed companies between the two regions, with results shown in 表 7.3.

# ========== 导入所需库 ==========

# ========== Import Required Libraries ==========

import pandas as pd # 导入pandas库,用于数据处理

# Import pandas for data manipulation

import numpy as np # 导入numpy库,用于数值计算

# Import numpy for numerical computation

from scipy import stats # 导入scipy统计模块,用于假设检验

# Import scipy stats module for hypothesis testing

import platform # 导入platform库,用于判断操作系统

# Import platform to detect the operating system

# ========== 第1步:加载本地股价数据 ==========

# ========== Step 1: Load Local Stock Price Data ==========

if platform.system() == 'Windows': # 判断当前操作系统是否为Windows

# Check if the current operating system is Windows

data_path = 'C:/qiufei/data/stock' # Windows平台下的数据路径

# Data path for the Windows platform

else: # 否则为Linux平台

# Otherwise, it is the Linux platform

data_path = '/home/ubuntu/r2_data_mount/qiufei/data/stock' # Linux平台下的数据路径

# Data path for the Linux platform

stock_basic_info_dataframe = pd.read_hdf(f'{data_path}/stock_basic_data.h5') # 读取上市公司基本信息

# Read basic information of listed companies

stock_price_dataframe = pd.read_hdf(f'{data_path}/stock_price_pre_adjusted.h5') # 读取前复权日度行情数据

# Read pre-adjusted daily stock price data

stock_price_dataframe = stock_price_dataframe.reset_index() # 重置索引,将多级索引转为普通列

# Reset index, converting multi-level index to regular columns

# ========== 第2步:筛选2023年数据并计算日收益率 ==========

# ========== Step 2: Filter 2023 Data and Calculate Daily Returns ==========

stock_price_2023_dataframe = stock_price_dataframe[(stock_price_dataframe['date'] >= '2023-01-01') & # 按日期范围筛选2023年数据

(stock_price_dataframe['date'] <= '2023-12-31')].copy() # 筛选2023年全年数据

# Filter stock price data for the full year of 2023

stock_price_2023_dataframe = stock_price_2023_dataframe.sort_values(['order_book_id', 'date']) # 按股票代码和日期排序

# Sort by stock code and date

stock_price_2023_dataframe['return'] = stock_price_2023_dataframe.groupby('order_book_id')['close'].pct_change() * 100 # 按个股分组计算日百分比收益率

# Calculate daily percentage returns grouped by individual stock

# ========== 第3步:合并地区信息并提取两地区收益率 ==========

# ========== Step 3: Merge Regional Info and Extract Returns for Two Regions ==========

merged_price_area_dataframe = stock_price_2023_dataframe.merge(stock_basic_info_dataframe[['order_book_id', 'province']], on='order_book_id', how='left') # 左连接合并省份信息

# Left join to merge province information

shanghai_returns_array = merged_price_area_dataframe[merged_price_area_dataframe['province'] == '上海市']['return'].dropna().values # 提取上海市上市公司日收益率

# Extract daily returns of listed companies in Shanghai

guangdong_returns_array = merged_price_area_dataframe[merged_price_area_dataframe['province'] == '广东省']['return'].dropna().values # 提取广东省上市公司日收益率

# Extract daily returns of listed companies in Guangdong数据准备完毕,上海和广东两地上市公司2023年全年的日收益率已提取。下面执行Welch’s t检验,计算均值差的95%置信区间和Hedges’ g效应量,并输出完整的检验报告。

Data preparation is complete. Daily returns for the full year of 2023 have been extracted for listed companies in both Shanghai and Guangdong. Below, we perform Welch’s t-test, calculate the 95% confidence interval for the mean difference and Hedges’ g effect size, and output a complete test report.

# ========== 第4步:执行Welch's t检验(不假设等方差) ==========

# ========== Step 4: Perform Welch's t-Test (No Equal Variance Assumption) ==========

welch_t_statistic, welch_p_value = stats.ttest_ind(shanghai_returns_array, guangdong_returns_array, equal_var=False) # Welch's t检验(异方差稳健)

# Welch's t-test (robust to unequal variances)

shanghai_sample_size, guangdong_sample_size = len(shanghai_returns_array), len(guangdong_returns_array) # 获取两地区样本量

# Get sample sizes for the two regions

# ========== 第5步:计算均值差的95%置信区间 ==========

# ========== Step 5: Calculate the 95% Confidence Interval for the Mean Difference ==========

mean_difference_value = np.mean(shanghai_returns_array) - np.mean(guangdong_returns_array) # 计算两组均值之差

# Calculate the difference between the two group means

standard_error_difference = np.sqrt(np.var(shanghai_returns_array, ddof=1)/shanghai_sample_size + np.var(guangdong_returns_array, ddof=1)/guangdong_sample_size) # 计算均值差的标准误(Welch公式)

# Calculate the standard error of the mean difference (Welch formula)

welch_degrees_of_freedom = (standard_error_difference**4) / ((np.var(shanghai_returns_array, ddof=1)**2)/(shanghai_sample_size**2*(shanghai_sample_size-1)) + # 计算Welch-Satterthwaite自由度(分子为标准误的四次方)

(np.var(guangdong_returns_array, ddof=1)**2)/(guangdong_sample_size**2*(guangdong_sample_size-1))) # Welch-Satterthwaite近似自由度

# Calculate Welch-Satterthwaite approximate degrees of freedom

t_critical_value = stats.t.ppf(0.975, welch_degrees_of_freedom) # 查t分布双侧97.5%分位数

# Look up the 97.5th percentile of the t-distribution (two-sided)

confidence_interval_lower_bound = mean_difference_value - t_critical_value * standard_error_difference # 置信区间下界

# Lower bound of the confidence interval

confidence_interval_upper_bound = mean_difference_value + t_critical_value * standard_error_difference # 置信区间上界

# Upper bound of the confidence interval

# ========== 第6步:计算效应量(Hedges' g) ==========

# ========== Step 6: Calculate Effect Size (Hedges' g) ==========

pooled_standard_deviation = np.sqrt(((shanghai_sample_size-1)*np.var(shanghai_returns_array, ddof=1) + (guangdong_sample_size-1)*np.var(guangdong_returns_array, ddof=1)) / (shanghai_sample_size+guangdong_sample_size-2)) # 计算合并标准差

# Calculate pooled standard deviation

hedges_g_effect_size = mean_difference_value / pooled_standard_deviation # 计算Hedges' g效应量(对小样本有偏差校正)

# Calculate Hedges' g effect size (with bias correction for small samples)Welch’s t检验、置信区间和效应量计算完毕。下面输出完整的检验报告。

The Welch’s t-test, confidence interval, and effect size calculations are complete. Below, we output the full test report.

# ========== 第7步:输出描述性统计 ==========

# ========== Step 7: Output Descriptive Statistics ==========

print('=' * 60) # 打印分隔线

# Print separator line

print('上海 vs 广东公司日收益率比较 (Welch\'s t-test)') # 打印标题

# Print title

print('=' * 60) # 打印分隔线

# Print separator line

print('\n描述性统计:') # 打印描述性统计标签

# Print descriptive statistics label

print('-' * 60) # 打印分隔线

# Print separator line

descriptive_statistics_dataframe = pd.DataFrame({ # 构建描述性统计汇总表

# Construct descriptive statistics summary table

'地区': ['上海市', '广东省'], # 地区列

# Region column

'观测数': [shanghai_sample_size, guangdong_sample_size], # 样本量列

# Number of observations column

'均值(%)': [np.mean(shanghai_returns_array), np.mean(guangdong_returns_array)], # 均值列

# Mean column

'标准差(%)': [np.std(shanghai_returns_array, ddof=1), np.std(guangdong_returns_array, ddof=1)], # 标准差列

# Standard deviation column

'标准误': [np.std(shanghai_returns_array, ddof=1)/np.sqrt(shanghai_sample_size), np.std(guangdong_returns_array, ddof=1)/np.sqrt(guangdong_sample_size)] # 标准误列

# Standard error column

})

print(descriptive_statistics_dataframe.to_string(index=False)) # 输出描述性统计表(不显示索引)

# Output descriptive statistics table (without index)

# ========== 第8步:输出假设检验结果 ==========

# ========== Step 8: Output Hypothesis Test Results ==========

print('\n' + '=' * 60) # 打印分隔线

# Print separator line

print('假设检验结果') # 打印假设检验标题

# Print hypothesis test results title

print('=' * 60) # 打印分隔线

# Print separator line

print(f'原假设 H0: μ_上海 - μ_广东 = 0') # 输出原假设

# Output the null hypothesis

print(f'备择假设 H1: μ_上海 - μ_广东 ≠ 0') # 输出备择假设

# Output the alternative hypothesis

print(f'\nt统计量: {welch_t_statistic:.4f}') # 输出Welch t统计量

# Output the Welch t-statistic

print(f'自由度: {welch_degrees_of_freedom:.2f}') # 输出Welch-Satterthwaite近似自由度

# Output Welch-Satterthwaite approximate degrees of freedom

print(f'p值: {welch_p_value:.8f}') # 输出p值

# Output the p-value============================================================

上海 vs 广东公司日收益率比较 (Welch's t-test)

============================================================

描述性统计:

------------------------------------------------------------

地区 观测数 均值(%) 标准差(%) 标准误

上海市 100789 0.023507 2.532256 0.007976

广东省 197829 0.035093 2.705236 0.006082

============================================================

假设检验结果

============================================================

原假设 H0: μ_上海 - μ_广东 = 0

备择假设 H1: μ_上海 - μ_广东 ≠ 0

t统计量: -1.1551

自由度: 215032.50

p值: 0.24806283Welch双样本t检验的描述性统计显示:上海市上市公司共100,789个交易日观测,日均收益率均值为0.0235%,标准差为2.5323%;广东省上市公司共197,829个观测,日均收益率均值为0.0351%,标准差为2.7052%。Welch t统计量为-1.1551,近似自由度为215,032.50,p值为0.24806283,远大于0.05的显著性水平。

The descriptive statistics from the Welch two-sample t-test show: Shanghai listed companies have 100,789 trading-day observations with a mean daily return of 0.0235% and standard deviation of 2.5323%; Guangdong listed companies have 197,829 observations with a mean daily return of 0.0351% and standard deviation of 2.7052%. The Welch t-statistic is -1.1551, the approximate degrees of freedom is 215,032.50, and the p-value is 0.24806283, far exceeding the 0.05 significance level.

假设检验结果已输出。下面输出均值差置信区间、效应量分析与最终结论。

The hypothesis test results have been output. Below, we present the confidence interval for the mean difference, effect size analysis, and the final conclusion.

# ========== 第9步:输出均值差置信区间与效应量 ==========

# ========== Step 9: Output Mean Difference Confidence Interval and Effect Size ==========

print('\n' + '=' * 60) # 打印分隔线

# Print separator line

print('均值差与95%置信区间') # 打印置信区间标题

# Print confidence interval title

print('=' * 60) # 打印分隔线

# Print separator line

print(f'均值差: {mean_difference_value:.4f}%') # 输出均值差

# Output mean difference

print(f'95% CI: [{confidence_interval_lower_bound:.4f}, {confidence_interval_upper_bound:.4f}]%') # 输出95%置信区间

# Output 95% confidence interval

print('\n' + '=' * 60) # 打印分隔线

# Print separator line

print('效应量') # 打印效应量标题

# Print effect size title

print('=' * 60) # 打印分隔线

# Print separator line

print(f'Hedges\' g: {hedges_g_effect_size:.4f}') # 输出Hedges' g效应量

# Output Hedges' g effect size

if abs(hedges_g_effect_size) < 0.2: # 若|g|<0.2

# If |g| < 0.2

effect_size_description = '小' # 效应量为小

# Effect size is small

elif abs(hedges_g_effect_size) < 0.5: # 若0.2≤|g|<0.5

# If 0.2 ≤ |g| < 0.5

effect_size_description = '中等' # 效应量为中等

# Effect size is medium

elif abs(hedges_g_effect_size) < 0.8: # 若0.5≤|g|<0.8

# If 0.5 ≤ |g| < 0.8

effect_size_description = '大' # 效应量为大

# Effect size is large

else: # 若|g|≥0.8

# If |g| ≥ 0.8

effect_size_description = '非常大' # 效应量为非常大

# Effect size is very large

print(f'解释: 这是一个{effect_size_description}效应量') # 输出效应量解释

# Output effect size interpretation

============================================================

均值差与95%置信区间

============================================================

均值差: -0.0116%

95% CI: [-0.0312, 0.0081]%

============================================================

效应量

============================================================

Hedges' g: -0.0044

解释: 这是一个小效应量均值差为-0.0116%,95%置信区间为[-0.0312, 0.0081]%,该区间包含0,与p值不显著的结论一致。效应量Hedges’ g=-0.0044,属于极小效应量(|g|<0.2),说明上海和广东两地上市公司日收益率之间的差异在实际意义上可以忽略不计。

The mean difference is -0.0116%, with a 95% confidence interval of [-0.0312, 0.0081]%. This interval contains 0, consistent with the non-significant p-value conclusion. The effect size Hedges’ g = -0.0044, which is an extremely small effect size (|g| < 0.2), indicating that the difference in daily returns between Shanghai and Guangdong listed companies is practically negligible.

均值差置信区间与效应量分析已输出。下面输出最终统计结论。

The confidence interval for the mean difference and the effect size analysis have been output. Below, we present the final statistical conclusion.

# ========== 第10步:输出结论 ==========

# ========== Step 10: Output Conclusion ==========

print('\n' + '=' * 60) # 打印分隔线

# Print separator line

print('结论') # 打印结论标题

# Print conclusion title

print('=' * 60) # 打印分隔线

# Print separator line

alpha = 0.05 # 设定显著性水平α=0.05

# Set significance level α = 0.05

if welch_p_value < alpha: # 若p值小于α

# If the p-value is less than α

print(f'在α={alpha}水平下拒绝原假设(p={welch_p_value:.8f} < {alpha})') # 输出拒绝结论

# Output rejection conclusion

print('上海与广东上市公司的日收益率存在显著差异') # 说明存在显著差异

# State that a significant difference exists

else: # 若p值不小于α

# If the p-value is not less than α

print(f'在α={alpha}水平下不能拒绝原假设(p={welch_p_value:.8f} >= {alpha})') # 输出不拒绝结论

# Output failure-to-reject conclusion

print('没有充分证据表明两地区收益率存在差异') # 说明无显著差异

# State that there is insufficient evidence of a difference

print(f'\n数据来源: 本地stock_price_pre_adjusted.h5') # 输出数据来源说明

# Output data source description

============================================================

结论

============================================================

在α=0.05水平下不能拒绝原假设(p=0.24806283 >= 0.05)

没有充分证据表明两地区收益率存在差异

数据来源: 本地stock_price_pre_adjusted.h5最终结论:在α=0.05水平下不能拒绝原假设(p=0.24806283≥0.05),没有充分的统计证据表明上海与广东上市公司的日收益率存在显著差异。结合极小的效应量(Hedges’ g=-0.0044)和包含0的置信区间,可以认为A股不同地区的上市公司在日收益率水平上并无系统性差异,这与有效市场假说的预期一致——如果市场是有效的,地理位置这一因素不应造成系统性的收益率差异。

Final conclusion: At the α = 0.05 level, we fail to reject the null hypothesis (p = 0.24806283 ≥ 0.05). There is insufficient statistical evidence to suggest a significant difference in daily returns between Shanghai and Guangdong listed companies. Combined with the extremely small effect size (Hedges’ g = -0.0044) and the confidence interval containing 0, we can conclude that listed companies in different regions of China’s A-share market do not exhibit systematic differences in daily return levels. This is consistent with the prediction of the Efficient Market Hypothesis — if the market is efficient, geographic location should not cause systematic return differentials. ## 配对样本t检验 (Paired Sample t-Test) {#sec-paired-test}

7.3.4 理论背景 (Theoretical Background)

配对样本t检验用于比较相关或匹配的两组数据的均值差异。与前述独立样本t检验不同,配对设计控制了个体间的变异性,从而提高统计功效。其检验统计量如 式 7.3 所示。

The paired sample t-test is used to compare the mean difference between two related or matched groups of data. Unlike the independent sample t-test discussed earlier, the paired design controls for between-subject variability, thereby increasing statistical power. The test statistic is shown in 式 7.3.

配对设计的典型场景:

- 前后对比:同一对象在干预前后的测量

- 匹配设计:根据某些特征配对的两个不同对象

- 重复测量:同一对象在不同条件下的测量

- 区块设计:同一区块内的两个处理比较

Typical Scenarios for Paired Design:

- Before-and-After Comparison: Measurements of the same subject before and after an intervention

- Matched Design: Two different subjects matched based on certain characteristics

- Repeated Measures: Measurements of the same subject under different conditions

- Block Design: Comparison of two treatments within the same block

检验统计量:

Test Statistic:

\[ t = \frac{\bar{d} - \mu_d}{s_d/\sqrt{n}} \tag{7.3}\]

其中:

- \(\bar{d}\) 为差值的均值(\(d_i = X_{1i} - X_{2i}\))

- \(\mu_d\) 为差值的假设均值(通常为0)

- \(s_d\) 为差值的标准差

- \(n\) 为配对数量

Where:

- \(\bar{d}\) is the mean of the differences (\(d_i = X_{1i} - X_{2i}\))

- \(\mu_d\) is the hypothesized mean of the differences (usually 0)

- \(s_d\) is the standard deviation of the differences

- \(n\) is the number of pairs

配对检验 vs. 独立检验:如何选择?

Paired Test vs. Independent Test: How to Choose?

使用配对检验:

- 数据天然配对(如左右眼、前后测量)

- 个体间变异性大,但关注组内差异

- 可以识别配对关系

Use Paired Test When:

- Data are naturally paired (e.g., left and right eyes, before-and-after measurements)

- Between-subject variability is large, but focus is on within-subject differences

- Pairing relationships can be identified

使用独立检验:

- 两组完全独立,无关联

- 样本量不同

- 无法进行配对

Use Independent Test When:

- The two groups are completely independent with no association

- Sample sizes differ

- Pairing is not possible

关键问题:如果错误地使用独立检验处理配对数据,会损失统计功效;如果错误地使用配对检验处理独立数据,会减少自由度,也可能降低功效。

Key Issue: If an independent test is incorrectly used on paired data, statistical power is lost; if a paired test is incorrectly used on independent data, degrees of freedom are reduced, which may also lower power.

7.3.5 适用场景与优缺点 (Applicable Scenarios, Advantages and Disadvantages)

优点:

- 控制个体差异:消除个体间的变异,提高检验功效

- 所需样本量更小:由于误差项减小,达到同样功效需要更少的样本

- 更精确的估计:关注的是差值,而非绝对值

Advantages:

- Controls for Individual Differences: Eliminates between-subject variability, increasing test power

- Requires Smaller Sample Sizes: Because the error term is reduced, fewer samples are needed to achieve the same power

- More Precise Estimates: Focuses on differences rather than absolute values

缺点:

- 需要配对关系:不适用于独立样本

- 顺序效应:在前后测设计中,可能存在时间或学习效应

- 数据缺失:如果一个配对中的任一数据缺失,整对数据都无法使用

Disadvantages:

- Requires Pairing Relationship: Not applicable to independent samples

- Order Effects: In before-and-after designs, time or learning effects may exist

- Missing Data: If either data point in a pair is missing, the entire pair becomes unusable

7.3.6 案例:银行股2022年与2023年收益率对比 (Case Study: Bank Stock Returns Comparison Between 2022 and 2023)

什么是配对样本的年度绩效比较?

What Is a Paired Sample Annual Performance Comparison?

在评估市场环境变化对企业绩效的影响时,一个自然的问题是:同一批企业在不同年份的表现是否存在显著差异?例如,2022年和2023年的宏观经济环境、货币政策和市场情绪都有明显差异,这些变化是否导致银行股的收益率发生了统计上的显著变化?

When assessing the impact of changes in the market environment on corporate performance, a natural question arises: is there a significant difference in the performance of the same group of firms across different years? For example, the macroeconomic environment, monetary policy, and market sentiment in 2022 and 2023 were quite different—did these changes lead to statistically significant shifts in bank stock returns?

配对样本t检验专门用于处理这种「同一组对象前后比较」的场景。与独立双样本t检验不同,配对检验通过计算每对观测的差值来消除个体差异的干扰,从而更精确地检测时间效应。下面我们比较同一批银行股在2022年和2023年的年收益率,评估年度表现的变化,结果如 表 7.4 所示。

The paired sample t-test is specifically designed for scenarios involving “before-and-after comparisons of the same group of subjects.” Unlike the independent two-sample t-test, the paired test eliminates the interference of individual differences by computing the difference for each paired observation, thereby detecting temporal effects more precisely. Below, we compare the annual returns of the same batch of bank stocks in 2022 and 2023 to evaluate changes in annual performance, with results shown in 表 7.4.

# ========== 导入所需库 ==========

# ========== Import Required Libraries ==========

import numpy as np # 导入NumPy用于数值计算

# Import NumPy for numerical computation

import pandas as pd # 导入Pandas用于数据处理

# Import Pandas for data manipulation

from scipy import stats # 导入SciPy统计模块

# Import SciPy statistics module

import matplotlib.pyplot as plt # 导入Matplotlib用于绑定可视化

# Import Matplotlib for visualization bindingimport platform # 导入platform模块用于判断操作系统

import platform # 导入platform模块用于判断操作系统

# Import platform module to detect operating system

# ========== 第1步:加载本地股票数据 ==========

# ========== Step 1: Load Local Stock Data ==========

if platform.system() == 'Windows': # 判断当前操作系统

# Detect current operating system

data_path = 'C:/qiufei/data/stock' # Windows下的数据路径

# Data path on Windows

else: # 非Windows系统(Linux/Mac)

# Non-Windows system (Linux/Mac)

data_path = '/home/ubuntu/r2_data_mount/qiufei/data/stock' # Linux下的数据路径

# Data path on Linux

stock_basic_info_dataframe = pd.read_hdf(f'{data_path}/stock_basic_data.h5') # 读取上市公司基本信息

# Read listed company basic information

stock_price_dataframe = pd.read_hdf(f'{data_path}/stock_price_pre_adjusted.h5') # 读取前复权日线行情

# Read pre-adjusted daily stock prices

stock_price_dataframe = stock_price_dataframe.reset_index() # 重置索引,将MultiIndex转为普通列

# Reset index, converting MultiIndex to regular columns

# ========== 第2步:筛选银行股 ==========

# ========== Step 2: Filter Bank Stocks ==========

bank_stock_codes_list = stock_basic_info_dataframe[ # 从基本信息中筛选银行业公司

# Filter banking industry companies from basic info

stock_basic_info_dataframe['industry_name'] == '货币金融服务' # 筛选货币金融服务行业(即银行业)

# Filter monetary and financial services industry (i.e., banking)

]['order_book_id'].tolist() # 提取银行股代码列表

# Extract bank stock code list

bank_stock_price_dataframe = stock_price_dataframe[ # 从全部行情中提取银行股数据

# Extract bank stock data from all price data

stock_price_dataframe['order_book_id'].isin(bank_stock_codes_list) # 从行情数据中筛选银行股

# Filter bank stocks from price data

].copy() # 复制子集避免SettingWithCopyWarning

# Copy subset to avoid SettingWithCopyWarning

# ========== 第3步:计算各银行股2022年和2023年的年收益率 ==========

# ========== Step 3: Calculate Annual Returns for Each Bank Stock in 2022 and 2023 ==========

bank_stock_price_dataframe['year'] = pd.to_datetime( # 新增年份列用于后续分年计算

# Add year column for subsequent annual calculation

bank_stock_price_dataframe['date'] # 将日期字符串转为datetime

# Convert date string to datetime

).dt.year # 提取年份

# Extract year银行股数据加载和年份列提取完毕。下面遍历每只银行股,分别计算2022年和2023年的年收益率。

Bank stock data loading and year column extraction are complete. Next, we iterate through each bank stock to calculate the annual returns for 2022 and 2023 separately.

annual_returns_list = [] # 初始化年收益率结果列表

# Initialize annual return result list

for stock_code in bank_stock_codes_list: # 遍历每只银行股

# Iterate through each bank stock

individual_stock_dataframe = bank_stock_price_dataframe[ # 筛选当前遍历到的银行股数据

# Filter data for the current bank stock

bank_stock_price_dataframe['order_book_id'] == stock_code # 筛选当前股票

# Filter current stock

].sort_values('date') # 按日期排序

# Sort by date

for year in [2022, 2023]: # 遍历2022和2023两个年份

# Iterate through the years 2022 and 2023

single_year_stock_dataframe = individual_stock_dataframe[ # 提取当前年份的交易数据

# Extract trading data for the current year

individual_stock_dataframe['year'] == year # 筛选当年数据

# Filter data for the current year

]

if len(single_year_stock_dataframe) > 20: # 至少有20个交易日数据

# At least 20 trading days of data required

first_trading_day_close_price = single_year_stock_dataframe.iloc[0]['close'] # 年初第一个交易日收盘价

# Closing price on the first trading day of the year

last_trading_day_close_price = single_year_stock_dataframe.iloc[-1]['close'] # 年末最后一个交易日收盘价

# Closing price on the last trading day of the year

calculated_annual_return = (last_trading_day_close_price - first_trading_day_close_price) / first_trading_day_close_price * 100 # 年收益率(%)

# Annual return (%)

annual_returns_list.append({ # 将结果追加到列表

# Append result to list

'order_book_id': stock_code, # 股票代码

# Stock code

'year': year, # 年份

# Year

'return': calculated_annual_return # 年收益率

# Annual return

})年度收益率计算完成后,下面我们将数据构建为配对格式的透视表,并对同一批银行股在2022年与2023年的收益率差异进行配对样本t检验。配对t检验的核心思想是利用同一受试对象在不同条件下的表现差异来消除个体差异的干扰,从而更精确地检测年度间的系统性变化。

After the annual return calculations are complete, we construct the data into a paired-format pivot table and perform a paired sample t-test on the return differences of the same batch of bank stocks between 2022 and 2023. The core idea of the paired t-test is to use the performance differences of the same subject under different conditions to eliminate the interference of individual differences, thereby detecting systematic year-over-year changes more precisely.

# ========== 第4步:构建配对数据透视表 ==========

# ========== Step 4: Construct Paired Data Pivot Table ==========

annual_returns_dataframe = pd.DataFrame(annual_returns_list) # 将列表转为DataFrame

# Convert list to DataFrame

annual_returns_pivot_dataframe = annual_returns_dataframe.pivot( # 构建宽格式透视表用于配对分析

# Construct wide-format pivot table for paired analysis

index='order_book_id', # 以股票代码为行索引

# Use stock code as row index

columns='year', # 以年份为列

# Use year as columns

values='return' # 年收益率为值

# Annual return as values

).dropna() # 删除任一年缺失的股票(确保配对完整)

# Drop stocks with missing data in either year (ensure complete pairing)配对数据透视表已构建完成,每行为一只银行股,列为2022年和2023年的年度收益率。下面基于这些配对数据执行配对样本t检验,并计算效应量和置信区间。

The paired data pivot table has been constructed, with each row representing a bank stock and columns for the annual returns of 2022 and 2023. Next, we perform the paired sample t-test based on these paired data and calculate the effect size and confidence interval.

if len(annual_returns_pivot_dataframe) >= 5: # 至少需要5只银行股形成有效配对

# At least 5 bank stocks needed to form valid pairs

returns_2022_array = annual_returns_pivot_dataframe[2022].values # 提取2022年收益率数组

# Extract 2022 return array

returns_2023_array = annual_returns_pivot_dataframe[2023].values # 提取2023年收益率数组

# Extract 2023 return array

paired_sample_size = len(returns_2022_array) # 配对样本量

# Paired sample size

# ========== 第5步:配对t检验 ==========

# ========== Step 5: Paired t-Test ==========

return_differences_array = returns_2023_array - returns_2022_array # 计算配对差值(2023-2022)

# Calculate paired differences (2023-2022)

paired_t_statistic, paired_p_value = stats.ttest_rel( # 执行配对样本t检验

# Perform paired sample t-test

returns_2023_array, returns_2022_array # 配对样本t检验

# Paired sample t-test

)

# ========== 第6步:计算描述性统计与置信区间 ==========

# ========== Step 6: Calculate Descriptive Statistics and Confidence Interval ==========

mean_return_2022 = np.mean(returns_2022_array) # 2022年平均收益率

# Mean return for 2022

mean_return_2023 = np.mean(returns_2023_array) # 2023年平均收益率

# Mean return for 2023

mean_difference_value = np.mean(return_differences_array) # 差值的均值

# Mean of differences

standard_deviation_of_difference = np.std(return_differences_array, ddof=1) # 差值的标准差(无偏估计)

# Standard deviation of differences (unbiased estimate)

standard_error_of_difference = standard_deviation_of_difference / np.sqrt(paired_sample_size) # 差值的标准误

# Standard error of differences

t_critical_value = stats.t.ppf(0.975, paired_sample_size-1) # t分布97.5%分位数(双尾α=0.05)

# 97.5th percentile of t-distribution (two-tailed α=0.05)

confidence_interval_lower_bound = mean_difference_value - t_critical_value * standard_error_of_difference # 95% CI下界

# 95% CI lower bound

confidence_interval_upper_bound = mean_difference_value + t_critical_value * standard_error_of_difference # 95% CI上界

# 95% CI upper bound

# ========== 第7步:计算效应量(配对Cohen's d) ==========

# ========== Step 7: Calculate Effect Size (Paired Cohen's d) ==========

cohens_d_effect_size = mean_difference_value / standard_deviation_of_difference # Cohen's d = 均值差/差值标准差

# Cohen's d = mean difference / SD of differences

else: # 若配对样本不足5只

# If fewer than 5 paired samples

print('银行股数据不足,无法进行配对分析') # 输出数据不足提示

# Print insufficient data message配对检验的核心统计量已计算完毕(t统计量、p值、95%置信区间、Cohen’s d效应量)。下面分步输出描述性统计结果。

The core statistics of the paired test have been calculated (t-statistic, p-value, 95% confidence interval, Cohen’s d effect size). Below, we output the descriptive statistics step by step.

# ========== 第8步:输出描述性统计 ==========

# ========== Step 8: Output Descriptive Statistics ==========

if 'paired_t_statistic' in locals(): # 确认配对t检验已成功执行

# Confirm paired t-test was successfully executed

print('=' * 60) # 打印分隔线

# Print separator line

print('银行股年收益率配对比较 (2022 vs 2023)') # 打印标题

# Print title

print('=' * 60) # 打印分隔线

# Print separator line

print('\n描述性统计:') # 打印描述性统计标签

# Print descriptive statistics label

print('-' * 60) # 打印分隔线

# Print separator line

descriptive_statistics_dataframe = pd.DataFrame({ # 构建描述性统计汇总表

# Construct descriptive statistics summary table

'年份': ['2022年', '2023年', '差值(2023-2022)'], # 年份列

# Year column

'平均收益率(%)': [mean_return_2022, mean_return_2023, mean_difference_value], # 均值列

# Mean column

'标准差(%)': [np.std(returns_2022_array, ddof=1), np.std(returns_2023_array, ddof=1), standard_deviation_of_difference], # 标准差列

# Standard deviation column

'标准误': [np.std(returns_2022_array, ddof=1)/np.sqrt(paired_sample_size), np.std(returns_2023_array, ddof=1)/np.sqrt(paired_sample_size), standard_error_of_difference] # 标准误列

# Standard error column

})

print(descriptive_statistics_dataframe.to_string(index=False)) # 输出描述性统计表

# Output descriptive statistics table============================================================

银行股年收益率配对比较 (2022 vs 2023)

============================================================

描述性统计:

------------------------------------------------------------

年份 平均收益率(%) 标准差(%) 标准误

2022年 -4.227243 16.394309 2.500109

2023年 -0.149614 17.251162 2.630778

差值(2023-2022) 4.077628 21.854905 3.332842描述性统计结果显示:43只银行股在2022年的平均年收益率为-4.23%,标准差为16.39%;2023年的平均年收益率为-0.15%,标准差为17.25%。差值(2023年减去2022年)的平均值为4.08%,标准差为21.85%,标准误为3.33%。虽然2023年的平均表现优于2022年(改善了约4个百分点),但差值标准差较大(21.85%),说明各银行股的年际变化存在较大的个体差异。

The descriptive statistics show that the average annual return of the 43 bank stocks in 2022 was -4.23% with a standard deviation of 16.39%; in 2023, the average annual return was -0.15% with a standard deviation of 17.25%. The mean of the differences (2023 minus 2022) was 4.08%, with a standard deviation of 21.85% and a standard error of 3.33%. Although the average performance in 2023 was better than in 2022 (an improvement of about 4 percentage points), the large standard deviation of the differences (21.85%) indicates substantial individual variation in year-over-year changes among different bank stocks.

描述性统计输出完成。下面输出假设检验结果(t统计量、自由度、p值)和差值的95%置信区间。

Descriptive statistics output is complete. Next, we output the hypothesis test results (t-statistic, degrees of freedom, p-value) and the 95% confidence interval for the differences.

# ========== 第9步:输出假设检验结果与置信区间 ==========

# ========== Step 9: Output Hypothesis Test Results and Confidence Interval ==========

if 'paired_t_statistic' in locals(): # 确认配对t检验结果可用

# Confirm paired t-test results are available

print('\n' + '=' * 60) # 打印分隔线

# Print separator line

print('假设检验结果') # 打印假设检验标题

# Print hypothesis test title

print('=' * 60) # 打印分隔线

# Print separator line

print(f'样本量: {paired_sample_size}只银行股') # 输出配对样本量

# Output paired sample size

print(f'原假设 H0: μ_差值 = 0 (两年收益率无差异)') # 输出原假设

# Output null hypothesis

print(f'备择假设 H1: μ_差值 ≠ 0 (两年收益率有差异)') # 输出备择假设

# Output alternative hypothesis

print(f'\nt统计量: {paired_t_statistic:.4f}') # 输出配对t统计量

# Output paired t-statistic

print(f'自由度: {paired_sample_size-1}') # 输出自由度(n-1)

# Output degrees of freedom (n-1)

print(f'p值: {paired_p_value:.8f}') # 输出p值

# Output p-value

print('\n' + '=' * 60) # 打印分隔线

# Print separator line

print('差值的95%置信区间') # 打印置信区间标题

# Print confidence interval title

print('=' * 60) # 打印分隔线

# Print separator line

print(f'平均差值: {mean_difference_value:.2f}%') # 输出平均差值

# Output mean difference

print(f'95% CI: [{confidence_interval_lower_bound:.2f}, {confidence_interval_upper_bound:.2f}]%') # 输出95%置信区间

# Output 95% confidence interval

============================================================

假设检验结果

============================================================

样本量: 43只银行股

原假设 H0: μ_差值 = 0 (两年收益率无差异)

备择假设 H1: μ_差值 ≠ 0 (两年收益率有差异)

t统计量: 1.2235

自由度: 42

p值: 0.22797260

============================================================

差值的95%置信区间

============================================================

平均差值: 4.08%

95% CI: [-2.65, 10.80]%配对t检验的假设检验结果:共43只银行股参与配对比较,t统计量为1.2235,自由度为42,p值为0.22797260,远大于0.05的显著性水平。差值的95%置信区间为[-2.65, 10.80]%,该区间包含0,与p值不显著的结论一致。这意味着虽然点估计的平均改善幅度为4.08%,但由于个体差异较大,我们无法在统计上确信这一改善是普遍的。

The hypothesis test results of the paired t-test: a total of 43 bank stocks participated in the paired comparison, with a t-statistic of 1.2235, degrees of freedom of 42, and a p-value of 0.22797260, far exceeding the significance level of 0.05. The 95% confidence interval for the differences is [-2.65, 10.80]%, which contains 0, consistent with the non-significant p-value conclusion. This means that although the point estimate suggests an average improvement of 4.08%, due to the large individual variation, we cannot be statistically confident that this improvement is universal.

假设检验和置信区间输出完成。下面输出效应量(Cohen’s d)的解释和最终统计结论。

Hypothesis test and confidence interval output is complete. Next, we output the interpretation of the effect size (Cohen’s d) and the final statistical conclusion.

# ========== 第10步:输出效应量与结论 ==========

# ========== Step 10: Output Effect Size and Conclusion ==========

if 'paired_t_statistic' in locals(): # 确认配对t检验结果可用

# Confirm paired t-test results are available

print('\n' + '=' * 60) # 打印分隔线

# Print separator line

print('效应量') # 打印效应量标题

# Print effect size title

print('=' * 60) # 打印分隔线

# Print separator line

print(f'Cohen\'s d: {cohens_d_effect_size:.3f}') # 输出Cohen's d效应量

# Output Cohen's d effect size

if abs(cohens_d_effect_size) < 0.2: # 若|d|<0.2

# If |d| < 0.2

effect_size_description = '小' # 效应量为小

# Effect size is small

elif abs(cohens_d_effect_size) < 0.5: # 若0.2≤|d|<0.5

# If 0.2 ≤ |d| < 0.5

effect_size_description = '中等' # 效应量为中等

# Effect size is medium

elif abs(cohens_d_effect_size) < 0.8: # 若0.5≤|d|<0.8

# If 0.5 ≤ |d| < 0.8

effect_size_description = '大' # 效应量为大

# Effect size is large

else: # 若|d|≥0.8

# If |d| ≥ 0.8

effect_size_description = '非常大' # 效应量为非常大

# Effect size is very large

print(f'解释: 这是一个{effect_size_description}效应量') # 输出效应量解释

# Output effect size interpretation

print('\n' + '=' * 60) # 打印分隔线

# Print separator line

print('结论') # 打印结论标题

# Print conclusion title

print('=' * 60) # 打印分隔线

# Print separator line

alpha = 0.05 # 设定显著性水平α=0.05

# Set significance level α=0.05

if paired_p_value < alpha: # 若p值小于α

# If p-value is less than α

print(f'在α={alpha}水平下拒绝原假设(p={paired_p_value:.8f} < {alpha})') # 输出拒绝结论

# Output rejection conclusion

print(f'银行股2023年相比2022年收益率变化: {mean_difference_value:.2f}%') # 输出收益率变化幅度

# Output return change magnitude

else: # 若p值不小于α

# If p-value is not less than α

print(f'在α={alpha}水平下不能拒绝原假设(p={paired_p_value:.8f} >= {alpha})') # 输出不拒绝结论

# Output non-rejection conclusion

print('没有充分证据表明两年收益率存在差异') # 说明无显著差异

# State that there is no significant difference

print(f'\n数据来源: 本地stock_price_pre_adjusted.h5') # 输出数据来源说明

# Output data source note

============================================================

效应量

============================================================

Cohen's d: 0.187

解释: 这是一个小效应量

============================================================

结论

============================================================

在α=0.05水平下不能拒绝原假设(p=0.22797260 >= 0.05)

没有充分证据表明两年收益率存在差异

数据来源: 本地stock_price_pre_adjusted.h5效应量Cohen’s d=0.187,属于小效应量(|d|<0.2),说明2022年到2023年的收益率变化幅度从实际意义上来看较为有限。最终结论:在α=0.05的显著性水平下不能拒绝原假设(p=0.22797260≥0.05),没有充分的统计证据表明银行股2022年与2023年的年收益率存在系统性差异。这一结果提醒投资者:即使从点估计来看两年之间存在一定的跨年变化,但由于样本量较小(n=43)且个体波动较大,该变化在统计上并不显著。

Cohen’s d = 0.187, which falls in the small effect size category (|d| < 0.2), indicating that the magnitude of the return change from 2022 to 2023 is relatively limited in practical terms. Final conclusion: at the α = 0.05 significance level, we fail to reject the null hypothesis (p = 0.22797260 ≥ 0.05), and there is insufficient statistical evidence to suggest that there is a systematic difference in annual returns of bank stocks between 2022 and 2023. This result reminds investors that even though the point estimate suggests some year-over-year change, due to the small sample size (n = 43) and large individual variation, the change is not statistically significant.

7.3.7 配对设计的可视化 (Visualization of Paired Design)

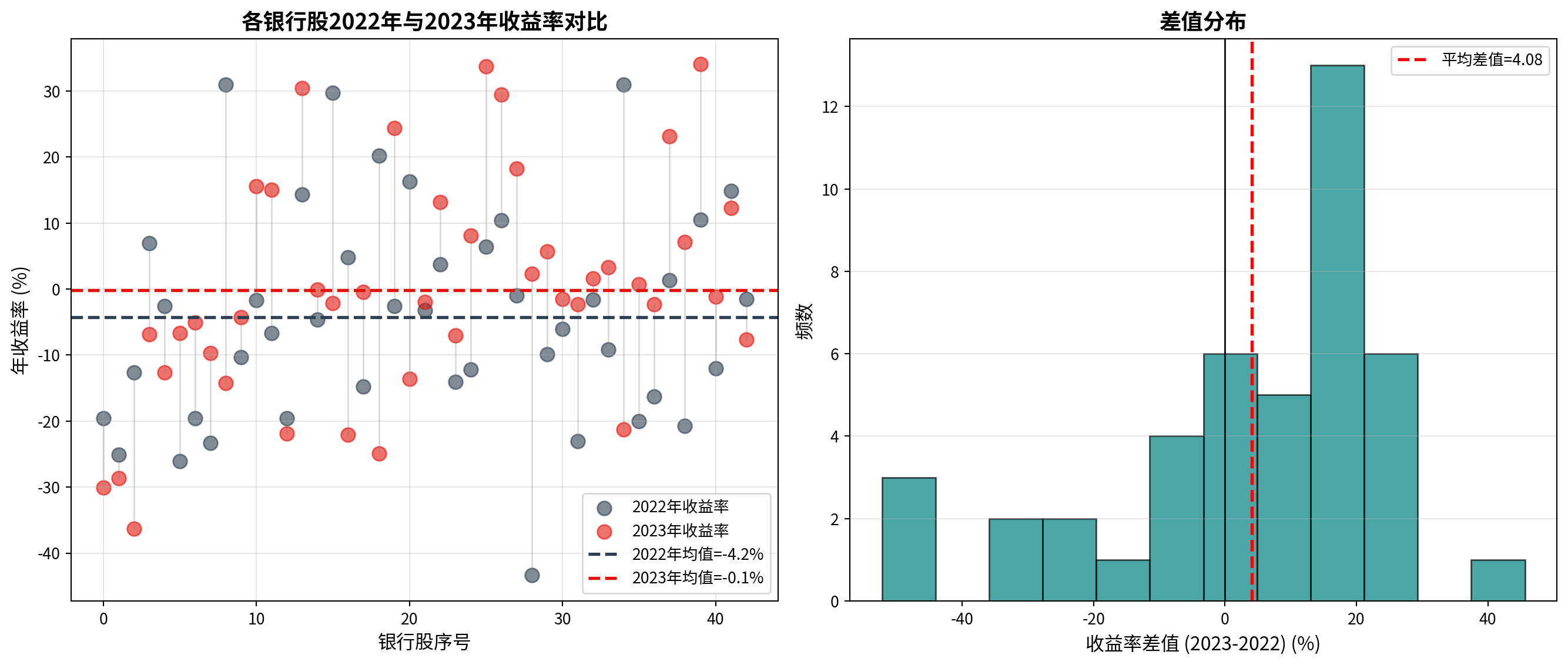

图 7.1 展示了银行股2022年与2023年收益率的配对对比。

图 7.1 presents the paired comparison of bank stock returns between 2022 and 2023.

# ========== 导入可视化所需库 ==========

# ========== Import Visualization Libraries ==========

import matplotlib.pyplot as plt # 导入matplotlib绘图库

# Import matplotlib plotting library

import numpy as np # 导入numpy数值计算库

# Import numpy numerical computation library下面绘制银行股2022年与2023年收益率的配对对比图,左图展示各银行股两年收益率的个体配对散点图,右图展示收益率差值的直方图分布。

Below, we plot the paired comparison chart of bank stock returns between 2022 and 2023. The left panel shows the individual paired scatter plot of each bank stock’s returns over the two years, and the right panel shows the histogram distribution of return differences.

# ========== 第1步:创建双面板图形 ==========

# ========== Step 1: Create Dual-Panel Figure ==========

matplot_figure, matplot_axes_array = plt.subplots(1, 2, figsize=(14, 6)) # 创建1行2列子图布局

# Create 1-row, 2-column subplot layout

if 'paired_sample_size' in locals(): # 检查配对数据变量是否存在(依赖前一代码块)

# Check if paired data variable exists (depends on previous code block)

# ========== 第2步:左图——个体配对前后对比散点图 ==========

# ========== Step 2: Left Panel — Individual Paired Before-After Scatter Plot ==========

matplot_axes_array[0].scatter(range(paired_sample_size), returns_2022_array, alpha=0.6, s=80, label='2022年收益率', color='#2C3E50') # 绘制2022年各银行股收益率散点

# Plot scatter points for 2022 returns of each bank stock

matplot_axes_array[0].scatter(range(paired_sample_size), returns_2023_array, alpha=0.6, s=80, label='2023年收益率', color='#E3120B') # 绘制2023年各银行股收益率散点

# Plot scatter points for 2023 returns of each bank stock

for i in range(paired_sample_size): # 遍历每只银行股

# Iterate through each bank stock

matplot_axes_array[0].plot([i, i], [returns_2022_array[i], returns_2023_array[i]], 'gray', alpha=0.3, linewidth=1) # 用灰色连线连接同一银行股两年的收益率

# Connect the same bank stock's returns across two years with a gray line

matplot_axes_array[0].axhline(mean_return_2022, color='#2C3E50', linestyle='--', linewidth=2, label=f'2022年均值={mean_return_2022:.1f}%') # 添加2022年均值水平线

# Add 2022 mean horizontal line

matplot_axes_array[0].axhline(mean_return_2023, color='#E3120B', linestyle='--', linewidth=2, label=f'2023年均值={mean_return_2023:.1f}%') # 添加2023年均值水平线

# Add 2023 mean horizontal line

matplot_axes_array[0].set_xlabel('银行股序号', fontsize=12) # 设置x轴标签

# Set x-axis label

matplot_axes_array[0].set_ylabel('年收益率 (%)', fontsize=12) # 设置y轴标签

# Set y-axis label

matplot_axes_array[0].set_title('各银行股2022年与2023年收益率对比', fontsize=14, fontweight='bold') # 设置左图标题

# Set left panel title

matplot_axes_array[0].legend(loc='best', fontsize=10) # 添加图例

# Add legend

matplot_axes_array[0].grid(True, alpha=0.3) # 添加网格线

# Add gridlines

# ========== 第3步:右图——收益率差值的直方图分布 ==========

# ========== Step 3: Right Panel — Histogram Distribution of Return Differences ==========

matplot_axes_array[1].hist(return_differences_array, bins=12, color='#008080', alpha=0.7, edgecolor='black') # 绘制差值直方图

# Plot histogram of differences

matplot_axes_array[1].axvline(mean_difference_value, color='red', linestyle='--', linewidth=2, label=f'平均差值={mean_difference_value:.2f}') # 添加平均差值竖线

# Add mean difference vertical line

matplot_axes_array[1].axvline(0, color='black', linestyle='-', linewidth=1) # 添加零值参考线

# Add zero reference line

matplot_axes_array[1].set_xlabel('收益率差值 (2023-2022) (%)', fontsize=12) # 设置x轴标签

# Set x-axis label

matplot_axes_array[1].set_ylabel('频数', fontsize=12) # 设置y轴标签

# Set y-axis label

matplot_axes_array[1].set_title('差值分布', fontsize=14, fontweight='bold') # 设置右图标题

# Set right panel title

matplot_axes_array[1].legend(loc='best', fontsize=10) # 添加图例

# Add legend

matplot_axes_array[1].grid(True, alpha=0.3, axis='y') # 仅添加y轴方向网格线

# Add gridlines only in y-axis direction

# ========== 第4步:调整布局并显示 ==========

# ========== Step 4: Adjust Layout and Display ==========

plt.tight_layout() # 自动调整子图间距

# Automatically adjust subplot spacing

plt.show() # 显示图形

# Display figure

else: # 若配对数据不存在

# If paired data does not exist

print('图表依赖的配对数据不足') # 输出提示信息

# Output warning message

7.4 样本量与统计功效 (Sample Size and Statistical Power)

7.4.1 理论背景 (Theoretical Background)

统计功效(Power)是假设检验中正确拒绝错误原假设的概率,定义为 \(1 - \beta\),其中 \(\beta\) 是第二类错误率。

Statistical power is the probability of correctly rejecting a false null hypothesis in hypothesis testing, defined as \(1 - \beta\), where \(\beta\) is the Type II error rate.

四种概率:

Four Probabilities:

第一类错误(\(\alpha\)):原假设为真时拒绝它(假阳性)

第二类错误(\(\beta\)):原假设为假时未能拒绝(假阴性)

统计功效(\(1-\beta\)):原假设为假时正确拒绝

置信水平(\(1-\alpha\)):原假设为真时正确保留

Type I Error (\(\alpha\)): Rejecting the null hypothesis when it is true (false positive)

Type II Error (\(\beta\)): Failing to reject the null hypothesis when it is false (false negative)

Statistical Power (\(1-\beta\)): Correctly rejecting the null hypothesis when it is false

Confidence Level (\(1-\alpha\)): Correctly retaining the null hypothesis when it is true

功效分析的直观理解