import pandas as pd # 数据处理核心库

# Import the core data manipulation library

import numpy as np # 数值计算核心库

# Import the core numerical computation library

from scipy.optimize import minimize # 用于数值优化求解MLE

# Import the optimizer for numerically solving MLE

import matplotlib.pyplot as plt # 数据可视化库

# Import the data visualization library

from pathlib import Path # 跨平台路径处理

# Import cross-platform path handling utilities

# ---------- 中文字体配置 ----------

# ---------- Chinese Font Configuration ----------

plt.rcParams['font.sans-serif'] = ['SimHei'] # 设置中文字体为黑体,确保中文标签正常显示

# Set Chinese font to SimHei to ensure proper rendering of Chinese labels

plt.rcParams['axes.unicode_minus'] = False # 关闭Unicode负号,避免负号显示为方块

# Disable Unicode minus sign to prevent display issues

# ========== 第1步:加载本地数据 ==========

# ========== Step 1: Load Local Data ==========

# 根据操作系统自动选择数据路径(Windows与Linux双平台兼容)

# Automatically select data path based on operating system (Windows/Linux compatible)

import platform # 导入平台检测模块,用于判断当前操作系统

# Import platform detection module to determine the current OS

if platform.system() == 'Windows': # 判断是否为Windows操作系统

# Check if the current OS is Windows

data_directory_path = Path('C:/qiufei/data/stock') # Windows本地数据目录

# Windows local data directory

else: # 非Windows系统(Linux服务器环境)

# Non-Windows system (Linux server environment)

data_directory_path = Path('/home/ubuntu/r2_data_mount/qiufei/data/stock') # Linux服务器数据目录

# Linux server data directory

basic_info_file_path = data_directory_path / 'stock_basic_data.h5' # 上市公司基本信息文件

# File path for listed company basic information

financial_statement_file_path = data_directory_path / 'financial_statement.h5' # 财务报表文件

# File path for financial statements

# 读取HDF5格式数据,仅选取分析所需列以节省内存

# Read HDF5 data, selecting only the columns needed for analysis to save memory

basic_info_dataframe = pd.read_hdf(basic_info_file_path, columns=['order_book_id', 'province', 'industry_name']) # 读取上市公司基本信息(代码、省份、行业)

# Read listed company basic info (stock code, province, industry)

financial_statement_dataframe = pd.read_hdf(financial_statement_file_path, columns=['order_book_id', 'quarter', 'net_profit', 'total_equity']) # 读取财务报表(代码、季度、净利润、股东权益)

# Read financial statements (stock code, quarter, net profit, total equity)

# ========== 第2步:筛选长三角地区企业 ==========

# ========== Step 2: Filter YRD Region Companies ==========

yrd_provinces_list = ['上海市', '江苏省', '浙江省', '安徽省'] # 长三角四省市

# The four provinces/municipalities of the Yangtze River Delta

yrd_companies_dataframe = basic_info_dataframe[basic_info_dataframe['province'].isin(yrd_provinces_list)] # 按省份筛选

# Filter by province5 推断统计基础 (Inferential Statistics)

推断统计是统计学的核心,它使我们能够从样本数据推断总体特征,并量化推断的不确定性。本章系统介绍点估计、区间估计和假设检验的理论与方法,这些方法是数据驱动决策的科学基础。

Inferential statistics is the core of statistics, enabling us to infer population characteristics from sample data and quantify the uncertainty of such inferences. This chapter systematically introduces the theory and methods of point estimation, interval estimation, and hypothesis testing—the scientific foundation of data-driven decision-making.

5.1 推断统计在金融市场研究中的典型应用 (Typical Applications of Inferential Statistics in Financial Market Research)

推断统计为金融市场的实证研究提供了严格的科学方法论。以下展示假设检验和区间估计在中国资本市场中的核心应用。

Inferential statistics provides a rigorous scientific methodology for empirical research in financial markets. The following demonstrates the core applications of hypothesis testing and interval estimation in China’s capital markets.

5.1.1 应用一:市场有效性的统计检验 (Application 1: Statistical Tests for Market Efficiency)

有效市场假说(EMH)认为,股票价格已充分反映所有可用信息,因此不存在持续获得超额收益的可能。检验这一假说的核心方法是假设检验:设立原假设 \(H_0\):市场有效(超额收益为零),备择假设 \(H_1\):存在可预测的超额收益。使用 stock_price_pre_adjusted.h5 中的历史收益率数据,可以对收益率的序列相关性进行检验。如果日收益率的一阶自相关显著不为零(即拒绝 \(H_0\)),则构成对弱式有效市场的证据反驳。

The Efficient Market Hypothesis (EMH) posits that stock prices fully reflect all available information, making it impossible to consistently earn excess returns. The core method for testing this hypothesis is hypothesis testing: set up the null hypothesis \(H_0\): the market is efficient (excess returns are zero), and the alternative hypothesis \(H_1\): predictable excess returns exist. Using historical return data from stock_price_pre_adjusted.h5, we can test the serial correlation of returns. If the first-order autocorrelation of daily returns is significantly different from zero (i.e., rejecting \(H_0\)), this constitutes evidence against weak-form market efficiency.

5.1.2 应用二:事件研究法中的异常收益检验 (Application 2: Abnormal Return Tests in Event Studies)

事件研究法(Event Study)通过检验事件窗口内的累积异常收益(CAR)是否显著异于零,来评估特定事件(如并购公告、政策变化、财报发布)对股价的影响。其统计基础是置信区间和t检验:如果CAR的95%置信区间不包含零,则认为该事件对股价有显著影响。基于 stock_price_pre_adjusted.h5 和 stock_basic_data.h5 中的实际数据,我们可以对长三角地区上市公司的重大事件进行实证分析。

The Event Study methodology assesses the impact of specific events (such as M&A announcements, policy changes, and earnings releases) on stock prices by testing whether the Cumulative Abnormal Return (CAR) within the event window is significantly different from zero. Its statistical foundation rests on confidence intervals and t-tests: if the 95% confidence interval for CAR does not contain zero, the event is considered to have a significant impact on stock prices. Based on actual data from stock_price_pre_adjusted.h5 and stock_basic_data.h5, we can conduct empirical analysis of major events for listed companies in the Yangtze River Delta region.

5.1.3 应用三:投资策略的统计显著性评估 (Application 3: Statistical Significance Assessment of Investment Strategies)

量化投资中,评估一个交易策略是否真正有效而非依靠”运气”,需要严格的假设检验。原假设为 \(H_0\):策略的真实超额收益为零(策略无效),通过统计检验量化策略表现的置信水平。结合 stock_price_pre_adjusted.h5 中的历史行情数据构建回测,并使用t检验评估策略收益的统计显著性,可以区分真正有效的Alpha策略和过拟合的”数据挖掘”结果。这体现了推断统计在投资实践中防范”p-hacking”和过度拟合的重要价值。

In quantitative investing, evaluating whether a trading strategy is genuinely effective rather than relying on “luck” requires rigorous hypothesis testing. The null hypothesis is \(H_0\): the strategy’s true excess return is zero (the strategy is ineffective), and statistical tests are used to quantify the confidence level of strategy performance. By constructing backtests using historical market data from stock_price_pre_adjusted.h5 and employing t-tests to assess the statistical significance of strategy returns, one can distinguish truly effective Alpha strategies from overfitted “data mining” results. This exemplifies the critical value of inferential statistics in guarding against “p-hacking” and overfitting in investment practice.

5.2 点估计 (Point Estimation)

5.2.1 估计量的性质 (Properties of Estimators)

5.2.1.1 无偏性 (Unbiasedness)

估计量 \(\hat{\theta}\) 是参数 \(\theta\) 的无偏估计,如果它满足 式 5.1 所示的条件:

An estimator \(\hat{\theta}\) is an unbiased estimator of the parameter \(\theta\) if it satisfies the condition shown in 式 5.1:

\[ E[\hat{\theta}] = \theta \tag{5.1}\]

常见无偏估计量:

- 样本均值 \(\bar{X}\) 是总体均值 \(\mu\) 的无偏估计

- 样本方差 \(S^2 = \frac{1}{n-1}\sum_{i=1}^n (X_i - \bar{X})^2\) 是总体方差 \(\sigma^2\) 的无偏估计

Common unbiased estimators:

- The sample mean \(\bar{X}\) is an unbiased estimator of the population mean \(\mu\)

- The sample variance \(S^2 = \frac{1}{n-1}\sum_{i=1}^n (X_i - \bar{X})^2\) is an unbiased estimator of the population variance \(\sigma^2\)

为什么样本方差用n-1?

Why does the sample variance use n-1?

回顾第2章的讨论:使用 \(n-1\) 而不是 \(n\) 是为了确保无偏性。

Recall the discussion in Chapter 2: using \(n-1\) instead of \(n\) is to ensure unbiasedness.

直观理解:当我们用样本均值 \(\bar{X}\) 代替总体均值 \(\mu\) 时,样本数据与 \(\bar{X}\) 的距离总是小于与真实 \(\mu\) 的距离。这会导致低估真实方差。除以 \(n-1\) 可以补偿这个偏差。

Intuitive understanding: when we substitute the sample mean \(\bar{X}\) for the population mean \(\mu\), the distances from the sample data to \(\bar{X}\) are always smaller than the distances to the true \(\mu\). This leads to an underestimation of the true variance. Dividing by \(n-1\) compensates for this bias.

5.2.1.2 有效性 (Efficiency)

在所有无偏估计量中,方差最小的估计量称为最有效估计量。

Among all unbiased estimators, the one with the smallest variance is called the most efficient estimator.

克拉默-拉奥下界(Cramér-Rao Lower Bound)给出了无偏估计量方差的理论下界。

The Cramér-Rao Lower Bound provides a theoretical lower bound for the variance of any unbiased estimator.

5.2.1.3 一致性 (Consistency)

估计量 \(\hat{\theta}_n\) (基于样本量 \(n\)) 是一致的,如果当 \(n \to \infty\) 时,它满足 式 5.2 所示的条件:

An estimator \(\hat{\theta}_n\) (based on sample size \(n\)) is consistent if, as \(n \to \infty\), it satisfies the condition shown in 式 5.2:

\[ \hat{\theta}_n \xrightarrow{p} \theta \tag{5.2}\]

即估计量依概率收敛于真实参数。

That is, the estimator converges in probability to the true parameter.

5.2.2 极大似然估计 (Maximum Likelihood Estimation, MLE)

直觉: 极大似然估计的核心思想非常朴素——“发生的事情是概率最大的”。如果在这个参数下,出现当前数据的概率最大,那么这个参数就是最”可信”的估计值。

Intuition: The core idea of maximum likelihood estimation is remarkably intuitive—“what happened is what was most likely to happen.” If the probability of observing the current data is maximized under a particular parameter value, then that parameter value is the most “credible” estimate.

数学推导: 以最简单的抛硬币(或企业盈亏)为例。设 \(X\) 服从伯努利分布 \(B(p)\),即 \(P(X=1)=p\) (盈利),\(P(X=0)=1-p\) (亏损)。 假设我们观察到 \(n\) 个独立样本 \(x_1, x_2, ..., x_n\)。

Mathematical Derivation: Consider the simplest example of a coin flip (or corporate profit/loss). Let \(X\) follow a Bernoulli distribution \(B(p)\), where \(P(X=1)=p\) (profitable) and \(P(X=0)=1-p\) (loss-making). Suppose we observe \(n\) independent samples \(x_1, x_2, ..., x_n\).

似然函数 (Likelihood Function) 是观测数据出现的联合概率,主要关于参数 \(p\) 的函数:

The Likelihood Function is the joint probability of the observed data as a function of the parameter \(p\):

\[ L(p) = P(x_1, ..., x_n | p) = \prod_{i=1}^n p^{x_i} (1-p)^{1-x_i} \] \[ L(p) = p^{\sum x_i} (1-p)^{n-\sum x_i} \]

为了计算方便,我们通常取对数(对数函数是单调递增的,最大化对数似然等价于最大化似然):

For computational convenience, we typically take the logarithm (since the logarithmic function is monotonically increasing, maximizing the log-likelihood is equivalent to maximizing the likelihood):

\[ \ell(p) = \ln L(p) = (\sum x_i) \ln p + (n-\sum x_i) \ln(1-p) \]

为了找到使 \(\ell(p)\) 最大的 \(p\),我们对 \(p\) 求导并令其为 0:

To find the value of \(p\) that maximizes \(\ell(p)\), we take the derivative with respect to \(p\) and set it equal to 0:

\[ \frac{d\ell}{dp} = \frac{\sum x_i}{p} - \frac{n-\sum x_i}{1-p} = 0 \]

解这个方程:

Solving this equation:

\[ (1-p)\sum x_i = p(n-\sum x_i) \] \[ \sum x_i - p\sum x_i = pn - p\sum x_i \] \[ p = \frac{\sum x_i}{n} = \bar{x} \]

结论:样本均值(即盈利公司的比例)就是总体比例 \(p\) 的极大似然估计量 \(\hat{p}_{MLE}\)。

Conclusion: The sample mean (i.e., the proportion of profitable companies) is the maximum likelihood estimator \(\hat{p}_{MLE}\) of the population proportion \(p\).

反例:MLE 总是最好的吗?

Counterexample: Is MLE Always the Best?

虽然 MLE 具有一致性(样本量很大时收敛到真值)和渐近正态性,但它并不总是无偏的。 一个经典的反例是方差的估计。对于正态分布 \(N(\mu, \sigma^2)\), \(\sigma^2\) 的 MLE 估计量是 \(\hat{\sigma}^2_{MLE} = \frac{1}{n}\sum(x_i - \bar{x})^2\)。 然而,这个估计量是有偏的(偏小)。为了纠正偏差,我们需要除以 \(n-1\)(即样本方差 \(S^2\))。这提醒我们:虽然 MLE 是寻找估计量的强力工具,但 blind application 可能会带来偏差,尤其是在小样本下。

Although MLE possesses consistency (converging to the true value as the sample size grows large) and asymptotic normality, it is not always unbiased. A classic counterexample is the estimation of variance. For a normal distribution \(N(\mu, \sigma^2)\), the MLE of \(\sigma^2\) is \(\hat{\sigma}^2_{MLE} = \frac{1}{n}\sum(x_i - \bar{x})^2\). However, this estimator is biased (biased downward). To correct for this bias, we need to divide by \(n-1\) (yielding the sample variance \(S^2\)). This reminds us that although MLE is a powerful tool for finding estimators, blind application can introduce bias, especially with small samples.

MLE的直观理解:客户满意度调查

Intuitive Understanding of MLE: Customer Satisfaction Survey

假设某公司想知道客户满意度 \(p\)。随机调查100人,85人表示满意。

Suppose a company wants to know its customer satisfaction rate \(p\). A random survey of 100 people reveals that 85 expressed satisfaction.

似然函数: \(L(p) = \binom{100}{85} p^{85} (1-p)^{15}\)

Likelihood function: \(L(p) = \binom{100}{85} p^{85} (1-p)^{15}\)

MLE: 求 \(L(p)\) 关于 \(p\) 的最大值,得到:

MLE: Maximizing \(L(p)\) with respect to \(p\), we obtain:

\[ \hat{p}_{MLE} = \frac{85}{100} = 0.85 \]

解释: 使”观察到85人满意”这个事件概率最大的 \(p\) 值就是0.85,即样本比例。这符合直觉。

Interpretation: The value of \(p\) that maximizes the probability of the event “85 out of 100 are satisfied” is 0.85, which is simply the sample proportion. This aligns with intuition.

5.2.2.1 案例:长三角上市公司盈利比例的极大似然估计 (Case Study: MLE of Profitability Ratio for YRD Listed Companies)

什么是企业盈利比例的估计?

What is the Estimation of Corporate Profitability Ratio?

在投资分析和信用评估中,了解某一区域或行业中「有多大比例的企业处于盈利状态」是一项基础且关键的指标。例如,银行在对长三角地区制造业企业进行批量授信时,需要评估该区域企业群体的整体盈利健康度。如果盈利比例过低,意味着该区域的信用风险较高,授信策略需要更加审慎。

In investment analysis and credit assessment, understanding “what proportion of companies in a given region or industry are profitable” is a fundamental and critical metric. For instance, when banks conduct batch credit approvals for manufacturing enterprises in the Yangtze River Delta region, they need to assess the overall profitability health of the corporate population in that area. If the profitability ratio is too low, it signals higher credit risk in the region, necessitating more prudent lending strategies.

极大似然估计(MLE)是估计此类「总体比例」参数的经典统计方法。其核心思想是:在所有可能的参数值中,找到那个使我们「实际观察到的样本数据出现概率最大」的参数值。下面我们使用本地上市公司财务数据,通过极大似然估计法计算长三角地区上市公司的盈利比例,结果如 表 5.1 所示。

Maximum Likelihood Estimation (MLE) is the classic statistical method for estimating such “population proportion” parameters. Its core idea is: among all possible parameter values, find the one that maximizes the probability of the sample data we actually observed. Below, we use local listed company financial data to compute the profitability ratio of YRD listed companies via MLE, with results shown in 表 5.1.

长三角地区上市公司基本信息筛选完毕。下面合并目标季度的财务数据并计算盈利状态。

The basic information of YRD listed companies has been filtered. Next, we merge the target quarter’s financial data and compute profitability status.

# ========== 第3步:获取目标季度财务数据 ==========

# ========== Step 3: Retrieve Target Quarter Financial Data ==========

# 选取2023年第3季度数据作为分析样本

# Select Q3 2023 data as the analysis sample

target_quarter_string = '2023q3' # 目标分析季度:2023年第三季度

# Target analysis quarter: Q3 2023

financial_statement_quarter_dataframe = financial_statement_dataframe[ # 从财务报表中筛选指定季度

financial_statement_dataframe['quarter'] == target_quarter_string # 筛选目标季度

]

# Filter the target quarter from financial statements

# 将公司基本信息与财务数据按股票代码(order_book_id)内连接合并

# Inner join company basic info with financial data on stock code (order_book_id)

merged_analysis_dataframe = pd.merge(yrd_companies_dataframe, financial_statement_quarter_dataframe, on='order_book_id', how='inner') # 内连接保留双表匹配记录

# Inner join retains only records matching in both tables

# 定义盈利状态:净利润大于0标记为1(盈利),否则为0(亏损)

# Define profitability status: net profit > 0 marked as 1 (profitable), otherwise 0 (loss-making)

merged_analysis_dataframe['is_profitable'] = (merged_analysis_dataframe['net_profit'] > 0).astype(int) # 盈利标志:1=盈利,0=亏损

# Profitability flag: 1 = profitable, 0 = loss-making

# 统计样本总数和盈利企业数

# Count total sample size and number of profitable companies

total_companies_count = len(merged_analysis_dataframe) # 总样本量n

# Total sample size n

profitable_companies_count = merged_analysis_dataframe['is_profitable'].sum() # 盈利企业数k

# Number of profitable companies k长三角地区上市公司盈利数据准备完成。下面通过极大似然估计法求解盈利比例的点估计。

The profitability data for YRD listed companies is now prepared. Next, we solve for the point estimate of the profitability ratio using maximum likelihood estimation.

# ========== 第4步:极大似然估计 ==========

# ========== Step 4: Maximum Likelihood Estimation ==========

# 定义负对数似然函数(因为scipy.optimize.minimize执行最小化,所以取负号)

# Define the negative log-likelihood function (negated because scipy.optimize.minimize performs minimization)

def calculate_negative_log_likelihood(probability_parameter): # 接收候选概率参数p

# Takes a candidate probability parameter p

# 将参数p裁剪到(0,1)开区间内,避免log(0)导致数值错误

# Clip parameter p to the open interval (0,1) to avoid numerical errors from log(0)

probability_parameter = np.clip(probability_parameter, 1e-10, 1-1e-10) # 数值稳定性处理:限制p在极小正数到接近1之间

# Numerical stability: constrain p between a tiny positive number and near 1

# 二项分布对数似然: log L(p) = k*log(p) + (n-k)*log(1-p) + 常数项

# Binomial log-likelihood: log L(p) = k*log(p) + (n-k)*log(1-p) + constant term

# 这里忽略组合数常数项(它不影响最优解的位置)

# The combinatorial constant is omitted here (it does not affect the location of the optimum)

return -(profitable_companies_count * np.log(probability_parameter) + # 负对数似然:k*log(p)

# Negative log-likelihood: k*log(p)

(total_companies_count - profitable_companies_count) * np.log(1-probability_parameter)) # 加上(n-k)*log(1-p)

# Plus (n-k)*log(1-p)

# 使用L-BFGS-B算法在[0.001, 0.999]范围内数值求解最大似然估计

# Use the L-BFGS-B algorithm to numerically solve the MLE within the [0.001, 0.999] range

optimization_result = minimize(calculate_negative_log_likelihood, x0=0.5, bounds=[(0.001, 0.999)]) # 初始值0.5,约束p∈[0.001,0.999]

# Initial value 0.5, constraint p ∈ [0.001, 0.999]

mle_estimated_probability = optimization_result.x[0] # 数值优化得到的MLE估计值

# MLE estimate obtained via numerical optimization

theoretical_probability = profitable_companies_count / total_companies_count # 理论公式: p_hat = k/n

# Theoretical formula: p_hat = k/n基于上述数据准备和MLE参数估计,我们输出估计结果并绘制似然函数曲线,直观展示极大似然估计的原理:

Based on the data preparation and MLE parameter estimation above, we output the estimation results and plot the likelihood function curve to visually demonstrate the principle of maximum likelihood estimation:

# ========== 第5步:输出结果 ==========

# ========== Step 5: Output Results ==========

print(f'数据来源: 本地数据集 (长三角地区上市公司 {target_quarter_string} 财报)') # 标注数据来源

# Print data source annotation

print(f'样本总数: {total_companies_count} 家') # 输出总样本量

# Print total sample size

print(f'盈利家数: {profitable_companies_count} 家') # 输出盈利企业数

# Print number of profitable companies

print(f'MLE估计 (数值优化): {mle_estimated_probability:.6f}') # 数值解

# MLE estimate (numerical optimization)

print(f'MLE估计 (理论公式): {theoretical_probability:.6f}') # 解析解(两者应一致)

# MLE estimate (theoretical formula) — both should be identical

print(f'盈利比例: {theoretical_probability:.2%}') # 以百分比格式输出盈利比例

# Print profitability ratio in percentage format数据来源: 本地数据集 (长三角地区上市公司 2023q3 财报)

样本总数: 1978 家

盈利家数: 1678 家

MLE估计 (数值优化): 0.848332

MLE估计 (理论公式): 0.848332

盈利比例: 84.83%表 5.1 的输出结果揭示了长三角上市公司的盈利全景:在2023年第三季度,1978家样本企业中有1678家实现盈利,MLE估计的盈利比例为 \(\hat{p} = 0.8483\)(约84.83%)。值得注意的是,数值优化法(SciPy的minimize)和理论公式法(\(\hat{p} = k/n\))给出了完全一致的结果(0.848332),这验证了我们在理论推导中证明的结论——对于伯努利试验,MLE的解析解与数值解相同。从经济含义来看,约85%的盈利比例表明长三角制造业上市公司整体盈利健康度较高,但仍有约15%的企业处于亏损状态,这对银行批量授信的风控策略具有重要参考价值。

The output of 表 5.1 reveals the full profitability picture of YRD listed companies: in Q3 2023, out of 1,978 sample firms, 1,678 were profitable, with the MLE-estimated profitability ratio of \(\hat{p} = 0.8483\) (approximately 84.83%). Notably, the numerical optimization method (SciPy’s minimize) and the theoretical formula (\(\hat{p} = k/n\)) yield identical results (0.848332), verifying the conclusion proven in our theoretical derivation—for Bernoulli trials, the analytical and numerical solutions of MLE are the same. From an economic perspective, a profitability ratio of approximately 85% indicates that YRD manufacturing listed companies are in generally healthy profitability condition, yet about 15% remain in a loss-making state, which carries important implications for banks’ risk management strategies in batch credit approvals.

下面绘制似然函数曲线,直观展示MLE的原理。

Below, we plot the likelihood function curve to visually illustrate the principle of MLE.

# ========== 第6步:可视化似然函数 ==========

# ========== Step 6: Visualize the Likelihood Function ==========

# 在[0.5, 1.0]区间内构建100个候选概率值(盈利比例通常较高)

# Construct 100 candidate probability values in the [0.5, 1.0] interval (profitability ratios are typically high)

probability_values_array = np.linspace(0.5, 1.0, 100) # 生成100个等距候选概率值

# Generate 100 equally spaced candidate probability values

# 计算每个候选概率值对应的似然值(取指数还原为似然而非对数似然)

# Compute the likelihood value for each candidate (exponentiate to recover likelihood from log-likelihood)

likelihood_values_list = [np.exp(-calculate_negative_log_likelihood(p)) for p in probability_values_array] # 对每个p计算似然值L(p)

# Compute likelihood L(p) for each candidate p

# 归一化处理:将最大似然值缩放为1,便于绘图比较

# Normalization: scale the maximum likelihood value to 1 for easier visual comparison

normalized_likelihood_values_array = np.array(likelihood_values_list) / np.max(likelihood_values_list) # 归一化:最大值=1

# Normalize: maximum value = 1

# 绘制归一化似然函数曲线

# Plot the normalized likelihood function curve

mle_figure, mle_axes = plt.subplots(figsize=(10, 6)) # 创建10×6英寸画布与坐标轴对象

# Create a 10×6 inch figure and axes object

mle_axes.plot(probability_values_array, normalized_likelihood_values_array, 'b-', linewidth=2.5, label='归一化似然函数') # 绘制蓝色实线似然曲线

# Plot the blue solid likelihood curve

# 用红色虚线标注MLE估计值的位置(似然函数的峰值点)

# Mark the MLE estimate position with a red dashed line (the peak of the likelihood function)

mle_axes.axvline(mle_estimated_probability, color='red', linestyle='--', linewidth=2, label=f'MLE = {mle_estimated_probability:.4f}') # 红色虚线标注MLE估计值位置

# Red dashed line marking the MLE estimate position

mle_axes.set_xlabel('盈利比例 (p)', fontsize=12) # X轴:候选参数值

# X-axis: candidate parameter values

mle_axes.set_ylabel('相对似然值', fontsize=12) # Y轴:归一化似然值

# Y-axis: normalized likelihood values

mle_axes.set_title(f'长三角上市公司盈利比例的MLE估计 ({target_quarter_string})', fontsize=14) # 设置图表标题

# Set the chart title

mle_axes.legend(fontsize=11) # 显示图例

# Display the legend

mle_axes.grid(True, alpha=0.3) # 添加半透明网格线

# Add semi-transparent grid lines

plt.tight_layout() # 自动调整子图间距,防止标签被截断

# Automatically adjust subplot spacing to prevent label clipping

plt.show() # 渲染并显示图形

# Render and display the figure

图 5.1 直观展示了似然函数的核心原理。曲线呈现明显的单峰钟形结构,在 \(p \approx 0.848\) 处达到最大值(归一化似然值为1.0),这正是MLE估计值的位置,由红色虚线标注。曲线的尖锐程度反映了估计的精度——由于样本量较大(\(n = 1978\)),似然函数在峰值附近急剧下降,表明MLE估计的不确定性很小。直观地说,如果曲线在峰值两侧缓慢下降,意味着多个候选参数值的似然函数值都接近最大值,估计就不够精确;而本案例中曲线陡峭的衰减,说明数据强烈”偏好”\(\hat{p} = 0.848\) 这一估计值,其他候选值的可能性迅速降低。

图 5.1 visually demonstrates the core principle of the likelihood function. The curve exhibits a clear unimodal bell-shaped structure, reaching its maximum (normalized likelihood value of 1.0) at \(p \approx 0.848\), which is precisely the location of the MLE estimate, marked by the red dashed line. The sharpness of the curve reflects the precision of the estimate—because the sample size is large (\(n = 1978\)), the likelihood function drops steeply near the peak, indicating very low uncertainty in the MLE estimate. Intuitively, if the curve declined slowly on both sides of the peak, it would mean that multiple candidate parameter values have likelihood values close to the maximum, resulting in an imprecise estimate. In this case, however, the steep decay of the curve indicates that the data strongly “favors” the estimate \(\hat{p} = 0.848\), with the plausibility of other candidate values diminishing rapidly. ## 区间估计 (Interval Estimation) {#sec-interval-estimation}

5.2.3 置信区间的概念与几何解释 (Concept and Geometric Interpretation of Confidence Intervals)

直觉: 点估计(如平均 ROE 为 8.5%)就像是用一支箭去射靶心,虽然瞄得很准,但射中靶心(精确等于真值)的概率几乎为0。 置信区间就像是撒一张网。我们无法保证网的中心就在靶心,但我们可以保证这张网的大小足以在 95% 的投掷中”网住”靶心。

Intuition: A point estimate (e.g., an average ROE of 8.5%) is like shooting a single arrow at a bullseye — although well-aimed, the probability of hitting the exact center (equaling the true value precisely) is virtually zero. A confidence interval is like casting a net. We cannot guarantee that the center of the net lies on the bullseye, but we can ensure the net is large enough to “capture” the bullseye in 95% of the throws.

数学推导 (Normal Case): 假设样本均值 \(\bar{X} \sim N(\mu, \sigma^2/n)\)。如果不确定性标准化,我们得到枢轴量 (Pivotal Quantity) \(Z\): \[ Z = \frac{\bar{X} - \mu}{\sigma/\sqrt{n}} \sim N(0, 1) \]

Mathematical Derivation (Normal Case): Assume the sample mean \(\bar{X} \sim N(\mu, \sigma^2/n)\). If we standardize the uncertainty, we obtain the pivotal quantity \(Z\): \[ Z = \frac{\bar{X} - \mu}{\sigma/\sqrt{n}} \sim N(0, 1) \]

我们知道标准正态分布有 95% 的概率落在 \([-1.96, 1.96]\) 之间: \[ P\left(-1.96 \le \frac{\bar{X} - \mu}{\sigma/\sqrt{n}} \le 1.96\right) = 0.95 \]

We know that the standard normal distribution has a 95% probability of falling within \([-1.96, 1.96]\): \[ P\left(-1.96 \le \frac{\bar{X} - \mu}{\sigma/\sqrt{n}} \le 1.96\right) = 0.95 \]

现在,我们通过代数变换,将 \(\mu\) 留在不等式中间: \[ P\left(\bar{X} - 1.96\frac{\sigma}{\sqrt{n}} \le \mu \le \bar{X} + 1.96\frac{\sigma}{\sqrt{n}}\right) = 0.95 \]

Now, through algebraic manipulation, we isolate \(\mu\) in the middle of the inequality: \[ P\left(\bar{X} - 1.96\frac{\sigma}{\sqrt{n}} \le \mu \le \bar{X} + 1.96\frac{\sigma}{\sqrt{n}}\right) = 0.95 \]

这就构成了 95% 的置信区间:\([\bar{X} - 1.96 SE, \bar{X} + 1.96 SE]\)。

This constitutes the 95% confidence interval: \([\bar{X} - 1.96 SE, \bar{X} + 1.96 SE]\).

概念陷阱:置信区间的解释

Conceptual Pitfall: Interpreting Confidence Intervals

❌ 错误:“也就是说明总体均值 \(\mu\) 有 95% 的概率落在这个区间内。” ✅ 正确:“该区间构建方法(Method)在长期重复使用中,有 95% 的区间会覆盖真实的 \(\mu\)。”

❌ Incorrect: “This means the population mean \(\mu\) has a 95% probability of falling within this interval.” ✅ Correct: “This interval construction method, when used repeatedly over the long run, will produce intervals that cover the true \(\mu\) 95% of the time.”

解释:在频率学派框架下,参数 \(\mu\) 是一个固定的常数,它要么在区间里,要么不在(概率为 1 或 0)。随机的是区间本身(因为样本 \(\bar{X}\) 是随机的)。

Explanation: Under the frequentist framework, the parameter \(\mu\) is a fixed constant — it is either inside the interval or not (with probability 1 or 0). What is random is the interval itself (because the sample mean \(\bar{X}\) is random).

想象你扔圈圈套娃娃。娃娃(\(\mu\))不动,你的圈圈(区间)是随机落下的。95% 置信水平衡量的是你扔圈圈的技术(方法的可靠性),而不是某个特定圈圈套中娃娃的概率。

Imagine tossing rings at a doll. The doll (\(\mu\)) stays put while your ring (the interval) lands randomly. The 95% confidence level measures your ring-tossing skill (the reliability of the method), not the probability that any particular ring has caught the doll.

5.2.4 均值的置信区间 (Confidence Interval for the Mean)

5.2.4.1 已知 \(\sigma\) 时 (When \(\sigma\) Is Known)

\(\mu\) 的 \(100(1-\alpha)\%\) 置信区间如 式 5.3 所示:

The \(100(1-\alpha)\%\) confidence interval for \(\mu\) is given by 式 5.3:

\[ \bar{X} \pm z_{\alpha/2} \frac{\sigma}{\sqrt{n}} \tag{5.3}\]

其中 \(z_{\alpha/2}\) 是标准正态分布的 \(\alpha/2\) 上侧分位数。

where \(z_{\alpha/2}\) is the upper \(\alpha/2\) quantile of the standard normal distribution.

5.2.4.2 未知 \(\sigma\) 时 (When \(\sigma\) Is Unknown)

使用样本标准差 \(S\) 替代 \(\sigma\),置信区间如 式 5.4 所示:

When the sample standard deviation \(S\) is used in place of \(\sigma\), the confidence interval is given by 式 5.4:

\[ \bar{X} \pm t_{\alpha/2, n-1} \frac{S}{\sqrt{n}} \tag{5.4}\]

其中 \(t_{\alpha/2, n-1}\) 是自由度为 \(n-1\) 的t分布的 \(\alpha/2\) 上侧分位数。

where \(t_{\alpha/2, n-1}\) is the upper \(\alpha/2\) quantile of the \(t\)-distribution with \(n-1\) degrees of freedom.

5.2.4.3 案例:平均消费支出估计 (Case Study: Estimating Average ROE)

什么是ROE的区间估计?

What Is an Interval Estimate of ROE?

净资产收益率(ROE)是衡量企业利用股东权益创造利润能力的核心指标,被巴菲特等价值投资者视为最重要的财务指标之一。当我们想了解「长三角电子行业上市公司的平均盈利能力如何」时,仅依靠一个样本均值(点估计)是不够的,因为它无法告诉我们这个估计值的精确度。

Return on Equity (ROE) is a core metric measuring a firm’s ability to generate profit from shareholders’ equity, regarded by value investors such as Warren Buffett as one of the most important financial indicators. When we ask “what is the average profitability of listed electronics companies in the Yangtze River Delta?”, relying on a single sample mean (point estimate) is insufficient, because it tells us nothing about the precision of that estimate.

置信区间为我们提供了一个「可信的范围」:它不仅给出平均ROE的估计值,还告诉我们在给定置信水平下,真实的总体均值最可能落在什么范围内。这对于行业对标分析和投资决策具有重要的实际意义。下面我们使用本地财务数据,构建长三角电子行业上市公司平均ROE的置信区间,结果如 表 5.2 所示。

A confidence interval provides a “credible range”: it not only gives the estimated average ROE but also tells us, at a given confidence level, within what range the true population mean is most likely to fall. This is of great practical significance for industry benchmarking and investment decisions. Below, we use local financial data to construct a confidence interval for the average ROE of listed electronics companies in the Yangtze River Delta, with results shown in 表 5.2.

import numpy as np # 导入数值计算库

# Import the numerical computation library

import pandas as pd # 导入数据处理库

# Import the data processing library

from scipy import stats # 导入统计分布与检验模块

# Import the statistical distributions and hypothesis testing module

from pathlib import Path # 导入路径处理模块,跨平台兼容

# Import the path handling module for cross-platform compatibility

# ---------- 加载本地数据 ----------

# ---------- Load local data ----------

import platform # 导入平台检测模块,用于判断操作系统

# Import the platform detection module to identify the operating system

if platform.system() == 'Windows': # Windows系统使用本地路径

# Use the local path for Windows systems

data_directory_path = Path('C:/qiufei/data/stock') # Windows本地数据路径

# Windows local data path

else: # Linux服务器环境

# Linux server environment

data_directory_path = Path('/home/ubuntu/r2_data_mount/qiufei/data/stock') # Linux本地数据路径

# Linux local data path

basic_info_dataframe = pd.read_hdf(data_directory_path / 'stock_basic_data.h5') # 加载公司基本信息

# Load company basic information

financial_statement_dataframe = pd.read_hdf(data_directory_path / 'financial_statement.h5') # 加载财务报表

# Load financial statements

# ========== 第1步:数据准备——筛选长三角电子行业企业 ==========

# ========== Step 1: Data preparation — filter YRD electronics companies ==========

yrd_provinces_list = ['上海市', '江苏省', '浙江省', '安徽省'] # 长三角四省市

# The four YRD provinces/municipalities

target_industry_name = '计算机、通信和其他电子设备制造业' # 国统局行业分类代码对应的电子行业

# NBS industry classification code for the electronics sector

target_quarter_string = '2023q3' # 分析的目标季度

# Target quarter for analysis

# 构建布尔掩码进行精确筛选(本地数据使用国统局行业分类标准)

# Construct boolean masks for precise filtering (local data uses NBS industry classification)

region_mask = basic_info_dataframe['province'].isin(yrd_provinces_list) # 地区筛选:省份在长三角列表中

# Region filter: province is in the YRD list

industry_mask = basic_info_dataframe['industry_name'] == target_industry_name # 行业筛选:精确匹配行业名称

# Industry filter: exact match of industry name

target_companies_dataframe = basic_info_dataframe[region_mask & industry_mask] # 取交集:同时满足地区和行业条件

# Intersection: companies satisfying both region and industry conditions长三角电子行业目标企业筛选完毕。下面获取目标季度财务数据,合并后计算ROE并清洗异常值。

Filtering of target YRD electronics companies is complete. Next, we retrieve financial data for the target quarter, merge the datasets, compute ROE, and clean outliers.

# 获取目标季度的财务报表数据

# Retrieve financial statement data for the target quarter

financial_statement_quarter_dataframe = financial_statement_dataframe[ # 筛选指定季度财务数据

# Filter financial data for the specified quarter

financial_statement_dataframe['quarter'] == target_quarter_string # 筛选目标季度

# Select the target quarter

].copy() # 使用copy()避免SettingWithCopyWarning

# Use copy() to avoid SettingWithCopyWarning

# 将公司信息与财务数据内连接合并(只保留同时有基本信息和财务数据的公司)

# Inner-join company information with financial data (keep only companies with both)

merged_analysis_dataframe = pd.merge(target_companies_dataframe, financial_statement_quarter_dataframe, on='order_book_id', how='inner') # 按股票代码内连接合并

# Inner join on stock code

# ========== 第2步:计算ROE(净资产收益率) ==========

# ========== Step 2: Compute ROE (Return on Equity) ==========

# ROE = 净利润 / 股东权益,衡量公司利用股东投入资本的获利效率

# ROE = Net Profit / Total Equity, measuring a firm's efficiency in generating profit from shareholders' capital

merged_analysis_dataframe['roe'] = merged_analysis_dataframe['net_profit'] / merged_analysis_dataframe['total_equity'] # 计算净资产收益率

# Compute Return on Equity

# ========== 第3步:数据清洗——处理异常值 ==========

# ========== Step 3: Data cleaning — handle outliers ==========

# 将无穷大值替换为NaN,并删除ROE为缺失值的行(分母为0时会产生inf)

# Replace infinity with NaN and drop rows where ROE is missing (inf arises when the denominator is zero)

merged_analysis_dataframe = merged_analysis_dataframe.replace([np.inf, -np.inf], np.nan).dropna(subset=['roe']) # 清洗异常值

# Clean outliers

# 去除极端离群值:仅保留ROE在(-50%, 50%)范围内的观测(壳股或ST公司的ROE可能极端异常)

# Remove extreme outliers: keep only observations with ROE in (-50%, 50%) (shell companies or ST firms may have extreme ROE)

clean_analysis_dataframe = merged_analysis_dataframe[ # 筛选ROE合理范围内的样本

# Filter samples within a reasonable ROE range

(merged_analysis_dataframe['roe'] > -0.5) & (merged_analysis_dataframe['roe'] < 0.5) # ROE取值范围约束

# ROE range constraint

]

roe_sample_series = clean_analysis_dataframe['roe'] # 提取清洗后的ROE序列

# Extract the cleaned ROE series

sample_size_n = len(roe_sample_series) # 有效样本量

# Effective sample size完成数据筛选和清洗后,我们基于清洁样本计算描述性统计量,并构建不同置信水平下的区间估计:

After completing data filtering and cleaning, we compute descriptive statistics from the clean sample and construct interval estimates at different confidence levels:

# ========== 第4步:计算描述性统计量 ==========

# ========== Step 4: Compute descriptive statistics ==========

sample_mean_roe = roe_sample_series.mean() # 样本均值:ROE的点估计

# Sample mean: point estimate of ROE

sample_standard_deviation = roe_sample_series.std() # 样本标准差:衡量ROE的离散程度

# Sample standard deviation: measures the dispersion of ROE

standard_error = sample_standard_deviation / np.sqrt(sample_size_n) # 标准误:均值估计的不确定性

# Standard error: uncertainty of the mean estimate

# ========== 第5步:构建不同置信水平的置信区间 ==========

# ========== Step 5: Construct confidence intervals at different confidence levels ==========

confidence_levels_list = [0.90, 0.95, 0.99] # 三个常用置信水平

# Three commonly used confidence levels

confidence_interval_results_list = [] # 存储结果的列表

# List to store results

for current_confidence_level in confidence_levels_list: # 遍历三个置信水平

# Iterate over the three confidence levels

significance_level_alpha = 1 - current_confidence_level # 显著性水平α

# Significance level α

t_critical_value = stats.t.ppf(1 - significance_level_alpha/2, df=sample_size_n-1) # t分布临界值(自由度=n-1)

# t-distribution critical value (degrees of freedom = n-1)

margin_of_error = t_critical_value * standard_error # 边际误差 = t临界值 × 标准误

# Margin of error = t critical value × standard error

confidence_interval_lower_bound = sample_mean_roe - margin_of_error # 置信区间下界

# Lower bound of the confidence interval

confidence_interval_upper_bound = sample_mean_roe + margin_of_error # 置信区间上界

# Upper bound of the confidence interval

# 将当前置信水平的结果存入字典

# Store the results for the current confidence level in a dictionary

confidence_interval_results_list.append({ # 将当前置信水平的结果存入字典

# Append the current confidence level results to the list

'置信水平': f'{current_confidence_level:.0%}', # 格式化为百分比

# Format as percentage

't临界值': f'{t_critical_value:.3f}', # t分布临界值

# t-distribution critical value

'标准误': f'{standard_error:.4f}', # 均值估计的标准误

# Standard error of the mean estimate

'边际误差': f'{margin_of_error:.4f}', # 置信区间的半宽

# Half-width of the confidence interval

'置信区间': f'[{confidence_interval_lower_bound:.2%}, {confidence_interval_upper_bound:.2%}]', # 区间范围

# Interval range

'区间宽度': f'{confidence_interval_upper_bound - confidence_interval_lower_bound:.2%}' # 区间总宽度

# Total width of the interval

})

# 将结果列表转换为DataFrame,方便展示

# Convert the result list to a DataFrame for display

results_summary_dataframe = pd.DataFrame(confidence_interval_results_list) # 构建置信区间对比表

# Build the confidence interval comparison table三种置信水平的区间计算完成。下面整理并输出置信区间对比表及经济学解释。

The interval calculations for all three confidence levels are complete. Below we compile and output the confidence interval comparison table along with the economic interpretation.

# ========== 第6步:输出分析结论 ==========

# ========== Step 6: Output the analytical conclusions ==========

print(f'分析对象: 长三角地区 {target_industry_name} 行业上市公司 ({target_quarter_string})') # 打印分析范围

# Print the scope of the analysis

print(f'有效样本量: {sample_size_n} 家') # 打印有效样本量

# Print the effective sample size

print(f'样本平均ROE: {sample_mean_roe:.2%}') # 点估计结果

# Point estimate result

print(f'样本标准差: {sample_standard_deviation:.2%}') # 离散程度

# Degree of dispersion

print(f'\n不同置信水平的区间估计:') # 分隔标题

# Section heading

print(results_summary_dataframe) # 三个置信水平的对比表

# Comparison table for the three confidence levels

# 对95%置信区间的经济学解释

# Economic interpretation of the 95% confidence interval

print(f'\n解释:') # 经济学解释部分

# Interpretation section

print(f'我们有95%的把握认为,长三角电子行业上市公司的平均ROE落在 [{sample_mean_roe - stats.t.ppf(0.975, sample_size_n-1)*standard_error:.2%}, {sample_mean_roe + stats.t.ppf(0.975, sample_size_n-1)*standard_error:.2%}] 之间。') # 输出95%置信区间的业务含义

# Output the business implication of the 95% confidence interval分析对象: 长三角地区 计算机、通信和其他电子设备制造业 行业上市公司 (2023q3)

有效样本量: 190 家

样本平均ROE: 2.10%

样本标准差: 7.01%

不同置信水平的区间估计:

置信水平 t临界值 标准误 边际误差 置信区间 区间宽度

0 90% 1.653 0.0051 0.0084 [1.26%, 2.94%] 1.68%

1 95% 1.973 0.0051 0.0100 [1.10%, 3.11%] 2.01%

2 99% 2.602 0.0051 0.0132 [0.78%, 3.43%] 2.65%

解释:

我们有95%的把握认为,长三角电子行业上市公司的平均ROE落在 [1.10%, 3.11%] 之间。表 5.2 的运行结果展示了长三角电子行业190家上市公司在2023年第三季度的ROE区间估计。样本平均ROE仅为2.10%,标准差高达7.01%,说明行业内盈利水平参差不齐。三组置信区间的对比清晰体现了”置信水平-区间宽度”的权衡关系:90%置信区间[1.26%, 2.94%]宽1.68个百分点,95%置信区间[1.10%, 3.11%]宽2.01个百分点,而99%置信区间[0.78%, 3.43%]宽2.65个百分点。置信水平每提高一档,区间宽度几乎增加了30%以上。值得关注的是,即使在最严格的99%置信水平下,区间下界(0.78%)仍然大于零,这意味着我们有非常强的统计证据表明该行业整体处于盈利状态。但2.10%的平均ROE远低于一般8%-10%的行业基准,反映了2023年第三季度电子行业面临的景气下行压力。

The results of 表 5.2 show the ROE interval estimates for 190 listed electronics companies in the Yangtze River Delta in Q3 2023. The sample mean ROE is only 2.10% with a standard deviation as high as 7.01%, indicating pronounced variation in profitability across the industry. The comparison of the three confidence intervals clearly illustrates the trade-off between confidence level and interval width: the 90% CI [1.26%, 2.94%] spans 1.68 percentage points, the 95% CI [1.10%, 3.11%] spans 2.01 percentage points, and the 99% CI [0.78%, 3.43%] spans 2.65 percentage points. With each higher confidence level, the interval width increases by approximately 30% or more. Notably, even at the most stringent 99% confidence level, the lower bound (0.78%) remains above zero, providing very strong statistical evidence that the industry is profitable overall. However, the 2.10% mean ROE is far below the typical 8%–10% industry benchmark, reflecting the cyclical downturn pressures facing the electronics sector in Q3 2023.

大样本条件下(通常要求 \(np \geq 10\) 且 \(n(1-p) \geq 10\)),比例 \(p\) 的置信区间如 式 5.5 所示:

Under large-sample conditions (typically requiring \(np \geq 10\) and \(n(1-p) \geq 10\)), the confidence interval for proportion \(p\) is given by 式 5.5:

\[ \hat{p} \pm z_{\alpha/2} \sqrt{\frac{\hat{p}(1-\hat{p})}{n}} \tag{5.5}\]

5.3 从理论到实践:苦活累活 (From Theory to Practice: The “Dirty Work”)

假设检验在教科书里是神圣的科学方法,但在现实中,它常被滥用。如果不了解这些陷阱,你很容易被”统计显著”的研究结果误导。

Hypothesis testing is presented in textbooks as a rigorous scientific method, but in practice it is frequently misused. Without awareness of these pitfalls, you can easily be misled by “statistically significant” research findings.

5.3.1 1. P值黑客 (P-Hacking)

既然 P < 0.05 是发表论文或通过审批的”金标准”,那么研究者就有巨大的动力去”凑”出一个小于0.05的P值。

Since P < 0.05 is the “gold standard” for publishing papers or passing reviews, researchers have enormous incentive to engineer a p-value below 0.05.

方法很简单:

- 尝试几十种不同的变量组合。

- 尝试增加或减少样本量。

- 尝试剔除几个”离群点”。

The methods are straightforward:

- Try dozens of different variable combinations.

- Try increasing or decreasing the sample size.

- Try removing a few “outliers.”

只要你尝试的次数足够多,总能碰巧找到一个 P < 0.05 的结果,即使实际上没有任何效应。

As long as you try enough times, you will inevitably stumble upon a P < 0.05 result by chance, even when no real effect exists.

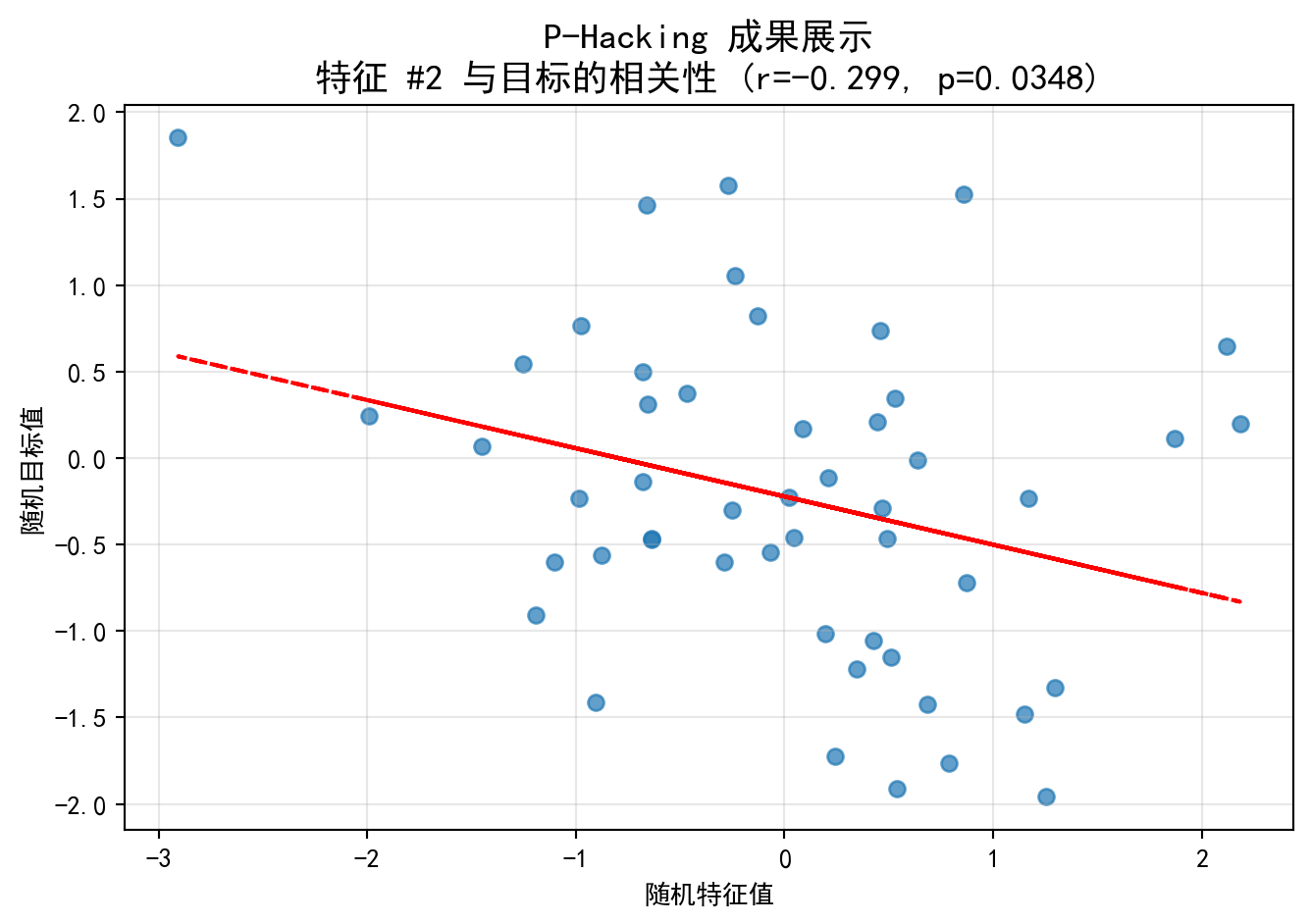

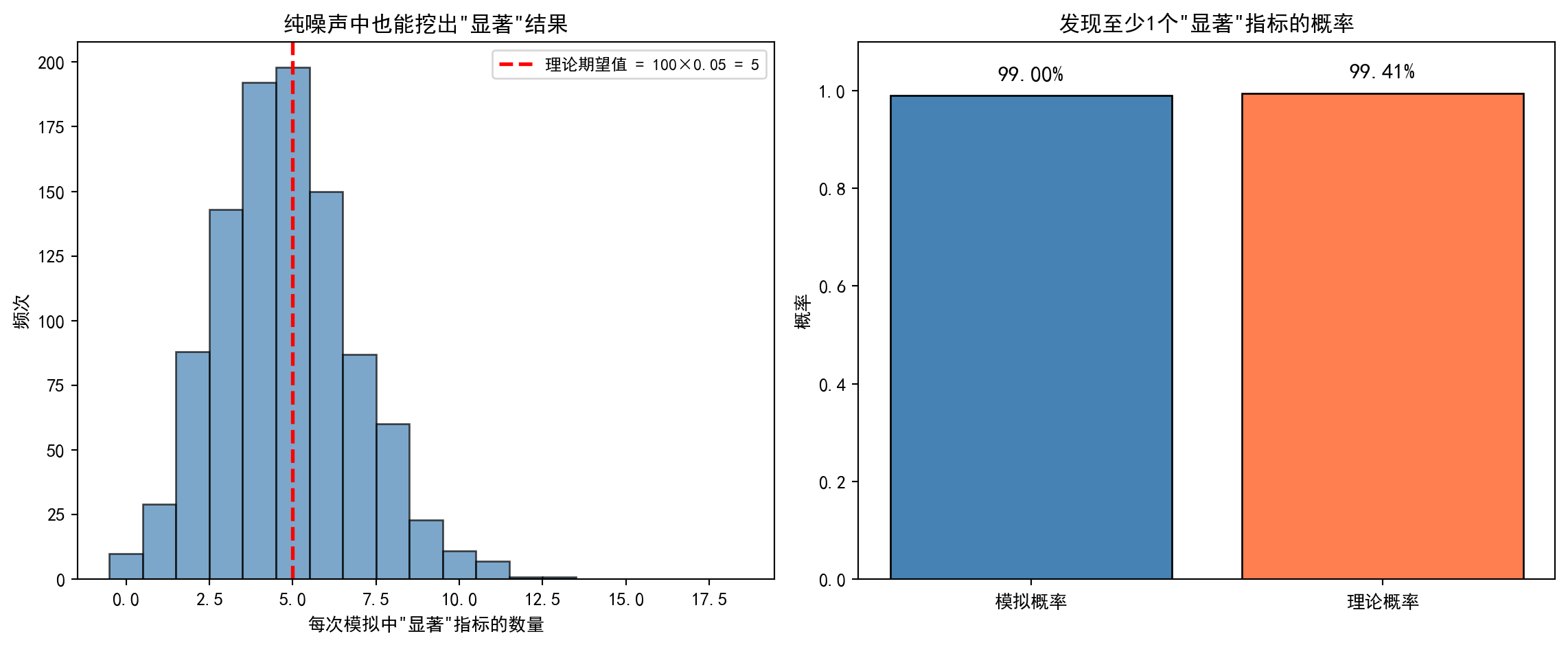

让我们模拟一下这个过程:即便全是随机噪声,只要尝试次数够多,也能找到”显著”结果。如 图 5.2 所示,在完全随机的数据中,P-hacking 依然能”挖掘”出统计显著的相关性。

Let us simulate this process: even with nothing but random noise, a sufficient number of attempts will yield “significant” results. As shown in 图 5.2, P-hacking can still “unearth” statistically significant correlations in entirely random data.

# ========== 导入所需库 ==========

# ========== Import required libraries ==========

import numpy as np # 数值计算库,用于生成随机数和数组操作

# Numerical computation library for random number generation and array operations

import pandas as pd # 数据分析库(此处备用)

# Data analysis library (reserved for potential use)

from scipy import stats # 科学计算统计模块,提供pearsonr相关性检验

# Statistical module from SciPy, providing the Pearson correlation test

import matplotlib.pyplot as plt # 绘图库,用于可视化散点图

# Plotting library for scatter plot visualization

# ========== 第1步:设置中文显示环境 ==========

# ========== Step 1: Set up Chinese display environment ==========

plt.rcParams['font.sans-serif'] = ['SimHei'] # 设置中文字体为黑体

# Set the Chinese font to SimHei (bold)

plt.rcParams['axes.unicode_minus'] = False # 解决负号显示为方块的问题

# Fix the issue where minus signs display as squares

# ========== 第2步:设定模拟参数 ==========

# ========== Step 2: Set simulation parameters ==========

np.random.seed(42) # 设置随机种子,确保结果可复现

# Set the random seed for reproducibility

samples_count = 50 # 每个特征的样本量(模拟50个观测值)

# Sample size per feature (simulate 50 observations)

features_count = 100 # 尝试100个不同的候选特征(模拟"数据挖掘"行为)

# Number of candidate features to try (simulating "data mining" behavior)

# ========== 第3步:构造完全随机的数据(无任何真实效应) ==========

# ========== Step 3: Generate entirely random data (no real effects) ==========

# simulated_target_array 是我们想预测的目标变量(纯粹的噪声)

# simulated_target_array is the target variable we want to predict (pure noise)

simulated_target_array = np.random.normal(0, 1, samples_count) # 从标准正态N(0,1)生成50个随机值

# Generate 50 random values from the standard normal N(0,1)

# simulated_features_matrix 是100个候选特征(同样全是噪声,与目标无关联)

# simulated_features_matrix contains 100 candidate features (also pure noise, unrelated to the target)

simulated_features_matrix = np.random.normal(0, 1, (samples_count, features_count)) # 50行×100列随机矩阵

# 50-row × 100-column random matrix模拟数据生成完毕(目标变量与100个特征均为纯噪声)。下面逐一检验相关性并统计误报数量。

Simulated data generation is complete (both the target variable and all 100 features are pure noise). Next, we test each feature for correlation and count the false positives.

# ========== 第4步:对100个特征逐一进行Pearson相关性检验 ==========

# ========== Step 4: Perform Pearson correlation tests on each of the 100 features ==========

significant_features_count = 0 # 初始化:记录通过显著性检验的特征数量

# Initialize: count of features passing the significance test

significant_feature_indices = [] # 初始化:记录通过检验的特征索引号

# Initialize: indices of features passing the test

calculated_p_values_list = [] # 初始化:存储每个特征的p值

# Initialize: store the p-value for each feature

for feature_index in range(features_count): # 遍历100个特征,逐一检验

# Iterate over 100 features, testing each one

# 计算第feature_index个特征与目标变量的Pearson相关系数及p值

# Compute the Pearson correlation coefficient and p-value between the feature and the target

correlation_coefficient, p_value_result = stats.pearsonr( # 计算皮尔逊相关系数及p值

# Compute the Pearson correlation coefficient and p-value

simulated_features_matrix[:, feature_index], # 取出第feature_index列特征数据

# Extract the feature_index-th column of feature data

simulated_target_array # 目标变量

# Target variable

)

calculated_p_values_list.append(p_value_result) # 将p值存入列表

# Append the p-value to the list

if p_value_result < 0.05: # 如果p值小于0.05(传统显著性阈值)

# If the p-value is less than 0.05 (the conventional significance threshold)

significant_features_count += 1 # 计数器加1

# Increment the counter

significant_feature_indices.append(feature_index) # 记录该特征的索引

# Record the index of this feature

# ========== 第5步:输出检验结果汇总 ==========

# ========== Step 5: Output the test results summary ==========

print(f'尝试特征数量: {features_count}') # 打印测试的特征总数

# Print the total number of features tested

print(f'真实效应: 无 (全是随机噪声)') # 强调数据中无真实效应

# Emphasize that there is no real effect in the data

print(f'找到的 P < 0.05 的显著特征数: {significant_features_count} 个') # 打印通过检验的误报数

# Print the number of false positives passing the test

print(f'预期误报数 (Type I Error): {features_count * 0.05} 个') # 理论误报数 = 100 × 5% = 5

# Theoretical number of false positives = 100 × 5% = 5尝试特征数量: 100

真实效应: 无 (全是随机噪声)

找到的 P < 0.05 的显著特征数: 9 个

预期误报数 (Type I Error): 5.0 个P值黑客检验结果已输出。运行结果显示,在100个完全由随机噪声生成的特征中,竟有9个特征被检验判定为在5%显著性水平下”显著”——而真实效应为零!理论上,在5%的显著性水平下检验100个无效特征,预期误报数为 \(100 \times 0.05 = 5\) 个。本次模拟的9个”发现”略高于理论预期,但完全在合理波动范围内(二项分布 \(B(100, 0.05)\) 的标准差约为2.2)。这9个”显著”结果中,没有一个是真实效应,它们全部是第一类错误(False Positive)。这一结果有力说明了:只要尝试的次数足够多,即使数据中不存在任何真实信号,也几乎必然能挖掘出”统计显著”的结果。 下面可视化一个”显著”特征的散点图,直观展示数据挖掘产生的虚假相关性。

The P-hacking test results have been output. The results show that among 100 features generated entirely from random noise, 9 features were deemed “significant” at the 5% level — yet the true effect is zero! In theory, testing 100 null features at the 5% significance level should yield an expected \(100 \times 0.05 = 5\) false positives. The 9 “discoveries” in this simulation are slightly above the theoretical expectation but well within reasonable fluctuation (the standard deviation of a binomial \(B(100, 0.05)\) is approximately 2.2). Not a single one of these 9 “significant” results reflects a true effect — they are all Type I errors (false positives). This powerfully demonstrates that as long as enough tests are conducted, it is virtually certain that “statistically significant” results will be mined out of data even when no real signal exists. Below, we visualize the scatter plot of one such “significant” feature to intuitively illustrate the spurious correlation produced by data mining.

# ========== 第6步:可视化其中一个"显著"特征的散点图 ==========

# ========== Step 6: Visualize the scatter plot of one "significant" feature ==========

if significant_features_count > 0: # 如果存在至少一个"显著"特征

# If at least one "significant" feature exists

first_significant_index = significant_feature_indices[0] # 取第一个通过检验的特征索引

# Take the index of the first feature that passed the test

best_performing_feature = simulated_features_matrix[:, first_significant_index] # 提取该特征列数据

# Extract the data for that feature column

# 重新计算该特征与目标的相关系数和p值(用于图表标注)

# Recalculate the correlation coefficient and p-value for chart annotation

best_correlation, best_p_value = stats.pearsonr(best_performing_feature, simulated_target_array) # 重新计算相关系数和p值供图表标注

# Recalculate the correlation coefficient and p-value for chart annotation

plt.figure(figsize=(8, 5)) # 创建8×5英寸的画布

# Create an 8×5 inch canvas

plt.scatter(best_performing_feature, simulated_target_array, alpha=0.7) # 绘制散点图,透明度0.7

# Draw a scatter plot with 0.7 opacity

# 用一次多项式拟合画回归线(展示虚假的"相关性")

# Fit a first-degree polynomial to draw a regression line (showing the spurious "correlation")

slope_estimate, intercept_estimate = np.polyfit(best_performing_feature, simulated_target_array, 1) # 最小二乘拟合斜率和截距

# Least-squares fit for slope and intercept

plt.plot(best_performing_feature, # 绘制回归拟合线

# Plot the regression line

slope_estimate * best_performing_feature + intercept_estimate, # 拟合直线 y = slope*x + intercept

# Fitted line y = slope*x + intercept

color='red', linestyle='--') # 红色虚线表示拟合线

# Red dashed line for the fitted line

# 设置图表标题(包含相关系数r和p值,展示"挖掘"出的虚假关联)

# Set the chart title (including correlation r and p-value, showing the "mined" spurious association)

plt.title(f'P-Hacking 成果展示\n特征 #{first_significant_index} 与目标的相关性 (r={best_correlation:.3f}, p={best_p_value:.4f})', fontsize=14)

plt.xlabel('随机特征值') # x轴标签

# x-axis label

plt.ylabel('随机目标值') # y轴标签

# y-axis label

plt.grid(True, alpha=0.3) # 添加半透明网格线

# Add semi-transparent grid lines

plt.show() # 显示图形

# Display the figure

图 5.2 展示了在100个完全随机的特征中”精心挑选”出的一个”最佳”特征与目标变量的散点图。图中散点呈现出毫无规律的云状分布,但红色虚线的回归线却暗示了某种微弱的线性趋势。图表标题中的相关系数虽然不大(典型值在 \(|r| \approx 0.1\)–\(0.2\) 之间),但由于100个观测值的样本量,p值恰好低于0.05。这正是P值黑客的本质——在随机噪声中通过大量尝试”碰运气”式地发现虚假关联。如果一个量化基金将这个”发现”用于构建交易策略,其在样本外的表现几乎必然会回归至零。

图 5.2 presents a scatter plot of the “cherry-picked” best feature from among 100 entirely random features against the target variable. The scatter points exhibit a patternless cloud-like distribution, yet the red dashed regression line hints at a faint linear trend. Although the correlation coefficient in the chart title is small (typically \(|r| \approx 0.1\)–\(0.2\)), the p-value happens to fall below 0.05 thanks to the sample of 100 observations. This is the essence of P-hacking — discovering spurious associations through sheer trial-and-error in random noise. If a quantitative fund were to use this “discovery” to build a trading strategy, its out-of-sample performance would almost certainly revert to zero.

警示:当你看到一篇研究报告说”我们在分析了几百个财务指标后,发现指标X与股票超额收益有显著相关性”,请务必保持怀疑。这可能只是统计学上的”撞大运”——在足够多的指标中总会意外发现某个”显著”的结果。

Warning: When you see a research report claiming “after analyzing hundreds of financial indicators, we found that indicator X has a significant correlation with stock excess returns,” remain skeptical. This may simply be a statistical fluke — among enough indicators, some “significant” result will inevitably be found by chance.

5.3.2 2. 抽屉问题 (The File Drawer Problem)

为什么我们看到的科研论文大多是”成功”的(结果显著)?

- 因为那些”失败”的实验(P > 0.05)都被扔进抽屉里了,没人发表。

- 幸存者偏差:我们只看到了通过了显著性检验的幸存者,从而高估了效应的普遍性。

Why do most published scientific papers report “successful” (statistically significant) results?

- Because the “failed” experiments (P > 0.05) were tossed into file drawers and never published.

- Survivorship bias: We only see the survivors that passed the significance test, thus overestimating the prevalence of the effect. ## 假设检验 (Hypothesis Testing) {#sec-hypothesis-testing}

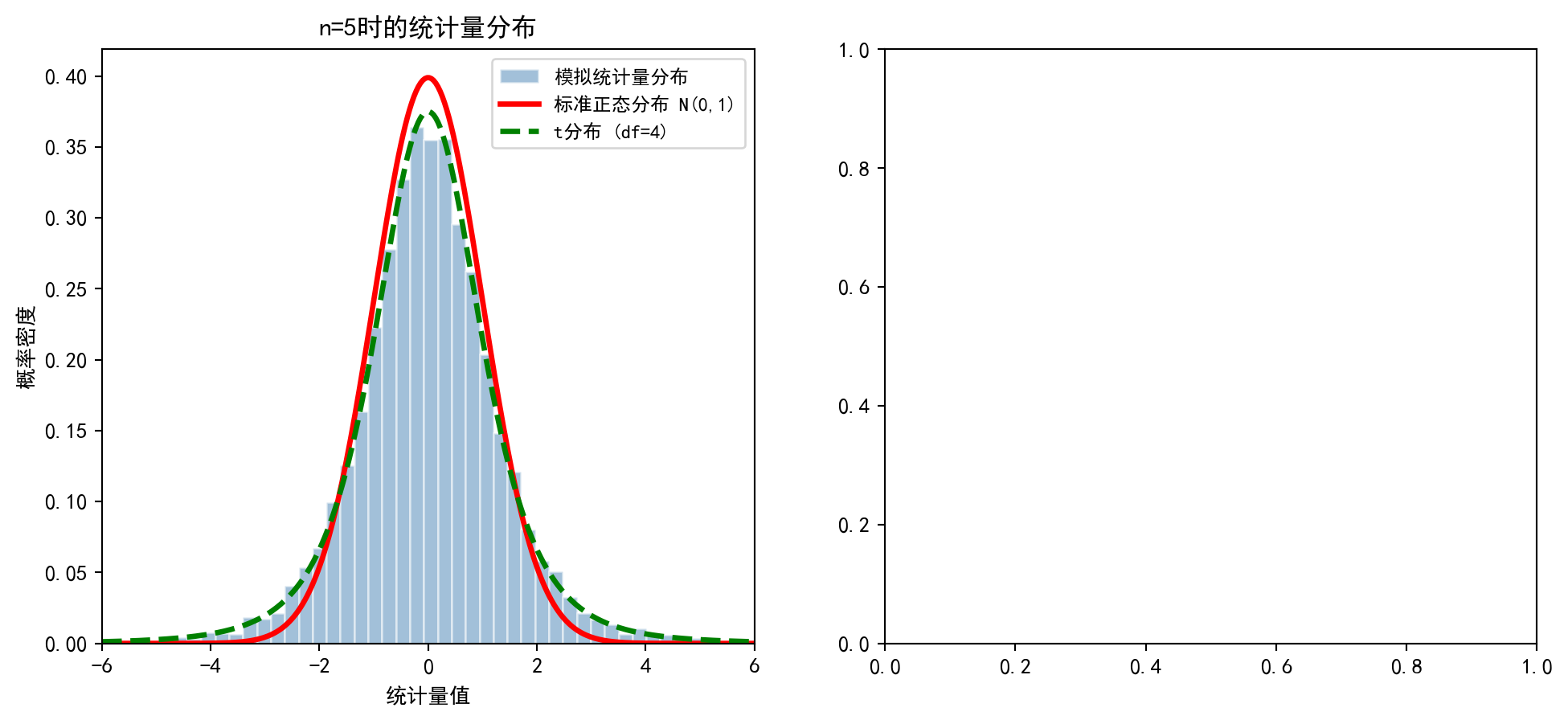

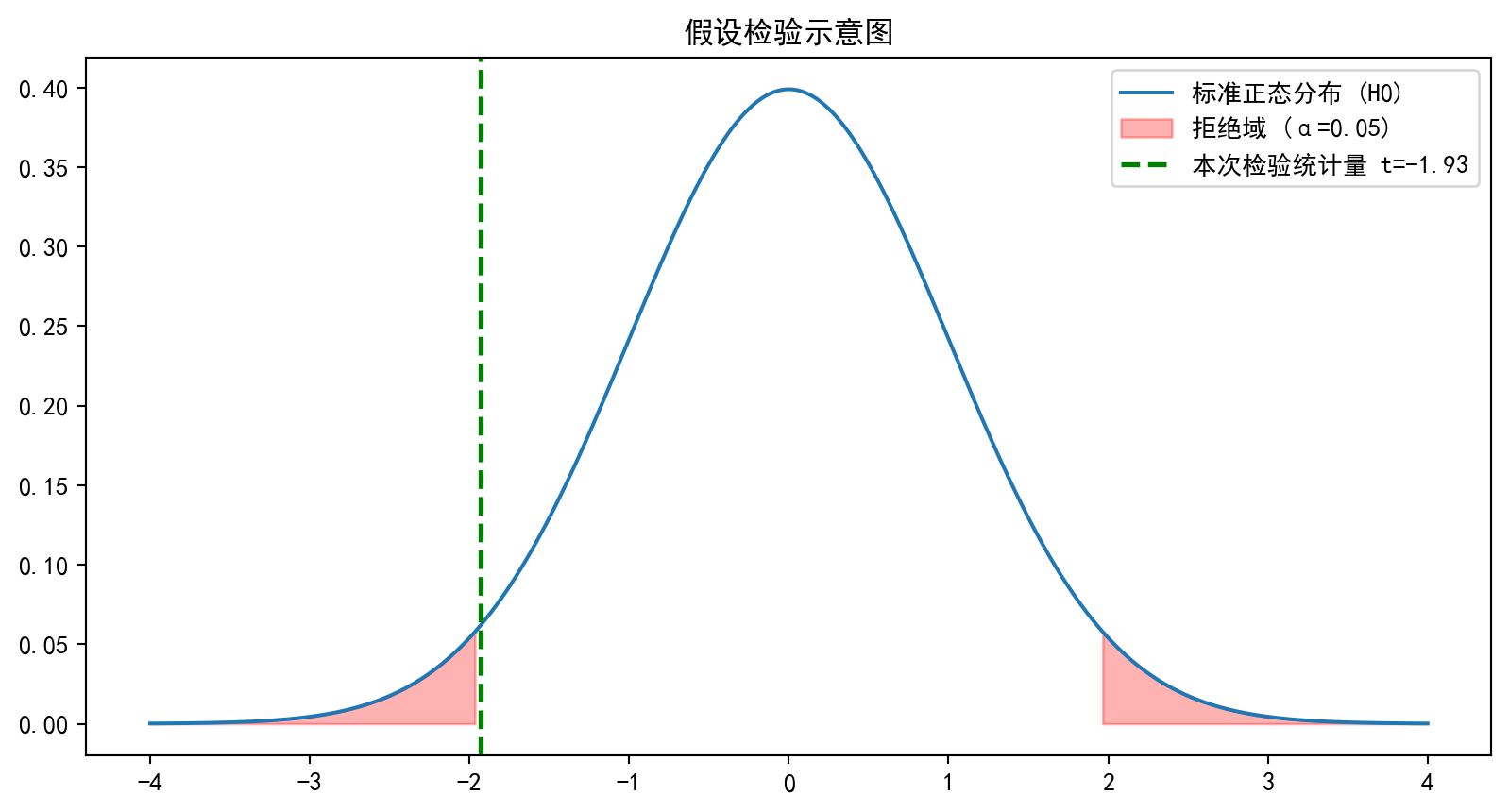

5.3.3 基本概念:惊讶度量与反证法 (Basic Concepts: Measuring Surprise and Proof by Contradiction)

假设检验基于反证法逻辑。我们先假设原假设 \(H_0\) 是对的(比如”银行ROE不高于2.5%“),然后看在这个假设下,我们的数据出现的可能性有多大。

Hypothesis testing is based on the logic of proof by contradiction. We first assume that the null hypothesis \(H_0\) is true (e.g., “the bank’s ROE does not exceed 2.5%”), and then examine how likely our observed data would be under this assumption.

如果数据出现的可能性极小(比如 \(p < 0.05\)),我们就会感到惊讶。这种惊讶迫使我们做出选择: 1. 发生了极小概率事件(运气爆棚)。 2. 原假设根本就是错的,所谓的”惊讶”其实是因为前提错了。

If the probability of the data occurring is extremely small (e.g., \(p < 0.05\)), we feel surprised. This surprise forces us to make a choice: 1. An extremely unlikely event has occurred (extraordinary luck). 2. The null hypothesis is simply wrong—the “surprise” is actually because the premise was incorrect.

科学推断倾向于后者,从而拒绝 \(H_0\)。这与法庭审判的逻辑——“无罪推定”直到”超越合理怀疑”——异曲同工。

Scientific inference favors the latter, leading us to reject \(H_0\). This is analogous to the logic of a court trial—“presumption of innocence” until “beyond reasonable doubt.”

5.3.3.1 假设的结构 (Structure of Hypotheses)

原假设(Null Hypothesis, \(H_0\)): 通常表示”无效应”、“无差异”或”现状”

Null Hypothesis (\(H_0\)): Typically represents “no effect,” “no difference,” or “the status quo”

备择假设(Alternative Hypothesis, \(H_1\) 或 \(H_a\)): 研究者希望证明的效应

Alternative Hypothesis (\(H_1\) or \(H_a\)): The effect the researcher hopes to demonstrate

如何设定假设?

How to formulate hypotheses?

原则: 将”希望证明”的陈述放在 \(H_1\) 中

Principle: Place the statement you “wish to prove” in \(H_1\)

例子1 (新量化策略更优):

- \(H_0\): 新量化策略与旧策略的年化收益率相同

- \(H_1\): 新量化策略比旧策略的年化收益率更高

Example 1 (A new quantitative strategy is superior):

- \(H_0\): The new quantitative strategy has the same annualized return as the old strategy

- \(H_1\): The new quantitative strategy has a higher annualized return than the old strategy

例子2 (检验上市公司财务违规率是否超标):

- \(H_0\): 上市公司财务违规率 ≤ 5%

- \(H_1\): 上市公司财务违规率 > 5%

Example 2 (Testing whether the financial fraud rate of listed companies exceeds the threshold):

- \(H_0\): The financial fraud rate of listed companies ≤ 5%

- \(H_1\): The financial fraud rate of listed companies > 5%

理由: 假设检验的设计使得如果拒绝 \(H_0\),我们有强证据支持 \(H_1\);但如果不能拒绝 \(H_0\),我们只是”没有足够证据”,而不是证明了 \(H_0\) 为真。

Rationale: The design of hypothesis testing ensures that if we reject \(H_0\), we have strong evidence supporting \(H_1\); however, if we fail to reject \(H_0\), we merely “lack sufficient evidence”—we have not proven \(H_0\) to be true.

5.3.3.2 两类错误 (Two Types of Errors)

| \(H_0\) 为真 | \(H_0\) 为假 | |

|---|---|---|

| 拒绝 \(H_0\) | 第一类错误(Type I Error) 假阳性(False Positive) 显著性水平 \(\alpha = P(\text{Type I})\) |

正确决策(True Positive) 功效(Power) = \(1-\beta\) |

| 不能拒绝 \(H_0\) | 正确决策(True Negative) | 第二类错误(Type II Error) 假阴性(False Negative) \(\beta = P(\text{Type II})\) |

| \(H_0\) is true | \(H_0\) is false | |

|---|---|---|

| Reject \(H_0\) | Type I Error False Positive Significance level \(\alpha = P(\text{Type I})\) |

Correct decision (True Positive) Power = \(1-\beta\) |

| Fail to reject \(H_0\) | Correct decision (True Negative) | Type II Error False Negative \(\beta = P(\text{Type II})\) |

经典权衡: 对于固定的样本量,减少 \(\alpha\) 会增加 \(\beta\),反之亦然。通常固定 \(\alpha\)(常用0.05或0.01),然后通过增大样本量来提高功效。

Classic trade-off: For a fixed sample size, decreasing \(\alpha\) increases \(\beta\), and vice versa. Typically, we fix \(\alpha\) (commonly at 0.05 or 0.01) and then increase the sample size to improve statistical power.

5.3.4 p值 (p-value)

定义: 在 \(H_0\) 为真的条件下,观测到当前样本(或更极端情况)的概率。

Definition: The probability of observing the current sample (or a more extreme outcome) given that \(H_0\) is true.

解读:

- p值 < \(\alpha\): 拒绝 \(H_0\) (结果显著)

- p值 ≥ \(\alpha\): 不能拒绝 \(H_0\) (结果不显著)

Interpretation:

- p-value < \(\alpha\): Reject \(H_0\) (result is statistically significant)

- p-value ≥ \(\alpha\): Fail to reject \(H_0\) (result is not statistically significant)

p值的常见误解

Common Misconceptions About p-values

❌ 错误: p值是 \(H_0\) 为真的概率 ✅ 正确: p值是在 \(H_0\) 为真时,得到当前数据的概率

❌ Incorrect: The p-value is the probability that \(H_0\) is true ✅ Correct: The p-value is the probability of obtaining the observed data given that \(H_0\) is true

❌ 错误: 小p值意味着 \(H_1\) 为真的概率大 ✅ 正确: 小p值说明数据与 \(H_0\) 不一致

❌ Incorrect: A small p-value means \(H_1\) is very likely true ✅ Correct: A small p-value indicates the data are inconsistent with \(H_0\)

❌ 错误: p < 0.05 表示发现了重要的、实用的效应 ✅ 正确: p值只衡量统计显著性,不衡量实际重要性。一个极小的p值可能来自一个统计显著但实际微不足道的效应

❌ Incorrect: p < 0.05 means a practically important effect has been discovered ✅ Correct: The p-value only measures statistical significance, not practical importance. An extremely small p-value may arise from an effect that is statistically significant but practically negligible

建议: 始终报告效应大小(如均值差、相关系数)和置信区间,而不仅仅是p值

Recommendation: Always report effect sizes (e.g., mean difference, correlation coefficient) and confidence intervals, not just p-values

5.3.5 单样本均值检验 (z检验与t检验) (One-Sample Mean Test: z-test and t-test)

检验假设: \[ H_0: \mu = \mu_0 \quad \text{vs} \quad H_1: \mu \neq \mu_0 \]

Test hypotheses: \[ H_0: \mu = \mu_0 \quad \text{vs} \quad H_1: \mu \neq \mu_0 \]

检验统计量如 式 5.8 所示:

The test statistic is shown in 式 5.8:

\[ t = \frac{\bar{X} - \mu_0}{S/\sqrt{n}} \sim t_{n-1} \quad (\text{under } H_0) \tag{5.8}\]

拒绝域: \(|t| > t_{\alpha/2, n-1}\) (双侧检验)

Rejection region: \(|t| > t_{\alpha/2, n-1}\) (two-sided test)

5.3.5.1 案例:检验工资水平 (Case Study: Testing Wage Levels)

什么是行业基准的假设检验?

What is hypothesis testing against an industry benchmark?

银行业是典型的高杠杆、强监管行业,其净资产收益率(ROE)长期以来是衡量银行经营效率的关键指标。行业分析师通常会设定一个「行业基准值」(如ROE达到10%),然后通过统计检验来判断某个子行业或区域的银行是否达到了这一基准。

The banking industry is a typical high-leverage, heavily regulated sector, where Return on Equity (ROE) has long served as a key indicator of operational efficiency. Industry analysts typically set an “industry benchmark” (e.g., ROE of 10%) and then use statistical tests to determine whether banks in a particular sub-sector or region meet this benchmark.

单样本t检验正是解决此类问题的标准工具:它将样本均值与一个已知的基准值进行比较,在考虑了样本波动性的情况下,判断总体均值是否与基准值存在统计上的显著差异。下面使用本地财务数据对长三角银行行业的平均ROE进行单样本t检验,结果如 表 5.4 所示。

The one-sample t-test is the standard tool for such problems: it compares the sample mean against a known benchmark value and, after accounting for sampling variability, determines whether the population mean differs significantly from the benchmark. Below, we perform a one-sample t-test on the average ROE of the Yangtze River Delta banking industry using local financial data. The results are shown in 表 5.4.

# ========== 导入所需库 ==========

# ========== Import required libraries ==========

import numpy as np # 数值计算库

# NumPy library for numerical computation

from scipy import stats # 统计检验模块,提供t分布函数

# SciPy stats module providing t-distribution functions

import pandas as pd # 数据分析库,用于读取和处理HDF5数据

# Pandas library for reading and processing HDF5 data

from pathlib import Path # 路径处理模块,跨平台兼容

# Path module for cross-platform file path handling

# ========== 第1步:加载本地数据 ==========

# ========== Step 1: Load local data ==========

import platform # 导入平台检测模块,用于判断操作系统

# Import platform module to detect the operating system

if platform.system() == 'Windows': # Windows系统数据路径

# Windows system data path

data_directory_path = Path('C:/qiufei/data/stock') # Windows本地数据路径

# Local data path for Windows

else: # Linux系统数据路径

# Linux system data path

data_directory_path = Path('/home/ubuntu/r2_data_mount/qiufei/data/stock') # Linux本地数据路径

# Local data path for Linux

basic_info_dataframe = pd.read_hdf(data_directory_path / 'stock_basic_data.h5') # 读取上市公司基本信息

# Read basic information of listed companies

financial_statement_dataframe = pd.read_hdf(data_directory_path / 'financial_statement.h5') # 读取财务报表数据

# Read financial statement data

# ========== 第2步:数据筛选——锁定长三角银行业上市公司 ==========

# ========== Step 2: Data filtering — Identify YRD banking listed companies ==========

yrd_provinces_list = ['上海市', '江苏省', '浙江省', '安徽省'] # 定义长三角四省市

# Define the four YRD provinces/municipalities

target_industry_name = '货币金融服务' # 国统局行业分类中的银行业名称

# Banking industry name in the NBS industry classification

target_quarter_string = '2023q3' # 选取2023年第三季度作为分析时点

# Select Q3 2023 as the analysis period

# 构建布尔筛选条件:省份在长三角范围内

# Build boolean filter: province within the YRD region

region_mask = basic_info_dataframe['province'].isin(yrd_provinces_list) # 构建地区筛选布尔掩码

# Create a boolean mask for region filtering

# 构建布尔筛选条件:行业为银行业

# Build boolean filter: industry is banking

industry_mask = basic_info_dataframe['industry_name'] == target_industry_name # 筛选货币金融服务行业

# Filter for the monetary and financial services industry

# 取交集:同时满足地区和行业条件的公司

# Take the intersection: companies satisfying both region and industry criteria

target_companies_dataframe = basic_info_dataframe[region_mask & industry_mask] # 长三角银行业公司

# YRD banking companies长三角银行业上市公司筛选完成。下面获取指定季度的财务数据,计算ROE并进行异常值处理。

The filtering of YRD banking listed companies is complete. Next, we retrieve the financial data for the specified quarter, calculate ROE, and handle outliers.

# ========== 第3步:获取指定季度的财务数据并计算ROE ==========

# ========== Step 3: Retrieve quarterly financial data and calculate ROE ==========

# 从财务报表中筛选目标季度的净利润和股东权益数据

# Filter the target quarter's net profit and total equity from financial statements

financial_statement_quarter_dataframe = financial_statement_dataframe[ # 从财务报表筛选目标季度数据

# Filter target quarter data from financial statements

financial_statement_dataframe['quarter'] == target_quarter_string # 筛选2023Q3数据

# Filter for Q3 2023 data

][['order_book_id', 'net_profit', 'total_equity']] # 只保留需要的三列

# Keep only the three required columns

# 将公司基本信息与财务数据按股票代码合并

# Merge company basic information with financial data by stock code

merged_analysis_dataframe = pd.merge( # 内连接合并两张表

# Inner join to merge two tables

target_companies_dataframe, # 左表:目标公司列表

# Left table: list of target companies

financial_statement_quarter_dataframe, # 右表:财务数据

# Right table: financial data

on='order_book_id', # 合并键:股票代码

# Merge key: stock code

how='inner' # 内连接:只保留两表都有的记录

# Inner join: keep only records present in both tables

)

# 计算ROE(净资产收益率)= 净利润 / 股东权益

# Calculate ROE (Return on Equity) = Net Profit / Total Equity

merged_analysis_dataframe['roe'] = merged_analysis_dataframe['net_profit'] / merged_analysis_dataframe['total_equity'] # 计算ROE=净利润/股东权益

# Compute ROE = net profit / total equity

# ========== 第4步:异常值处理——去除极端ROE ==========

# ========== Step 4: Outlier handling — Remove extreme ROE values ==========

# 银行ROE通常较稳定,但防止极端分母导致的异常值

# Bank ROE is typically stable, but extreme denominators may cause outliers

clean_analysis_dataframe = merged_analysis_dataframe[ # 筛选ROE合理范围内的样本

# Filter samples within a reasonable ROE range

(merged_analysis_dataframe['roe'] > -0.5) & # ROE > -50%(排除极端亏损)

# ROE > -50% (exclude extreme losses)

(merged_analysis_dataframe['roe'] < 0.5) # ROE < 50%(排除异常高值)

# ROE < 50% (exclude abnormally high values)

]

roe_sample_series = clean_analysis_dataframe['roe'] # 提取清洗后的ROE序列

# Extract the cleaned ROE series

sample_size_n = len(roe_sample_series) # 计算样本量

# Calculate the sample size数据清洗完毕,长三角银行业上市公司的ROE样本已生成。下面设定假设检验参数,执行单样本t检验,并计算总体均值的95%置信区间,以判断该行业平均季度ROE是否显著高于2.5%(对应年化约10%)。

Data cleaning is complete, and the ROE sample for YRD banking listed companies has been generated. Next, we set the hypothesis test parameters, perform the one-sample t-test, and compute the 95% confidence interval for the population mean to determine whether the industry’s average quarterly ROE is significantly above 2.5% (approximately 10% annualized).

# ========== 第5步:设定假设检验参数 ==========

# ========== Step 5: Set hypothesis test parameters ==========

# H0: μ ≤ 0.025 (长三角银行业季度ROE不超过2.5%,对应年化约10%)

# H0: μ ≤ 0.025 (YRD banking quarterly ROE does not exceed 2.5%, ~10% annualized)

# H1: μ > 0.025 (季度ROE显著高于2.5%)

# H1: μ > 0.025 (quarterly ROE is significantly above 2.5%)

# 这是一个右侧单尾检验

# This is a right-tailed one-sided test

null_hypothesis_mean_value = 0.025 # 原假设下的总体均值μ₀ = 2.5%

# Null hypothesis population mean μ₀ = 2.5%

significance_level_alpha = 0.05 # 显著性水平α = 5%

# Significance level α = 5%

# ========== 第6步:计算检验统计量 ==========

# ========== Step 6: Calculate the test statistic ==========

sample_mean_roe = roe_sample_series.mean() # 样本均值 x̄

# Sample mean x̄

sample_standard_deviation = roe_sample_series.std() # 样本标准差 s(默认ddof=1,即Bessel修正)

# Sample standard deviation s (default ddof=1, i.e., Bessel's correction)

# t统计量 = (x̄ - μ₀) / (s / √n),衡量样本均值偏离原假设值的标准误数

# t-statistic = (x̄ - μ₀) / (s / √n), measuring how many standard errors the sample mean deviates from the null value

t_statistic_value = (sample_mean_roe - null_hypothesis_mean_value) / (sample_standard_deviation / np.sqrt(sample_size_n)) # 计算t统计量

# Calculate the t-statistic

# ========== 第7步:计算p值(右侧单尾) ==========

# ========== Step 7: Calculate the p-value (right-tailed) ==========

# p = P(T > t_obs | H0为真),即在原假设下观测到比当前t值更极端结果的概率

# p = P(T > t_obs | H0 is true), the probability of observing a more extreme result under H0

calculated_p_value_right_tail = 1 - stats.t.cdf(t_statistic_value, df=sample_size_n - 1) # 右侧单尾p值

# Right-tailed one-sided p-value

# ========== 第8步:计算95%双侧置信区间(辅助参考) ==========

# ========== Step 8: Calculate the 95% two-sided confidence interval (supplementary reference) ==========

# 虽然本检验为单侧,但双侧CI有助于直观展示参数的合理范围

# Although this is a one-sided test, the two-sided CI helps visualize the plausible range of the parameter

# CI下界 = x̄ - t_{0.975, n-1} × (s / √n)

# CI lower bound = x̄ - t_{0.975, n-1} × (s / √n)

confidence_interval_lower_bound = sample_mean_roe - stats.t.ppf(0.975, sample_size_n - 1) * sample_standard_deviation / np.sqrt(sample_size_n) # CI下界

# CI lower bound

# CI上界 = x̄ + t_{0.975, n-1} × (s / √n)

# CI upper bound = x̄ + t_{0.975, n-1} × (s / √n)

confidence_interval_upper_bound = sample_mean_roe + stats.t.ppf(0.975, sample_size_n - 1) * sample_standard_deviation / np.sqrt(sample_size_n) # CI上界

# CI upper bound检验统计量和置信区间计算完毕。下面输出完整的假设检验报告。

The test statistic and confidence interval calculations are complete. Below, we output the full hypothesis testing report.

# ========== 第9步:输出完整检验报告 ==========

# ========== Step 9: Output the complete test report ==========

print(f'行业: 长三角{target_industry_name}') # 打印行业范围

# Print the industry scope

print(f'假设检验目标:') # 打印假设结构

# Print hypothesis structure

print(f' H0: 平均季度ROE ≤ {null_hypothesis_mean_value:.1%} (年化约10%)') # 原假设

# Null hypothesis

print(f' H1: 平均季度ROE > {null_hypothesis_mean_value:.1%}') # 备择假设

# Alternative hypothesis

print(f' 显著性水平: α = {significance_level_alpha}') # 显著性水平

# Significance level

print(f'\n样本统计量:') # 分隔:样本描述统计

# Section separator: sample descriptive statistics

print(f' 样本量: n = {sample_size_n}') # 有效样本量

# Effective sample size

print(f' 样本均值: x̄ = {sample_mean_roe:.4f} = {sample_mean_roe:.2%}') # 均值(小数+百分比)

# Mean (decimal + percentage)

print(f' 样本标准差: s = {sample_standard_deviation:.4f}') # 标准差

# Standard deviation

print(f'\n检验结果:') # 分隔:核心检验结论

# Section separator: core test conclusions

print(f' 检验统计量: t = {t_statistic_value:.4f}') # t统计量

# t-statistic

print(f' p值 (单侧): {calculated_p_value_right_tail:.6f}') # 右侧p值

# Right-tailed p-value

print(f'\n结论:') # 分隔:最终判断

# Section separator: final conclusion

if calculated_p_value_right_tail < significance_level_alpha: # 若p < α则拒绝H0

# If p < α, reject H0

print(f' 拒绝 H0。有充分证据表明长三角银行业平均季度ROE显著高于{null_hypothesis_mean_value:.1%}。') # 拒绝原假设的结论

# Conclusion of rejecting the null hypothesis

else: # 否则不能拒绝H0

# Otherwise, fail to reject H0

print(f' 不能拒绝 H0。没有足够证据表明长三角银行业平均季度ROE显著高于{null_hypothesis_mean_value:.1%}。') # 不拒绝的结论

# Conclusion of failing to reject H0

print(f'\n95%置信区间 (均值): [{confidence_interval_lower_bound:.2%}, {confidence_interval_upper_bound:.2%}]') # CI范围

# 95% confidence interval range

print(f'注意: 样本量较小时需谨慎解释,但银行业通常样本量有限。') # 提示小样本注意事项

# Note on small sample size considerations行业: 长三角货币金融服务

假设检验目标:

H0: 平均季度ROE ≤ 2.5% (年化约10%)

H1: 平均季度ROE > 2.5%

显著性水平: α = 0.05

样本统计量:

样本量: n = 18

样本均值: x̄ = 0.0854 = 8.54%

样本标准差: s = 0.0186

检验结果:

检验统计量: t = 13.8137

p值 (单侧): 0.000000

结论:

拒绝 H0。有充分证据表明长三角银行业平均季度ROE显著高于2.5%。

95%置信区间 (均值): [7.62%, 9.46%]

注意: 样本量较小时需谨慎解释,但银行业通常样本量有限。表 5.4 展示了长三角银行业平均ROE的单样本t检验结果。样本包含18家银行,样本均值为8.54%,样本标准差为1.86%。在单侧检验(\(H_1: \mu > 2.5\%\))下,t统计量高达13.8137,对应p值为0.000000(远小于0.05),因此在5%显著性水平下拒绝原假设,有充分证据表明长三角银行业平均季度ROE显著高于2.5%的基准值。95%置信区间为[7.62%, 9.46%],该区间完全位于2.5%基准之上,从另一个角度印证了检验结论。值得注意的是,样本量仅为18家(银行业上市公司数量本身有限),因此使用t分布(而非正态分布)进行小样本推断是恰当的选择。8.54%的季度ROE折合年化约34%,显示银行业整体盈利能力较强。

表 5.4 presents the results of the one-sample t-test on the average ROE of the YRD banking industry. The sample contains 18 banks with a sample mean of 8.54% and a sample standard deviation of 1.86%. Under the one-sided test (\(H_1: \mu > 2.5\%\)), the t-statistic reaches 13.8137 with a p-value of 0.000000 (far below 0.05). Therefore, at the 5% significance level, we reject the null hypothesis, with sufficient evidence that the average quarterly ROE of YRD banks is significantly above the 2.5% benchmark. The 95% confidence interval is [7.62%, 9.46%], lying entirely above the 2.5% benchmark, corroborating the test conclusion from another perspective. It is noteworthy that the sample size is only 18 (the number of publicly listed banks is inherently limited), making the use of the t-distribution (rather than the normal distribution) an appropriate choice for small-sample inference. The 8.54% quarterly ROE translates to approximately 34% annualized, indicating strong overall profitability in the banking industry.

5.3.6 两样本均值检验 (Two-Sample Mean Test)

独立样本t检验: 比较两个独立总体的均值。检验统计量如 式 7.2 所示:

Independent samples t-test: Compares the means of two independent populations. The test statistic is shown in 式 7.2:

\[ t = \frac{\bar{X}_1 - \bar{X}_2}{S_p\sqrt{\frac{1}{n_1} + \frac{1}{n_2}}} \sim t_{n_1+n_2-2} \tag{5.9}\]

其中合并标准差的计算公式如 式 5.10 所示:

where the pooled standard deviation is calculated as shown in 式 5.10:

\[ S_p = \sqrt{\frac{(n_1-1)S_1^2 + (n_2-1)S_2^2}{n_1+n_2-2}} \tag{5.10}\]

配对样本t检验: 比较配对数据的均值差异,检验统计量如 式 7.3 所示:

Paired samples t-test: Compares the mean difference of paired data. The test statistic is shown in 式 7.3:

\[ t = \frac{\bar{d}}{S_d/\sqrt{n}} \sim t_{n-1} \tag{5.11}\]

其中 \(d_i = X_{1i} - X_{2i}\) 是配对差值。

where \(d_i = X_{1i} - X_{2i}\) is the paired difference.

5.3.6.1 案例:A/B测试效果评估 (Case Study: A/B Test Evaluation)

什么是区域经济差异的双样本比较?

What is a two-sample comparison of regional economic differences?

在区域经济研究和投资策略中,比较不同地区企业的经营绩效差异是一个常见且重要的分析任务。例如,上海作为长三角的核心城市和中国的金融中心,其上市公司的ROE是否显著高于安徽省?这种区域差异对于跨区域资产配置和产业布局决策至关重要。

In regional economic research and investment strategy, comparing the operational performance of enterprises across different regions is a common and important analytical task. For instance, as the core city of the Yangtze River Delta and China’s financial center, does Shanghai’s listed companies have significantly higher ROE than those in Anhui Province? Such regional differences are crucial for cross-regional asset allocation and industrial layout decisions.

双样本t检验是比较两个独立群体均值差异的经典统计方法。它不仅能告诉我们两组样本均值是否存在差异,更重要的是能在控制抽样误差的前提下,判断这种差异是否具有统计显著性。下面我们通过对比上海与安徽两地上市公司的平均ROE来演示双样本t检验,结果如 表 5.5 所示。

The two-sample t-test is a classic statistical method for comparing the mean difference between two independent groups. It not only tells us whether there is a difference in sample means but, more importantly, determines whether this difference is statistically significant after controlling for sampling error. Below, we demonstrate the two-sample t-test by comparing the average ROE of listed companies in Shanghai and Anhui. The results are shown in 表 5.5.

import numpy as np # 数值计算库

# NumPy library for numerical computation

from scipy import stats # 统计检验模块

# SciPy stats module for statistical testing

import pandas as pd # 数据分析库

# Pandas library for data analysis

from pathlib import Path # 路径处理模块

# Path module for file path handling

# ========== 导入所需库 ==========

# ========== Import required libraries ==========

import numpy as np # 数值计算库

# NumPy library for numerical computation

from scipy import stats # 统计检验模块

# SciPy stats module for statistical testing

import pandas as pd # 数据分析库

# Pandas library for data analysis

from pathlib import Path # 路径处理模块

# Path module for file path handling

# ========== 第1步:加载本地数据 ==========

# ========== Step 1: Load local data ==========

import platform # 导入平台检测模块,用于判断操作系统

# Import platform module to detect the operating system

if platform.system() == 'Windows': # Windows系统数据路径

# Windows system data path

data_directory_path = Path('C:/qiufei/data/stock') # Windows本地数据路径

# Local data path for Windows

else: # Linux系统数据路径

# Linux system data path

data_directory_path = Path('/home/ubuntu/r2_data_mount/qiufei/data/stock') # Linux本地数据路径

# Local data path for Linux

basic_info_dataframe = pd.read_hdf(data_directory_path / 'stock_basic_data.h5') # 读取公司基本信息

# Read company basic information

financial_statement_dataframe = pd.read_hdf(data_directory_path / 'financial_statement.h5') # 读取财务报表

# Read financial statements数据加载完毕。下面按地区筛选上海与安徽的上市公司并提取财务数据。

Data loading is complete. Next, we filter listed companies in Shanghai and Anhui by region and extract the financial data.

# ========== 第2步:按地区分组筛选公司 ==========

# ========== Step 2: Filter companies by region ==========

target_quarter_string = '2023q3' # 目标季度

# Target quarter

# 创建布尔掩码:分别筛选上海和安徽的上市公司

# Create boolean masks: filter listed companies in Shanghai and Anhui respectively

shanghai_companies_mask = basic_info_dataframe['province'] == '上海市' # 上海公司掩码

# Boolean mask for Shanghai companies

anhui_companies_mask = basic_info_dataframe['province'] == '安徽省' # 安徽公司掩码

# Boolean mask for Anhui companies

shanghai_companies_dataframe = basic_info_dataframe[shanghai_companies_mask] # 提取上海公司

# Extract Shanghai companies

anhui_companies_dataframe = basic_info_dataframe[anhui_companies_mask] # 提取安徽公司

# Extract Anhui companies

# ========== 第3步:获取指定季度的财务数据 ==========

# ========== Step 3: Retrieve financial data for the specified quarter ==========

# 从财务报表中筛选2023Q3的净利润和股东权益

# Filter Q3 2023 net profit and total equity from financial statements

financial_statement_quarter_dataframe = financial_statement_dataframe[ # 从财务报表筛选目标季度

# Filter the target quarter from financial statements

financial_statement_dataframe['quarter'] == target_quarter_string # 筛选目标季度

# Filter for the target quarter

][['order_book_id', 'net_profit', 'total_equity']] # 只保留关键列

# Keep only the key columns基础数据加载与地区筛选完成。下面定义ROE计算函数并分别计算上海与安徽两地上市公司的净资产收益率。

Basic data loading and regional filtering are complete. Next, we define the ROE calculation function and compute the return on equity for listed companies in Shanghai and Anhui respectively.

# ========== 第4步:定义ROE清洗函数 ==========

# ========== Step 4: Define the ROE cleaning function ==========

def calculate_cleaned_roe(companies_df, financials_df): # 定义ROE计算与清洗函数

# Define the ROE calculation and cleaning function

"""

计算并清洗公司ROE(净资产收益率)。

步骤:合并数据 → 计算ROE → 去除无穷值/空值 → 分位数去极值(1%-99%)。

参数:

companies_df: 包含公司基本信息的DataFrame

financials_df: 包含财务数据的DataFrame

返回:

清洗后的ROE Series

"""

merged_df = pd.merge(companies_df, financials_df, on='order_book_id', how='inner') # 按股票代码合并

# Merge by stock code

merged_df['roe'] = merged_df['net_profit'] / merged_df['total_equity'] # 计算ROE = 净利润/股东权益

# Calculate ROE = net profit / total equity

# 清洗第一步:将无穷值替换为NaN,然后删除含NaN的行

# Cleaning step 1: Replace infinity values with NaN, then drop rows containing NaN

merged_df = merged_df.replace([np.inf, -np.inf], np.nan).dropna(subset=['roe']) # 将无穷大值替换为NaN并删除空值

# Replace infinity with NaN and drop null values

# 清洗第二步:计算1%和99%分位数阈值,Winsorize去极端值

# Cleaning step 2: Compute 1st and 99th percentile thresholds, Winsorize to remove extreme values

# 这样可以避免少数壳股或ST公司的极端ROE干扰均值对比

# This prevents extreme ROE values from a few shell or ST companies from distorting the mean comparison

quantile_low_threshold = merged_df['roe'].quantile(0.01) # 下限:1%分位数

# Lower bound: 1st percentile

quantile_high_threshold = merged_df['roe'].quantile(0.99) # 上限:99%分位数

# Upper bound: 99th percentile

return merged_df[ # 返回去极值后的ROE序列

# Return the ROE series after removing extreme values

(merged_df['roe'] >= quantile_low_threshold) & # 大于等于下限

# Greater than or equal to the lower bound

(merged_df['roe'] <= quantile_high_threshold) # 小于等于上限

# Less than or equal to the upper bound

]['roe'] # 返回清洗后的ROE序列

# Return the cleaned ROE series

# ========== 第5步:分别计算两地的清洗后ROE ==========

# ========== Step 5: Calculate cleaned ROE for both regions ==========

shanghai_roe_series = calculate_cleaned_roe(shanghai_companies_dataframe, financial_statement_quarter_dataframe) # 上海ROE

# Shanghai ROE

anhui_roe_series = calculate_cleaned_roe(anhui_companies_dataframe, financial_statement_quarter_dataframe) # 安徽ROE

# Anhui ROE两地区上市公司的清洗后ROE数据已准备就绪。下面计算描述性统计量,执行Welch’s t检验,并计算均值差的95%置信区间,以判断上海与安徽上市公司的平均盈利能力是否存在显著差异。

The cleaned ROE data for listed companies in both regions is ready. Next, we calculate descriptive statistics, perform Welch’s t-test, and compute the 95% confidence interval for the mean difference to determine whether there is a significant difference in average profitability between Shanghai and Anhui listed companies.

# ========== 第6步:计算描述性统计量 ==========