# ========== 导入所需库 ==========

# ========== Import required libraries ==========

import pandas as pd # 数据分析库

# Import the pandas library for data analysis

import numpy as np # 数值计算库

# Import the numpy library for numerical computation

from scipy.stats import chisquare # 卡方拟合优度检验函数

# Import the chi-square goodness-of-fit test function

import platform # 操作系统检测

# Import the platform module for OS detection

from pathlib import Path # 跨平台路径处理

# Import Path for cross-platform path handling

# ========== 第1步:设置本地数据路径 ==========

# ========== Step 1: Set local data path ==========

if platform.system() == 'Windows': # Windows系统路径

# Windows system path

data_directory_path = Path('C:/qiufei/data/stock') # 设置Windows本地股票数据目录

# Set the Windows local stock data directory

else: # Linux系统路径

# Linux system path

data_directory_path = Path('/home/ubuntu/r2_data_mount/qiufei/data/stock') # 设置Linux本地股票数据目录

# Set the Linux local stock data directory

# ========== 第2步:读取上市公司基本信息 ==========

# ========== Step 2: Read basic information of listed companies ==========

stock_basic_info_file_path = data_directory_path / 'stock_basic_data.h5' # 构造文件路径

# Construct the file path

stock_basic_info_dataframe = pd.read_hdf(stock_basic_info_file_path) # 加载HDF5数据

# Load the HDF5 data

# ========== 第3步:筛选长三角地区企业 ==========

# ========== Step 3: Filter companies in the Yangtze River Delta ==========

yangtze_river_delta_provinces_list = ['上海市', '浙江省', '江苏省'] # 长三角三省市

# List of three YRD provinces/municipalities

yangtze_river_delta_companies_dataframe = stock_basic_info_dataframe[ # 按省份筛选

# Filter by province

stock_basic_info_dataframe['province'].isin(yangtze_river_delta_provinces_list) # 保留省份在长三角列表中的行

# Keep rows where the province is in the YRD list

]6 拟合优度检验与列联表分析 (Goodness-of-Fit Tests and Contingency Table Analysis)

本章介绍卡方检验(Chi-Square Test)及其在分类数据分析中的应用。卡方检验是检验分类变量关联性和分布拟合优度的重要工具,广泛应用于市场研究、生物统计、质量控制等领域。

This chapter introduces the Chi-Square Test and its applications in the analysis of categorical data. The chi-square test is an important tool for examining the association between categorical variables and the goodness of fit of distributions, and is widely used in market research, biostatistics, quality control, and other fields.

6.1 拟合优度与独立性检验在金融分析中的典型应用 (Typical Applications of Goodness-of-Fit and Independence Tests in Financial Analysis)

卡方检验在金融和经济研究中用于分析分类变量之间的关联性,以及检验实际数据是否符合理论分布。以下展示其在中国资本市场中的核心应用。

The chi-square test is used in financial and economic research to analyze the association between categorical variables and to test whether observed data conform to a theoretical distribution. The following demonstrates its core applications in China’s capital markets.

6.1.1 应用一:行业分类与财务绩效的独立性检验 (Application 1: Independence Test Between Industry Classification and Financial Performance)

投资研究中一个基本问题是:行业分类是否与公司财务绩效存在显著关联?利用 stock_basic_data.h5 中的行业分类和 financial_statement.h5 中的盈利数据,可以将ROE按分位数划分为”优”、“中”、“差”三类,构建行业×绩效等级的列联表,然后使用卡方独立性检验判断行业归属与盈利水平之间是否存在统计上的显著关联。如果拒绝独立性假设,则意味着行业选择本身就是投资收益的重要决定因素。

A fundamental question in investment research is: Is there a significant association between industry classification and corporate financial performance? Using the industry classifications from stock_basic_data.h5 and profitability data from financial_statement.h5, one can classify ROE into three tiers—“high,” “medium,” and “low”—by quantiles, construct an industry × performance tier contingency table, and then apply a chi-square independence test to determine whether there is a statistically significant association between industry affiliation and profitability level. If the independence hypothesis is rejected, it implies that industry selection itself is an important determinant of investment returns.

6.1.2 应用二:收益率分布的拟合优度检验 (Application 2: Goodness-of-Fit Test for Return Distributions)

检验A股收益率是否服从正态分布是金融风险管理中的基础问题。通过对 stock_price_pre_adjusted.h5 中的日收益率数据进行分组,然后使用卡方拟合优度检验比较实际频率与理论正态分布的预期频率,可以严格量化收益率偏离正态分布的程度。这一检验的结果直接影响VaR模型和期权定价模型的选择(参见 章节 4 中对厚尾分布的讨论)。

Testing whether A-share stock returns follow a normal distribution is a fundamental issue in financial risk management. By grouping daily return data from stock_price_pre_adjusted.h5 and then using the chi-square goodness-of-fit test to compare observed frequencies with the expected frequencies under a theoretical normal distribution, one can rigorously quantify the degree to which returns deviate from normality. The results of this test directly influence the choice of VaR models and option pricing models (see the discussion of heavy-tailed distributions in 章节 4).

6.1.3 应用三:市场微观结构中的交易行为分析 (Application 3: Trading Behavior Analysis in Market Microstructure)

在市场微观结构研究中,卡方检验可用于分析交易行为是否存在显著的时间模式(如”周末效应”、“月初效应”)。通过构建”交易日类型×涨跌方向”的列联表,检验涨跌概率是否在不同时段间保持独立,可以为量化交易策略提供统计依据。

In market microstructure research, the chi-square test can be used to analyze whether trading behavior exhibits significant temporal patterns (such as the “weekend effect” or “turn-of-the-month effect”). By constructing a contingency table of “trading day type × price movement direction” and testing whether the probability of price increases or decreases remains independent across different time periods, one can provide a statistical basis for quantitative trading strategies.

6.2 卡方分布 (The Chi-Square Distribution)

6.2.1 定义与性质 (Definition and Properties)

卡方分布是连续概率分布,是独立标准正态随机变量平方和的分布。

The chi-square distribution is a continuous probability distribution that represents the distribution of the sum of squares of independent standard normal random variables.

定义: 若 \(Z_1, Z_2, ..., Z_k\) 是相互独立的标准正态随机变量,则:

Definition: If \(Z_1, Z_2, ..., Z_k\) are mutually independent standard normal random variables, then:

\[ X = Z_1^2 + Z_2^2 + \cdots + Z_k^2 \]

服从自由度为 \(k\) 的卡方分布,记为 \(X \sim \chi^2(k)\)。

follows a chi-square distribution with \(k\) degrees of freedom, denoted as \(X \sim \chi^2(k)\).

重要性质:

Important Properties:

可加性: 若 \(X_1 \sim \chi^2(k_1)\), \(X_2 \sim \chi^2(k_2)\) 且相互独立,则 \(X_1 + X_2 \sim \chi^2(k_1 + k_2)\)

Additivity: If \(X_1 \sim \chi^2(k_1)\), \(X_2 \sim \chi^2(k_2)\) and they are mutually independent, then \(X_1 + X_2 \sim \chi^2(k_1 + k_2)\)

均值与方差:

- 均值: \(E[X] = k\)

- 方差: \(\text{Var}(X) = 2k\)

Mean and Variance:

- Mean: \(E[X] = k\)

- Variance: \(\text{Var}(X) = 2k\)

与正态分布的关系: 当 \(k\) 很大时(\(k > 30\)), \(\sqrt{2\chi^2(k)}\) 近似服从 \(N(\sqrt{2k-1}, 1)\)

Relationship with the Normal Distribution: When \(k\) is large (\(k > 30\)), \(\sqrt{2\chi^2(k)}\) approximately follows \(N(\sqrt{2k-1}, 1)\)

形状: 卡方分布右偏,自由度越小越偏;随着自由度增加,逐渐接近正态分布

Shape: The chi-square distribution is right-skewed; the smaller the degrees of freedom, the greater the skewness. As the degrees of freedom increase, it gradually approaches the normal distribution.

6.2.2 自由度的含义 (The Meaning of Degrees of Freedom)

自由度(Degrees of Freedom, df)是统计学中一个极其重要但也常常令初学者困惑的概念。简单来说,自由度是指在计算某个统计量时,可以自由取值的独立信息的个数。

Degrees of Freedom (df) is an extremely important concept in statistics that often confuses beginners. Simply put, degrees of freedom refers to the number of independent pieces of information that are free to vary when computing a statistic.

直观理解:约束与自由

Intuitive Understanding: Constraints and Freedom

想象你有5个数,我告诉你它们的平均值是10(即总和为50)。那么:

Imagine you have 5 numbers, and I tell you their mean is 10 (i.e., the sum is 50). Then:

第1个数你可以任意选(比如选8)

第2个数你也可以任意选(比如选12)

第3个数你也可以任意选(比如选9)

第4个数你也可以任意选(比如选11)

但第5个数你没有选择余地——它只能是 \(50 - 8 - 12 - 9 - 11 = 10\)

The 1st number can be freely chosen (say, 8)

The 2nd number can also be freely chosen (say, 12)

The 3rd number can also be freely chosen (say, 9)

The 4th number can also be freely chosen (say, 11)

But the 5th number is fully determined—it can only be \(50 - 8 - 12 - 9 - 11 = 10\)

换句话说,在”总和=50”这一约束条件下,5个数中只有4个能自由变化,最后一个被自动确定了。因此自由度为 \(df = 5 - 1 = 4\)。

In other words, under the constraint “sum = 50,” only 4 of the 5 numbers can vary freely; the last one is automatically determined. Therefore, the degrees of freedom is \(df = 5 - 1 = 4\).

一般原则:如果有 \(n\) 个独立的观测值,每施加一个约束(如估计一个参数),就会”消耗”一个自由度。最终的自由度 = 观测值个数 – 施加的约束个数。

General Principle: If there are \(n\) independent observations, each constraint imposed (such as estimating a parameter) “consumes” one degree of freedom. The final degrees of freedom = number of observations – number of constraints imposed.

为什么自由度在统计推断中至关重要?

Why Are Degrees of Freedom Critical in Statistical Inference?

自由度直接决定了检验统计量的抽样分布。以卡方检验为例,同样的 \(\chi^2 = 10\),在 \(df = 2\) 时对应极小的 \(p\) 值(强烈拒绝原假设),而在 \(df = 10\) 时对应较大的 \(p\) 值(不拒绝原假设)。如果自由度计算错误,整个统计推断的结论都会出错。

Degrees of freedom directly determine the sampling distribution of the test statistic. Taking the chi-square test as an example, the same \(\chi^2 = 10\) corresponds to a very small \(p\)-value at \(df = 2\) (strongly rejecting the null hypothesis), but a much larger \(p\)-value at \(df = 10\) (failing to reject the null hypothesis). If the degrees of freedom are calculated incorrectly, the conclusions of the entire statistical inference will be wrong.

在卡方检验中的自由度

Degrees of Freedom in Chi-Square Tests

在卡方检验中,自由度的计算规则如下:

The rules for calculating degrees of freedom in chi-square tests are as follows:

拟合优度检验: \(df = k - 1 - m\),其中 \(k\) 是类别数,\(m\) 是从数据中估计的参数个数

独立性检验: \(df = (r-1)(c-1)\),其中 \(r\) 和 \(c\) 分别是行数和列数

Goodness-of-fit test: \(df = k - 1 - m\), where \(k\) is the number of categories and \(m\) is the number of parameters estimated from the data

Independence test: \(df = (r-1)(c-1)\), where \(r\) and \(c\) are the number of rows and columns, respectively

为什么拟合优度检验中 \(df = k - 1 - m\)?

Why Is \(df = k - 1 - m\) in the Goodness-of-Fit Test?

首先,\(k\) 个类别的频数之和等于总样本量 \(n\),这是一个固有约束,因此从 \(k\) 个频数中必须减去1,得到 \(k-1\) 个自由变化的频数。

其次,如果我们还从数据中估计了分布的参数(比如正态分布的均值 \(\mu\) 和方差 \(\sigma^2\)),每估计一个参数就相当于施加了额外的约束,每个参数再减去1个自由度。所以最终 \(df = k - 1 - m\)。

First, the sum of frequencies across \(k\) categories equals the total sample size \(n\), which is an inherent constraint. Therefore, 1 must be subtracted from \(k\) frequencies, yielding \(k-1\) freely varying frequencies.

Second, if we additionally estimate distribution parameters from the data (such as the mean \(\mu\) and variance \(\sigma^2\) of a normal distribution), each estimated parameter imposes an additional constraint, reducing the degrees of freedom by 1 for each parameter. Thus, the final result is \(df = k - 1 - m\).

为什么独立性检验中 \(df = (r-1)(c-1)\)?

Why Is \(df = (r-1)(c-1)\) in the Independence Test?

列联表有 \(r \times c\) 个单元格,但行合计(\(r\) 个约束,因给定列总数后只有 \(r-1\) 个独立)和列合计(\(c-1\) 个独立约束)加上总样本量的约束,使得最终可自由变化的单元格数为 \((r-1)(c-1)\)。

A contingency table has \(r \times c\) cells, but the row totals (\(r\) constraints, of which only \(r-1\) are independent given the column totals) and column totals (\(c-1\) independent constraints), together with the constraint on the total sample size, result in \((r-1)(c-1)\) freely varying cells.

6.3 卡方拟合优度检验 (Chi-Square Goodness-of-Fit Test)

6.3.1 检验原理 (Test Principle)

拟合优度检验用于检验观测频数是否符合理论分布。

The goodness-of-fit test is used to test whether observed frequencies conform to a theoretical distribution.

假设设置:

Hypothesis Setup:

原假设 \(H_0\): 观测数据服从理论分布

备择假设 \(H_1\): 观测数据不服从理论分布

Null hypothesis \(H_0\): The observed data follow the theoretical distribution

Alternative hypothesis \(H_1\): The observed data do not follow the theoretical distribution

卡方统计量:

Chi-Square Statistic:

如 式 6.1 所示,卡方统计量的计算公式为:

As shown in 式 6.1, the formula for the chi-square statistic is:

\[ \chi^2 = \sum_{i=1}^{k} \frac{(O_i - E_i)^2}{E_i} \tag{6.1}\]

其中:

Where:

\(O_i\): 第 \(i\) 类别的观测频数

\(E_i\): 第 \(i\) 类别的理论频数

\(O_i\): Observed frequency in the \(i\)-th category

\(E_i\): Expected (theoretical) frequency in the \(i\)-th category

6.3.2 卡方统计量的数学本质 (The Mathematical Essence of the Chi-Square Statistic)

为什么 \(\sum \frac{(O-E)^2}{E}\) 会服从卡方分布? 这源于正态近似。

Why does \(\sum \frac{(O-E)^2}{E}\) follow a chi-square distribution? This stems from the normal approximation.

考虑第 \(i\) 个类别的观测频数 \(O_i\)。根据二项分布,它的期望是 \(E_i = np_i\),方差是 \(Var(O_i) = np_i(1-p_i) \approx np_i = E_i\)(当 \(p_i\) 很小时,泊松近似)。

Consider the observed frequency \(O_i\) for the \(i\)-th category. According to the binomial distribution, its expectation is \(E_i = np_i\), and its variance is \(Var(O_i) = np_i(1-p_i) \approx np_i = E_i\) (under the Poisson approximation when \(p_i\) is small).

我们将 \(O_i\) 标准化:

We standardize \(O_i\):

\[ Z_i = \frac{O_i - E_i}{\sqrt{Var(O_i)}} \approx \frac{O_i - E_i}{\sqrt{E_i}} \]

将它们平方并求和:

Squaring and summing them:

\[ \chi^2 = \sum Z_i^2 = \sum \frac{(O_i - E_i)^2}{E_i} \]

这也是为什么卡方检验要求样本量大(保证正态近似有效)且理论频数 \(E_i\) 不能太小(作为分母,太小会导致统计量不稳定)。

This is also why the chi-square test requires a large sample size (to ensure the validity of the normal approximation) and that the expected frequencies \(E_i\) not be too small (as the denominator, very small values would make the statistic unstable).

直观理解:

Intuitive Understanding:

\(\chi^2\) 实际上是标准化残差的平方和。

它衡量了观测数据与理论模型之间的”欧几里得距离”。

\(\chi^2\) is essentially the sum of squared standardized residuals.

It measures the “Euclidean distance” between the observed data and the theoretical model.

6.3.3 适用条件 (Conditions for Applicability)

卡方检验的有效性依赖于以下条件:

The validity of the chi-square test depends on the following conditions:

样本量足够大: 每个类别的理论频数 \(E_i \geq 5\)

- 如果某些类别频数过小,可合并相邻类别

- 或使用精确检验(Fisher’s Exact Test)

Sufficiently large sample size: The expected frequency for each category \(E_i \geq 5\)

- If some categories have frequencies that are too small, adjacent categories can be merged

- Or an exact test (Fisher’s Exact Test) can be used

数据独立: 每个观测相互独立

- 不能有重复测量或配对数据

Independence of data: Each observation is mutually independent

- No repeated measures or paired data are allowed

互斥且穷尽: 每个观测只能落入一个类别,且所有类别覆盖全部可能

Mutually exclusive and exhaustive: Each observation can fall into only one category, and all categories cover every possibility

6.3.4 检验步骤 (Testing Procedure)

- 建立假设: 明确原假设和备择假设

- 计算理论频数: 根据 \(H_0\) 计算每个类别的期望频数

- 计算卡方统计量: 使用公式计算 \(\chi^2\)

- 确定自由度: \(df = k - 1 - m\)

- 查找临界值或计算p值: 与 \(\chi^2\) 分布比较

- 做出决策:

- 若 \(p < \alpha\),拒绝 \(H_0\)

- 若 \(p \geq \alpha\),不能拒绝 \(H_0\)

- Formulate hypotheses: Clearly state the null and alternative hypotheses

- Compute expected frequencies: Calculate expected frequencies for each category under \(H_0\)

- Compute the chi-square statistic: Use the formula to calculate \(\chi^2\)

- Determine degrees of freedom: \(df = k - 1 - m\)

- Find the critical value or compute the p-value: Compare with the \(\chi^2\) distribution

- Make a decision:

- If \(p < \alpha\), reject \(H_0\)

- If \(p \geq \alpha\), fail to reject \(H_0\)

6.3.5 优缺点 (Advantages and Disadvantages)

优点:

Advantages:

非参数方法:不假设数据服从特定连续分布,适用范围广

计算简单:公式直观,只需观测频数和理论频数

检验有力:对分布偏离的检测功效较高

Nonparametric method: Does not assume the data follow a specific continuous distribution; broadly applicable

Computationally simple: The formula is intuitive, requiring only observed and expected frequencies

Powerful test: Has relatively high power for detecting distributional deviations

缺点:

Disadvantages:

对小样本敏感:要求 \(E_i \geq 5\),否则近似不可靠

信息损失:将连续变量分箱会丢失精确信息

方向性不明:只能告诉你”不符合”,不能告诉你”如何不符合”(需要看标准化残差)

对分箱方案敏感:不同的分箱方案可能导致不同结论

Sensitive to small samples: Requires \(E_i \geq 5\); otherwise the approximation is unreliable

Information loss: Binning continuous variables results in loss of precise information

Lack of directionality: Can only tell you “does not fit,” not “how it does not fit” (standardized residuals must be examined)

Sensitive to binning scheme: Different binning schemes may lead to different conclusions

6.3.6 “脏活累活” (Dirty Work):分箱的艺术 (The Art of Binning)

卡方检验最头疼的问题往往不是算 \(p\) 值,而是如何分箱 (Binning)。

The most troublesome issue with the chi-square test is often not computing the \(p\)-value, but rather how to bin the data (Binning).

对于连续变量(如年龄、收入),我们需要先将其离散化。

For continuous variables (such as age or income), we need to discretize them first.

分箱太细:会导致很多格子 \(E_i < 5\),统计检验失效。

分箱太粗:会掩盖数据内部的分布特征(例如把 10-20岁 和 20-60岁 合并,就看不出青年人的特征)。

Too fine: Leads to many cells with \(E_i < 5\), causing the statistical test to break down.

Too coarse: Masks the internal distribution characteristics of the data (e.g., merging 10–20 years old and 20–60 years old makes it impossible to see the characteristics of young people).

黄金法则:

Golden Rules:

理论优先:根据业务逻辑分箱(如:未成年/青年/中年/老年)。

等频分箱:确保每个箱子的样本量大致相等,最大化统计功效。

合并稀疏项:一旦发现某个格子 \(E_i < 5\),哪怕违背业务逻辑,也要将其与相邻格子合并。因为统计有效性是底线。

Theory first: Bin according to business logic (e.g., minor / young adult / middle-aged / elderly).

Equal-frequency binning: Ensure each bin contains roughly the same number of observations to maximize statistical power.

Merge sparse cells: Once a cell with \(E_i < 5\) is found, it must be merged with an adjacent cell, even if it violates business logic, because statistical validity is the baseline requirement.

6.3.7 案例:长三角地区行业分布检验 (Case Study: Industry Distribution Test in the Yangtze River Delta)

什么是行业分布的均匀性检验?

What Is a Uniformity Test for Industry Distribution?

区域经济研究和产业规划中,一个关键问题是:某个地区的产业结构是否均衡,还是存在明显的行业集中化现象?例如,长三角地区是中国经济最活跃的区域之一,其上市公司的行业分布是否均匀,直接反映了该区域的产业多元化程度和经济结构特征。

In regional economic research and industrial planning, a key question is: Is the industrial structure of a given region balanced, or does it exhibit significant industry concentration? For example, the Yangtze River Delta (YRD) is one of the most economically active regions in China, and whether its listed companies are evenly distributed across industries directly reflects the region’s degree of industrial diversification and economic structural characteristics.

卡方拟合优度检验是解决此类问题的经典统计工具:它将观察到的各行业公司数量与「均匀分布」假设下的期望数量进行比较,如果实际分布与均匀分布差异显著,则说明存在行业集中化。下面使用本地数据集检验长三角地区不同行业的公司分布是否均匀,结果如 表 6.1 所示。

The chi-square goodness-of-fit test is a classic statistical tool for addressing such questions: it compares the observed number of companies in each industry with the expected numbers under a “uniform distribution” hypothesis. If the actual distribution differs significantly from a uniform distribution, it indicates the presence of industry concentration. Below, we use a local dataset to test whether the distribution of companies across industries in the YRD is uniform, with results shown in 表 6.1.

长三角地区上市公司筛选完毕。下面统计行业分布并输出前5大行业的频数。

The filtering of listed companies in the YRD is complete. Next, we compute the industry distribution and output the frequencies of the top 5 industries.

# ========== 第4步:统计行业分布并取前5大行业 ==========

# ========== Step 4: Compute industry distribution and select the top 5 industries ==========

industry_frequency_counts_series = yangtze_river_delta_companies_dataframe[ # 计算各行业频数

# Compute the frequency for each industry

'industry_name' # 选取行业名称列

# Select the industry name column

].value_counts() # 统计各行业出现频次并降序排列

# Count the frequency of each industry and sort in descending order

top_five_industries_series = industry_frequency_counts_series.head(5) # 选取前5大行业

# Select the top 5 industries

print('=' * 60) # 打印分隔线

# Print a separator line

print('长三角地区上市公司行业分布') # 输出标题

# Print the title

print('=' * 60) # 打印分隔线

# Print a separator line

print('\n观测频数:') # 提示下方为频数数据

# Indicate that observed frequencies follow

print(top_five_industries_series) # 输出前5行业的频数统计

# Print the frequency statistics for the top 5 industries============================================================

长三角地区上市公司行业分布

============================================================

观测频数:

industry_name

计算机、通信和其他电子设备制造业 180

电气机械和器材制造业 150

专用设备制造业 146

化学原料和化学制品制造业 131

通用设备制造业 118

Name: count, dtype: int64长三角地区前5大行业的观测频数统计结果显示:计算机、通信和其他电子设备制造业以180家公司位居榜首,其后依次为电气机械和器材制造业(150家)、专用设备制造业(146家)、化学原料和化学制品制造业(131家)和通用设备制造业(118家),5个行业合计725家。从直觉上看,这5个行业的公司数量并非完全均等,计算机通信行业明显偏多,而通用设备行业偏少。下面构建均匀分布假设下的期望频数并执行卡方拟合优度检验,用统计方法严格判断这种差异是否达到了显著水平。

The observed frequency statistics for the top 5 industries in the YRD show that Computer, Communication and Other Electronic Equipment Manufacturing ranks first with 180 companies, followed by Electrical Machinery and Equipment Manufacturing (150), Special-Purpose Equipment Manufacturing (146), Chemical Raw Materials and Chemical Products Manufacturing (131), and General-Purpose Equipment Manufacturing (118), totaling 725 companies across the 5 industries. Intuitively, the number of companies across these 5 industries is not perfectly equal—the computer and communications industry is notably overrepresented, while general-purpose equipment is underrepresented. Next, we construct the expected frequencies under the uniform distribution hypothesis and perform a chi-square goodness-of-fit test to rigorously determine whether this difference reaches statistical significance.

# ========== 第5步:构建均匀分布假设下的期望频数 ==========

# ========== Step 5: Construct expected frequencies under the uniform distribution hypothesis ==========

total_companies_in_top_sectors_count = top_five_industries_series.sum() # 前5行业公司总数

# Total number of companies in the top 5 industries

uniform_expected_frequency_value = ( # 均匀分布下每行业期望数

# Expected number per industry under uniform distribution

total_companies_in_top_sectors_count / len(top_five_industries_series) # 总数除以行业数得到均值

# Divide total by the number of industries to get the mean

)

expected_frequencies_list = [uniform_expected_frequency_value] * len( # 构造期望频数列表

# Construct the list of expected frequencies

top_five_industries_series # 列表长度与行业数一致

# List length matches the number of industries

)

# ========== 第6步:执行卡方拟合优度检验 ==========

# ========== Step 6: Perform the chi-square goodness-of-fit test ==========

chi_square_statistic_value, calculated_p_value = chisquare( # scipy卡方检验

# Perform the scipy chi-square test

top_five_industries_series.values, f_exp=expected_frequencies_list # 传入观测频数和期望频数

# Pass in observed frequencies and expected frequencies

)

degrees_of_freedom_value = len(top_five_industries_series) - 1 # 自由度 = 类别数 - 1

# Degrees of freedom = number of categories - 1

print(f'\n原假设 H0: 各行业公司数量相等') # 输出原假设

# Print the null hypothesis

print(f'备择假设 H1: 各行业公司数量不相等') # 输出备择假设

# Print the alternative hypothesis

print(f'\n卡方统计量: {chi_square_statistic_value:.4f}') # 输出χ²统计量

# Print the chi-square statistic

print(f'自由度: {degrees_of_freedom_value}') # 输出自由度

# Print the degrees of freedom

print(f'p值: {calculated_p_value:.8f}') # 输出p值

# Print the p-value

原假设 H0: 各行业公司数量相等

备择假设 H1: 各行业公司数量不相等

卡方统计量: 15.0069

自由度: 4

p值: 0.00468693卡方检验结果为:\(\chi^2 = 15.0069\),自由度 \(df = 4\),\(p = 0.00469\)。在均匀分布的原假设下,每个行业的期望频数为 \(725/5 = 145\) 家。\(p\) 值远小于显著性水平0.05,说明我们有充分的统计学证据拒绝”各行业公司数量相等”的原假设——长三角地区前5大行业的公司数量分布确实不均匀。下面进行显著性判断并计算标准化残差,以识别偏离均匀分布最大的行业。

The chi-square test results are: \(\chi^2 = 15.0069\), degrees of freedom \(df = 4\), and \(p = 0.00469\). Under the null hypothesis of a uniform distribution, the expected frequency for each industry is \(725/5 = 145\) companies. The \(p\)-value is far below the significance level of 0.05, indicating that we have sufficient statistical evidence to reject the null hypothesis that “all industries have equal numbers of companies”—the distribution of companies across the top 5 industries in the YRD is indeed non-uniform. Next, we assess statistical significance and compute standardized residuals to identify the industries that deviate most from the uniform distribution.

# ========== 第7步:统计显著性判断 ==========

# ========== Step 7: Statistical significance assessment ==========

significance_level_alpha = 0.05 # 显著性水平 α=0.05

# Significance level α = 0.05

print(f'\n结论 (α={significance_level_alpha}):') # 输出显著性判断标题

# Print the significance assessment heading

if calculated_p_value < significance_level_alpha: # p < α 则拒绝H0

# If p < α, reject H0

print(f' 拒绝H0 (p={calculated_p_value:.8f} < {significance_level_alpha})') # 输出拒绝原假设结论

# Print the conclusion of rejecting the null hypothesis

print(f' 各行业公司数量存在显著差异,分布不均匀') # 说明行业分布不均匀

# State that significant differences exist among industries; the distribution is non-uniform

else: # p ≥ α 则不拒绝H0

# If p ≥ α, fail to reject H0

print(f' 不能拒绝H0 (p={calculated_p_value:.8f} >= {significance_level_alpha})') # 输出不拒绝结论

# Print the conclusion of failing to reject the null hypothesis

print(f' 没有证据表明各行业公司数量不均衡') # 说明无显著差异

# State that there is no evidence of imbalance among industries

# ========== 第8步:计算标准化残差(识别偏离最大的类别) ==========

# ========== Step 8: Compute standardized residuals (identify the most deviant categories) ==========

observed_frequencies_array = top_five_industries_series.values # 观测频数数组

# Array of observed frequencies

standardized_residuals_array = ( # 标准化残差=(O-E)/√E

# Standardized residuals = (O - E) / √E

(observed_frequencies_array - uniform_expected_frequency_value) # 计算观测与期望的偏差

# Compute the deviation between observed and expected

/ np.sqrt(uniform_expected_frequency_value) # 除以期望频数的平方根进行标准化

# Divide by the square root of the expected frequency for standardization

)

print(f'\n标准化残差:') # 输出残差表头

# Print the residuals heading

for industry_name, residual_value in zip( # 逐行业输出残差

# Output residuals for each industry

top_five_industries_series.index, standardized_residuals_array # 配对行业名与残差值

# Pair industry names with residual values

):

print(f' {industry_name}: {residual_value:.3f}') # 逐行输出行业名及其残差

# Print each industry name and its residual

结论 (α=0.05):

拒绝H0 (p=0.00468693 < 0.05)

各行业公司数量存在显著差异,分布不均匀

标准化残差:

计算机、通信和其他电子设备制造业: 2.907

电气机械和器材制造业: 0.415

专用设备制造业: 0.083

化学原料和化学制品制造业: -1.163

通用设备制造业: -2.242表 6.1 的结果证实了我们的判断:在 \(\alpha = 0.05\) 水平下拒绝原假设(\(p = 0.00469\)),各行业公司数量存在显著差异。标准化残差揭示了偏离的具体方向——计算机、通信行业的残差为 +2.907(绝对值超过2,属于显著偏多),这反映了长三角地区作为中国电子信息产业集聚高地的实际情况;通用设备制造业的残差为 -2.242(显著偏少),说明该行业在长三角的集聚程度相对较低。电气机械(+0.415)、专用设备(+0.083)和化学原料(-1.163)的残差绝对值均未超过2,偏离不显著。这一分析为理解区域产业结构特征提供了定量依据。

The results in 表 6.1 confirm our assessment: at the \(\alpha = 0.05\) level, the null hypothesis is rejected (\(p = 0.00469\)), indicating significant differences in the number of companies across industries. The standardized residuals reveal the specific direction of deviation—the Computer and Communications industry has a residual of +2.907 (absolute value exceeding 2, indicating significantly more companies than expected), reflecting the YRD’s status as a major hub for China’s electronic information industry. The General-Purpose Equipment Manufacturing industry has a residual of -2.242 (significantly fewer than expected), indicating a relatively lower degree of concentration for this industry in the YRD. The residuals for Electrical Machinery (+0.415), Special-Purpose Equipment (+0.083), and Chemical Raw Materials (-1.163) all have absolute values below 2, indicating non-significant deviations. This analysis provides a quantitative basis for understanding regional industrial structure characteristics. ## 从理论到实践:苦活累活 (The “Dirty Work”) {#sec-dirty-work-ch6}

6.4 From Theory to Practice: The “Dirty Work”

卡方检验看似简单,但在实际操作中,它比你想象的要”脏”得多。

The chi-square test may seem simple, but in practice it is much “dirtier” than you might expect.

6.4.1 1. 分箱黑客 (Binning Hacking)

当处理连续变量(如年龄、收入)时,我们需要先将其离散化(分箱)。 - 问题:P值高度依赖于你如何分箱。 - 黑客手段:如果你想要显著结果,就把箱子分得细一点;如果你想要不显著,就把箱子合并一下。通过调整分箱边界(如 10-20岁 改为 10-18岁),你可以轻松”操纵”P值。 - 防御:这就叫 Multiverse Analysis(多重宇宙分析)。诚实的研究者应该报告多种分箱方案下的结果,看结论是否稳健。

When dealing with continuous variables (such as age or income), we first need to discretize (bin) them. - Problem: The P-value is highly dependent on how you bin the data. - Hacking technique: If you want a significant result, make the bins finer; if you want non-significance, merge the bins. By adjusting bin boundaries (e.g., changing 10–20 years old to 10–18 years old), you can easily “manipulate” the P-value. - Defense: This is called Multiverse Analysis. Honest researchers should report results under multiple binning schemes and check whether the conclusions are robust.

6.4.2 2. 样本量的诅咒 (The Curse of Large N)

卡方检验对样本量极其敏感。 - 当 \(N\) 很大(如互联网数据中的百万级用户)时,即使是微不足道的偏差(如 0.1% 的差异),也会产生极小的 P值(P < 0.0001)。 - 后果:你会发现”一切都显著”,但这毫无意义。 - 对策:在大样本下,忘掉 P值,只看 Cramer’s V(效应量)。如果 \(V < 0.1\),哪怕 \(P\) 再小,这个关联也是微不足道的。

The chi-square test is extremely sensitive to sample size. - When \(N\) is large (e.g., millions of users in internet data), even trivial deviations (such as a 0.1% difference) can produce extremely small P-values (P < 0.0001). - Consequence: You will find that “everything is significant,” but it is meaningless. - Countermeasure: With large samples, forget the P-value and look only at Cramer’s V (effect size). If \(V < 0.1\), no matter how small \(P\) is, the association is negligible.

6.5 列联表与独立性检验 (Contingency Tables and Independence Tests)

6.5.1 列联表结构 (Structure of Contingency Tables)

列联表(Contingency Table)是两个或多个分类变量的交叉频数表。对于两个变量,其结构如下:

A contingency table is a cross-tabulation of frequencies for two or more categorical variables. For two variables, its structure is as follows:

| 列1 | 列2 | … | 列c | 行合计 | |

|---|---|---|---|---|---|

| 行1 | O₁₁ | O₁₂ | … | O₁c | R₁ |

| 行2 | O₂₁ | O₂₂ | … | O₂c | R₂ |

| … | … | … | … | … | … |

| 行r | Or₁ | Or₂ | … | Orc | Rr |

| 列合计 | C₁ | C₂ | … | Cc | n |

其中 \(O_{ij}\) 是第 \(i\) 行第 \(j\) 列的观测频数,\(n\) 是总样本量。

where \(O_{ij}\) is the observed frequency in the \(i\)-th row and \(j\)-th column, and \(n\) is the total sample size.

6.5.2 独立性检验 (Independence Test)

检验两个分类变量是否相互独立。

This test examines whether two categorical variables are independent of each other.

假设设置: - 原假设 \(H_0\): 两个变量相互独立(无关联) - 备择假设 \(H_1\): 两个变量不独立(存在关联)

Hypotheses: - Null hypothesis \(H_0\): The two variables are independent (no association) - Alternative hypothesis \(H_1\): The two variables are not independent (association exists)

理论频数计算:

Calculating Expected Frequencies:

在独立性假设下,第 \((i, j)\) 格的理论频数如 式 6.2 所示:

Under the independence assumption, the expected frequency for cell \((i, j)\) is given by 式 6.2:

\[ E_{ij} = \frac{R_i \times C_j}{n} \tag{6.2}\]

其中 \(R_i\) 和 \(C_j\) 分别是第 \(i\) 行和第 \(j\) 列的边际和。

where \(R_i\) and \(C_j\) are the marginal totals for the \(i\)-th row and \(j\)-th column, respectively.

卡方统计量:

Chi-Square Statistic:

独立性检验的卡方统计量如 式 6.3 所示:

The chi-square statistic for the independence test is shown in 式 6.3:

\[ \chi^2 = \sum_{i=1}^{r} \sum_{j=1}^{c} \frac{(O_{ij} - E_{ij})^2}{E_{ij}} \tag{6.3}\]

自由度: \(df = (r-1)(c-1)\)

Degrees of freedom: \(df = (r-1)(c-1)\)

效应量:

Effect Size:

除了统计显著性,我们还应关注关联强度。常用指标包括:

Beyond statistical significance, we should also examine the strength of association. Common measures include:

Phi系数 (\(\phi\)) (仅适用于 \(2 \times 2\) 表): \[ \phi = \sqrt{\frac{\chi^2}{n}} \]

Phi coefficient (\(\phi\)) (only applicable to \(2 \times 2\) tables): \[ \phi = \sqrt{\frac{\chi^2}{n}} \]

Cramer’s V (适用于任意大小的表): \[ V = \sqrt{\frac{\chi^2}{n \times \min(r-1, c-1)}} \]

Cramer’s V (applicable to tables of any size): \[ V = \sqrt{\frac{\chi^2}{n \times \min(r-1, c-1)}} \]

列联系数 (Contingency Coefficient \(C\)): \[ C = \sqrt{\frac{\chi^2}{\chi^2 + n}} \]

Contingency Coefficient (\(C\)): \[ C = \sqrt{\frac{\chi^2}{\chi^2 + n}} \]

解释: - \(0 \leq \phi, V, C \leq 1\) (对于 \(2 \times 2\) 表) - 值越大,关联越强 - 但这些指标的上限受表的大小影响,不同表之间难以直接比较

Interpretation: - \(0 \leq \phi, V, C \leq 1\) (for \(2 \times 2\) tables) - Larger values indicate stronger association - However, the upper bounds of these measures are affected by table dimensions, making direct comparison across different tables difficult

6.5.3 案例:长三角行业与地区的关联 (Case Study: Industry–Region Association in the Yangtze River Delta)

什么是行业与地区的独立性检验?

What Is an Industry–Region Independence Test?

在区域经济研究中,一个重要的研究问题是:一个地区的优势产业是否与其地理位置相关?例如,浙江是否更偏重信息技术行业,而江苏是否更偏重制造业?这种产业布局的区域差异对于产业政策制定、招商引资战略和投资组合的区域配置都具有重要参考价值。

In regional economic research, an important question is whether a region’s dominant industries are related to its geographic location. For example, does Zhejiang lean more heavily toward the information technology sector, while Jiangsu favors manufacturing? Such regional differences in industrial structure have significant implications for industrial policy-making, investment attraction strategies, and geographic allocation of investment portfolios.

卡方独立性检验能够回答这个问题:它检验两个分类变量(行业和省份)之间是否存在统计上的显著关联。如果检验结果拒绝独立性假设,则说明行业分布确实因地区而异,存在显著的区域产业集群效应。下面使用本地上市公司数据检验长三角地区行业分布与省份是否独立,结果如 表 6.2 所示。

The chi-square independence test can answer this question: it tests whether a statistically significant association exists between two categorical variables (industry and province). If the test rejects the independence hypothesis, it indicates that industry distributions do vary across regions, suggesting significant regional industrial clustering effects. Below we use local listed-company data to test whether the industry distribution in the Yangtze River Delta is independent of province, with results shown in 表 6.2.

# ========== 导入所需库 ==========

# ========== Import required libraries ==========

import pandas as pd # 数据分析库

# Import the pandas library for data analysis

import numpy as np # 数值计算库

# Import the numpy library for numerical computation

from scipy.stats import chi2_contingency # 卡方独立性检验函数

# Import the chi-square independence test function

import platform # 操作系统检测

# Import the platform module for OS detection

from pathlib import Path # 跨平台路径处理

# Import Path for cross-platform path handling

# ========== 第1步:设置本地数据路径 ==========

# ========== Step 1: Set local data path ==========

# 使用本地上市公司数据:检验行业与地区的独立性

# Use local listed-company data: test independence between industry and region

if platform.system() == 'Windows': # Windows系统路径

# Windows system path

stock_data_directory_path = Path('C:/qiufei/data/stock') # 设置Windows本地股票数据目录

# Set the Windows local stock data directory

else: # Linux系统路径

# Linux system path

stock_data_directory_path = Path('/home/ubuntu/r2_data_mount/qiufei/data/stock') # 设置Linux本地股票数据目录

# Set the Linux local stock data directory

# ========== 第2步:读取上市公司基本信息 ==========

# ========== Step 2: Load listed-company basic information ==========

stock_basic_info_dataframe = pd.read_hdf( # 加载HDF5格式数据

# Load data in HDF5 format

stock_data_directory_path / 'stock_basic_data.h5' # 指定上市公司基本信息文件路径

# Specify the file path for listed-company basic information

)上市公司基本信息数据加载完毕。下面筛选长三角企业并构建行业×地区列联表。

Listed-company basic information has been loaded. Next, we filter for Yangtze River Delta companies and construct the industry × region contingency table.

# ========== 第3步:筛选长三角三省企业 ==========

# ========== Step 3: Filter companies in the three YRD provinces ==========

yangtze_river_delta_provinces = ['上海市', '浙江省', '江苏省'] # 长三角三省市

# The three provinces/municipalities of the Yangtze River Delta

yrd_companies_dataframe = stock_basic_info_dataframe[ # 按省份筛选

# Filter by province

stock_basic_info_dataframe['province'].isin(yangtze_river_delta_provinces) # 保留省份属于长三角的公司

# Keep companies whose province belongs to the YRD

].copy() # 深拷贝避免链式赋值警告

# Deep copy to avoid chained-assignment warnings

# ========== 第4步:选取前4大行业并构建列联表 ==========

# ========== Step 4: Select the top 4 industries and build a contingency table ==========

top_four_industries_list = ( # 获取频数最高的4个行业

# Get the 4 industries with the highest frequency

yrd_companies_dataframe['industry_name'].value_counts().head(4).index.tolist() # 统计行业频次并取前4名

# Count industry frequencies and take the top 4

)

yrd_top_industry_dataframe = yrd_companies_dataframe[ # 筛选这4个行业的公司

# Filter companies in these 4 industries

yrd_companies_dataframe['industry_name'].isin(top_four_industries_list) # 保留行业名在前4列表中的公司

# Keep companies whose industry name is in the top 4 list

]

industry_area_contingency_table = pd.crosstab( # 创建 行业×地区 交叉频数表

# Create the industry × region cross-tabulation

yrd_top_industry_dataframe['industry_name'], # 行变量:行业名称

# Row variable: industry name

yrd_top_industry_dataframe['province'], # 列变量:省份

# Column variable: province

margins=False # 不添加边际合计行/列

# Do not add marginal totals

)

print('=' * 60) # 打印分隔线

# Print a separator line

print('行业 vs. 地区 列联表 (长三角上市公司)') # 输出标题

# Print the table title

print('=' * 60) # 打印分隔线

# Print a separator line

print(industry_area_contingency_table) # 输出行业与地区的交叉频数表

# Print the industry–region cross-tabulation============================================================

行业 vs. 地区 列联表 (长三角上市公司)

============================================================

province 上海市 江苏省 浙江省

industry_name

专用设备制造业 32 62 52

化学原料和化学制品制造业 24 60 47

电气机械和器材制造业 23 66 61

计算机、通信和其他电子设备制造业 45 88 47行业与地区的列联表已构建完毕。从列联表中可以初步观察到:计算机、通信行业在上海(45家)和江苏(88家)的集中度较高,而在浙江仅47家;电气机械行业在江苏(66家)和浙江(61家)较为均衡,上海仅23家。这些差异是否具有统计显著性?下面执行卡方独立性检验,计算Cramer’s V效应量和标准化残差,以判断行业分布在长三角三省之间是否存在显著差异。

The contingency table has been constructed. From the table, we can make some preliminary observations: the computer and telecommunications industry shows higher concentration in Shanghai (45 firms) and Jiangsu (88 firms), while only 47 firms are in Zhejiang; the electrical machinery industry is relatively balanced between Jiangsu (66 firms) and Zhejiang (61 firms), with only 23 firms in Shanghai. Are these differences statistically significant? Below we perform the chi-square independence test, calculate Cramer’s V effect size and standardized residuals, to determine whether the industry distribution differs significantly across the three YRD provinces.

# ========== 第5步:执行卡方独立性检验 ==========

# ========== Step 5: Perform the chi-square independence test ==========

chi2_statistic_value, calculated_p_value, degrees_of_freedom_value, expected_frequencies_array = ( # 执行卡方独立性检验

# Perform the chi-square independence test

chi2_contingency(industry_area_contingency_table) # 返回χ²、p值、df、期望频数

# Returns χ², p-value, df, and expected frequencies

)

print(f'\n卡方统计量: {chi2_statistic_value:.4f}') # 输出χ²统计量

# Print the χ² statistic

print(f'自由度: {degrees_of_freedom_value}') # 输出自由度

# Print the degrees of freedom

print(f'p值: {calculated_p_value:.6f}') # 输出p值

# Print the p-value

# ========== 第6步:计算Cramer's V效应量 ==========

# ========== Step 6: Calculate the Cramer's V effect size ==========

total_samples_count = industry_area_contingency_table.sum().sum() # 总样本量

# Total sample size

minimum_dimension_size = min( # min(行数, 列数) - 1

# min(number of rows, number of columns) - 1

industry_area_contingency_table.shape[0], # 列联表行数

# Number of rows in the contingency table

industry_area_contingency_table.shape[1] # 列联表列数

# Number of columns in the contingency table

) - 1 # 取最小维度减1

# Subtract 1 from the minimum dimension

cramers_v_statistic = np.sqrt( # Cramer's V = √(χ²/(N×min(r-1,c-1)))

# Cramer's V = √(χ² / (N × min(r-1, c-1)))

chi2_statistic_value / (total_samples_count * minimum_dimension_size) # 卡方统计量除以样本量与最小维度之积

# Chi-square statistic divided by the product of sample size and minimum dimension

)

print(f'\nCramer\'s V: {cramers_v_statistic:.4f}') # 输出Cramer's V效应量

# Print the Cramer's V effect size

卡方统计量: 10.5034

自由度: 6

p值: 0.104992

Cramer's V: 0.0930卡方独立性检验结果显示:\(\chi^2 = 10.5034\),自由度 \(df = 6\),\(p = 0.1050\)。由于 \(p\) 值大于显著性水平0.05,我们不能拒绝行业与地区独立的原假设——这意味着在统计学意义上,长三角三省的前4大行业分布不存在显著差异。Cramer’s V效应量仅为0.0930,非常接近于零,进一步证实了行业与地区之间的关联强度极为微弱。下面根据效应量大小对关联强度进行定性评价。

The chi-square independence test results show: \(\chi^2 = 10.5034\), degrees of freedom \(df = 6\), \(p = 0.1050\). Since the \(p\)-value exceeds the significance level of 0.05, we cannot reject the null hypothesis of independence between industry and region — this means that, in a statistical sense, the distribution of the top 4 industries does not differ significantly across the three YRD provinces. The Cramer’s V effect size is only 0.0930, very close to zero, further confirming that the association between industry and region is extremely weak. Below, we qualitatively assess the strength of association based on the effect size.

# 根据Cramer's V判断关联强度

# Assess association strength based on Cramer's V

if cramers_v_statistic < 0.1: # V < 0.1: 极弱

# V < 0.1: negligible

association_strength_description = '极弱' # 赋值极弱关联

# Assign negligible association

elif cramers_v_statistic < 0.3: # 0.1 ≤ V < 0.3: 弱

# 0.1 ≤ V < 0.3: weak

association_strength_description = '弱' # 赋值弱关联

# Assign weak association

elif cramers_v_statistic < 0.5: # 0.3 ≤ V < 0.5: 中等

# 0.3 ≤ V < 0.5: moderate

association_strength_description = '中等' # 赋值中等关联

# Assign moderate association

else: # V ≥ 0.5: 强

# V ≥ 0.5: strong

association_strength_description = '强' # 赋值强关联

# Assign strong association

print(f'关联强度: {association_strength_description}') # 输出关联强度定性评价

# Print the qualitative assessment of association strength关联强度: 极弱按照Cohen的标准,Cramer’s V = 0.093 < 0.1被判定为极弱关联。这意味着即使我们在列联表中观察到了一些数值差异(如计算机通信行业在上海相对集中),这些差异在统计上微不足道——行业类型与所在省份几乎是独立的。下面输出理论频数、标准化残差与统计结论,进一步验证这一判断。

According to Cohen’s criteria, Cramer’s V = 0.093 < 0.1 is classified as a negligible association. This means that even though we observed some numerical differences in the contingency table (e.g., the computer and telecommunications industry is relatively concentrated in Shanghai), these differences are statistically trivial — industry type and province are essentially independent. Below we output the expected frequencies, standardized residuals, and statistical conclusion to further verify this assessment.

# ========== 第7步:输出理论频数(独立性假设下) ==========

# ========== Step 7: Output expected frequencies (under the independence assumption) ==========

expected_frequencies_dataframe = pd.DataFrame( # 将数组转为带标签的DataFrame

# Convert the array to a labeled DataFrame

expected_frequencies_array, # 期望频数矩阵

# Expected frequency matrix

index=industry_area_contingency_table.index, # 行索引:行业名称

# Row index: industry names

columns=industry_area_contingency_table.columns # 列索引:省份名称

# Column index: province names

)

print(f'\n理论频数 (独立性假设下):') # 输出理论频数标题

# Print the expected frequencies heading

print(expected_frequencies_dataframe.round(2)) # 保留2位小数

# Round to 2 decimal places

# ========== 第8步:计算标准化残差(|残差|>2为显著偏离) ==========

# ========== Step 8: Calculate standardized residuals (|residual| > 2 indicates significant deviation) ==========

standardized_residuals_matrix = ( # 标准化残差=(O-E)/√E

# Standardized residual = (O - E) / √E

industry_area_contingency_table.values - expected_frequencies_array # 观测频数减去期望频数

# Observed frequencies minus expected frequencies

) / np.sqrt(expected_frequencies_array) # 除以期望频数平方根进行标准化

# Divide by the square root of expected frequencies for standardization

standardized_residuals_dataframe = pd.DataFrame( # 转为带标签DataFrame

# Convert to a labeled DataFrame

standardized_residuals_matrix, # 残差矩阵数据

# Residual matrix data

index=industry_area_contingency_table.index, # 行索引:行业名称

# Row index: industry names

columns=industry_area_contingency_table.columns # 列索引:省份名称

# Column index: province names

)

print(f'\n标准化残差 (|残差|>2 为显著偏离):') # 输出残差表标题

# Print the standardized residuals heading

print(standardized_residuals_dataframe.round(2)) # 打印残差矩阵,保留两位小数

# Print the residual matrix, rounded to 2 decimal places

理论频数 (独立性假设下):

province 上海市 江苏省 浙江省

industry_name

专用设备制造业 29.83 66.39 49.79

化学原料和化学制品制造业 26.76 59.57 44.67

电气机械和器材制造业 30.64 68.20 51.15

计算机、通信和其他电子设备制造业 36.77 81.85 61.38

标准化残差 (|残差|>2 为显著偏离):

province 上海市 江苏省 浙江省

industry_name

专用设备制造业 0.40 -0.54 0.31

化学原料和化学制品制造业 -0.53 0.06 0.35

电气机械和器材制造业 -1.38 -0.27 1.38

计算机、通信和其他电子设备制造业 1.36 0.68 -1.84表 6.2 展示了理论频数和标准化残差矩阵。理论频数矩阵显示了在行业与地区完全独立的假设下,各单元格应有的期望公司数量。标准化残差矩阵中,所有残差的绝对值均小于2(最大为计算机通信×浙江的 -1.84),这说明没有任何单元格存在统计上的显著偏离,与 \(p = 0.105\) 的整体检验结果一致。下面根据检验结果输出统计结论。

表 6.2 presents the expected frequencies and the standardized residuals matrix. The expected frequency matrix shows the number of companies each cell should contain under the assumption of complete independence between industry and region. In the standardized residuals matrix, all absolute values are less than 2 (the largest being −1.84 for computer & telecommunications × Zhejiang), indicating that no cell exhibits a statistically significant deviation, consistent with the overall test result of \(p = 0.105\). Below, we output the statistical conclusion based on the test results.

# ========== 第9步:输出统计结论 ==========

# ========== Step 9: Output the statistical conclusion ==========

print(f'\n结论 (α=0.05):') # 输出统计结论标题

# Print the statistical conclusion heading

if calculated_p_value < 0.05: # p < 0.05 拒绝独立性H0

# p < 0.05: reject the independence null hypothesis

print(f' 拒绝H0 (p={calculated_p_value:.6f} < 0.05)') # 输出拒绝原假设结论

# Print the conclusion of rejecting H0

print(f' 行业分布在长三角三省之间存在显著差异') # 说明行业分布差异显著

# State that industry distribution differs significantly across the three YRD provinces

print(f' 关联强度为{association_strength_description}') # 输出关联强度描述

# Print the association strength description

else: # p ≥ 0.05 不拒绝H0

# p ≥ 0.05: do not reject H0

print(f' 不能拒绝H0 (p={calculated_p_value:.6f} >= 0.05)') # 输出不拒绝结论

# Print the conclusion of not rejecting H0

print(f' 没有证据表明行业分布与地区有显著关联') # 说明无显著关联

# State there is insufficient evidence of a significant association between industry distribution and region

结论 (α=0.05):

不能拒绝H0 (p=0.104992 >= 0.05)

没有证据表明行业分布与地区有显著关联统计结论明确:在 \(\alpha = 0.05\) 水平下不能拒绝原假设(\(p = 0.105\)),没有足够的证据表明长三角三省的主要行业分布存在显著差异。结合Cramer’s V = 0.093(极弱关联),我们可以得出一个有意义的经济学解读——长三角地区的产业一体化程度较高,上海、浙江和江苏三省市在制造业的行业结构上具有高度的同质性。这一发现与长三角作为中国经济引擎的”协同发展”战略定位相吻合。

The statistical conclusion is clear: at the \(\alpha = 0.05\) significance level, we cannot reject the null hypothesis (\(p = 0.105\)); there is insufficient evidence that the distribution of major industries differs significantly across the three YRD provinces. Combined with Cramer’s V = 0.093 (negligible association), we can draw a meaningful economic interpretation — the Yangtze River Delta exhibits a high degree of industrial integration, with Shanghai, Zhejiang, and Jiangsu showing highly homogeneous industrial structures in the manufacturing sector. This finding is consistent with the YRD’s strategic positioning as China’s economic engine for “coordinated development.”

6.5.4 列联表的可视化 (Visualization of Contingency Tables)

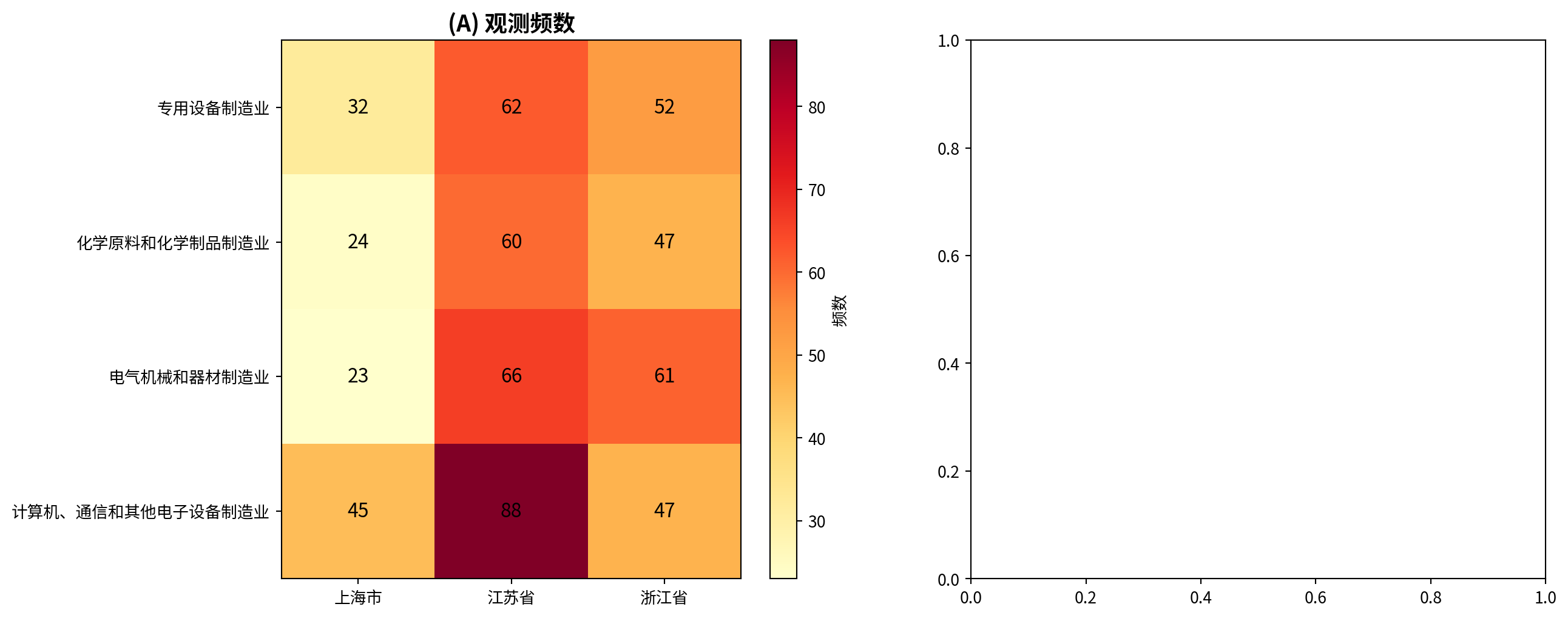

图 6.1 展示了长三角上市公司行业与地区关联的热图可视化。

图 6.1 presents a heatmap visualization of the industry–region association for listed companies in the Yangtze River Delta.

# ========== 导入所需库 ==========

# ========== Import required libraries ==========

import matplotlib.pyplot as plt # 绘图库

# Import the matplotlib plotting library

import numpy as np # 数值计算库

# Import the numpy library for numerical computation

import platform # 操作系统检测

# Import the platform module for OS detection

from pathlib import Path # 跨平台路径处理

# Import Path for cross-platform path handling

# ========== 第1步:设置数据路径并加载数据 ==========

# ========== Step 1: Set data path and load data ==========

if platform.system() == 'Windows': # Windows系统路径

# Windows system path

heatmap_data_directory = Path('C:/qiufei/data/stock') # 设置Windows本地股票数据目录

# Set the Windows local stock data directory

else: # Linux系统路径

# Linux system path

heatmap_data_directory = Path('/home/ubuntu/r2_data_mount/qiufei/data/stock') # 设置Linux本地股票数据目录

# Set the Linux local stock data directory

heatmap_stock_basic_dataframe = pd.read_hdf( # 加载上市公司基本信息

# Load listed-company basic information

heatmap_data_directory / 'stock_basic_data.h5' # 指定数据文件路径

# Specify the data file path

)上市公司基本信息数据加载完毕。下面筛选长三角企业并构建行业×地区列联表和标准化残差矩阵。

Listed-company basic information has been loaded. Next, we filter for YRD companies and construct the industry × region contingency table and standardized residuals matrix.

# ========== 第2步:筛选长三角企业并构建列联表 ==========

# ========== Step 2: Filter YRD companies and build a contingency table ==========

heatmap_yrd_provinces = ['上海市', '浙江省', '江苏省'] # 三省市列表

# List of three provinces/municipalities

heatmap_yrd_dataframe = heatmap_stock_basic_dataframe[ # 按省份筛选

# Filter by province

heatmap_stock_basic_dataframe['province'].isin(heatmap_yrd_provinces) # 保留省份属于长三角的公司

# Keep companies whose province belongs to the YRD

]

heatmap_top_industries = ( # 取频数最高的4个行业

# Get the 4 industries with the highest frequency

heatmap_yrd_dataframe['industry_name'].value_counts().head(4).index.tolist() # 统计行业频次取前4

# Count industry frequencies and take the top 4

)

heatmap_filtered_dataframe = heatmap_yrd_dataframe[ # 筛选前4大行业

# Filter for the top 4 industries

heatmap_yrd_dataframe['industry_name'].isin(heatmap_top_industries) # 保留行业在前4列表中的公司

# Keep companies whose industry is in the top 4 list

]

heatmap_contingency_table = pd.crosstab( # 构建 行业×地区 列联表

# Build the industry × region contingency table

heatmap_filtered_dataframe['industry_name'], # 行变量:行业名称

# Row variable: industry name

heatmap_filtered_dataframe['province'] # 列变量:省份

# Column variable: province

)

# ========== 第3步:计算理论频数和标准化残差 ==========

# ========== Step 3: Calculate expected frequencies and standardized residuals ==========

from scipy.stats import chi2_contingency # 导入独立性检验函数

# Import the independence test function

_, _, _, heatmap_expected_frequencies = chi2_contingency(heatmap_contingency_table) # 获取期望频数矩阵

# Get the expected frequency matrix

heatmap_observed_matrix = heatmap_contingency_table.values # 观测频数矩阵

# Observed frequency matrix

heatmap_std_residuals = ( # 标准化残差=(O-E)/√E

# Standardized residual = (O - E) / √E

(heatmap_observed_matrix - heatmap_expected_frequencies) # 观测与期望的偏差

# Difference between observed and expected

/ np.sqrt(heatmap_expected_frequencies) # 除以期望频数平方根进行标准化

# Divide by the square root of expected frequencies for standardization

)

heatmap_row_labels = heatmap_contingency_table.index.tolist() # 行标签(行业名称)

# Row labels (industry names)

heatmap_col_labels = heatmap_contingency_table.columns.tolist() # 列标签(省份名称)

# Column labels (province names)列联表及标准化残差矩阵已计算完毕。下面绘制双面板热图:左图展示观测频数,右图展示标准化残差(|残差|>2为显著偏离)。

The contingency table and standardized residuals matrix have been computed. Below we plot a dual-panel heatmap: the left panel shows observed frequencies, and the right panel shows standardized residuals (|residual| > 2 indicates significant deviation).

# ========== 第4步:创建画布并绘制左子图(观测频数热图) ==========

# ========== Step 4: Create the canvas and draw the left subplot (observed frequency heatmap) ==========

matplot_figure, matplot_axes_array = plt.subplots(1, 2, figsize=(14, 6)) # 1×2子图布局

# 1×2 subplot layout

# --- 左图: 观测频数热图 ---

# --- Left panel: observed frequency heatmap ---

heatmap_image_1 = matplot_axes_array[0].imshow( # 用imshow渲染矩阵

# Render the matrix with imshow

heatmap_observed_matrix, cmap='YlOrRd', aspect='auto' # 黄-橙-红色彩映射

# Yellow-orange-red color mapping

)

matplot_axes_array[0].set_xticks(np.arange(len(heatmap_col_labels))) # 设置x轴刻度

# Set x-axis tick positions

matplot_axes_array[0].set_yticks(np.arange(len(heatmap_row_labels))) # 设置y轴刻度

# Set y-axis tick positions

matplot_axes_array[0].set_xticklabels(heatmap_col_labels) # x轴标签(省份)

# x-axis labels (provinces)

matplot_axes_array[0].set_yticklabels(heatmap_row_labels) # y轴标签(行业)

# y-axis labels (industries)

matplot_axes_array[0].set_title('(A) 观测频数', fontsize=14, fontweight='bold') # 左图标题

# Left panel title

for row_index_i in range(len(heatmap_row_labels)): # 在每个格子中标注数值

# Annotate each cell with its value

for col_index_j in range(len(heatmap_col_labels)): # 遍历列索引

# Iterate over column indices

matplot_axes_array[0].text( # 在指定位置添加文本标注

# Add text annotation at the specified position

col_index_j, row_index_i, # 文本位置坐标(x, y)

# Text position coordinates (x, y)

heatmap_observed_matrix[row_index_i, col_index_j], # 要标注的观测频数值

# The observed frequency value to annotate

ha='center', va='center', color='black', fontsize=12 # 居中对齐、黑色字体

# Center alignment, black font

)

plt.colorbar(heatmap_image_1, ax=matplot_axes_array[0], label='频数') # 添加颜色条

# Add a colorbar

左子图(观测频数)绘制完毕。下面绘制右子图(标准化残差热图),其中|残差|>2的格子表示观测值显著偏离独立性假设下的期望值。

The left subplot (observed frequencies) has been drawn. Below we draw the right subplot (standardized residuals heatmap), where cells with |residual| > 2 indicate that observed values deviate significantly from the expected values under the independence assumption.

# --- 右图: 标准化残差热图 ---

# --- Right panel: standardized residuals heatmap ---

heatmap_image_2 = matplot_axes_array[1].imshow( # 用红蓝配色渲染残差

# Render residuals with a red-blue color scheme

heatmap_std_residuals, cmap='RdBu_r', vmin=-3, vmax=3, aspect='auto' # 对称色阶[-3, 3]

# Symmetric color scale [-3, 3]

)

matplot_axes_array[1].set_xticks(np.arange(len(heatmap_col_labels))) # x轴刻度

# x-axis ticks

matplot_axes_array[1].set_yticks(np.arange(len(heatmap_row_labels))) # y轴刻度

# y-axis ticks

matplot_axes_array[1].set_xticklabels(heatmap_col_labels) # 省份标签

# Province labels

matplot_axes_array[1].set_yticklabels(heatmap_row_labels) # 行业标签

# Industry labels

matplot_axes_array[1].set_title( # 子图标题

# Subplot title

'(B) 标准化残差 (|残差|>2为显著)', fontsize=14, fontweight='bold' # 标题文本及样式

# Title text and style

)

for row_index_i in range(len(heatmap_row_labels)): # 在每个格子中标注残差值

# Annotate each cell with its residual value

for col_index_j in range(len(heatmap_col_labels)): # 遍历列索引

# Iterate over column indices

cell_text_color = ( # |残差|≥2用白字突出显示

# Use white text to highlight |residual| ≥ 2

'black' if abs(heatmap_std_residuals[row_index_i, col_index_j]) < 2 # 残差绝对值<2用黑字

# Black text if absolute residual < 2

else 'white' # 残差绝对值≥2用白字突出

# White text if absolute residual ≥ 2 for emphasis

)

matplot_axes_array[1].text( # 在指定位置添加文本标注

# Add text annotation at the specified position

col_index_j, row_index_i, # 文本位置坐标(x, y)

# Text position coordinates (x, y)

f'{heatmap_std_residuals[row_index_i, col_index_j]:.1f}', # 标准化残差值保留1位小数

# Standardized residual value rounded to 1 decimal place

ha='center', va='center', color=cell_text_color, # 居中对齐,根据残差大小设置颜色

# Center alignment, color set based on residual magnitude

fontsize=11, fontweight='bold' # 字体11号加粗

# Font size 11, bold

)

plt.colorbar(heatmap_image_2, ax=matplot_axes_array[1], label='标准化残差') # 添加颜色条

# Add a colorbar

plt.tight_layout() # 自动调整间距

# Automatically adjust spacing

plt.show() # 显示热图

# Display the heatmap<Figure size 672x480 with 0 Axes>图 6.1 的左图(A面板)为观测频数热图,右图(B面板)为标准化残差热图。从频数热图可以看到,江苏省在各行业的公司数量普遍较多(颜色较深),尤其是计算机通信行业(88家);上海在计算机通信行业也有相对集中(45家)。残差热图中,整体色调偏淡,没有出现深红或深蓝的格子——这意味着没有任何行业-地区组合呈现出令人惊讶的异常频数。最引人注目的是计算机通信×浙江的浅蓝色(残差 -1.84)和电气机械×上海的浅蓝色(残差 -1.38),但它们均未超过 \(|2|\) 的显著阈值。这一可视化结果从直觉上验证了前文卡方检验”不能拒绝独立性”的统计结论。

The left panel (Panel A) of 图 6.1 shows the observed frequency heatmap, and the right panel (Panel B) shows the standardized residuals heatmap. From the frequency heatmap, we can see that Jiangsu Province generally has more companies in each industry (darker colors), especially in the computer and telecommunications industry (88 firms); Shanghai also shows relative concentration in the computer and telecommunications industry (45 firms). In the residuals heatmap, the overall color tone is muted, with no deep red or deep blue cells — this means no industry–region combination exhibits a surprisingly anomalous frequency. The most notable cells are the light blue for computer & telecommunications × Zhejiang (residual −1.84) and electrical machinery × Shanghai (residual −1.38), but neither exceeds the significance threshold of \(|2|\). This visualization intuitively corroborates the earlier chi-square test’s statistical conclusion that “we cannot reject independence.” ## 其他类型的卡方检验 (Other Types of Chi-Square Tests) {#sec-other-chisq-tests}

6.5.5 齐性检验(Homogeneity Test)

检验不同群体在某个分类变量的分布是否相同。

Tests whether different populations have the same distribution for a categorical variable.

与独立性检验的区别:

- 独立性检验: 从一个总体中抽样,考察两个变量的关联

- 齐性检验: 从多个总体中分别抽样,比较它们的分布是否一致

Difference from the Independence Test:

- Independence test: Sampling from one population to examine the association between two variables

- Homogeneity test: Sampling separately from multiple populations to compare whether their distributions are the same

计算方法: 卡方统计量计算公式相同,但抽样方式不同

Computation method: The chi-square statistic formula is the same, but the sampling design differs.

6.5.6 McNemar检验(配对卡方检验) (McNemar Test / Paired Chi-Square Test)

适用于配对二分类数据的检验,例如:

- 同一组对象在干预前后的状态变化

- 两种诊断方法对同一组样本的诊断结果比较

Applicable to tests on paired binary data, for example:

- Changes in status of the same group of subjects before and after an intervention

- Comparing diagnostic results of two diagnostic methods on the same set of samples

检验统计量:

Test statistic:

McNemar 检验的统计量如 式 6.4 所示:

The McNemar test statistic is shown in 式 6.4:

\[ \chi^2 = \frac{(b - c)^2}{b + c} \tag{6.4}\]

其中 \(b\) 和 \(c\) 是不一致的配对数。

where \(b\) and \(c\) are the number of discordant pairs.

校正版本 (适用于小样本):

Corrected version (for small samples):

\[ \chi^2_{corr} = \frac{(|b - c| - 1)^2}{b + c} \]

6.6 Fisher精确检验 (Fisher’s Exact Test)

6.6.1 适用场景 (Applicable Scenarios)

当样本量很小(理论频数 \(< 5\)),卡方检验的近似不准确时,应使用Fisher精确检验。

When the sample size is small (expected frequency \(< 5\)) and the chi-square approximation becomes inaccurate, Fisher’s exact test should be used.

原理: 基于超几何分布,计算在边际和固定的条件下,获得当前观测列联表的精确概率。

Principle: Based on the hypergeometric distribution, it calculates the exact probability of obtaining the observed contingency table given fixed marginal totals.

优点:

- 精确,不依赖大样本近似

- 适用于 \(2 \times 2\) 表

Advantages:

- Exact; does not rely on large-sample approximation

- Suitable for \(2 \times 2\) tables

缺点:

- 计算量大(尤其当样本量大时)

- 难以扩展到大表

Disadvantages:

- Computationally intensive (especially with large sample sizes)

- Difficult to extend to larger tables

6.6.2 案例:小样本案例 (Case Study: Small Sample Example)

什么是小样本下的模型比较?

What is model comparison under small samples?

在金融风控实践中,新模型上线前通常需要小规模试点。由于试点期间的样本量往往很小(如仅有50笔异常交易),常规的卡方检验可能因为期望频数过低而失效。这时,我们需要一种在小样本条件下仍然精确有效的检验方法。

In financial risk management practice, a small-scale pilot is usually required before deploying a new model. Because the sample size during the pilot phase is often very small (e.g., only 50 abnormal transactions), the conventional chi-square test may fail due to low expected frequencies. In such cases, we need a test that remains exact and valid under small-sample conditions.

Fisher精确检验通过枚举所有可能的列联表排列,计算精确的p值,不依赖大样本近似,因此特别适合小样本场景。下面是一个典型的应用场景:某长三角地区证券公司的风控部门正在评估新旧两套风控预警模型,由于试点期间仅抽取了50笔异常交易进行人工复核,样本量较小,因此采用Fisher精确检验比较两套模型的预警判断是否存在显著差异,结果如 表 6.3 所示。

Fisher’s exact test enumerates all possible arrangements of the contingency table and computes an exact p-value without relying on large-sample approximation, making it particularly suitable for small-sample scenarios. Below is a typical application: the risk management department of a securities company in the Yangtze River Delta region is evaluating two risk-alert models (old vs. new). Since only 50 abnormal transactions were sampled for manual review during the pilot, the sample size is small, so Fisher’s exact test is used to compare whether the alert decisions of the two models differ significantly. The results are shown in 表 6.3.

# ========== 导入所需库 ==========

# ========== Import required libraries ==========

from scipy.stats import fisher_exact # Fisher精确检验函数

# Fisher's exact test function

import numpy as np # 数值计算库

# Numerical computing library

# ========== 第1步:构建2×2观测列联表 ==========

# ========== Step 1: Construct the 2×2 observed contingency table ==========

# 场景:某证券公司对新旧两套风控预警模型进行对比评估

# Scenario: A securities company compares old and new risk-alert models

# 行表示旧模型预警结果,列表示新模型预警结果

# Rows represent old model alert results; columns represent new model alert results

observed_alert_matrix = np.array([ # 构建2×2观测列联表矩阵

# Construct the 2×2 observed contingency table matrix

[15, 5], # 旧模型预警: 新模型也预警15笔, 新模型未预警5笔

# Old model alerted: new model also alerted 15, new model did not alert 5

[10, 20] # 旧模型未预警: 新模型预警10笔, 新模型也未预警20笔

# Old model did not alert: new model alerted 10, new model also did not alert 20

])

# ========== 第2步:打印观测列联表 ==========

# ========== Step 2: Print the observed contingency table ==========

print('=' * 60) # 分隔线

# Separator line

print('Fisher精确检验:风控预警模型比较') # 标题

# Title: Fisher's exact test: risk-alert model comparison

print('=' * 60) # 分隔线

# Separator line

print('\n观测列联表:') # 表头

# Header: Observed contingency table

print(' 新模型预警 新模型未预警 合计') # 输出列标题

# Column headers: New model alerted / New model not alerted / Total

print('-' * 50) # 分隔线

# Separator line

alert_row_labels = ['旧模型预警', '旧模型未预警'] # 行标签(旧模型预警结果)

# Row labels (old model alert results)

for ind_i, row_data in enumerate(observed_alert_matrix): # 逐行打印

# Iterate and print each row

print(f'{alert_row_labels[ind_i]:10s} {row_data[0]:10d} {row_data[1]:10d} {row_data.sum():5d}') # 输出每行观测值及行合计

# Print observed values and row total for each row

alert_col_sums_array = observed_alert_matrix.sum(axis=0) # 列合计

# Column totals

print(f'{"合计":10s} {alert_col_sums_array[0]:10d} {alert_col_sums_array[1]:10d} {alert_col_sums_array.sum():5d}') # 输出合计行

# Print totals row============================================================

Fisher精确检验:风控预警模型比较

============================================================

观测列联表:

新模型预警 新模型未预警 合计

--------------------------------------------------

旧模型预警 15 5 20

旧模型未预警 10 20 30

合计 25 25 50上述代码输出了一个 \(2 \times 2\) 观测列联表,总样本量为50笔交易。其中,旧模型预警的20笔中,新模型也预警了15笔(一致)、但有5笔新模型未预警(遗漏);旧模型未预警的30笔中,新模型预警了10笔(新增发现)、20笔两套模型均未预警(一致)。从边际合计看,新模型共预警25笔、未预警25笔,而旧模型预警20笔、未预警30笔,新模型整体预警率更高。要严格检验两套模型的预警结果是否存在显著差异,我们需要使用Fisher精确检验。

The code above outputs a \(2 \times 2\) observed contingency table with a total sample size of 50 transactions. Among the 20 transactions flagged by the old model, the new model also flagged 15 (agreement) but missed 5 (omission). Among the 30 transactions not flagged by the old model, the new model flagged 10 (new discoveries) while both models agreed on 20 as non-alerts. Looking at the marginal totals, the new model flagged 25 transactions in total versus the old model’s 20, indicating a higher overall alert rate. To rigorously test whether the alert outcomes of the two models differ significantly, we need to use Fisher’s exact test.

观测列联表输出完毕。下面执行Fisher精确检验。

The observed contingency table has been printed. Now we proceed to perform Fisher’s exact test.

# ========== 第3步:执行Fisher精确检验 ==========

# ========== Step 3: Perform Fisher's exact test ==========

fisher_odds_ratio, fisher_calculated_p_value = fisher_exact( # 双侧检验

# Two-sided test

observed_alert_matrix, alternative='two-sided' # 传入2×2矩阵执行Fisher精确检验

# Pass the 2×2 matrix to perform Fisher's exact test

)

print(f'\nFisher精确检验结果:') # 输出检验结果

# Print test results

print(f' 比值比 (Odds Ratio): {fisher_odds_ratio:.3f}') # 比值比

# Odds ratio

print(f' p值 (双侧): {fisher_calculated_p_value:.6f}') # 精确p值

# Exact p-value (two-sided)

Fisher精确检验结果:

比值比 (Odds Ratio): 6.000

p值 (双侧): 0.008579Fisher精确检验结果显示:比值比(Odds Ratio)为6.000,双侧精确p值为0.008579。OR=6.000意味着,在旧模型预警的分组中,新模型也预警的比值是新模型未预警比值的6倍。换言之,当旧模型发出预警时,新模型同样发出预警的可能性远大于不发出预警的可能性,两套模型在”高风险”区域表现出较强的一致性,但在”低风险”区域存在显著分歧(新模型新增了10笔旧模型未识别的预警)。

The Fisher’s exact test results show: the odds ratio (OR) is 6.000 and the two-sided exact p-value is 0.008579. An OR of 6.000 means that within the group flagged by the old model, the odds of the new model also flagging is 6 times the odds of it not flagging. In other words, when the old model issues an alert, it is far more likely that the new model also issues an alert than not, indicating strong agreement between the two models in the “high-risk” zone. However, there is a significant divergence in the “low-risk” zone (the new model newly flagged 10 transactions that the old model missed).

Fisher精确检验计算完成。下面给出统计结论,并与Yates校正卡方检验对比。

Fisher’s exact test computation is complete. Below we present the statistical conclusion and compare it with the Yates-corrected chi-square test.

# ========== 第4步:给出统计结论 ==========

# ========== Step 4: Present the statistical conclusion ==========

print(f'\n结论 (α=0.05):') # 显著性水平α=0.05

# Conclusion at significance level α=0.05

if fisher_calculated_p_value < 0.05: # p值小于α则拒绝原假设

# If p-value < α, reject the null hypothesis

print(f' 拒绝H0 (p={fisher_calculated_p_value:.6f} < 0.05)') # 输出拒绝结论

# Print rejection conclusion

print(f' 两套风控模型的预警结果存在显著差异') # 说明模型差异显著

# The alert outcomes of the two risk models differ significantly

print(f' 比值比{fisher_odds_ratio:.3f}表明:') # 提示比值比含义

# The odds ratio indicates:

if fisher_odds_ratio > 1: # OR>1说明新模型预警更多

# OR > 1 means the new model alerts more

print(f' - 新模型倾向于发出更多预警信号') # 新模型更敏感

# The new model tends to issue more alert signals

else: # OR<1说明新模型预警更少

# OR < 1 means the new model alerts less

print(f' - 新模型倾向于发出更少预警信号') # 新模型更保守

# The new model tends to issue fewer alert signals

else: # p值≥α则不能拒绝原假设

# If p-value >= α, fail to reject the null hypothesis

print(f' 不能拒绝H0 (p={fisher_calculated_p_value:.6f} >= 0.05)') # 输出不拒绝结论

# Print fail-to-reject conclusion

print(f' 没有证据表明两套风控模型的预警存在差异') # 无显著差异

# No evidence that the two risk models' alerts differ

# ========== 第5步:与Yates校正卡方检验对比 ==========

# ========== Step 5: Compare with Yates-corrected chi-square test ==========

from scipy.stats import chi2_contingency # 导入卡方检验函数

# Import the chi-square test function

yates_chi2_statistic, yates_p_value, yates_dof, _ = chi2_contingency( # Yates连续性校正

# Yates continuity correction

observed_alert_matrix, correction=True # 开启校正以适应小样本

# Enable correction for small samples

)

print(f'\n对比: Yates校正卡方检验') # 输出对比结果

# Comparison: Yates-corrected chi-square test

print(f' 卡方统计量: {yates_chi2_statistic:.4f}') # 卡方统计量

# Chi-square statistic

print(f' p值: {yates_p_value:.6f}') # 近似p值

# Approximate p-value

print(f' 注: 当样本量较小时,Fisher检验更准确') # 方法适用性说明

# Note: When sample size is small, Fisher's test is more accurate

结论 (α=0.05):

拒绝H0 (p=0.008579 < 0.05)

两套风控模型的预警结果存在显著差异

比值比6.000表明:

- 新模型倾向于发出更多预警信号

对比: Yates校正卡方检验

卡方统计量: 6.7500

p值: 0.009375

注: 当样本量较小时,Fisher检验更准确上述代码的运行结果分为两部分。第一部分是Fisher精确检验的统计结论:在 \(\alpha = 0.05\) 水平下,p值=0.008579 < 0.05,拒绝原假设 \(H_0\)(两套模型的预警结果无差异),即两套风控模型的预警结果存在统计上的显著差异。比值比OR=6.000(大于1)表明新模型倾向于发出更多预警信号,说明新模型的风险识别灵敏度更高。

The results above consist of two parts. The first part is the statistical conclusion from Fisher’s exact test: at \(\alpha = 0.05\), the p-value = 0.008579 < 0.05, so we reject the null hypothesis \(H_0\) (that the alert outcomes of the two models are the same). This means the alert outcomes of the two risk models are statistically significantly different. The odds ratio OR = 6.000 (greater than 1) indicates that the new model tends to issue more alerts, suggesting higher risk-detection sensitivity.

第二部分是与Yates校正卡方检验的对比:Yates校正 \(\chi^2 = 6.7500\),对应p值为0.009375,同样显著。两种方法结论一致(均拒绝 \(H_0\)),但Fisher精确检验的p值(0.008579)比Yates校正(0.009375)略低。在总样本量仅50笔、且部分格子期望频数较小的条件下,Fisher精确检验基于精确概率分布计算,不依赖大样本渐近近似,因此结果更为可靠。这验证了”当样本量较小或期望频数低于5时,应优先使用Fisher精确检验”的方法论原则。

The second part compares with the Yates-corrected chi-square test: Yates-corrected \(\chi^2 = 6.7500\) with a corresponding p-value of 0.009375, which is also significant. Both methods reach the same conclusion (both reject \(H_0\)), but Fisher’s exact test yields a slightly lower p-value (0.008579) than the Yates correction (0.009375). With only 50 transactions and some cells having small expected frequencies, Fisher’s exact test computes based on exact probability distributions without relying on large-sample asymptotic approximation, making the result more reliable. This validates the methodological principle that “when the sample size is small or expected frequencies fall below 5, Fisher’s exact test should be preferred.”

6.6.3 启发式思考题 (Heuristic Problems)

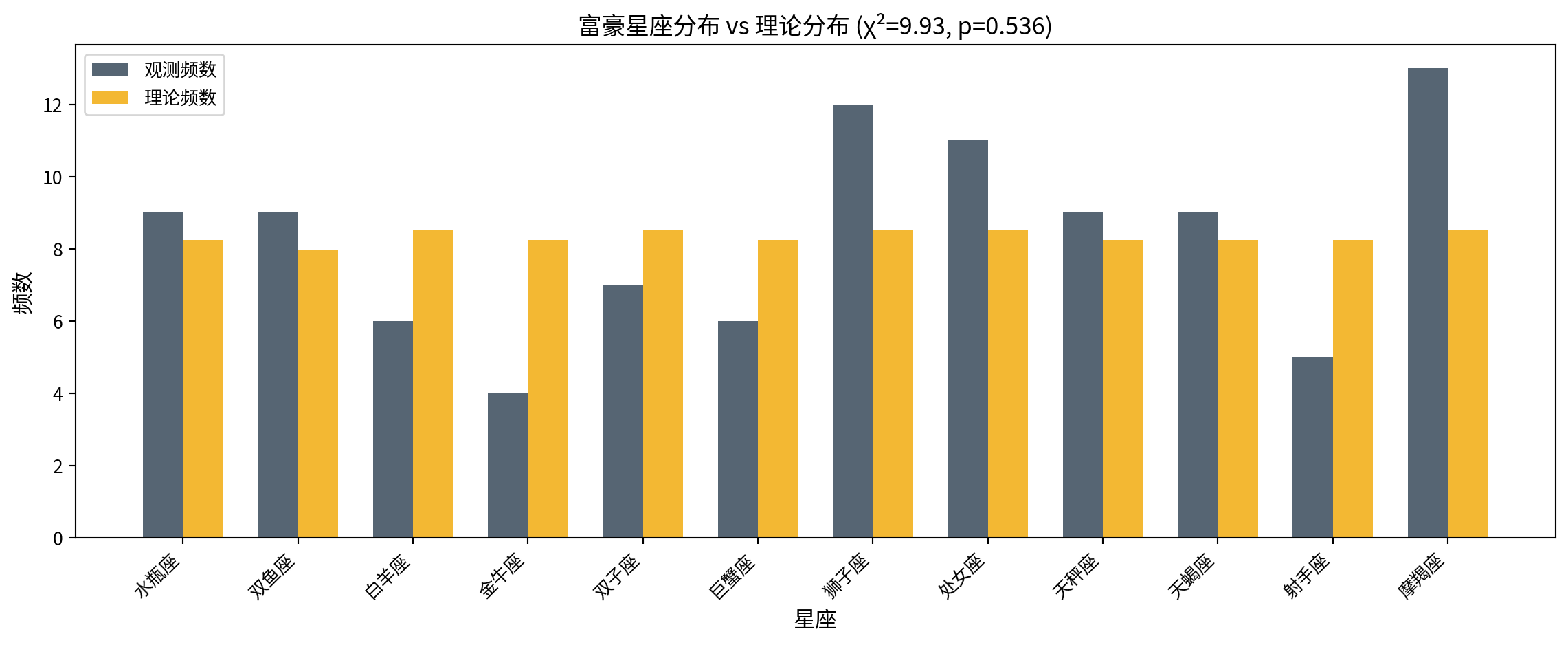

1. 彩票随机性检验 (The Lottery Randomness Test)

许多老彩民坚信彩票有”走势图”。

任务:获取中国福利彩票”双色球”最近100期的红球开奖数据(1-33号)。

统计每个号码出现的频率。

使用拟合优度检验:它们是否服从均匀分布?

如果 P < 0.05,你会怎么做?(提示:样本量才100期×6球=600个号,33个类别,期望频数约18,检验是有效的。如果显著,可能意味着…机器偏差?)

Many veteran lottery players firmly believe that lottery numbers follow “trend charts.”

Task: Obtain the red ball draw data (numbers 1–33) from the most recent 100 draws of China’s Welfare Lottery “Double Color Ball.”

Count the frequency of each number.

Use the goodness-of-fit test: Do they follow a uniform distribution?

If P < 0.05, what would you do? (Hint: The sample size is only 100 draws × 6 balls = 600 numbers, 33 categories, expected frequency ~18—the test is valid. If significant, it might mean… machine bias?)

# ========== 导入所需库 ==========

# ========== Import required libraries ==========

import numpy as np # 数值计算库

# Numerical computing library

from scipy.stats import chisquare # 卡方拟合优度检验

# Chi-square goodness-of-fit test

import matplotlib.pyplot as plt # 绘图库

# Plotting library

# ========== 第1步:设定模拟参数 ==========

# ========== Step 1: Set simulation parameters ==========

# 注:此处使用模拟数据演示统计检验方法的应用过程

# Note: Simulated data is used here to demonstrate the application of the statistical test

np.random.seed(42) # 固定随机种子,保证可复现

# Fix random seed for reproducibility

total_lottery_draws_count = 100 # 模拟100期开奖

# Simulate 100 lottery draws

red_balls_per_draw_count = 6 # 每期抽取6个红球

# 6 red balls per draw

max_red_ball_number = 33 # 红球号码范围1-33

# Red ball numbers range from 1 to 33

# ========== 第2步:模拟每期开奖数据 ==========

# ========== Step 2: Simulate draw data for each period ==========

all_drawn_red_balls_list = [] # 存储所有期的红球号码

# Store all drawn red ball numbers

for draw_index in range(total_lottery_draws_count): # 逐期模拟

# Simulate each draw

single_draw_balls = np.random.choice( # 从1-33中不放回抽取6个

# Sample 6 without replacement from 1–33

range(1, max_red_ball_number + 1), # 号码范围1刳33

# Number range 1 to 33

size=red_balls_per_draw_count, replace=False # 抽取6个,不放回

# Draw 6, without replacement

)

all_drawn_red_balls_list.extend(single_draw_balls) # 追加到总列表

# Append to the master list

all_drawn_red_balls_array = np.array(all_drawn_red_balls_list) # 转为NumPy数组

# Convert to NumPy array

# ========== 第3步:统计每个号码的观测频数 ==========

# ========== Step 3: Count the observed frequency of each number ==========

observed_ball_frequencies = np.zeros(max_red_ball_number) # 初始化33个号码的频数

# Initialize frequency array for 33 numbers

for ball_number in range(1, max_red_ball_number + 1): # 遍历每个号码

# Iterate over each number

observed_ball_frequencies[ball_number - 1] = np.sum( # 统计该号码出现次数

# Count occurrences of this number

all_drawn_red_balls_array == ball_number # 比较数组中等于当前号码的元素

# Compare array elements equal to the current number

)彩票开奖模拟和号码频数统计完成。下面计算均匀分布下的理论频数,并执行卡方拟合优度检验。

Lottery draw simulation and number frequency counting are complete. Next, we compute the theoretical frequencies under a uniform distribution and perform the chi-square goodness-of-fit test.

# ========== 第4步:计算均匀分布下的理论频数 ==========

# ========== Step 4: Calculate theoretical frequencies under uniform distribution ==========

# 每期抽6个,共100期,总共600个球;均匀分布下每个号码期望 = 600/33

# 6 balls per draw × 100 draws = 600 balls total; expected frequency per number under uniform = 600/33

total_balls_drawn_count = total_lottery_draws_count * red_balls_per_draw_count # 总球数600

# Total number of balls: 600

uniform_expected_frequency = total_balls_drawn_count / max_red_ball_number # 每号期望≈18.18

# Expected frequency per number ≈ 18.18

expected_ball_frequencies = np.full(max_red_ball_number, uniform_expected_frequency) # 33个相同期望值

# Array of 33 identical expected values

# ========== 第5步:执行卡方拟合优度检验 ==========

# ========== Step 5: Perform chi-square goodness-of-fit test ==========

chi2_lottery_statistic, lottery_p_value = chisquare( # H0: 各号码服从均匀分布

# H0: Each number follows a uniform distribution

observed_ball_frequencies, f_exp=expected_ball_frequencies # 传入观测与期望频数

# Pass observed and expected frequencies

)

print('=' * 60) # 分隔线

# Separator line

print('彩票随机性检验:双色球红球号码拟合优度检验') # 标题

# Title: Lottery randomness test: Double Color Ball red ball goodness-of-fit test

print('=' * 60) # 分隔线

# Separator line

print(f'总开奖期数: {total_lottery_draws_count}') # 期数

# Total number of draws

print(f'总红球个数: {total_balls_drawn_count}') # 总球数

# Total number of red balls

print(f'每个号码的理论频数 (均匀分布): {uniform_expected_frequency:.2f}') # 期望频数

# Theoretical frequency per number (uniform distribution)

print(f'\n卡方统计量: {chi2_lottery_statistic:.4f}') # χ²值

# Chi-square statistic

print(f'自由度: {max_red_ball_number - 1}') # df = 33-1 = 32

# Degrees of freedom = 33 - 1 = 32

print(f'p值: {lottery_p_value:.4f}') # p值

# p-value

if lottery_p_value < 0.05: # 显著性判断

# Significance assessment

print('结论: 拒绝均匀分布假设 → 号码出现频率存在显著偏差') # 拒绝H0的结论

# Conclusion: Reject the uniform distribution hypothesis → significant deviation in number frequencies

else: # p值≥α时

# When p-value >= α

print('结论: 不能拒绝均匀分布假设 → 没有证据表明开奖不随机') # 不拒绝H0的结论

# Conclusion: Fail to reject the uniform distribution hypothesis → no evidence that draws are non-random============================================================

彩票随机性检验:双色球红球号码拟合优度检验

============================================================

总开奖期数: 100

总红球个数: 600

每个号码的理论频数 (均匀分布): 18.18

卡方统计量: 36.5700

自由度: 32

p值: 0.2648

结论: 不能拒绝均匀分布假设 → 没有证据表明开奖不随机卡方拟合优度检验结果显示:在模拟的100期双色球开奖中,共产生600个红球号码,每个号码(1-33号)在均匀分布假设下的期望频数为18.18次。检验统计量 \(\chi^2 = 36.5700\),自由度 \(df = 32\),p值=0.2648。由于p值远大于0.05,我们不能拒绝均匀分布的原假设,即没有统计证据表明某些号码出现的频率高于其他号码。这一结果符合预期——模拟数据本就来自均匀分布的随机抽样过程。在实际应用中,这意味着:即便某些号码在短期内”扎堆”出现,只要样本量合理(此处600次观测、33个类别、每个类别期望频数约18),拟合优度检验就能有效区分随机波动和系统性偏差。所谓”彩票走势图”在统计学检验面前缺乏依据。

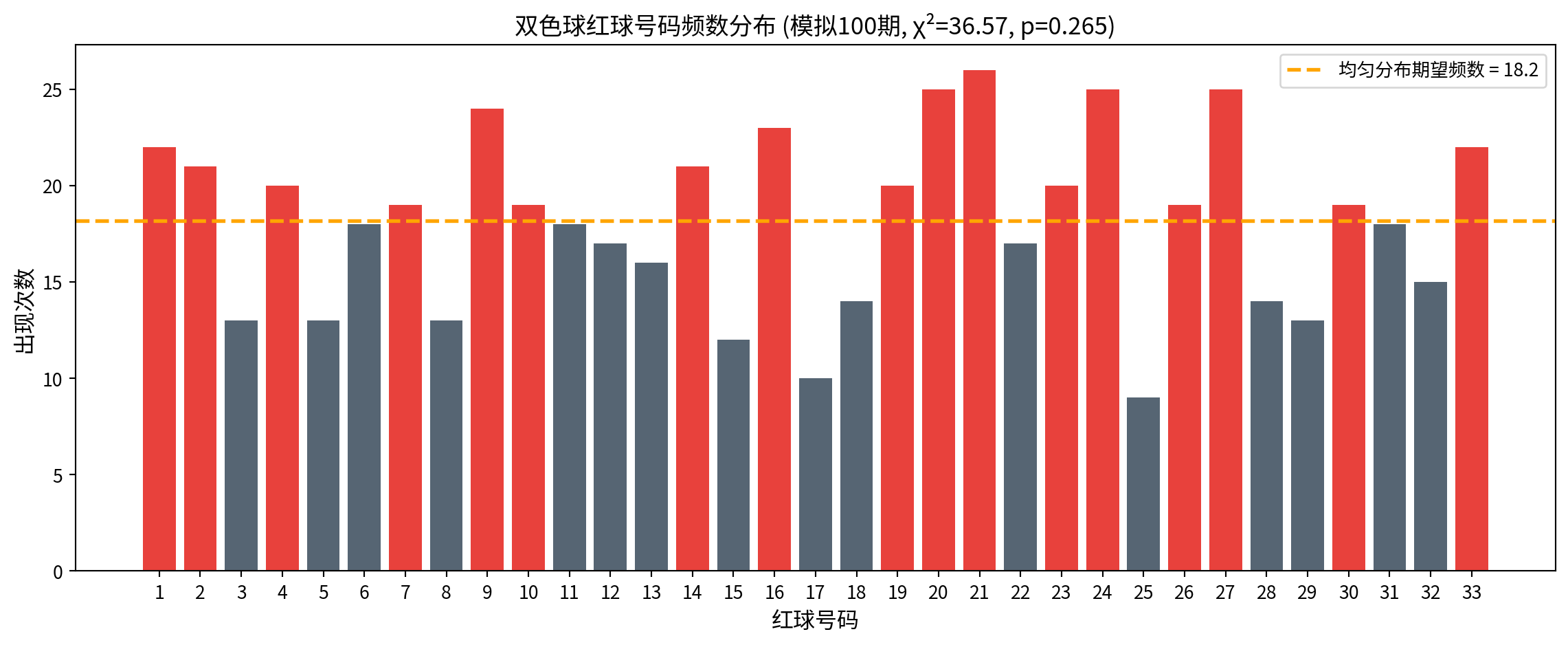

The chi-square goodness-of-fit test results show: in the simulated 100 draws of Double Color Ball, a total of 600 red ball numbers were generated. Under the uniform distribution hypothesis, the expected frequency for each number (1–33) is 18.18. The test statistic \(\chi^2 = 36.5700\), degrees of freedom \(df = 32\), and p-value = 0.2648. Since the p-value is far greater than 0.05, we fail to reject the null hypothesis of uniform distribution—there is no statistical evidence that some numbers appear more frequently than others. This result is expected, as the simulated data is generated from a uniformly random sampling process. In practice, this means: even if some numbers appear to “cluster” in the short term, as long as the sample size is reasonable (here, 600 observations, 33 categories, expected frequency ~18 per category), the goodness-of-fit test can effectively distinguish random fluctuation from systematic bias. The so-called “lottery trend charts” lack any statistical basis.

基于卡方拟合优度检验结果,我们绘制柱状图可视化各号码的观测频数与均匀分布期望频数的对比:

Based on the chi-square goodness-of-fit test results, we plot a bar chart to visualize the comparison between the observed frequency of each number and the expected frequency under a uniform distribution:

# ========== 第6步:可视化——柱状图 + 均匀分布参考线 ==========