# ========== 导入所需库 ==========

# ========== Import required libraries ==========

import pandas as pd # 数据处理与分析库

# Data processing and analysis library

import numpy as np # 数值计算库

# Numerical computation library

from scipy.stats import pearsonr, spearmanr # 皮尔逊和斯皮尔曼相关系数检验函数

# Pearson and Spearman correlation test functions

import matplotlib.pyplot as plt # 导入matplotlib绘图库(后续可视化用)

# Import matplotlib plotting library (for subsequent visualization)

import platform # 系统平台检测库

# System platform detection library

# ========== 第1步:设置本地数据路径 ==========

# ========== Step 1: Set local data path ==========

if platform.system() == 'Windows': # 判断当前操作系统是否为Windows

# Check if the current operating system is Windows

data_path = 'C:/qiufei/data/stock' # Windows平台下的股票数据路径

# Stock data path on Windows

else: # 否则为Linux平台

# Otherwise it is the Linux platform

data_path = '/home/ubuntu/r2_data_mount/qiufei/data/stock' # Linux平台下的股票数据路径

# Stock data path on Linux

# ========== 第2步:读取前复权股价数据 ==========

# ========== Step 2: Read forward-adjusted stock price data ==========

stock_price_dataframe = pd.read_hdf(f'{data_path}/stock_price_pre_adjusted.h5') # 读取前复权日度行情数据

# Read forward-adjusted daily market data

stock_price_dataframe = stock_price_dataframe.reset_index() # 将索引重置为普通列,方便后续筛选

# Reset index to regular columns for easier subsequent filtering8 相关与回归分析 (Correlation and Regression Analysis)

相关与回归分析是研究变量间关系的基础工具。相关衡量变量关联的强度,回归则描述变量间的依赖关系。这两种方法构成了商业分析、金融研究和社会科学定量研究的核心方法论。

Correlation and regression analysis are fundamental tools for studying relationships between variables. Correlation measures the strength of association between variables, while regression describes the dependence relationships among them. Together, these two methods form the core methodology of business analytics, financial research, and quantitative social science research.

8.1 相关与回归在资产定价中的典型应用 (Typical Applications of Correlation and Regression in Asset Pricing)

相关分析和回归分析是资产定价和投资组合管理的数学基石。以下展示其在中国资本市场中的核心应用场景。

Correlation analysis and regression analysis are the mathematical cornerstones of asset pricing and portfolio management. The following demonstrates their core application scenarios in China’s capital markets.

8.1.1 应用一:Beta系数与CAPM模型 (Application 1: Beta Coefficient and the CAPM Model)

资本资产定价模型(CAPM)的核心是Beta系数,它通过将个股收益率对市场收益率进行简单线性回归获得。利用 stock_price_pre_adjusted.h5 中长三角地区上市公司(如海康威视、宁波银行、恒瑞医药等)的日收益率,以沪深300指数作为市场代理,进行回归分析:

The Capital Asset Pricing Model (CAPM) centers on the Beta coefficient, which is obtained by performing a simple linear regression of individual stock returns on market returns. Using the daily returns of Yangtze River Delta listed companies (such as Hikvision, Bank of Ningbo, Hengrui Medicine, etc.) from stock_price_pre_adjusted.h5, with the CSI 300 Index as the market proxy, regression analysis is conducted:

\[ R_{i,t} - R_{f,t} = \alpha_i + \beta_i (R_{m,t} - R_{f,t}) + \varepsilon_{i,t} \]

回归斜率 \(\beta_i\) 衡量了股票对市场风险的敏感度,而截距 \(\alpha_i\) 则代表超额收益——这正是 章节 7 中假设检验方法的应用场景。

The regression slope \(\beta_i\) measures a stock’s sensitivity to market risk, while the intercept \(\alpha_i\) represents excess returns—precisely the application scenario for hypothesis testing methods discussed in 章节 7.

8.1.2 应用二:股票相关性与投资组合分散化 (Application 2: Stock Correlation and Portfolio Diversification)

马科维茨投资组合理论的核心洞见是:当资产之间的相关系数低于1时,分散化可以降低组合风险。使用 stock_price_pre_adjusted.h5 中不同行业的代表性股票,计算两两之间的皮尔逊相关系数,可以发现:同行业股票的相关性通常高于跨行业股票,而在市场极端下跌时相关性会异常升高(“相关性崩溃”),导致分散化失效。这一现象将在 章节 10 中用多元回归进一步分析。

The core insight of Markowitz portfolio theory is that when the correlation coefficient between assets is less than 1, diversification can reduce portfolio risk. Using representative stocks from different industries in stock_price_pre_adjusted.h5 to calculate pairwise Pearson correlation coefficients, one can observe that: intra-industry stock correlations are typically higher than cross-industry correlations, and during extreme market downturns, correlations surge abnormally (“correlation breakdown”), causing diversification to fail. This phenomenon will be further analyzed using multiple regression in 章节 10.

8.1.3 应用三:回归拟合与金融异象的发现 (Application 3: Regression Fitting and the Discovery of Financial Anomalies)

回归分析是发现金融市场”异象”(Anomalies)的核心工具。例如,将股票收益率对公司规模、市净率、动量等因子进行回归,如果截距项显著不为零,则意味着存在CAPM无法解释的超额收益。基于 financial_statement.h5 和 valuation_factors_quarterly_15_years.h5 中的数据,可以实证检验A股市场中是否存在规模效应、价值效应等经典异象。

Regression analysis is the core tool for discovering financial market “anomalies.” For example, by regressing stock returns on factors such as firm size, book-to-market ratio, and momentum, a statistically significant non-zero intercept implies the existence of excess returns unexplained by CAPM. Using data from financial_statement.h5 and valuation_factors_quarterly_15_years.h5, one can empirically test whether classic anomalies such as the size effect and value effect exist in China’s A-share market.

8.2 皮尔逊相关系数 (Pearson Correlation Coefficient)

8.2.1 理论背景 (Theoretical Background)

皮尔逊相关系数(Pearson Correlation Coefficient)衡量两个连续变量之间线性关系的强度和方向。它是应用最广泛的相关性度量指标。

The Pearson Correlation Coefficient measures the strength and direction of the linear relationship between two continuous variables. It is the most widely used measure of correlation.

定义(见 式 8.1):

Definition (see 式 8.1):

\[ r_{XY} = \frac{\sum_{i=1}^{n}(X_i - \bar{X})(Y_i - \bar{Y})}{\sqrt{\sum_{i=1}^{n}(X_i - \bar{X})^2}\sqrt{\sum_{i=1}^{n}(Y_i - \bar{Y})^2}} \tag{8.1}\]

等价形式(基于协方差,见 式 8.2):

Equivalent form (based on covariance, see 式 8.2):

\[ r_{XY} = \frac{\text{Cov}(X,Y)}{\sigma_X \sigma_Y} \tag{8.2}\]

其中:

- \(\text{Cov}(X,Y) = \frac{1}{n-1}\sum_{i=1}^{n}(X_i - \bar{X})(Y_i - \bar{Y})\) 为样本协方差

- \(\sigma_X, \sigma_Y\) 为样本标准差

Where:

- \(\text{Cov}(X,Y) = \frac{1}{n-1}\sum_{i=1}^{n}(X_i - \bar{X})(Y_i - \bar{Y})\) is the sample covariance

- \(\sigma_X, \sigma_Y\) are the sample standard deviations

性质:

- 取值范围:\(-1 \leq r \leq 1\)

- 符号解释:正号表示正相关,负号表示负相关

- 强度解释:绝对值越接近1,相关性越强

- 对称性:\(r_{XY} = r_{YX}\)

- 无量纲:不受变量单位影响

Properties:

- Range: \(-1 \leq r \leq 1\)

- Sign interpretation: A positive sign indicates positive correlation; a negative sign indicates negative correlation

- Strength interpretation: The closer the absolute value is to 1, the stronger the correlation

- Symmetry: \(r_{XY} = r_{YX}\)

- Dimensionless: Not affected by the units of the variables

相关系数的强度判断

Strength Guidelines for Correlation Coefficients

Cohen (1988) 提供了经验标准:

- 小相关:\(|r| \approx 0.1\)

- 中等相关:\(|r| \approx 0.3\)

- 大相关:\(|r| \approx 0.5\)

Cohen (1988) provided the following rules of thumb:

- Small correlation: \(|r| \approx 0.1\)

- Medium correlation: \(|r| \approx 0.3\)

- Large correlation: \(|r| \approx 0.5\)

然而,相关性的”实际意义”取决于具体领域。在金融工程量化交易中,\(r < 0.9\) 可能被认为不够强;而在市场营销研究中,\(r = 0.3\) 可能已经很有价值。因此,始终应结合领域知识解释相关系数。

However, the “practical significance” of a correlation depends on the specific domain. In quantitative trading within financial engineering, \(r < 0.9\) might be considered insufficiently strong; whereas in marketing research, \(r = 0.3\) could already be quite valuable. Therefore, correlation coefficients should always be interpreted in conjunction with domain knowledge.

8.2.2 显著性检验 (Significance Testing)

相关系数是否显著不同于零?我们需要进行假设检验。

Is the correlation coefficient significantly different from zero? We need to conduct a hypothesis test.

假设设置:

- 原假设 \(H_0: \rho = 0\) (总体相关系数为零)

- 备择假设 \(H_1: \rho \neq 0\) (总体相关系数不为零)

Hypothesis setup:

- Null hypothesis \(H_0: \rho = 0\) (the population correlation coefficient is zero)

- Alternative hypothesis \(H_1: \rho \neq 0\) (the population correlation coefficient is not zero)

检验统计量(式 8.3):

Test statistic (式 8.3):

\[ t = \frac{r\sqrt{n-2}}{\sqrt{1-r^2}} \tag{8.3}\]

其中 \(t\) 服从自由度为 \(n-2\) 的t分布。

Where \(t\) follows a t-distribution with \(n-2\) degrees of freedom.

相关系数的置信区间:

Confidence interval for the correlation coefficient:

使用Fisher变换(式 8.4)构建相关系数的置信区间:

The Fisher transformation (式 8.4) is used to construct the confidence interval for the correlation coefficient:

\[ z = \frac{1}{2}\ln\left(\frac{1+r}{1-r}\right) \tag{8.4}\]

变换后的 \(z\) 近似服从正态分布:

The transformed \(z\) approximately follows a normal distribution:

\[ z \sim N\left(\frac{1}{2}\ln\left(\frac{1+\rho}{1-\rho}\right), \frac{1}{n-3}\right)\]

8.2.3 适用条件与局限性 (Assumptions and Limitations)

适用条件:

- 线性关系:只衡量线性相关性

- 连续变量:两个变量都应是连续的

- 双变量正态:\((X, Y)\) 服从二元正态分布

- 无异常值:对异常值敏感

Assumptions:

- Linear relationship: Only measures linear correlation

- Continuous variables: Both variables should be continuous

- Bivariate normality: \((X, Y)\) follows a bivariate normal distribution

- No outliers: Sensitive to outliers

常见误区:

Common Misconceptions:

相关不等于因果

Correlation Does Not Imply Causation

这是统计学中最重要但也最容易被误解的原则之一。

This is one of the most important yet most easily misunderstood principles in statistics.

错误推理:“如果X和Y高度相关,那么X导致Y”

Flawed reasoning: “If X and Y are highly correlated, then X causes Y”

正确理解:

- 相关性仅描述变量共同变化的趋势,不暗示因果关系

- 可能存在混淆变量(confounding variable)同时影响X和Y

- 可能是反向因果(Y导致X)

- 可能纯属巧合(spurious correlation)

Correct understanding:

- Correlation merely describes the tendency of variables to co-vary; it does not imply causation

- There may be a confounding variable that simultaneously influences both X and Y

- It could be reverse causation (Y causes X)

- It could be purely coincidental (spurious correlation)

经典例子:

- 某平台发现空调销量与啤酒销量高度相关

- 但购买空调不会导致购买更多啤酒

- 真实原因是:夏季气温升高同时推动两者的需求增加

Classic example:

- A platform discovers that air conditioner sales and beer sales are highly correlated

- But buying an air conditioner does not cause people to buy more beer

- The real reason is: rising summer temperatures simultaneously drive demand for both

其他局限性:

- 非线性关系:相关系数无法捕捉U型、指数型等非线性关系

- 异常值影响:单个极端值可能显著改变相关系数

- 范围限制:当变量取值范围受限时,相关系数可能被低估

Other limitations:

- Nonlinear relationships: The correlation coefficient cannot capture nonlinear relationships such as U-shaped or exponential patterns

- Outlier effects: A single extreme value can significantly alter the correlation coefficient

- Range restriction: When the range of variable values is restricted, the correlation coefficient may be underestimated

8.2.4 案例:股价与成交量的关系 (Case Study: Relationship Between Stock Price and Trading Volume)

什么是量价相关性分析?

What is Price-Volume Correlation Analysis?

在技术分析和量化交易中,「量价关系」是最基础且最重要的研究主题之一。股票的价格变动与成交量变化之间是否存在稳定的统计关联?例如,海康威视(002415.XSHE)作为长三角地区安防行业的龙头企业,其股票的收益率与成交量变化率之间的相关程度能够反映市场参与者的行为特征。

In technical analysis and quantitative trading, the “price-volume relationship” is one of the most fundamental and important research topics. Is there a stable statistical association between a stock’s price movements and changes in trading volume? For example, Hikvision (002415.XSHE), as the leading security industry company in the Yangtze River Delta region, the correlation between its stock returns and volume changes can reflect behavioral characteristics of market participants.

皮尔逊相关系数和斯皮尔曼秩相关系数是度量两个变量之间线性关联和单调关联的经典统计工具。通过计算这两个指标并进行显著性检验,我们能够严谨地评估量价关系的强度和统计显著性。下面分析海康威视股票的收益率与成交量变化率的相关性,结果如 表 8.1 所示。

The Pearson correlation coefficient and Spearman rank correlation coefficient are classic statistical tools for measuring linear and monotonic associations between two variables. By calculating these two metrics and conducting significance tests, we can rigorously evaluate the strength and statistical significance of price-volume relationships. The following analyzes the correlation between Hikvision’s stock returns and volume changes, with results shown in 表 8.1.

前复权日度行情数据读取完毕。下面筛选海康威视2023年交易数据并计算日收益率与成交量变化率。

Forward-adjusted daily market data has been loaded. Next, we filter Hikvision’s 2023 trading data and calculate daily returns and volume change rates.

# ========== 第3步:筛选海康威视2023年交易数据 ==========

# ========== Step 3: Filter Hikvision 2023 trading data ==========

haikang_stock_dataframe = stock_price_dataframe[(stock_price_dataframe['order_book_id'] == '002415.XSHE') & # 海康威视股票代码

# Hikvision stock code

(stock_price_dataframe['date'] >= '2023-01-01') & # 起始日期为2023年1月1日

# Start date: January 1, 2023

(stock_price_dataframe['date'] <= '2023-12-31')].copy() # 截止日期为2023年12月31日

# End date: December 31, 2023

haikang_stock_dataframe = haikang_stock_dataframe.sort_values('date') # 按日期升序排列

# Sort by date in ascending order

# ========== 第4步:计算日收益率和成交量变化率 ==========

# ========== Step 4: Calculate daily returns and volume change rates ==========

haikang_stock_dataframe['return'] = haikang_stock_dataframe['close'].pct_change() # 计算日收益率(收盘价百分比变化)

# Calculate daily returns (percentage change in closing price)

haikang_stock_dataframe['vol_change'] = haikang_stock_dataframe['volume'].pct_change() # 计算成交量变化率(成交量百分比变化)

# Calculate volume change rate (percentage change in volume)

haikang_stock_dataframe = haikang_stock_dataframe.dropna() # 删除因差分产生的首行缺失值

# Drop first-row missing values caused by differencing

daily_returns_array = haikang_stock_dataframe['return'].values # 提取日收益率为NumPy数组

# Extract daily returns as a NumPy array

volume_changes_array = haikang_stock_dataframe['vol_change'].values # 提取成交量变化率为NumPy数组

# Extract volume change rates as a NumPy array

trading_days_count = len(haikang_stock_dataframe) # 记录有效交易日数量

# Record the number of valid trading days基于海康威视2023年日度行情数据,我们分别计算皮尔逊和斯皮尔曼相关系数,并对相关性强度和方向进行解释:

Based on Hikvision’s 2023 daily market data, we calculate both the Pearson and Spearman correlation coefficients, and interpret the strength and direction of the correlations:

# ========== 第5步:计算皮尔逊和斯皮尔曼相关系数 ==========

# ========== Step 5: Calculate Pearson and Spearman correlation coefficients ==========

pearson_correlation_coefficient, pearson_p_value = pearsonr(daily_returns_array, volume_changes_array) # 皮尔逊相关系数及其p值(衡量线性相关)

# Pearson correlation coefficient and its p-value (measures linear correlation)

spearman_correlation_coefficient, spearman_p_value = spearmanr(daily_returns_array, volume_changes_array) # 斯皮尔曼相关系数及其p值(衡量单调相关)

# Spearman correlation coefficient and its p-value (measures monotonic correlation)

# ========== 第6步:输出描述性统计信息 ==========

# ========== Step 6: Output descriptive statistics ==========

print('=' * 60) # 分隔线

# Separator line

print('海康威视(002415.XSHE)股价与成交量相关性分析') # 标题

# Title

print('=' * 60) # 分隔线

# Separator line

print('\n描述性统计:') # 描述性统计标题

# Descriptive statistics heading

print(f' 交易日数: {trading_days_count}') # 输出有效交易日数量

# Output the number of valid trading days

print(f' 平均日收益率: {np.mean(daily_returns_array)*100:.4f}%') # 输出日均收益率(百分比)

# Output mean daily return (percentage)

print(f' 收益率标准差: {np.std(daily_returns_array, ddof=1)*100:.4f}%') # 输出收益率样本标准差

# Output sample standard deviation of returns

print(f' 平均成交量变化率: {np.mean(volume_changes_array)*100:.2f}%') # 输出成交量平均变化率

# Output mean volume change rate

print(f' 成交量变化率标准差: {np.std(volume_changes_array, ddof=1)*100:.2f}%') # 输出成交量变化率标准差

# Output standard deviation of volume change rate============================================================

海康威视(002415.XSHE)股价与成交量相关性分析

============================================================

描述性统计:

交易日数: 241

平均日收益率: 0.0346%

收益率标准差: 2.0718%

平均成交量变化率: 9.27%

成交量变化率标准差: 57.10%描述性统计结果显示:海康威视2023年共有241个有效交易日,平均日收益率为0.0346%(接近于零,符合日收益率特征),收益率标准差为2.0718%,反映了该股票日度波动约2个百分点。成交量变化率方面,平均变化率为9.27%,但标准差高达57.10%,说明成交量在日间的波动远大于收益率波动——这是股票市场中常见的现象:成交量的分布通常比收益率更为离散且具有明显的右偏特征。

The descriptive statistics reveal that Hikvision had 241 valid trading days in 2023, with a mean daily return of 0.0346% (close to zero, consistent with typical daily return characteristics) and a return standard deviation of 2.0718%, reflecting daily fluctuations of approximately 2 percentage points. Regarding volume changes, the average change rate was 9.27%, but the standard deviation was as high as 57.10%, indicating that daily volume fluctuations are far greater than return fluctuations—a common phenomenon in stock markets: volume distributions are typically more dispersed than return distributions and exhibit pronounced right-skewness.

下面输出皮尔逊和斯皮尔曼相关系数的详细分析结果。

Below, we output the detailed analysis results of the Pearson and Spearman correlation coefficients.

# ========== 第7步:输出相关性分析结果 ==========

# ========== Step 7: Output correlation analysis results ==========

print('\n' + '=' * 60) # 分隔线

# Separator line

print('相关性分析结果') # 标题

# Title

print('=' * 60) # 分隔线

# Separator line

print(f'\n皮尔逊相关系数(线性相关):') # 皮尔逊部分标题

# Pearson section heading

print(f' 相关系数 r: {pearson_correlation_coefficient:.4f}') # 输出皮尔逊r值

# Output Pearson r value

print(f' p值: {pearson_p_value:.6f}') # 输出对应p值

# Output corresponding p-value

print(f' 解释: ', end='') # 输出"解释"前缀

# Output "Interpretation" prefix

if abs(pearson_correlation_coefficient) < 0.1: # 判断相关强度:<0.1为极弱

# Assess correlation strength: <0.1 is very weak

correlation_strength_description = '极弱或无相关' # 极弱或无相关

# Very weak or no correlation

elif abs(pearson_correlation_coefficient) < 0.3: # 0.1~0.3为弱相关

# 0.1–0.3 is weak correlation

correlation_strength_description = '弱相关' # 弱相关

# Weak correlation

elif abs(pearson_correlation_coefficient) < 0.5: # 0.3~0.5为中等相关

# 0.3–0.5 is moderate correlation

correlation_strength_description = '中等相关' # 中等相关

# Moderate correlation

else: # >=0.5为强相关

# >=0.5 is strong correlation

correlation_strength_description = '强相关' # 强相关

# Strong correlation

correlation_direction_description = '正' if pearson_correlation_coefficient > 0 else '负' # 判断相关方向

# Determine correlation direction

print(f'{correlation_direction_description}{correlation_strength_description}') # 输出方向+强度描述

# Output direction + strength description

if pearson_p_value < 0.05: # 判断统计显著性

# Assess statistical significance

print(f' 统计显著性: 在α=0.05水平下显著(p={pearson_p_value:.6f} < 0.05)') # 显著

# Statistically significant at α=0.05

else: # 不显著

# Not significant

print(f' 统计显著性: 不显著(p={pearson_p_value:.6f} >= 0.05)') # 输出不显著

# Output not significant

print(f'\n斯皮尔曼等级相关系数(单调相关):') # 斯皮尔曼部分标题

# Spearman rank correlation coefficient (monotonic correlation) heading

print(f' 相关系数 ρ: {spearman_correlation_coefficient:.4f}') # 输出斯皮尔曼ρ值

# Output Spearman ρ value

print(f' p值: {spearman_p_value:.6f}') # 输出对应p值

# Output corresponding p-value

============================================================

相关性分析结果

============================================================

皮尔逊相关系数(线性相关):

相关系数 r: 0.1197

p值: 0.063649

解释: 正弱相关

统计显著性: 不显著(p=0.063649 >= 0.05)

斯皮尔曼等级相关系数(单调相关):

相关系数 ρ: 0.2300

p值: 0.000318相关性分析结果揭示了一个有趣的发现:皮尔逊相关系数 \(r = 0.1197\),p值为0.063649,在5%显著性水平下未通过显著性检验,属于”正弱相关”。这意味着从严格的线性关系角度看,海康威视的日收益率与成交量变化率之间的线性关联较弱。然而,斯皮尔曼等级相关系数 \(\rho = 0.2300\),p值为0.000318,在1%水平下高度显著。两个相关系数之间的差异提示:量价之间可能存在非线性的单调关系——即收益率与成交量变化率在秩序上的一致性(上涨伴随放量、下跌伴随缩量的趋势)比简单的线性关系更为明显。这一差异对技术分析实践具有重要指导意义。

The correlation analysis reveals an interesting finding: the Pearson correlation coefficient \(r = 0.1197\) with a p-value of 0.063649 fails to pass the significance test at the 5% level, categorized as “positive weak correlation.” This means that from a strict linear relationship perspective, the linear association between Hikvision’s daily returns and volume changes is weak. However, the Spearman rank correlation coefficient \(\rho = 0.2300\) with a p-value of 0.000318 is highly significant at the 1% level. The discrepancy between the two correlation coefficients suggests that a nonlinear monotonic relationship may exist between price and volume—namely, the ordinal consistency between returns and volume changes (the tendency for rising prices to accompany increased volume and falling prices to accompany decreased volume) is more pronounced than a simple linear relationship. This discrepancy has important practical implications for technical analysis.

下面从实际意义角度解读量价关系。

Below, we interpret the price-volume relationship from a practical significance perspective.

# ========== 第8步:输出实际意义解释 ==========

# ========== Step 8: Output practical significance interpretation ==========

print('\n' + '=' * 60) # 分隔线

# Separator line

print('实际意义解释') # 标题

# Title

print('=' * 60) # 分隔线

# Separator line

print(f'股价收益率与成交量变化率的皮尔逊相关系数为{pearson_correlation_coefficient:.4f},') # 总结相关系数

# Summarize the correlation coefficient

print(f'表明两者之间存在{correlation_strength_description}。') # 总结相关强度

# Summarize the correlation strength

print(f'\n在股市技术分析中,量价关系是重要指标:') # 技术分析背景说明

# Background on technical analysis

if pearson_correlation_coefficient > 0: # 如果正相关

# If positively correlated

print(f' - 正相关意味着:股价上涨往往伴随成交量增加') # 正相关的市场含义

# Positive correlation implies: stock price increases are often accompanied by volume increases

print(f' - 这可能反映:买盘积极推动价格上涨') # 可能的驱动机制

# This may reflect: active buying pressure driving prices up

else: # 如果负相关

# If negatively correlated

print(f' - 负相关意味着:股价下跌时成交量可能放大') # 负相关的市场含义

# Negative correlation implies: volume may increase when stock prices fall

print(f' - 这可能反映:恐慌性抛售导致量增价跌') # 可能的驱动机制

# This may reflect: panic selling leading to increased volume and declining prices

print(f'\n注意:相关性不等于因果性。成交量变化未必是') # 因果性警告

# Causality warning

print(f'股价变化的原因,两者可能同时受市场情绪、') # 第三因素说明

# Third-factor explanation

print(f'公司消息等第三因素影响。') # 伪相关提醒

# Spurious correlation reminder

============================================================

实际意义解释

============================================================

股价收益率与成交量变化率的皮尔逊相关系数为0.1197,

表明两者之间存在弱相关。

在股市技术分析中,量价关系是重要指标:

- 正相关意味着:股价上涨往往伴随成交量增加

- 这可能反映:买盘积极推动价格上涨

注意:相关性不等于因果性。成交量变化未必是

股价变化的原因,两者可能同时受市场情绪、

公司消息等第三因素影响。8.2.5 相关性的可视化 (Visualization of Correlation)

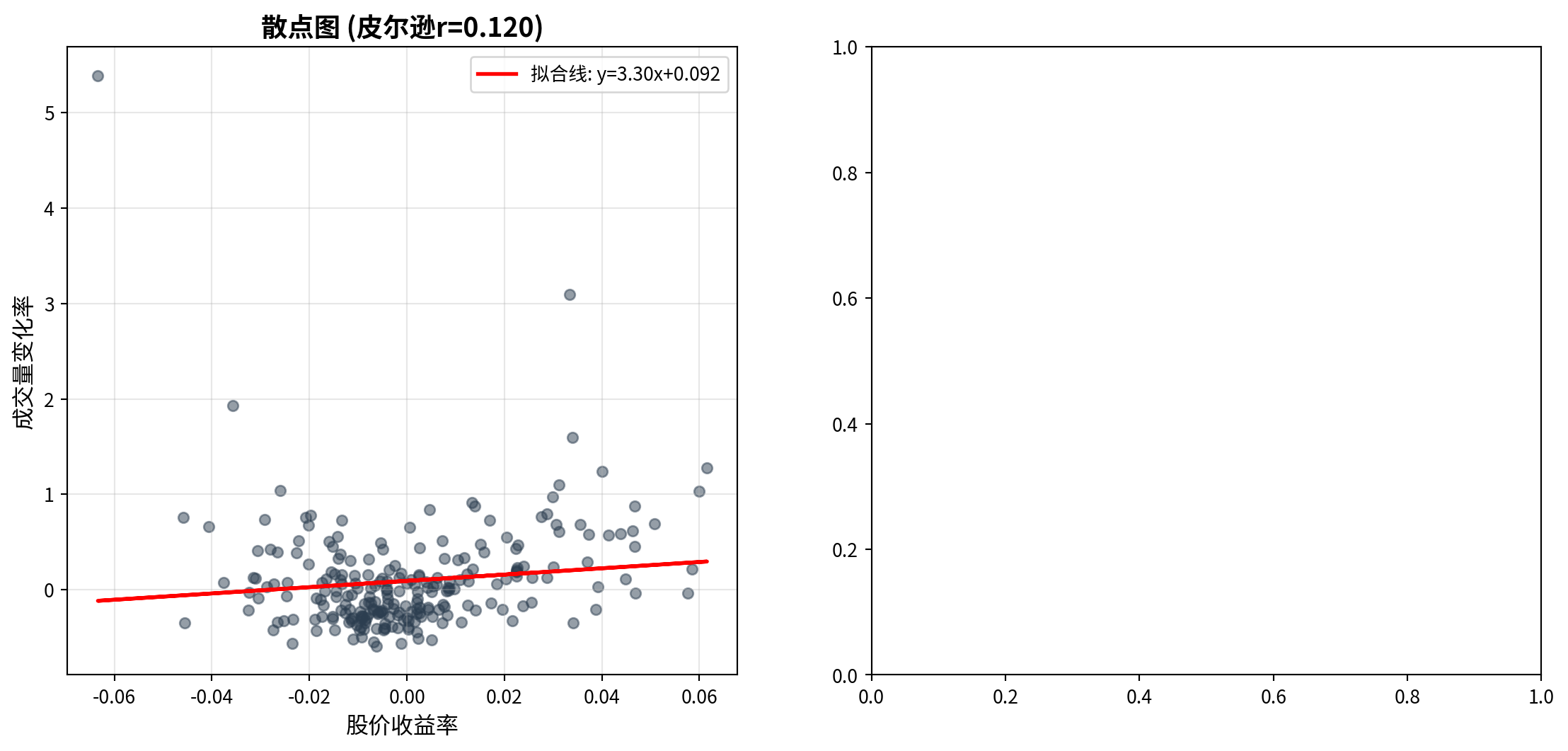

图 8.1 展示了股价收益率与成交量变化率的散点图及时间序列对比。

图 8.1 displays the scatter plot and time series comparison of stock price returns versus volume change rates.

# ========== 导入所需库 ==========

# ========== Import required libraries ==========

import matplotlib.pyplot as plt # 导入matplotlib绘图库,用于散点图和时间序列可视化

# Import matplotlib for scatter plots and time series visualization

# ========== 第1步:创建1行2列子图画布 ==========

# ========== Step 1: Create a 1-row, 2-column subplot canvas ==========

matplot_figure, matplot_axes_array = plt.subplots(1, 2, figsize=(14, 6)) # 创建14x6英寸的双面板图

# Create a 14x6 inch dual-panel figure

# ========== 第2步:左图——散点图与拟合线 ==========

# ========== Step 2: Left panel — Scatter plot with fitted line ==========

matplot_axes_array[0].scatter(daily_returns_array, volume_changes_array, alpha=0.5, s=30, color='#2C3E50') # 绘制收益率vs成交量变化率散点图

# Plot returns vs. volume change rate scatter plot

# 添加一次多项式拟合线

# Add a first-degree polynomial fitted line

polyfit_coefficients_array = np.polyfit(daily_returns_array, volume_changes_array, 1) # 一阶多项式拟合(即线性拟合),返回斜率和截距

# First-degree polynomial fit (i.e., linear fit), returns slope and intercept

polynomial_function_1d = np.poly1d(polyfit_coefficients_array) # 将拟合系数转换为多项式函数对象

# Convert fit coefficients into a polynomial function object

matplot_axes_array[0].plot(daily_returns_array, polynomial_function_1d(daily_returns_array), 'r-', linewidth=2, label=f'拟合线: y={polyfit_coefficients_array[0]:.2f}x{polyfit_coefficients_array[1]:+.3f}') # 绘制红色拟合线并标注方程

# Plot the red fitted line with the equation annotated

matplot_axes_array[0].set_xlabel('股价收益率', fontsize=12) # 设置x轴标签

# Set x-axis label

matplot_axes_array[0].set_ylabel('成交量变化率', fontsize=12) # 设置y轴标签

# Set y-axis label

matplot_axes_array[0].set_title(f'散点图 (皮尔逊r={pearson_correlation_coefficient:.3f})', fontsize=14, fontweight='bold') # 标题中嵌入皮尔逊r值

# Title with embedded Pearson r value

matplot_axes_array[0].legend(fontsize=10) # 显示图例

# Display legend

matplot_axes_array[0].grid(True, alpha=0.3) # 添加半透明网格线

# Add semi-transparent gridlines

左侧散点图展示了海康威视日收益率(横轴)与成交量变化率(纵轴)之间的分布关系。从图中可以观察到:数据点围绕拟合线呈较为分散的分布,拟合线的正斜率反映了皮尔逊相关系数 \(r = 0.119\) 的正向关联,但大量数据点远离拟合线,直观地印证了这一相关性较弱的统计结论。此外,散点图中成交量变化率的纵向分布范围远大于收益率的横向分布范围,与前述描述性统计中成交量波动率(57.10%)远高于收益率波动率(2.07%)的结论一致。

The left scatter plot displays the distributional relationship between Hikvision’s daily returns (horizontal axis) and volume change rates (vertical axis). From the chart, one can observe that data points are dispersed around the fitted line; the positive slope of the fitted line reflects the positive association of the Pearson correlation coefficient \(r = 0.119\), but many data points lie far from the fitted line, visually confirming the statistical conclusion of a weak correlation. Additionally, the vertical spread of volume change rates in the scatter plot is much larger than the horizontal spread of returns, consistent with the earlier descriptive statistics showing that volume volatility (57.10%) far exceeds return volatility (2.07%).

下面在右侧面板绘制累积时间序列对比图,以观察量价关系的动态演变。

Next, we plot the cumulative time series comparison in the right panel to observe the dynamic evolution of the price-volume relationship.

# ========== 第3步:右图——累积时间序列对比 ==========

# ========== Step 3: Right panel — Cumulative time series comparison ==========

matplot_axes_array[1].plot(range(trading_days_count), np.cumsum(daily_returns_array), linewidth=1.5, label='累积收益率', color='#E3120B') # 绘制累积收益率曲线(红色)

# Plot cumulative return curve (red)

twin_axes_object = matplot_axes_array[1].twinx() # 创建共享x轴的辅助y轴(双纵轴)

# Create a secondary y-axis sharing the same x-axis (dual y-axes)

twin_axes_object.plot(range(trading_days_count), np.cumsum(volume_changes_array), linewidth=1.5, label='累积成交量变化', color='#008080', alpha=0.7) # 绘制累积成交量变化曲线(青色)

# Plot cumulative volume change curve (teal)

matplot_axes_array[1].set_xlabel('交易日', fontsize=12) # 设置x轴标签

# Set x-axis label

matplot_axes_array[1].set_ylabel('累积收益率', fontsize=12, color='#E3120B') # 左y轴标签(红色对应收益率)

# Left y-axis label (red for returns)

twin_axes_object.set_ylabel('累积成交量变化', fontsize=12, color='#008080') # 右y轴标签(青色对应成交量)

# Right y-axis label (teal for volume)

matplot_axes_array[1].set_title('时间序列对比', fontsize=14, fontweight='bold') # 设置子图标题

# Set subplot title

# ========== 第4步:合并双纵轴图例 ==========

# ========== Step 4: Merge dual y-axis legends ==========

plot_lines_primary, plot_labels_primary = matplot_axes_array[1].get_legend_handles_labels() # 获取主y轴的图例句柄和标签

# Get legend handles and labels for the primary y-axis

plot_lines_secondary, plot_labels_secondary = twin_axes_object.get_legend_handles_labels() # 获取辅助y轴的图例句柄和标签

# Get legend handles and labels for the secondary y-axis

matplot_axes_array[1].legend(plot_lines_primary + plot_lines_secondary, plot_labels_primary + plot_labels_secondary, loc='best', fontsize=10) # 合并后显示在最佳位置

# Merge and display at the best position

matplot_axes_array[1].tick_params(axis='y', labelcolor='#E3120B') # 左y轴刻度标签设为红色

# Set left y-axis tick labels to red

twin_axes_object.tick_params(axis='y', labelcolor='#008080') # 右y轴刻度标签设为青色

# Set right y-axis tick labels to teal

matplot_axes_array[1].grid(True, alpha=0.3) # 添加半透明网格线

# Add semi-transparent gridlines

plt.tight_layout() # 自动调整子图间距

# Automatically adjust subplot spacing

plt.show() # 显示图形

# Display the figure<Figure size 672x480 with 0 Axes>图 8.1 的右侧面板展示了累积收益率与累积成交量变化的时间序列对比。从图中可以观察到:累积收益率曲线(红色)在2023年全年呈现先升后降再趋稳的走势,而累积成交量变化曲线(青色)则呈现持续上升的趋势。两条曲线的走势并非严格同步——在某些阶段(如年初),两者方向一致;但在另一些阶段则出现明显分歧。这种不完全同步的动态关系印证了前文皮尔逊相关系数仅为0.12的弱线性关联结论,同时也暗示了量价关系可能具有时变特征,即在不同市场环境下关联强度会发生变化。

The right panel of 图 8.1 displays the time series comparison of cumulative returns and cumulative volume changes. From the chart, one can observe that the cumulative return curve (red) exhibits a pattern of rising first, then declining, and finally stabilizing throughout 2023, while the cumulative volume change curve (teal) shows a persistently upward trend. The trajectories of the two curves are not strictly synchronized—in some phases (such as early in the year), both move in the same direction; but in other phases, they diverge noticeably. This imperfectly synchronized dynamic relationship confirms the earlier conclusion of a weak linear association with a Pearson correlation coefficient of only 0.12, and also suggests that the price-volume relationship may have time-varying characteristics, meaning that the strength of association changes under different market conditions.

8.2.6 其他类型的相关系数 (Other Types of Correlation Coefficients)

除了皮尔逊相关系数,还有其他类型的相关度量:

Besides the Pearson correlation coefficient, there are other types of correlation measures:

1. 斯皮尔曼等级相关系数(Spearman’s ρ)

1. Spearman’s Rank Correlation Coefficient (Spearman’s ρ)

斯皮尔曼相关系数衡量两个变量之间的单调关系(不必线性),其计算基于数据的秩次而非原始值。设 \(d_i\) 为第 \(i\) 个观测值在两个变量上的秩次之差,则:

The Spearman correlation coefficient measures the monotonic relationship (not necessarily linear) between two variables. Its calculation is based on the ranks of the data rather than the raw values. Let \(d_i\) be the difference in ranks of the \(i\)-th observation across the two variables, then:

\[ \rho_s = 1 - \frac{6\sum_{i=1}^{n} d_i^2}{n(n^2-1)} \tag{8.5}\]

对异常值稳健

适用范围更广(有序变量也可)

Robust to outliers

Broader applicability (also applicable to ordinal variables)

2. 肯德尔τ系数(Kendall’s Tau)

2. Kendall’s Tau Coefficient (Kendall’s τ)

肯德尔τ系数基于秩的一致性(concordant)和不一致性(discordant)对数。设 \(C\) 为一致对数,\(D\) 为不一致对数,则:

Kendall’s τ coefficient is based on the number of concordant and discordant pairs of ranks. Let \(C\) be the number of concordant pairs and \(D\) the number of discordant pairs, then:

\[ \tau = \frac{C - D}{\binom{n}{2}} = \frac{C - D}{n(n-1)/2} \tag{8.6}\]

样本量较小时更准确

对异常值稳健

More accurate with small sample sizes

Robust to outliers

选择建议:

- 数据正态且线性关系 → 皮尔逊

- 数据非正态或存在异常值 → 斯皮尔曼或肯德尔

Selection guidelines:

- Data is normally distributed with a linear relationship → Pearson

- Data is non-normal or contains outliers → Spearman or Kendall ## 简单线性回归 (Simple Linear Regression) {#sec-simple-regression}

8.2.7 理论背景 (Theoretical Background)

简单线性回归(Simple Linear Regression)用于建模两个连续变量之间的线性关系。它是所有回归分析的基础,也是理解多元回归、非线性回归的起点。

Simple Linear Regression is used to model the linear relationship between two continuous variables. It is the foundation of all regression analysis and the starting point for understanding multiple regression and nonlinear regression.

模型设定(式 8.7):

Model Specification (式 8.7):

\[ Y_i = \beta_0 + \beta_1 X_i + \varepsilon_i \tag{8.7}\]

其中: - \(Y_i\):因变量(响应变量)的第 \(i\) 个观测值 - \(X_i\):自变量(解释变量)的第 \(i\) 个观测值 - \(\beta_0\):截距项(当 \(X=0\) 时 \(Y\) 的期望值) - \(\beta_1\):斜率系数(\(X\) 每增加1单位,\(Y\) 的期望变化量) - \(\varepsilon_i\):误差项(随机扰动)

Where: - \(Y_i\): The \(i\)-th observation of the dependent (response) variable - \(X_i\): The \(i\)-th observation of the independent (explanatory) variable - \(\beta_0\): The intercept (expected value of \(Y\) when \(X=0\)) - \(\beta_1\): The slope coefficient (expected change in \(Y\) per unit increase in \(X\)) - \(\varepsilon_i\): The error term (random disturbance)

经典假设(Gauss-Markov假设):

Classical Assumptions (Gauss-Markov Assumptions):

线性性:\(Y\) 与 \(X\) 的关系是线性的

外生性:\(E[\varepsilon_i | X_i] = 0\) (误差项条件期望为零)

同方差性:\(\text{Var}(\varepsilon_i | X_i) = \sigma^2\) (误差方差恒定)

无自相关:\(\text{Cov}(\varepsilon_i, \varepsilon_j) = 0\) for \(i \neq j\)

正态性(可选,用于推断):\(\varepsilon_i \sim N(0, \sigma^2)\)

Linearity: The relationship between \(Y\) and \(X\) is linear

Exogeneity: \(E[\varepsilon_i | X_i] = 0\) (the conditional expectation of the error term is zero)

Homoscedasticity: \(\text{Var}(\varepsilon_i | X_i) = \sigma^2\) (constant error variance)

No Autocorrelation: \(\text{Cov}(\varepsilon_i, \varepsilon_j) = 0\) for \(i \neq j\)

Normality (optional, for inference): \(\varepsilon_i \sim N(0, \sigma^2)\)

为什么要这些假设?

Why Are These Assumptions Needed?

线性性:简化模型,便于解释和计算

外生性:确保OLS估计量无偏

同方差性:确保OLS估计量有效(方差最小)

无自相关:确保标准误估计正确

正态性:使得小样本推断(t检验、F检验)有效

Linearity: Simplifies the model, facilitating interpretation and computation

Exogeneity: Ensures the OLS estimator is unbiased

Homoscedasticity: Ensures the OLS estimator is efficient (minimum variance)

No Autocorrelation: Ensures correct standard error estimation

Normality: Enables valid small-sample inference (t-tests, F-tests)

如果某些假设不满足,我们可能需要使用广义最小二乘法(GLS)、稳健标准误或其他方法。

If some assumptions are violated, we may need to use Generalized Least Squares (GLS), robust standard errors, or other methods.

8.2.8 最小二乘估计 (OLS) 的数学与几何 (Mathematics and Geometry of OLS Estimation)

普通最小二乘法 (OLS) 寻找一组参数 \(\beta\),使得预测误差的平方和最小。

Ordinary Least Squares (OLS) seeks a set of parameters \(\beta\) that minimizes the sum of squared prediction errors.

数学推导 (Matrix Calculus): 将模型写成矩阵形式 \(Y = X\beta + \varepsilon\)。残差平方和为:

Mathematical Derivation (Matrix Calculus): Write the model in matrix form \(Y = X\beta + \varepsilon\). The residual sum of squares is:

\[ SSE(\beta) = (Y - X\beta)^T (Y - X\beta) = Y^TY - 2\beta^T X^T Y + \beta^T X^T X \beta \]

对 \(\beta\) 求导并令其为 0:

Taking the derivative with respect to \(\beta\) and setting it to zero:

\[ \frac{\partial SSE}{\partial \beta} = -2X^T Y + 2X^T X \beta = 0 \]

整理得到正规方程 (Normal Equations):

Rearranging yields the Normal Equations:

\[ (X^T X) \beta = X^T Y \]

假设 \(X^T X\) 可逆,得到 OLS 估计量:

Assuming \(X^T X\) is invertible, we obtain the OLS estimator:

\[ \hat{\beta}_{OLS} = (X^T X)^{-1} X^T Y \]

几何解释 (Orthogonal Projection): 想象 \(Y\) 是 \(n\) 维空间中的一个向量。\(X\) 的列向量张成了一个子空间 (Subspace)。 回归问题实际上是寻找子空间中距离 \(Y\) 最近的向量 \(\hat{Y}\)。 根据几何原理,最短距离对应垂线。因此,残差向量 \(\varepsilon = Y - \hat{Y}\) 必须垂直于(正交于) \(X\) 张成的子空间。 这意味着 \(X^T (Y - X\hat{\beta}) = 0\),再次导出了正规方程。

Geometric Interpretation (Orthogonal Projection): Imagine \(Y\) as a vector in \(n\)-dimensional space. The column vectors of \(X\) span a subspace. The regression problem is essentially finding the vector \(\hat{Y}\) in that subspace closest to \(Y\). By geometric principles, the shortest distance corresponds to the perpendicular. Therefore, the residual vector \(\varepsilon = Y - \hat{Y}\) must be perpendicular (orthogonal) to the subspace spanned by \(X\). This means \(X^T (Y - X\hat{\beta}) = 0\), which again leads to the normal equations.

OLS 本质上就是一种正交投影 (Orthogonal Projection),将复杂的高维数据投影到我们能理解的低维模型空间上。

OLS is essentially an orthogonal projection, projecting complex high-dimensional data onto a lower-dimensional model space that we can understand.

斜率和截距的解析解分别如 式 8.8 和 式 8.9 所示:

The analytical solutions for the slope and intercept are shown in 式 8.8 and 式 8.9, respectively:

\[ \hat{\beta}_1 = \frac{\sum_{i=1}^{n}(X_i - \bar{X})(Y_i - \bar{Y})}{\sum_{i=1}^{n}(X_i - \bar{X})^2} \tag{8.8}\]

\[ \hat{\beta}_0 = \bar{Y} - \hat{\beta}_1 \bar{X} \tag{8.9}\]

几何解释: - \(\hat{\beta}_1\) 是协方差与 \(X\) 方差的比值 - 回归线必定通过点 \((\bar{X}, \bar{Y})\) - OLS使残差之和为零:\(\sum_{i=1}^n \hat{\varepsilon}_i = 0\)

Geometric Interpretation: - \(\hat{\beta}_1\) is the ratio of the covariance to the variance of \(X\) - The regression line must pass through the point \((\bar{X}, \bar{Y})\) - OLS ensures the sum of residuals equals zero: \(\sum_{i=1}^n \hat{\varepsilon}_i = 0\)

OLS估计量的性质:

Properties of the OLS Estimator:

在Gauss-Markov假设下,OLS估计量是BLUE: - Best(最小方差) - Linear(线性估计量) - Unbiased(无偏) - Estimator(估计量)

Under the Gauss-Markov assumptions, the OLS estimator is BLUE: - Best (minimum variance) - Linear (a linear estimator) - Unbiased - Estimator

8.2.9 回归模型的质量评估 (Quality Assessment of Regression Models)

8.2.9.1 决定系数 \(R^2\) (Coefficient of Determination \(R^2\))

\[ R^2 = \frac{\text{SSR}}{\text{SST}} = 1 - \frac{\text{SSE}}{\text{SST}} \tag{8.10}\]

其中: - \(\text{SST} = \sum_{i=1}^{n}(Y_i - \bar{Y})^2\) (总平方和) - \(\text{SSR} = \sum_{i=1}^{n}(\hat{Y}_i - \bar{Y})^2\) (回归平方和) - \(\text{SSE} = \sum_{i=1}^{n}(Y_i - \hat{Y}_i)^2\) (残差平方和)

Where: - \(\text{SST} = \sum_{i=1}^{n}(Y_i - \bar{Y})^2\) (Total Sum of Squares) - \(\text{SSR} = \sum_{i=1}^{n}(\hat{Y}_i - \bar{Y})^2\) (Regression Sum of Squares) - \(\text{SSE} = \sum_{i=1}^{n}(Y_i - \hat{Y}_i)^2\) (Error/Residual Sum of Squares)

解释:由 式 10.6 可知,\(R^2\) 表示模型解释的 \(Y\) 变异比例,取值范围 \([0, 1]\)。

Interpretation: As shown in 式 10.6, \(R^2\) represents the proportion of total variation in \(Y\) explained by the model, ranging from \([0, 1]\).

关于 \(R^2\) 的误解

Common Misconceptions About \(R^2\)

误解1:“\(R^2\) 越高,模型越好” - 正确理解:高 \(R^2\) 不一定意味着模型因果正确或预测准确。可能存在过拟合。

Misconception 1: “The higher the \(R^2\), the better the model” - Correct Understanding: A high \(R^2\) does not necessarily mean the model is causally correct or predictively accurate. Overfitting may be present.

误解2:“\(R^2\) 低意味着模型无用” - 正确理解:在社会科学中,\(R^2 = 0.2\) 可能已经很有价值。关键看理论是否合理、系数是否有意义。

Misconception 2: “A low \(R^2\) means the model is useless” - Correct Understanding: In the social sciences, \(R^2 = 0.2\) may already be quite valuable. What matters is whether the theory is sound and the coefficients are meaningful.

误解3:“\(R^2\) 可以直接比较不同模型” - 正确理解:只有当因变量相同时,\(R^2\) 才可比较。对于不同因变量,应使用其他标准(如AIC、BIC)。

Misconception 3: “\(R^2\) can be directly compared across different models” - Correct Understanding: \(R^2\) is only comparable when the dependent variable is the same. For different dependent variables, other criteria (such as AIC, BIC) should be used.

8.2.9.2 回归标准误 (Standard Error of Regression)

\[ s_e = \sqrt{\frac{\text{SSE}}{n-2}} \tag{8.11}\]

解释:由 式 8.11 可知,\(s_e\) 估计误差项的标准差 \(\sigma\),衡量观测值围绕回归线的离散程度。

Interpretation: As shown in 式 8.11, \(s_e\) estimates the standard deviation \(\sigma\) of the error term, measuring the dispersion of observations around the regression line.

8.2.9.3 系数的显著性检验 (Significance Test for Coefficients)

检验斜率系数是否显著不为零(式 8.12):

Testing whether the slope coefficient is significantly different from zero (式 8.12):

\[ t = \frac{\hat{\beta}_1 - 0}{\text{SE}(\hat{\beta}_1)} \tag{8.12}\]

其中标准误如 式 8.13 所定义:

Where the standard error is defined in 式 8.13:

\[ \text{SE}(\hat{\beta}_1) = \frac{s_e}{\sqrt{\sum_{i=1}^{n}(X_i - \bar{X})^2}} \tag{8.13}\]

\(t\) 统计量服从自由度为 \(n-2\) 的t分布。

The \(t\) statistic follows a t-distribution with \(n-2\) degrees of freedom.

回归系数的置信区间(式 8.14):

Confidence interval for the regression coefficient (式 8.14):

\[ \hat{\beta}_1 \pm t_{\alpha/2, n-2} \cdot \text{SE}(\hat{\beta}_1) \tag{8.14}\]

8.2.10 残差诊断 (Residual Diagnostics)

回归模型的可靠性取决于假设是否满足。残差分析是检验假设的重要工具。

The reliability of a regression model depends on whether its assumptions are satisfied. Residual analysis is an important tool for checking these assumptions.

1. 线性性检验 - 绘制残差 vs. 拟合值图 - 如果呈现随机散布,线性假设合理 - 如果呈现系统模式(如U型),可能需要非线性项

1. Linearity Check - Plot residuals vs. fitted values - If the pattern is random scatter, the linearity assumption is reasonable - If a systematic pattern appears (e.g., U-shaped), nonlinear terms may be needed

2. 同方差性检验 - 绘制残差 vs. 拟合值图 - 如果残差扩散程度恒定,同方差假设满足 - 如果呈现漏斗状,存在异方差

2. Homoscedasticity Check - Plot residuals vs. fitted values - If the spread of residuals is constant, the homoscedasticity assumption holds - If a funnel shape appears, heteroscedasticity is present

3. 正态性检验 - 绘制残差的QQ图(Q-Q Plot) - 如果点近似落在对角线上,正态假设合理 - 或使用Shapiro-Wilk检验

3. Normality Check - Plot a Q-Q plot of the residuals - If points approximately fall on the diagonal line, the normality assumption is reasonable - Alternatively, use the Shapiro-Wilk test

4. 独立性检验 - 绘制残差的时间序列图 - 或使用Durbin-Watson检验

4. Independence Check - Plot a time series plot of the residuals - Or use the Durbin-Watson test

8.3 从理论到实践:苦活累活 (From Theory to Practice: The “Dirty Work”)

在教科书中,线性回归是完美的。但在现实世界(尤其是金融市场)中,它充满了陷阱。

In textbooks, linear regression is perfect. But in the real world (especially in financial markets), it is full of pitfalls.

8.3.1 伪回归 (Spurious Regression)

假设你让两个醉汉在街上随机游荡(随机游走),记录他们的路径。你会惊讶地发现,他们的路径之间往往有”显著”的相关性(\(R^2 > 0.8\))!

Suppose you let two drunkards wander randomly on the street (random walks) and record their paths. You would be surprised to find that their paths often show “significant” correlation (\(R^2 > 0.8\))!

原理:这是时间序列分析中的经典陷阱。当两个时间序列都是非平稳(Non-stationary)的(如股价、GDP),它们随时间都有共同的趋势。

后果:直接回归会导致荒谬的结论。Granger 和 Newbold (1974) 证明了这一点。

对策:对数据进行差分(Differencing),即使用”收益率”而不是”价格”进行回归。

Mechanism: This is a classic trap in time series analysis. When two time series are both non-stationary (e.g., stock prices, GDP), they share a common trend over time.

Consequence: Direct regression leads to absurd conclusions. Granger and Newbold (1974) demonstrated this.

Remedy: Difference the data—that is, regress on “returns” rather than “prices.”

8.3.2 异方差与稳健标准误 (Heteroscedasticity and Robust Standard Errors)

在理想的 OLS 世界里,每个样本的误差方差都一样 (\(\text{Var}(\varepsilon_i) = \sigma^2\))。但在现实(尤其是金融数据)中,大公司的营收波动(方差)通常远大于小公司。

In the ideal OLS world, the error variance is the same for every observation (\(\text{Var}(\varepsilon_i) = \sigma^2\)). But in reality (especially with financial data), the revenue volatility (variance) of large firms is typically much greater than that of small firms.

异方差 (Heteroscedasticity):会导致 OLS 估计量依然无偏,但标准误 (\(SE\)) 失效,t 检验结果不可信。

解决方案:在 Python

statsmodels中,永远不要吝啬使用cov_type='HC3'或HC1。这会计算异方差稳健标准误 (Heteroscedasticity-Robust Standard Errors, White’s SE)。它就像给 t 检验穿上了一层防弹衣,即使存在异方差,推断依然有效。Heteroscedasticity: The OLS estimator remains unbiased, but the standard errors (\(SE\)) become invalid, making t-test results unreliable.

Solution: In Python’s

statsmodels, never hesitate to usecov_type='HC3'orHC1. This computes Heteroscedasticity-Robust Standard Errors (White’s SE). It is like putting a bulletproof vest on your t-tests—even when heteroscedasticity is present, inference remains valid.

8.3.3 案例:资产与营收关系(含稳健标准误) (Case Study: Asset–Revenue Relationship with Robust Standard Errors)

什么是企业规模与营收的回归分析?

What Is a Regression Analysis of Firm Size and Revenue?

公司的总资产规模与其营业收入之间通常存在正向关系:规模越大的企业,往往拥有更强的市场覆盖能力和更高的营收。但这种关系的强度如何?我们能否用总资产来预测营收?这对于企业估值、行业对标和投资分析都有实际意义。

There is typically a positive relationship between a company’s total asset size and its operating revenue: larger firms tend to have stronger market coverage and higher revenues. But how strong is this relationship? Can we use total assets to predict revenue? This has practical significance for corporate valuation, industry benchmarking, and investment analysis.

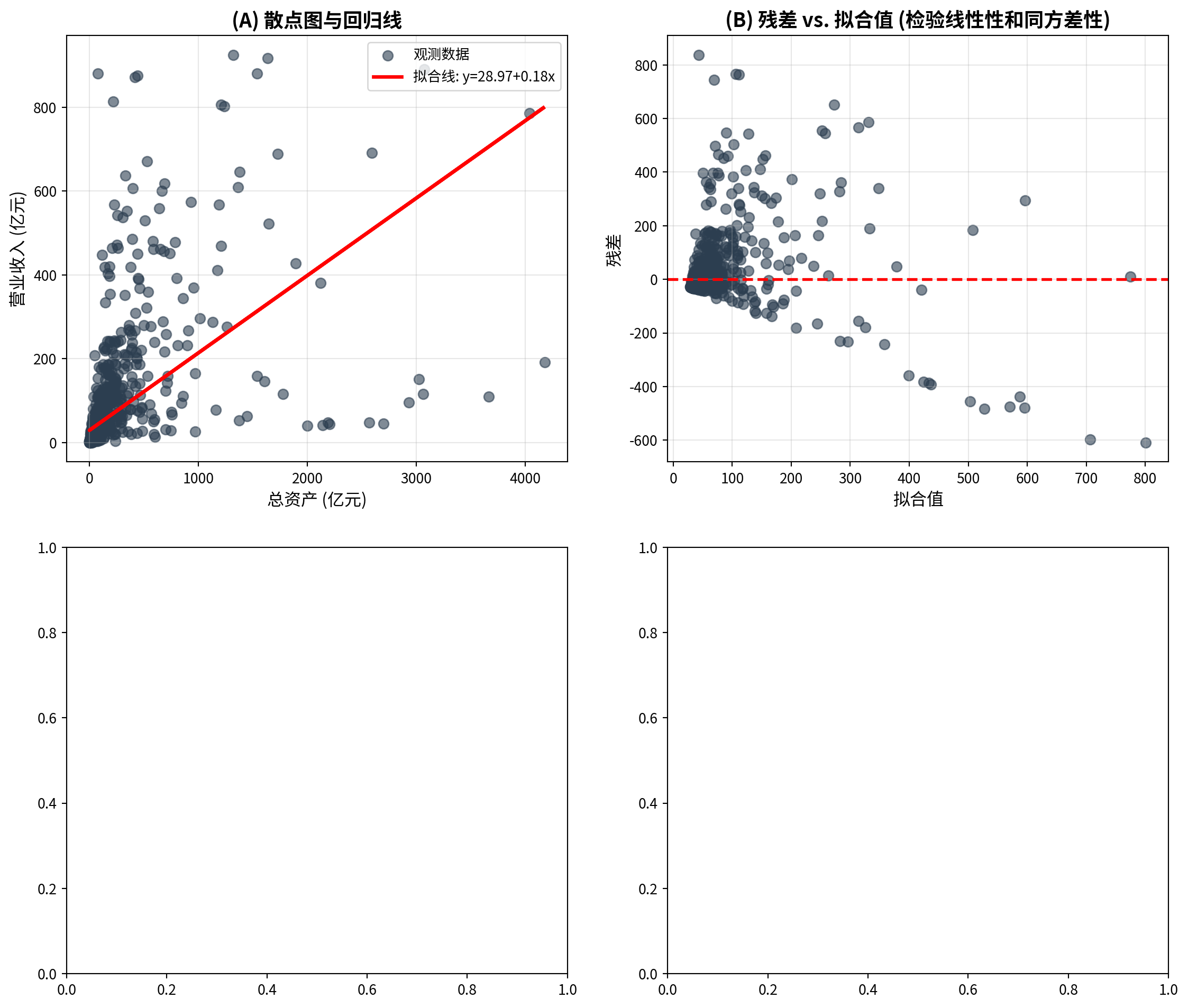

简单线性回归是探索两个定量变量之间线性关系的基础工具。但在实际财务数据中,不同规模企业的误差方差往往不等(异方差问题),这会导致普通OLS的标准误失效。因此,我们使用异方差稳健标准误(HC3)来保证推断的可靠性。下面分析长三角地区上市公司总资产与营业收入的关系,回归结果如 图 8.2 所示。

Simple linear regression is the fundamental tool for exploring the linear relationship between two quantitative variables. However, in real financial data, the error variance often differs across firms of different sizes (the heteroscedasticity problem), which invalidates the standard errors from ordinary OLS. Therefore, we use heteroscedasticity-robust standard errors (HC3) to ensure reliable inference. Below we analyze the relationship between total assets and operating revenue for listed companies in the Yangtze River Delta region; the regression results are shown in 图 8.2.

# ========== 导入所需库 ==========

# ========== Import required libraries ==========

import numpy as np # 数值计算库

# NumPy library for numerical computation

import pandas as pd # 数据处理与分析库

# Pandas library for data manipulation and analysis

import matplotlib.pyplot as plt # 导入matplotlib绘图库

# Import matplotlib plotting library

from sklearn.linear_model import LinearRegression # scikit-learn线性回归模型

# Linear regression model from scikit-learn

from scipy import stats # 统计分布和检验函数

# Statistical distributions and test functions from SciPy

import platform # 系统平台检测库

# Platform detection library

# ========== 第1步:设置本地数据路径 ==========

# ========== Step 1: Set local data path ==========

if platform.system() == 'Windows': # 判断当前操作系统是否为Windows

# Check if the current OS is Windows

data_path = 'C:/qiufei/data/stock' # Windows平台下的股票数据路径

# Stock data path on Windows

else: # 否则为Linux平台

# Otherwise it is Linux

data_path = '/home/ubuntu/r2_data_mount/qiufei/data/stock' # Linux平台下的股票数据路径

# Stock data path on Linux

# ========== 第2步:读取本地财务报表和公司基本信息数据 ==========

# ========== Step 2: Load local financial statement and company basic info data ==========

financial_statement_dataframe = pd.read_hdf(f'{data_path}/financial_statement.h5') # 读取上市公司财务报表数据

# Read listed company financial statement data

stock_basic_info_dataframe = pd.read_hdf(f'{data_path}/stock_basic_data.h5') # 读取上市公司基本信息数据

# Read listed company basic information data财务报表和公司基本信息数据加载完毕。下面筛选长三角地区上市公司并准备回归分析数据。

Financial statement and company basic information data have been loaded. Next, we filter listed companies in the Yangtze River Delta region and prepare the data for regression analysis.

# ========== 第3步:筛选长三角地区上市公司 ==========

# ========== Step 3: Filter listed companies in the YRD region ==========

yrd_provinces_list = ['上海市', '浙江省', '江苏省'] # 定义长三角三省市列表

# Define the list of three YRD provinces/municipalities

yrd_stock_codes_list = stock_basic_info_dataframe[stock_basic_info_dataframe['province'].isin(yrd_provinces_list)]['order_book_id'].tolist() # 提取长三角公司股票代码列表

# Extract the list of stock codes for YRD companies

# ========== 第4步:筛选最新年报数据 ==========

# ========== Step 4: Filter the latest annual report data ==========

yrd_financial_dataframe = financial_statement_dataframe[financial_statement_dataframe['order_book_id'].isin(yrd_stock_codes_list)].copy() # 筛选长三角公司的财务数据

# Filter financial data for YRD companies

yrd_financial_dataframe = yrd_financial_dataframe[yrd_financial_dataframe['quarter'].str.endswith('q4')] # 仅保留第四季度年报数据

# Keep only Q4 annual report data

yrd_financial_dataframe = yrd_financial_dataframe.sort_values('quarter', ascending=False) # 按季度降序排列(最新在前)

# Sort by quarter in descending order (latest first)

yrd_financial_dataframe = yrd_financial_dataframe.drop_duplicates(subset='order_book_id', keep='first') # 每家公司保留最新年报

# Keep only the latest annual report for each company

# ========== 第5步:提取总资产和营业收入并转换单位 ==========

# ========== Step 5: Extract total assets and revenue, convert units ==========

yrd_financial_dataframe = yrd_financial_dataframe[['order_book_id', 'total_assets', 'revenue']].dropna() # 提取关键字段并删除缺失值

# Extract key fields and drop missing values

yrd_financial_dataframe['total_assets_billion'] = yrd_financial_dataframe['total_assets'] / 1e8 # 将总资产从元转换为亿元

# Convert total assets from CNY to hundred millions (yi yuan)

yrd_financial_dataframe['revenue_billion'] = yrd_financial_dataframe['revenue'] / 1e8 # 将营业收入从元转换为亿元

# Convert revenue from CNY to hundred millions (yi yuan)

# ========== 第6步:过滤极端值 ==========

# ========== Step 6: Filter extreme values ==========

yrd_financial_dataframe = yrd_financial_dataframe[(yrd_financial_dataframe['total_assets_billion'] > 1) & (yrd_financial_dataframe['total_assets_billion'] < 5000)] # 总资产筛选范围:1~5000亿元

# Total assets filter range: 1–5000 hundred million yuan

yrd_financial_dataframe = yrd_financial_dataframe[(yrd_financial_dataframe['revenue_billion'] > 0) & (yrd_financial_dataframe['revenue_billion'] < 1000)] # 营业收入筛选范围:>0且<1000亿元

# Revenue filter range: >0 and <1000 hundred million yuan

# ========== 第7步:拟合OLS线性回归模型 ==========

# ========== Step 7: Fit OLS linear regression model ==========

total_assets_billion_array = yrd_financial_dataframe['total_assets_billion'].values # 提取总资产为NumPy数组(自变量X)

# Extract total assets as NumPy array (independent variable X)

revenue_billion_array = yrd_financial_dataframe['revenue_billion'].values # 提取营业收入为NumPy数组(因变量Y)

# Extract revenue as NumPy array (dependent variable Y)

independent_variable_matrix = total_assets_billion_array.reshape(-1, 1) # 将自变量转为列向量(sklearn要求二维输入)

# Reshape independent variable to column vector (sklearn requires 2D input)

dependent_variable_array = revenue_billion_array # 因变量为一维数组

# Dependent variable as a 1D array数据准备完成后,下面我们拟合OLS线性回归模型,计算截距、斜率、决定系数 \(R^2\)、系数的t统计量和置信区间等回归统计量,并对模型结果进行经济学解释。

After data preparation, we now fit the OLS linear regression model, compute the intercept, slope, coefficient of determination \(R^2\), t-statistics and confidence intervals for the coefficients, and provide economic interpretation of the model results.

linear_regression_model = LinearRegression() # 实例化线性回归模型

# Instantiate the linear regression model

linear_regression_model.fit(independent_variable_matrix, dependent_variable_array) # 拟合模型:最小化残差平方和

# Fit the model: minimize the residual sum of squares

estimated_intercept_beta0 = linear_regression_model.intercept_ # 提取截距估计值β₀

# Extract the estimated intercept β₀

estimated_slope_beta1 = linear_regression_model.coef_[0] # 提取斜率估计值β₁

# Extract the estimated slope β₁

predicted_revenue_array = linear_regression_model.predict(independent_variable_matrix) # 计算拟合值(预测营业收入)

# Compute fitted values (predicted revenue)

regression_residuals_array = dependent_variable_array - predicted_revenue_array # 计算残差 = 实际值 - 拟合值

# Compute residuals = actual values - fitted values

# ========== 第8步:计算回归统计量 ==========

# ========== Step 8: Compute regression statistics ==========

sample_size_count = len(dependent_variable_array) # 样本量

# Sample size

mean_revenue_value = np.mean(dependent_variable_array) # 因变量(营业收入)均值

# Mean of the dependent variable (revenue)

total_sum_of_squares = np.sum((dependent_variable_array - mean_revenue_value)**2) # SST:总平方和,衡量Y的总离散程度

# SST: Total Sum of Squares, measuring total dispersion of Y

error_sum_of_squares = np.sum(regression_residuals_array**2) # SSE:残差平方和,衡量模型未解释的离散

# SSE: Error Sum of Squares, measuring unexplained dispersion

regression_sum_of_squares = np.sum((predicted_revenue_array - mean_revenue_value)**2) # SSR:回归平方和,衡量模型解释的离散

# SSR: Regression Sum of Squares, measuring explained dispersion

# 计算决定系数R²

# Compute the coefficient of determination R²

r_squared_value = 1 - error_sum_of_squares / total_sum_of_squares # R² = 1 - SSE/SST,衡量模型拟合优度

# R² = 1 - SSE/SST, measuring goodness of fit

# 计算回归标准误

# Compute the regression standard error

regression_standard_error = np.sqrt(error_sum_of_squares / (sample_size_count - 2)) # 回归标准误 = sqrt(SSE/(n-2)),估计σ

# Regression standard error = sqrt(SSE/(n-2)), estimating σ回归统计量(SST、SSE、SSR、R²、标准误)计算完毕。下面计算系数的标准误、t统计量和置信区间。

The regression statistics (SST, SSE, SSR, R², standard error) have been computed. Next we compute the standard errors, t-statistics, and confidence intervals for the coefficients.

# ========== 第9步:计算系数标准误、t统计量和置信区间 ==========

# ========== Step 9: Compute coefficient standard errors, t-statistics, and confidence intervals ==========

standard_error_beta1 = regression_standard_error / np.sqrt(np.sum((total_assets_billion_array - np.mean(total_assets_billion_array))**2)) # β₁的标准误 = s_e / sqrt(Σ(Xi-X̄)²)

# Standard error of β₁ = s_e / sqrt(Σ(Xi-X̄)²)

standard_error_beta0 = regression_standard_error * np.sqrt(1/sample_size_count + (np.mean(total_assets_billion_array)**2) / np.sum((total_assets_billion_array - np.mean(total_assets_billion_array))**2)) # β₀的标准误

# Standard error of β₀

# 计算t统计量和双侧p值

# Compute t-statistics and two-sided p-values

t_statistic_beta1 = estimated_slope_beta1 / standard_error_beta1 # β₁的t统计量 = β̂₁/SE(β̂₁)

# t-statistic of β₁ = β̂₁/SE(β̂₁)

p_value_beta1 = 2 * (1 - stats.t.cdf(abs(t_statistic_beta1), df=sample_size_count-2)) # β₁的双侧p值

# Two-sided p-value of β₁

t_statistic_beta0 = estimated_intercept_beta0 / standard_error_beta0 # β₀的t统计量 = β̂₀/SE(β̂₀)

# t-statistic of β₀ = β̂₀/SE(β̂₀)

p_value_beta0 = 2 * (1 - stats.t.cdf(abs(t_statistic_beta0), df=sample_size_count-2)) # β₀的双侧p值

# Two-sided p-value of β₀

# 计算95%置信区间

# Compute 95% confidence intervals

critical_t_value = stats.t.ppf(0.975, df=sample_size_count-2) # 求t分布97.5%分位数(双侧α=0.05的临界值)

# 97.5th percentile of the t-distribution (critical value for two-sided α=0.05)

confidence_interval_beta1 = (estimated_slope_beta1 - critical_t_value * standard_error_beta1, estimated_slope_beta1 + critical_t_value * standard_error_beta1) # β₁的95%置信区间

# 95% confidence interval of β₁

confidence_interval_beta0 = (estimated_intercept_beta0 - critical_t_value * standard_error_beta0, estimated_intercept_beta0 + critical_t_value * standard_error_beta0) # β₀的95%置信区间

# 95% confidence interval of β₀完成回归统计量计算和系数检验后,我们输出完整的回归分析结果及其经济学解释:

After completing the regression statistics and coefficient tests, we output the full regression results along with their economic interpretation:

# ========== 第10步:输出描述性统计与回归结果 ==========

# ========== Step 10: Output descriptive statistics and regression results ==========

print('=' * 60) # 分隔线

# Separator line

print('长三角上市公司总资产与营业收入线性回归分析') # 标题

# Title

print('=' * 60) # 分隔线

# Separator line

print('\n描述性统计:') # 描述性统计标题

# Descriptive statistics header

print(f' 样本量: {sample_size_count}') # 输出样本量

# Print sample size

print(f' 总资产 - 均值: {np.mean(total_assets_billion_array):.2f} 亿元, 标准差: {np.std(total_assets_billion_array, ddof=1):.2f} 亿元') # 总资产的均值和标准差

# Mean and std dev of total assets

print(f' 营业收入 - 均值: {np.mean(revenue_billion_array):.2f} 亿元, 标准差: {np.std(revenue_billion_array, ddof=1):.2f} 亿元') # 营业收入的均值和标准差

# Mean and std dev of revenue

print('\n' + '=' * 60) # 分隔线

# Separator line

print('回归结果') # 回归结果标题

# Regression results header

print('=' * 60) # 分隔线

# Separator line

print(f'\n拟合方程:') # 拟合方程标题

# Fitted equation header

print(f' 营业收入 = {estimated_intercept_beta0:.2f} + {estimated_slope_beta1:.2f} × 总资产') # 输出回归方程

# Print the regression equation

print(f'\n截距 (β₀):') # 截距部分标题

# Intercept section header

print(f' 估计值: {estimated_intercept_beta0:.2f} 亿元') # 截距估计值

# Estimated intercept value

print(f' 标准误: {standard_error_beta0:.2f}') # 截距标准误

# Standard error of intercept

print(f' t统计量: {t_statistic_beta0:.4f}') # 截距t统计量

# t-statistic of intercept

print(f' p值: {p_value_beta0:.6f}') # 截距p值

# p-value of intercept

print(f' 95% CI: [{confidence_interval_beta0[0]:.2f}, {confidence_interval_beta0[1]:.2f}]') # 截距95%置信区间

# 95% confidence interval of intercept============================================================

长三角上市公司总资产与营业收入线性回归分析

============================================================

描述性统计:

样本量: 1833

总资产 - 均值: 117.43 亿元, 标准差: 320.05 亿元

营业收入 - 均值: 50.67 亿元, 标准差: 108.51 亿元

============================================================

回归结果

============================================================

拟合方程:

营业收入 = 28.97 + 0.18 × 总资产

截距 (β₀):

估计值: 28.97 亿元

标准误: 2.26

t统计量: 12.7948

p值: 0.000000

95% CI: [24.53, 33.41]回归分析基于1833家长三角上市公司的数据(总资产均值117.43亿元、标准差320.05亿元;营业收入均值50.67亿元、标准差108.51亿元),拟合方程为:营业收入 = 28.97 + 0.18 × 总资产。截距 \(\hat{\beta}_0 = 28.97\) 亿元,t统计量为12.7948,p值接近于零(0.000000),在任何常用显著性水平下均高度显著,95%置信区间为[24.53, 33.41]亿元。截距的经济含义是:当总资产为零时,营业收入的预期值约为29亿元——虽然”零资产”在实际中不存在,但截距保证了回归线在数据范围内的拟合精度。

The regression analysis is based on data from 1,833 listed companies in the Yangtze River Delta (mean total assets: 117.43 hundred million yuan, std dev: 320.05; mean revenue: 50.67 hundred million yuan, std dev: 108.51). The fitted equation is: Revenue = 28.97 + 0.18 × Total Assets. The intercept \(\hat{\beta}_0 = 28.97\) hundred million yuan, with a t-statistic of 12.7948 and a p-value close to zero (0.000000), is highly significant at any conventional significance level, with a 95% confidence interval of [24.53, 33.41]. The economic meaning of the intercept is: when total assets are zero, the expected revenue is approximately 29 hundred million yuan—although “zero assets” does not exist in practice, the intercept ensures the accuracy of the regression line’s fit within the data range.

下面输出斜率系数的估计与推断结果及模型拟合度。

Below we output the estimation and inference results for the slope coefficient and the model’s goodness of fit.

print(f'\n斜率 (β₁):') # 输出斜率参数标题

# Slope parameter header

print(f' 估计值: {estimated_slope_beta1:.4f} (每亿元资产带来的营业收入)') # 输出斜率估计值及经济含义

# Estimated slope value and economic meaning

print(f' 标准误: {standard_error_beta1:.4f}') # 输出斜率标准误差

# Standard error of slope

print(f' t统计量: {t_statistic_beta1:.4f}') # 输出斜率t检验统计量

# t-statistic of slope

print(f' p值: {p_value_beta1:.6f}') # 斜率p值

# p-value of slope

print(f' 95% CI: [{confidence_interval_beta1[0]:.4f}, {confidence_interval_beta1[1]:.4f}]') # 斜率95%置信区间

# 95% confidence interval of slope

print(f'\n模型拟合度:') # 模型拟合度标题

# Model goodness of fit header

print(f' R²: {r_squared_value:.4f}') # 输出R²(决定系数)

# Print R² (coefficient of determination)

print(f' 回归标准误: {regression_standard_error:.2f} 亿元') # 输出回归标准误

# Print regression standard error

print(f' 解释: 总资产解释了营业收入变异的{r_squared_value*100:.1f}%') # R²的直观解释

# Interpretation: total assets explain this percentage of revenue variation

斜率 (β₁):

估计值: 0.1848 (每亿元资产带来的营业收入)

标准误: 0.0066

t统计量: 27.8089

p值: 0.000000

95% CI: [0.1717, 0.1978]

模型拟合度:

R²: 0.2969

回归标准误: 91.01 亿元

解释: 总资产解释了营业收入变异的29.7%斜率系数 \(\hat{\beta}_1 = 0.1848\),标准误为0.0066,t统计量高达27.8089,p值为0.000000,95%置信区间为[0.1717, 0.1978]——斜率在统计上高度显著。其经济含义是:长三角地区上市公司的总资产每增加1亿元,营业收入平均增加约0.18亿元(即1848万元),或者说每100亿元的资产规模增长对应约18.48亿元的营业收入增量。模型的决定系数 \(R^2 = 0.2969\),表明总资产规模仅解释了营业收入变异的29.7%,回归标准误为91.01亿元。这一 \(R^2\) 值提示:虽然资产规模是影响营业收入的重要因素,但行业差异、经营效率、市场竞争等其他因素同样重要,实际应用中应考虑多元回归模型。

The slope coefficient \(\hat{\beta}_1 = 0.1848\), with a standard error of 0.0066, a t-statistic as high as 27.8089, a p-value of 0.000000, and a 95% confidence interval of [0.1717, 0.1978]—the slope is highly statistically significant. Its economic meaning is: for every additional 100 million yuan in total assets among YRD-listed companies, operating revenue increases by approximately 0.18 hundred million yuan (i.e., 18.48 million yuan), or equivalently, every 10 billion yuan increase in asset size corresponds to an increase of approximately 1.848 billion yuan in revenue. The coefficient of determination \(R^2 = 0.2969\), indicating that total asset size explains only 29.7% of the variation in operating revenue, with a regression standard error of 91.01 hundred million yuan. This \(R^2\) value suggests that although asset size is an important factor affecting revenue, other factors such as industry differences, operational efficiency, and market competition are equally important, and a multiple regression model should be considered in practice.

下面从经济学角度解释回归结果的实际意义,包括边际效应、模型预测和局限性分析。

Below we interpret the practical significance of the regression results from an economic perspective, including marginal effects, model predictions, and limitations analysis.

# ========== 第11步:输出实际意义解释 ==========

# ========== Step 11: Output practical significance interpretation ==========

print('\n' + '=' * 60) # 分隔线

# Separator line

print('实际意义解释') # 实际意义标题

# Practical significance header

print('=' * 60) # 分隔线

# Separator line

print(f'\n1. 斜率解释:') # 斜率经济含义

# Slope economic meaning

print(f' 总资产每增加1亿元,营业收入平均增加{estimated_slope_beta1:.4f}亿元') # 边际效应解读

# Marginal effect interpretation

print(f' 95%置信区间为[{confidence_interval_beta1[0]:.4f}, {confidence_interval_beta1[1]:.4f}]亿元') # 斜率区间估计

# Slope interval estimate

# ========== 第12步:模型预测与局限性说明 ==========

# ========== Step 12: Model prediction and limitations ==========

print(f'\n2. 模型预测:') # 模型预测标题

# Model prediction header

example_asset_billion = 100 # 设定一个示例总资产值(100亿元)

# Set an example total asset value (10 billion yuan)

example_predicted_revenue = estimated_intercept_beta0 + estimated_slope_beta1 * example_asset_billion # 代入回归方程计算预测值

# Substitute into the regression equation to compute the predicted value

print(f' 预测:总资产{example_asset_billion}亿元的公司,营业收入约为{example_predicted_revenue:.2f}亿元') # 输出点预测结果

# Print point prediction result

print(f'\n3. 模型局限性:') # 模型局限性标题

# Model limitations header

print(f' - R² = {r_squared_value:.4f},说明总资产只能解释部分营业收入变异') # R²不高说明还有其他影响因素

# R² is not high, indicating other factors affect revenue

print(f' - 其他重要因素:行业、经营效率、市场环境等') # 遗漏变量提示

# Omitted variable reminder

print(f' - 实际应用中应使用多元回归模型') # 扩展建议:多元回归

# Extension suggestion: multiple regression

============================================================

实际意义解释

============================================================

1. 斜率解释:

总资产每增加1亿元,营业收入平均增加0.1848亿元

95%置信区间为[0.1717, 0.1978]亿元

2. 模型预测:

预测:总资产100亿元的公司,营业收入约为47.45亿元

3. 模型局限性:

- R² = 0.2969,说明总资产只能解释部分营业收入变异

- 其他重要因素:行业、经营效率、市场环境等

- 实际应用中应使用多元回归模型8.3.4 回归诊断可视化 (Regression Diagnostics Visualization)

图 8.3 展示了回归模型的四幅诊断图。

图 8.3 presents four diagnostic plots for the regression model.

# ========== 第1步:创建2×2子图画布 ==========

# ========== Step 1: Create a 2×2 subplot canvas ==========

matplot_figure, matplot_axes_array = plt.subplots(2, 2, figsize=(14, 12)) # 创建2行2列的子图网格

# Create a 2-row, 2-column subplot grid

# ========== 第2步:面板A——散点图与回归线 ==========

# ========== Step 2: Panel A — Scatter plot with regression line ==========

matplot_axes_array[0, 0].scatter(total_assets_billion_array, revenue_billion_array, alpha=0.6, s=50, color='#2C3E50', label='观测数据') # 绘制散点图(总资产 vs 营业收入)

# Plot scatter (total assets vs revenue)

matplot_axes_array[0, 0].plot(total_assets_billion_array, predicted_revenue_array, 'r-', linewidth=2.5, label=f'拟合线: y={estimated_intercept_beta0:.2f}+{estimated_slope_beta1:.2f}x') # 叠加OLS回归线

# Overlay the OLS regression line

matplot_axes_array[0, 0].set_xlabel('总资产 (亿元)', fontsize=12) # x轴标签:总资产

# x-axis label: Total Assets

matplot_axes_array[0, 0].set_ylabel('营业收入 (亿元)', fontsize=12) # y轴标签:营业收入

# y-axis label: Revenue

matplot_axes_array[0, 0].set_title('(A) 散点图与回归线', fontsize=14, fontweight='bold') # 面板标题

# Panel title

matplot_axes_array[0, 0].legend(fontsize=10) # 显示图例

# Display legend

matplot_axes_array[0, 0].grid(True, alpha=0.3) # 添加网格线

# Add gridlines

# ========== 第3步:面板B——残差vs拟合值图(检验线性性和同方差性) ==========

# ========== Step 3: Panel B — Residuals vs. fitted values (testing linearity and homoscedasticity) ==========

matplot_axes_array[0, 1].scatter(predicted_revenue_array, regression_residuals_array, alpha=0.6, s=50, color='#2C3E50') # 绘制残差散点图

# Plot residual scatter

matplot_axes_array[0, 1].axhline(0, color='red', linestyle='--', linewidth=2) # 添加y=0参考线(残差应围绕0分布)

# Add y=0 reference line (residuals should be centered around 0)

matplot_axes_array[0, 1].set_xlabel('拟合值', fontsize=12) # x轴标签:模型拟合值

# x-axis label: Fitted values

matplot_axes_array[0, 1].set_ylabel('残差', fontsize=12) # y轴标签:残差

# y-axis label: Residuals

matplot_axes_array[0, 1].set_title('(B) 残差 vs. 拟合值 (检验线性性和同方差性)', fontsize=14, fontweight='bold') # 面板标题

# Panel title

matplot_axes_array[0, 1].grid(True, alpha=0.3) # 添加网格线

# Add gridlines

面板A展示了1833家长三角上市公司总资产与营业收入的散点图及OLS回归线,可以看到数据点在低总资产区域高度集中,而在高总资产区域稀疏分布,呈现明显的右偏特征。面板B的残差与拟合值图揭示了重要的诊断信息:残差在低拟合值处集中且方差较小,随着拟合值增大,残差的散布范围明显增大,呈现”喇叭形”扩散模式——这是异方差性(heteroscedasticity)的典型表现,意味着同方差假设可能不满足。此外,残差分布的不对称性提示数据中可能存在需要关注的非线性成分。

Panel A shows the scatter plot and OLS regression line for total assets versus operating revenue of 1,833 YRD-listed companies. The data points are highly concentrated in the low total asset region and sparsely distributed in the high total asset region, exhibiting a clear right-skewed pattern. The residuals-vs.-fitted-values plot in Panel B reveals important diagnostic information: residuals are concentrated with small variance at low fitted values, and the spread of residuals clearly increases as fitted values grow, displaying a “trumpet-shaped” expansion pattern — this is a typical manifestation of heteroscedasticity, indicating that the homoscedasticity assumption may not hold. Furthermore, the asymmetry in the residual distribution suggests that nonlinear components in the data may require attention.

下面绘制面板C(QQ图)和面板D(残差直方图),进一步检验正态性假设。

Below we plot Panel C (Q-Q plot) and Panel D (residual histogram) to further test the normality assumption.

# ========== 第4步:面板C——QQ图(检验残差正态性) ==========

# ========== Step 4: Panel C — Q-Q plot (testing residual normality) ==========

stats.probplot(regression_residuals_array, dist='norm', plot=matplot_axes_array[1, 0]) # 生成正态QQ图

# Generate normal Q-Q plot

matplot_axes_array[1, 0].set_title('(C) 残差QQ图 (检验正态性)', fontsize=14, fontweight='bold') # 面板标题

# Panel title

matplot_axes_array[1, 0].grid(True, alpha=0.3) # 添加网格线

# Add gridlines

# ========== 第5步:面板D——残差直方图(观察残差分布形态) ==========

# ========== Step 5: Panel D — Residual histogram (observing residual distribution shape) ==========

matplot_axes_array[1, 1].hist(regression_residuals_array, bins=20, color='#008080', alpha=0.7, edgecolor='black') # 绘制残差直方图

# Plot residual histogram

matplot_axes_array[1, 1].axvline(0, color='red', linestyle='--', linewidth=2) # 添加x=0参考线

# Add x=0 reference line

matplot_axes_array[1, 1].set_xlabel('残差', fontsize=12) # x轴标签:残差

# x-axis label: Residuals

matplot_axes_array[1, 1].set_ylabel('频数', fontsize=12) # y轴标签:频数

# y-axis label: Frequency

matplot_axes_array[1, 1].set_title('(D) 残差分布直方图', fontsize=14, fontweight='bold') # 面板标题

# Panel title

matplot_axes_array[1, 1].grid(True, alpha=0.3, axis='y') # 仅在y轴方向添加网格线

# Add gridlines on y-axis only

plt.tight_layout() # 自动调整子图间距

# Automatically adjust subplot spacing

plt.show() # 显示图形

# Display the figure<Figure size 672x480 with 0 Axes>图 8.3 的四幅诊断图提供了模型假设检验的综合视角。面板C的QQ图显示残差在两端明显偏离45度对角线,尤其是右尾出现严重上翘,说明残差分布具有”厚尾”特征,正态性假设不能成立。面板D的残差直方图进一步证实了这一判断:直方图呈现显著的右偏形态,峰度高于正态分布(尖峰),且存在较多的正向极端残差。综合四幅诊断图的发现:(1) 异方差性明显(面板B的喇叭形);(2) 正态性不满足(面板C的尾部偏离和面板D的右偏分布)。这些问题提示:在对企业财务数据进行回归分析时,可能需要对数据进行对数变换,或使用稳健标准误(HC标准误)来获得可靠的统计推断。

The four diagnostic plots in 图 8.3 provide a comprehensive perspective on checking model assumptions. The Q-Q plot in Panel C shows that the residuals deviate markedly from the 45-degree diagonal at both tails, especially with severe upward curvature in the right tail, indicating that the residual distribution has “heavy tail” characteristics and the normality assumption cannot be sustained. The residual histogram in Panel D further confirms this finding: the histogram exhibits a pronounced right-skewed shape, with kurtosis higher than the normal distribution (leptokurtic), and a substantial number of large positive residuals. Combining the findings from all four diagnostic plots: (1) heteroscedasticity is evident (the trumpet shape in Panel B); (2) normality is not satisfied (the tail deviation in Panel C and the right-skewed distribution in Panel D). These issues suggest that when performing regression analysis on corporate financial data, a logarithmic transformation of the data may be needed, or robust standard errors (HC standard errors) should be used to obtain reliable statistical inference.

8.4 相关与回归的区别与联系 (Differences and Connections Between Correlation and Regression)

8.4.1 主要区别 (Key Differences)

| 特征 | 相关分析 | 回归分析 |

|---|---|---|

| 目的 | 衡量变量关联强度 | 预测或解释因变量 |

| 变量角色 | 对称,无因变量与自变量之分 | 不对称,区分因变量与自变量 |

| 取值范围 | \(-1\) 到 \(1\) | 系数可为任意实数 |

| 单位 | 无量纲 | 有单位 |

| 因果推断 | 不涉及 | 可用于因果分析(需满足假设) |

| Feature | Correlation Analysis | Regression Analysis |

|---|---|---|

| Purpose | Measure strength of association | Predict or explain the dependent variable |

| Variable Roles | Symmetric; no distinction between DV and IV | Asymmetric; distinguishes DV from IV |

| Range | \(-1\) to \(1\) | Coefficients can be any real number |

| Units | Dimensionless | Has units |

| Causal Inference | Not involved | Can be used for causal analysis (if assumptions hold) |

8.4.2 数值关系 (Numerical Relationship)

在简单线性回归中,相关系数与回归斜率有如 式 8.15 所示的关系:

In simple linear regression, the correlation coefficient and the regression slope have the relationship shown in 式 8.15:

\[ \hat{\beta}_1 = r \cdot \frac{s_Y}{s_X} \tag{8.15}\]

其中: - \(r\) 为皮尔逊相关系数 - \(s_Y, s_X\) 为样本标准差

Where: - \(r\) is the Pearson correlation coefficient - \(s_Y, s_X\) are the sample standard deviations

推论: - 如果 \(X\) 和 \(Y\) 标准化(均值为0,标准差为1),则 \(\hat{\beta}_1 = r\) - 决定系数 \(R^2 = r^2\) (在简单回归中)

Corollaries: - If \(X\) and \(Y\) are standardized (mean zero, standard deviation one), then \(\hat{\beta}_1 = r\) - The coefficient of determination \(R^2 = r^2\) (in simple regression)

8.4.3 选择建议 (Selection Advice)

使用相关分析: - 主要关注变量间的关联强度 - 不区分预测变量和响应变量 - 进行探索性数据分析

Use Correlation Analysis When: - The main focus is on the strength of association between variables - There is no distinction between predictor and response variables - Conducting exploratory data analysis

使用回归分析: - 需要预测或解释 - 需要控制其他变量(多元回归) - 关注因果机制

Use Regression Analysis When: - Prediction or explanation is needed - Other variables need to be controlled (multiple regression) - The focus is on causal mechanisms

8.4.4 启发式思考题 (Heuristic Problems)

1. 安斯库姆四重奏 (Anscombe’s Quartet) - 弗朗西斯·安斯库姆 (Francis Anscombe) 构造了四组数据,它们拥有完全相同的均值、方差、相关系数和回归线,但绘图后形态千差万别。 - Dataset I: 正常的线性关系。 - Dataset II: 完美的非线性(U型)关系,但线性回归强行拟合。 - Dataset III: 只有一个离群值拉歪了回归线。 - Dataset IV: \(X\) 几乎不变,全靠一个极端值撑起相关性。 - 任务:编写 Python 代码复现这四组数据,并计算 R2。这不仅是视觉冲击,更是对”盲目相信统计指标”的当头棒喝。

1. Anscombe’s Quartet - Francis Anscombe constructed four datasets that have exactly the same means, variances, correlation coefficients, and regression lines, yet look completely different when plotted. - Dataset I: A normal linear relationship. - Dataset II: A perfect nonlinear (U-shaped) relationship, but linear regression is forced to fit. - Dataset III: A single outlier skews the regression line. - Dataset IV: \(X\) is nearly constant; the correlation is entirely supported by one extreme point. - Task: Write Python code to reproduce these four datasets and compute R². This is not just a visual shock, but a powerful wake-up call against “blindly trusting statistical indicators.”

2. 厨房水槽回归 (The Kitchen Sink Regression) - 很多初学者认为 \(R^2\) 越高越好,所以把所有能找到的变量都塞进模型里(Everything including the kitchen sink)。 - 实验:生成一个只有噪声的 \(Y\),然后随机生成 100 个只有噪声的 \(X\)。 - 逐步将 \(X\) 加入回归模型。 - 观察:你会发现 \(R^2\) 单调递增,直到达到 1.0(当变量数=样本数时)。 - 反思:这也是为什么我们更看重 调整后 \(R^2\) (Adjusted \(R^2\)),它会惩罚那些对模型没有贡献的冗余变量。

2. The Kitchen Sink Regression - Many beginners believe the higher the \(R^2\), the better, so they stuff every available variable into the model (everything including the kitchen sink). - Experiment: Generate a \(Y\) that is pure noise, then randomly generate 100 noise-only \(X\) variables. - Progressively add the \(X\) variables into the regression model. - Observation: You will find that \(R^2\) monotonically increases, reaching 1.0 (when the number of variables equals the sample size). - Reflection: This is why we place greater emphasis on the Adjusted \(R^2\), which penalizes redundant variables that do not contribute to the model.

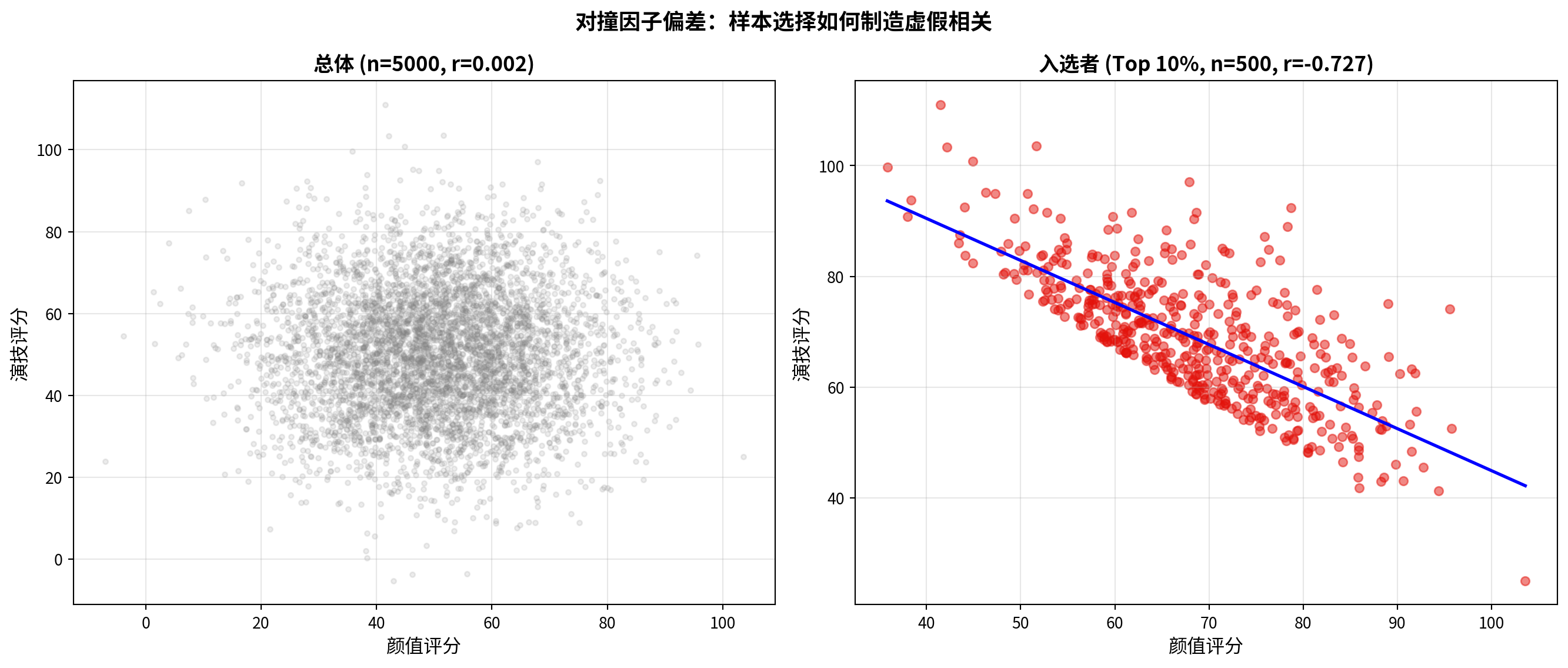

3. 对撞因子偏差 (Collider Bias) - 好莱坞明星往往”颜值高”和”演技好”呈负相关。难道老天是公平的? - 解释:只有”颜值高”或者”演技好”(或二者兼备)的人才能进入好莱坞(样本选择)。 - 任务:模拟两列独立的随机正态数据 \(A\) (颜值) 和 \(B\) (演技)。 - 选择 \(A+B > \text{Top 10\%}\) 的样本。 - 在这个子样本中计算 \(A\) 和 \(B\) 的相关系数。你会发现惊人的负相关!这就是当你仅分析”成功企业”时经常犯的错误。

3. Collider Bias - Among Hollywood stars, “good looks” and “great acting” often appear negatively correlated. Is fate really fair? - Explanation: Only people who are “good-looking” or “talented actors” (or both) can enter Hollywood (sample selection). - Task: Simulate two independent columns of random normal data \(A\) (attractiveness) and \(B\) (acting ability). - Select the subsample where \(A+B > \text{Top 10\%}\). - Compute the correlation coefficient between \(A\) and \(B\) in this subsample. You will find a striking negative correlation! This is exactly the mistake often made when analyzing only “successful firms.” ## 思考与练习 (Exercises and Reflections) {#sec-exercises-ch8}

8.4.5 练习题 (Practice Problems)

习题 8.1:相关系数的计算与解释

Exercise 8.1: Calculation and Interpretation of Correlation Coefficients

某投资分析师收集了10只股票的市盈率(P/E)和年收益率数据:

An investment analyst collected price-to-earnings ratio (P/E) and annual return data for 10 stocks:

股票: 1 2 3 4 5 6 7 8 9 10

P/E: 15 20 18 22 25 12 30 28 16 24

收益率%:8 12 10 11 14 7 16 15 9 13计算皮尔逊相关系数。

Calculate the Pearson correlation coefficient.

在 \(\alpha = 0.05\) 水平下检验相关系数的显著性。

Test the significance of the correlation coefficient at \(\alpha = 0.05\).

计算相关系数的95%置信区间。

Compute the 95% confidence interval for the correlation coefficient.

解释结果的实际意义。

Interpret the practical significance of the results.

习题 8.2:相关性的陷阱

Exercise 8.2: Pitfalls of Correlation

某研究发现,冰淇淋销量与溺水事故次数的相关系数为0.85(p < 0.001)。

A study found that the correlation coefficient between ice cream sales and drowning incidents is 0.85 (p < 0.001).

我们能否据此推断”吃冰淇淋导致溺水”?为什么?

Can we infer that “eating ice cream causes drowning”? Why or why not?

提出2个可能的混淆变量(confounding variables)。

Propose 2 possible confounding variables.

如何设计研究来验证因果关系?

How would you design a study to establish causation?

习题 8.3:简单线性回归

Exercise 8.3: Simple Linear Regression

某电商公司想了解广告投入对销售额的影响。收集了过去12个月的数据:

An e-commerce company wants to understand the effect of advertising expenditure on sales revenue. Data from the past 12 months were collected:

月份: 1 2 3 4 5 6 7 8 9 10 11 12

广告费(万元): 5 8 7 10 12 9 15 14 11 13 16 18

销售额(万元): 25 38 32 45 52 40 65 60 48 55 70 78拟合线性回归模型:销售额 = \(\beta_0\) + \(\beta_1\) × 广告费

Fit a linear regression model: Sales = \(\beta_0\) + \(\beta_1\) × Advertising Expenditure

计算决定系数 \(R^2\) 并解释。

Calculate the coefficient of determination \(R^2\) and interpret it.

检验斜率系数的显著性(\(\alpha = 0.05\))。

Test the significance of the slope coefficient (\(\alpha = 0.05\)).

如果下个月广告预算为20万元,预测销售额。

If next month’s advertising budget is 200,000 yuan, predict the sales revenue.

计算95%预测区间。

Compute the 95% prediction interval.

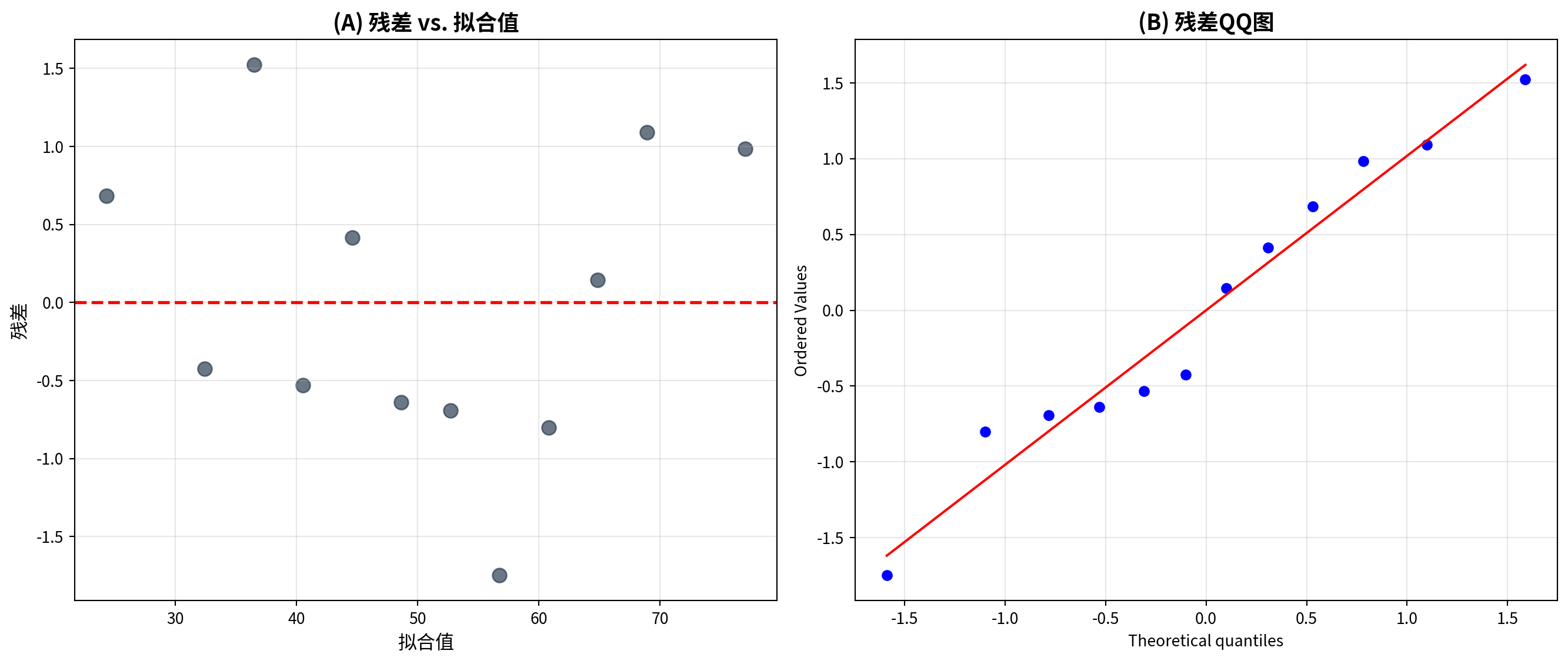

习题 8.4:回归诊断

Exercise 8.4: Regression Diagnostics

对习题8.3的回归模型进行诊断:

Perform diagnostics on the regression model from Exercise 8.3:

绘制残差 vs. 拟合值图,检验线性性和同方差性假设。

Plot residuals vs. fitted values to check the linearity and homoscedasticity assumptions.

绘制残差QQ图,检验正态性假设。

Plot a residual Q-Q plot to check the normality assumption.

如果存在异方差,可能会带来什么后果?

If heteroscedasticity is present, what consequences might it have?

提出可能的改进方法。

Propose possible remedial measures.

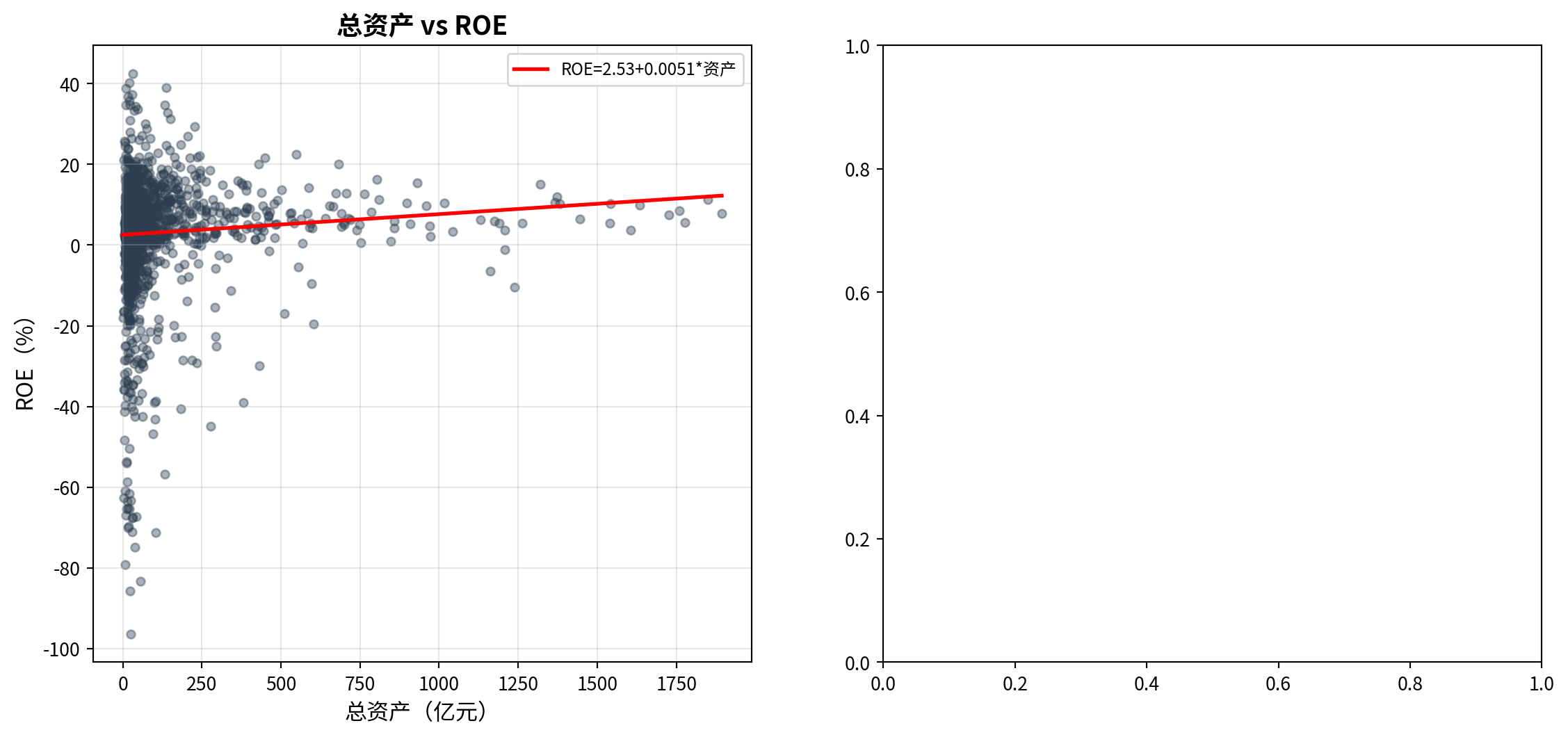

习题 8.5:数据分析项目

Exercise 8.5: Data Analysis Project

使用本地数据或AkShare获取数据,选择一个你感兴趣的相关或回归问题进行分析。例如:

Using local data or data obtained from AkShare, choose a correlation or regression problem of interest and perform an analysis. For example:

分析某上市公司股价与市场指数的相关性

研究GDP增长率与股票收益率的关系

探讨公司规模(总资产)与盈利能力(ROE)的关系

Analyze the correlation between a listed company’s stock price and a market index

Study the relationship between GDP growth rate and stock returns

Explore the relationship between firm size (total assets) and profitability (ROE)

要求:

Requirements:

明确研究问题和变量

Clearly define the research question and variables

进行描述性统计和可视化

Conduct descriptive statistics and visualization

计算相关系数或拟合回归模型

Compute the correlation coefficient or fit a regression model

进行统计推断(显著性检验、置信区间)

Perform statistical inference (significance tests, confidence intervals)

诊断模型假设

Diagnose model assumptions

讨论结果的实际意义和局限性

Discuss the practical significance and limitations of the results

8.4.6 参考答案 (Solutions)

习题 8.1 解答

Solution to Exercise 8.1

# ========== 导入所需库 ==========

# ========== Import required libraries ==========

import numpy as np # 导入数值计算库

# Import the numerical computing library

from scipy.stats import pearsonr # 导入皮尔逊相关系数函数

# Import the Pearson correlation coefficient function

# ========== 第1步:准备原始数据 ==========

# ========== Step 1: Prepare the raw data ==========

price_earnings_ratio_array = np.array([15, 20, 18, 22, 25, 12, 30, 28, 16, 24]) # 市盈率(PE Ratio)数据

# Price-to-earnings (PE) ratio data

stock_returns_array = np.array([8, 12, 10, 11, 14, 7, 16, 15, 9, 13]) # 股票收益率(%)数据